7

DoD R&D Efforts in Ground-Control Systems

For this workshop session, the presenters responded to the steering committee’s comments about research and development (R&D) efforts by the U.S. Department of Defense (DoD) regarding Unmanned Aerial Systems (UASs) and other ground-control systems (GCSs):

Most of the R&D efforts in HSI [human-systems integration] for UASs to date have focused on DoD UAS platforms (as well as GCSs for other unmanned and/or autonomous vehicles). This session will review lessons learned and issues identified from that community with reference to their bearing on human-automation interaction considerations for operating UASs in the NAS. In addition, this session will offer suggestions for ongoing collaboration and information sharing across these fields.

Chris Miller (steering committee member) introduced the session by saying its purpose was to tap into 30-plus years of DoD research on GCSs for unmanned systems to identify lessons learned and open issues. He noted that the panelists included representatives from the Air Force and the Army.

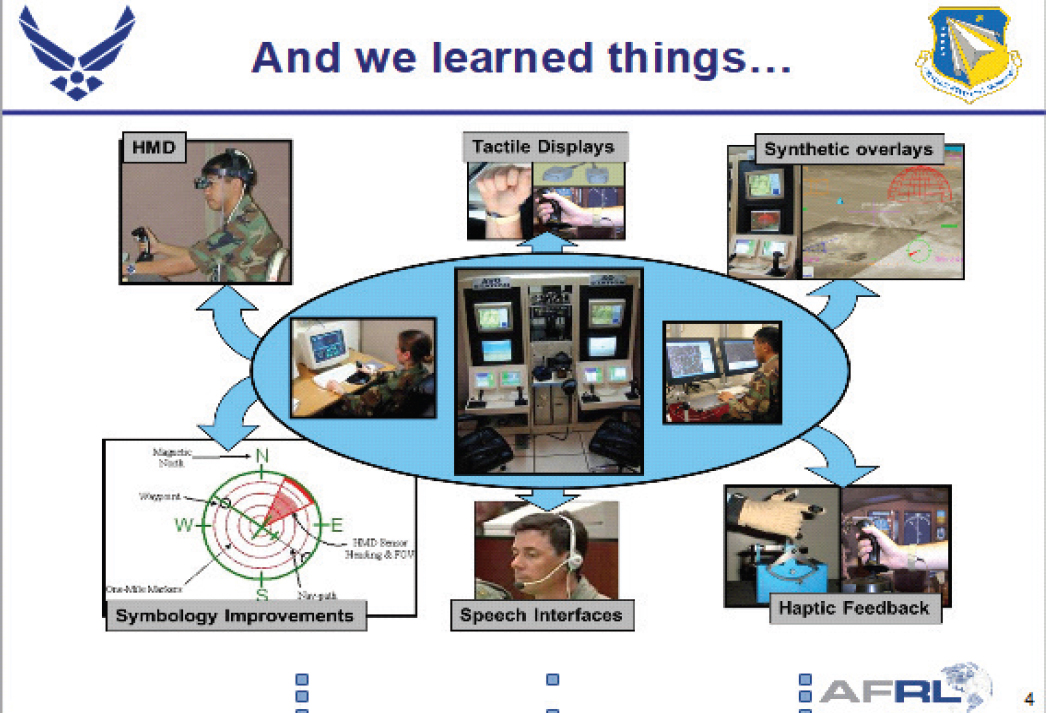

Mark Draper (Air Force Research Laboratory) discussed Air Force research involving the human role with semiautonomous systems. This research has involved human-automation interaction and the design of human-machine interfaces to enhance operator decision making and ensure flexible and robust mission operations. The earliest research addressed one or more operators controlling a single UAS, which surfaced many issues and yielded many lessons: see Figure 7-1.

Next, Draper said, there was the issue of controlling multiple UASs with a single operator. That research (in interfacing, [machine] intellect, and integration) led to foundational knowledge, which could advance current operations and enable future visions. With respect to the appropriate balance of human interaction with autonomy, he said, many issues remain. For example: (1) How should autonomy aid decision making without inadvertently biasing the operator? and (2) How can one provide visibility into an autonomous capability to include its sense of task competency?

In the case of inadvertent bias, Draper said that his research group is working with experts, including those from Ohio State University, who have done extensive research in the area of induced bias based on order of decision steps. There are fruitful areas to explore in the ways in which information can be presented that can reduce such bias, which are directly affected by interface design. Ongoing research continues to make progress in this area.

In the case of the second example, he explained that it should be understood as “machine self-confidence.” This concept was developed in response to the notion of receiving recommendations from a machine to do something. But this concept raises three questions: Is the machine confident that its recommendation is a good solution? Does the machine know if it got all the requisite inputs it needed to come up with a solution? Does the machine know if some of the information is horribly dated and could affect the results? In response, he said, his group started toying with this idea of machine self-confidence, and they provided some funding to Chris Miller and Mary Cummings (steering committee members) to explore the area. Chris Miller used this funding to hold a couple of workshops in machine self-confidence.

SOURCE: Draper, M. (2018). UAS Human-Automation Research in AFRL/RH. Presentation for the Workshop on Human-Automation Interaction Considerations for Unmanned Aerial System Integration (slide #4). Reprinted with permission.

Draper said that among the many lessons learned are that multi-UAS control, when perfected, offers many advantages and that workload and attention management are major challenges. He concluded by listing many other research topics: (1) multi-UAS transit control, (2) operator functional-state sensing and assessment, (3) multisensor inspection and management research, (4) agent cognitive framework and capability, (5) human autonomy team structure, (6) human cognitive and workload modeling, (7) planner development for keep-in/keep-out zones, (8) verifying and validating methods for intelligent automation, (9) cyber representation, and (10) communications.

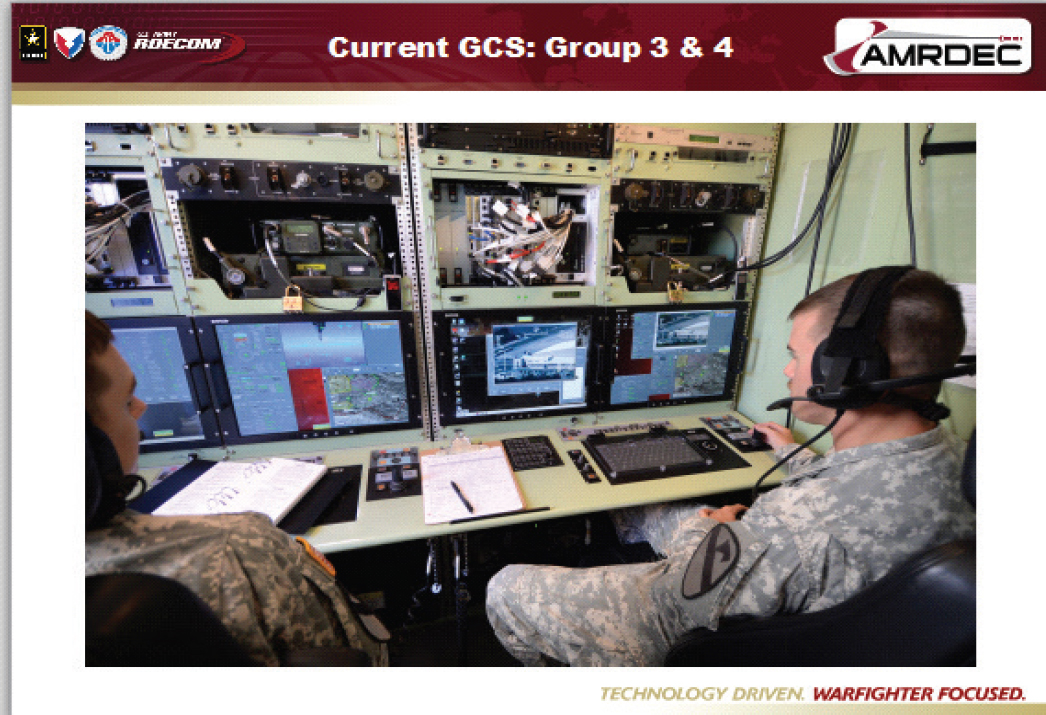

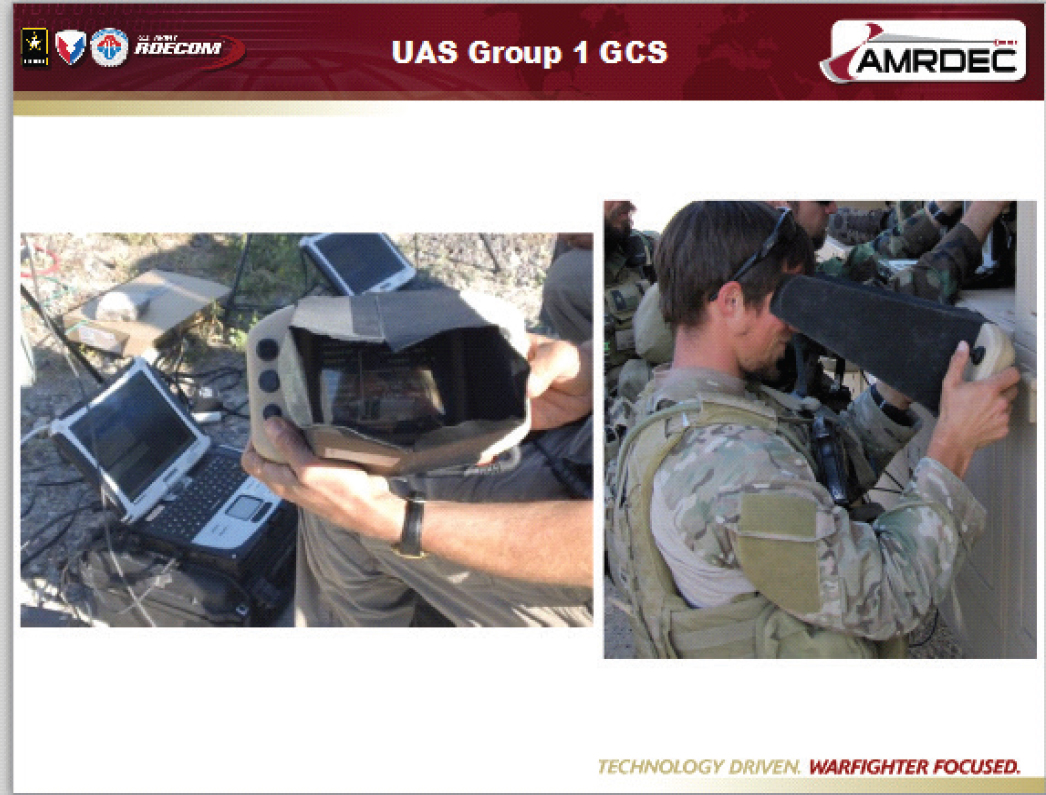

Grant Taylor (U.S. Army) noted that the principal Army UAS missions are reconnaissance, surveillance, targeting, and attack. He showed GCS arrangements for large UASs and very small UASs: see Figures 7-2 and 7-3. He discussed the pros and cons of current interfaces with automation: they are relatively simple, with limited automation, but they require specialty training and complicate communications with pilots, air traffic control, and commanders. He also discussed multi-UAS operations and “drowning in data, starved for information.” In addition, Taylor described three areas of ongoing research: multi-UAS operations, manned-unmanned teaming, and multimodal inputs and displays.

SOURCE: Taylor, G. (2018). Lessons Learned from Army GCS Research. Presentation for the Workshop on Human-Automation Interaction Considerations for Unmanned Aerial System Integration (slide #9). Reprinted with permission.

Taylor said that manned-unmanned teaming was the primary focus of his current research, which involves taking control stations forward from their traditional location on the battlefield and integrating them into manned cockpits. He explained that, in the currently fielded Apache E-model attack helicopter, copilot gunners are not only responsible for managing their own aircraft’s weapons, sensors, and navigation, but they also have the ability to reach out and interact with command and control and receive full-motion video from a single unmanned aircraft. His research is examining how to streamline that process and what the control ratio should be. Taylor pointed out that he, like everyone else in the field, is trying to put as much capability at the pilots’ fingertips as possible without detracting from their primary responsibility of being a copilot gunner on an attack helicopter.

Chris Miller discussed the Navy’s swarm research. He first described swarm robotics, which involves a large collection of agents with no centralized control and limited capabilities. He then offered an overview of human-swarm interaction, in which individual robots are not typically addressable, and discussed a range of lessons learned and their relevance. For example, the benefits of flexible multilevel interaction for UAS control have been shown, but for swarms, individual vehicles are beyond users’ effective control. Nevertheless, he said, UASs in the NAS will likely demand individual attention, so it is likely that flexible interaction and control at multiple levels of aggregation will be very important.

SOURCE: Taylor, G. (2018). Lessons Learned from Army GCS Research. Presentation for the Workshop on Human-Automation Interaction Considerations for Unmanned Aerial System Integration (slide #12). Reprinted with permission.

In response to Miller’s presentation, a participant noted: “This is probably more of a comment, but in some cases, it sounds like you’re discussing the controller as if the controller is a remote pilot. In our current airspace system, the controller is not a remote pilot: the controller does not control the aircraft in the way that a pilot does, so that’s something to at least keep in mind.”

Miller responded: “I think that’s absolutely true. I think we’re exploring, and across these different application areas in lots of the military research, we’re exploring where the operator ought to exist or operators in multiple aggregations. So, well taken, and we should probably talk more about all the implications of what those distinctions mean.”