2

Conceptual Foundations of Nutrient Reference Value Development

The following discussion provides background and supporting evidence for the committee’s assessment of methodological approaches to achieve a uniform and consistent basis for deriving nutrient reference values (NRVs) for young children (birth up to 5 years of age) and women of reproductive age across countries. The committee drew on evidence from the published literature as well as on presentations and discussions at the workshop Global Harmonization of Methodological Approaches to Nutrient Intake Recommendations (NASEM, 2018). Specifically, this chapter provides historical perspective on efforts to harmonize approaches to deriving NRVs, describes the NRVs identified in this report, reviews the components of the framework being used to derive NRVs, and offers a recommendation for which NRVs to prioritize.

BACKGROUND

As described in Chapter 1, the United Kingdom (1991), the European Union (1992), and the United States jointly with Canada (1994) initiated the new approach to setting nutrient intake recommendations based on a set of NRVs rather than a single recommended intake. This new approach allowed for greater flexibility and broader application of the reference values, including the ability to plan and assess diets for individuals as well as groups. As more countries began to adopt the concept of a set of NRVs, the need for a more unified and consistent approach to deriving the values became apparent. In response, a core organizing framework for deriving NRVs was developed by an expert international panel convened by the United Nations University’s Food and Nutrition Programme, in collaboration with the Food and Agriculture Organization (FAO), the World Health Organization (WHO), and the United Nations Children’s Fund (UNICEF) (King and Garza, 2007).

The 2007 international panel’s next task was to identify the core reasons justifying the need for a conceptual framework to harmonize the process used to derive NRVs (King and Garza, 2007). They identified four core reasons:

- Improve the objectivity and transparency of values that are derived by diverse national, regional, and international groups.

- Provide a common basis for groups of experts to consider throughout processes that lead to nutrient reference values.

- Permit developing countries, which often have limited access to scientific and economic resources, to convene groups of experts to identify how to modify existing intake values to meet their populations’ specific requirements, objectives, and national policies.

- Provide a common basis for the use of NRVs across countries, regions, and the globe for establishing public and clinical health objectives and food and nutrition policies, such as fortification programs, and for addressing regulatory and trade issues.

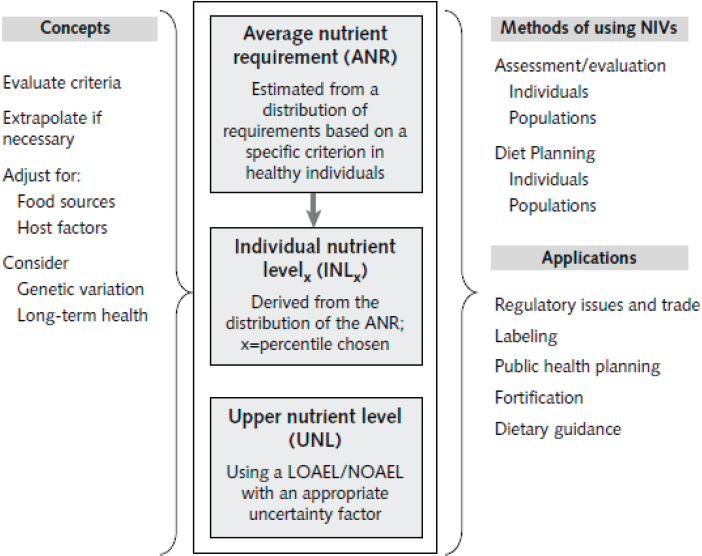

The organizing framework developed by the 2007 international panel focused on two key concepts: (1) estimating the average nutrient requirements (ANRs) for a population and (2) defining an upper nutrient level (UNL). The ANR was to be derived from a normal distribution of nutrient intakes needed to achieve a specified health outcome for the reference population. The UNL was determined as the highest level of usual intake of a nutrient that would pose no risk of adverse health effects in most individuals in the population.

The 2007 organizing framework, shown in Figure 2-1, is grounded in a risk assessment model and is based on expert review of primary data (King and Garza, 2007). That framework set the stage for developing future NRVs and became a baseline for this committee’s development of a separate framework for harmonizing methodological approaches for deriving NRVs globally.

Collectively, NRVs are described in this report as reference values rather than intake values because actual intakes of any nutrient vary widely across a population (Bourges et al., 2005; EFSA NDA Panel, 2010; Paik, 2008; Sasaki, 2008). The NRVs identified in the King and Garza (2007) organizing framework, while not standardized across countries, can be described in a consistent way (i.e., according to their application). Table 2-1

NOTES: NRVs are the average nutrient requirement (ANR) and the upper nutrient level (UNL). Other NRVs can be derived from these two values, (e.g., the individual nutrient levelx [INLx], which is used for guiding individual intakes, is the ANR plus some percentile of the mean). The ANR and UNL are derived from estimates of amounts needed for a specific physiological criterion, such as tissue stores, metabolic balance, or a biochemical function. The NRVs are modified for population differences in the food supply, host factors (e.g., infection and genetic variations), and needs for sustaining long-term health. The methods of using NRVs to assess or evaluate intakes of individuals and populations differ from those used for planning diets for individuals and populations. NRVs are the basis for a number of policy applications. LOAEL = lowest observed adverse effect level; NIV = nutrient intake value; NOAEL = no observed adverse effect level.

SOURCE: King and Garza, 2007.

compares terminology used to describe nutrient reference values currently in use around the world.

NUTRIENT REFERENCE VALUES

In this report, the committee describes the four core reference values: average requirement (AR), recommended intake (RI), adequate intake (AI), and safe upper intake level (UL). Of these, ARs and ULs are critical for evaluating and planning nutrient intakes at the population level. RIs are used as the basis of dietary planning for individuals, and AIs are useful only as a benchmark that enables the risk of inadequate intake to be judged as low. Box 2-1 shows the four reference values and their definitions.

TABLE 2-1 Comparison of Terms for Nutrient Reference Values (NRVs) Currently in Use Around the World

| Recommendation | United States and Canada | United Kingdom | European Union/EFSA | WHO/FAO |
|---|---|---|---|---|
| Umbrella term for NRVs | DRI | DRV | DRV | |
| Average requirement | EAR | EAR | AR | |
| Recommended intake level | RDA | RNI | PRI | RNI |
| Lower reference intake | | LRNI | LTI | |
| Adequate intake | AI | | AI | |
| Safe upper level of intake | UL | SUL | UL | UL |
| Acceptable macronutrient distribution range | AMDR | AMDR | RI | Population mean intake goals |

NOTE: AI = adequate intake; AMDR = acceptable macronutrient distribution range; AR = average requirement; DRI = Dietary Reference Intake; DRV = dietary reference value; EAR = estimated average requirement; EFSA = European Food Safety Authority; FAO = Food and Agriculture Organization; LRNI = lower reference nutrient intake; LTI = lower threshold intake; PRI = population reference intake; RDA = recommended dietary allowance; RI = reference intake range for macronutrients; RNI = reference nutrient intake; SUL = safe upper intake level; UK = United Kingdom; UL = tolerable upper intake level; WHO = World Health Organization.

SOURCE: Adapted from King and Garza, 2007.

Average Requirement

The AR is defined as the intake needed by 50 percent of a population subgroup (defined based on age, gender, and physiological status) to meet a specific criterion of nutrient adequacy. Examples of adequacy criteria include liver stores of vitamin A, normal hematological status and serum concentrations in the case of vitamin B12, and normal hematology and plasma homocysteine in the case of folate.

Two commonly used methods to derive the AR are the dose–response (or intake–response) approach and the factorial approach. Dose–response, the more frequently used of the two, provides a direct determination of a nutrient requirement (e.g., the level of intake that maintains the plasma level of a vitamin and its metabolites). The factorial approach involves estimating the amount of a nutrient needed to replace the amount lost through fecal, urinary, and dermal routes, either unchanged or as a metabolite, and then estimating the additional amount required to support growth, pregnancy, or lactation. A third, less commonly used method to derive an AR is the balance study, such as the nitrogen balance study used to estimate protein requirements.
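At its core, the factorial approach is a sum of components. The sketch below uses a hypothetical helper, `physiological_requirement`, and made-up loss and accretion values, not published data. For simplicity it reuses the adult losses for the child, although real losses scale with body size. The result is an *absorbed* amount, and converting it into a dietary intake would also require an adjustment for absorption efficiency.

```python
# Minimal sketch of the factorial approach. All numbers are hypothetical
# placeholders chosen for illustration, not published requirement data.

def physiological_requirement(losses, accretion=0.0):
    """Sum daily obligatory losses (e.g., fecal, urinary, dermal) and any
    additional amount needed for growth, pregnancy, or lactation."""
    return sum(losses.values()) + accretion

# Hypothetical daily losses of a mineral, in mg/day.
losses = {"fecal": 1.2, "urinary": 0.5, "dermal": 0.3}

adult_need = physiological_requirement(losses)                 # losses only
child_need = physiological_requirement(losses, accretion=0.4)  # plus growth

print(f"adult: {adult_need:.1f} mg/day absorbed")
print(f"child: {child_need:.1f} mg/day absorbed")
```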

The prevalence of intakes below the AR has been shown to be a statistically valid estimate of the prevalence of inadequate intakes in a population subgroup (Carriquiry, 1999; IOM, 2000). In what is known as the “EAR cut-point” method, both the AR and nutrient intake data are used to calculate the prevalence (percent of persons in each population subgroup) of inadequate intakes (i.e., intakes below the AR) (Barr et al., 2002). This cut-point method is valid for almost all nutrients except energy; protein; and, in premenopausal menstruating women, iron (Carriquiry, 1999).
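The cut-point calculation itself is simple: count the share of usual intakes below the AR. The sketch below assumes hypothetical intake values and a hypothetical helper name. It also assumes that the usual conditions for the method hold (IOM, 2000): intakes are usual rather than single-day intakes, requirements are roughly symmetrically distributed and independent of intakes, and the variance of requirements is smaller than the variance of intakes.

```python
from statistics import NormalDist

def prevalence_below_ar(usual_intakes, ar):
    """EAR cut-point method: the share of usual intakes below the AR
    estimates the prevalence of inadequate intakes in the subgroup."""
    below = sum(1 for intake in usual_intakes if intake < ar)
    return below / len(usual_intakes)

# Hypothetical usual intakes (mg/day) for one population subgroup.
intakes = [6.1, 7.4, 8.0, 8.8, 9.5, 10.2, 11.0, 12.3, 13.1, 15.0]
print(prevalence_below_ar(intakes, ar=8.5))  # 3 of 10 intakes fall below 8.5 -> 0.3

# If usual intake is instead modeled as a normal distribution
# (hypothetical mean 10.0 and SD 2.0), the same idea is a CDF lookup.
print(round(NormalDist(mu=10.0, sigma=2.0).cdf(8.5), 3))  # about 0.227
```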

In countries or regions where the risk of deficiency is high, food fortification or other programs can be planned so that only 2.5–5 percent of a group has intakes below the AR (Allen, 2006; Allen et al., 2006).
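One way to plan such a level is to ask how far the usual-intake distribution must shift so that only the target share remains below the AR. The following sketch rests on several simplifying assumptions, all hypothetical: usual intakes are normally distributed, and every member of the group receives the same extra amount from the fortified food, so the distribution shifts without changing shape. The helper name and all input values are also illustrative.

```python
from statistics import NormalDist

def minimum_addition(mean_intake, sd_intake, ar, target_share=0.025):
    """Smallest uniform increase in usual intake (same units as intake) that
    leaves at most `target_share` of the group below the AR.

    Assumes (hypothetically) normally distributed usual intakes and that every
    member of the group receives the same extra amount from the fortified food,
    so the whole distribution shifts right without changing shape.
    """
    z = NormalDist().inv_cdf(target_share)   # about -1.96 for 2.5 percent
    current_low_tail = mean_intake + z * sd_intake
    return max(0.0, ar - current_low_tail)

# Hypothetical subgroup: mean usual intake 6.0, SD 1.5, AR 7.0 (mg/day).
extra = minimum_addition(6.0, 1.5, 7.0)
print(round(extra, 2))  # 3.94
shifted = NormalDist(6.0 + extra, 1.5)
print(round(shifted.cdf(7.0), 3))  # 0.025
```

In practice, consumption of the fortified food varies between people, so the actual distribution of added intakes, rather than a uniform shift, would need to be modeled.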

Unfortunately, WHO/FAO and many other authoritative bodies do not provide ARs in their recommendations, in part because when those recommendations were developed, the importance of ARs for population dietary assessment and planning was not recognized. The exceptions in the WHO/FAO recommendations are vitamin B12 and folate, whose recommendations were established after the Institute of Medicine had already set ARs for these nutrients (IOM, 1998).

An additional problem is that the evidence for deriving an AR is limited for some nutrients. Indeed, in the recently completed set of nutrient intake recommendations by the European Food Safety Authority (EFSA), ARs were determined for only seven vitamins and three minerals because the data were insufficient to derive an AR with adequate certainty for the others (EFSA, 2017a). In the absence of an AR, it is not possible to derive RI levels (i.e., the RDA, RNI, or PRI). In that case, the AI is the closest substitute, as it represents the daily intake that appears to be adequate for a population.

Recommended Intake

RIs are calculated to meet the needs of 97.5 percent of individuals in a population, most commonly by adding two standard deviations to the AR of that group. RIs can be used to plan the diets of individuals, but not those of population subgroups. Individuals with intakes above the recommended levels will have a very low risk of inadequacy. If the variability of nutrient requirements among individuals (interindividual variability) is not known precisely, the standard deviation is in most cases assumed to be 10 to 15 percent of the AR. Because RIs meet the needs of almost all individuals, estimating the prevalence of inadequacy as the percentage of intakes below these recommendations will substantially overestimate the true prevalence of inadequate intakes in a population subgroup; thus, ARs are the only useful value for estimating the prevalence of inadequacy.
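The RI arithmetic can be written in a few lines. The sketch below uses a hypothetical helper and AR value. When the standard deviation is unknown, it falls back on the assumed coefficient of variation of 10 to 15 percent. Adding two standard deviations then gives an RI of roughly 1.2 to 1.3 times the AR.

```python
def recommended_intake(ar, sd=None, cv=0.10):
    """RI = AR + 2 SD of the requirement distribution.

    If the SD of requirements is not known, approximate it as cv * AR;
    a CV of 10 to 15 percent is the commonly assumed default.
    """
    if sd is None:
        sd = cv * ar
    return ar + 2 * sd

ar = 100.0  # hypothetical AR, in mg/day
print(recommended_intake(ar))           # CV 10 percent: about 1.2 x AR
print(recommended_intake(ar, cv=0.15))  # CV 15 percent: about 1.3 x AR
print(recommended_intake(ar, sd=8.0))   # known SD: AR + 16
```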

Adequate Intake

AIs should be established as a last resort if adequate data to derive an AR are lacking. An AI is the observed median intake of a nutrient by a group of healthy people with apparently adequate status of that nutrient. Because the AI of a population subgroup is likely to be even higher than the RI for that group, it should not be used as the basis for estimating the prevalence of inadequate intakes either. The only assumption that can be made is that, if the mean intake of a group is greater than the AI, then the prevalence of inadequacy is likely to be low. If the mean intake is less than the AI, then assessment of adequacy will have to depend on clinical and/or biochemical measures of nutrient status. Given the limited usefulness of AIs, future efforts to develop nutrient intake recommendations should clearly aim to derive ARs rather than AIs.

Tolerable Upper Levels

ULs represent daily intakes that, if consumed chronically over time, will have a very low risk of causing adverse effects. They do not, however, refer to the acute effects of a high dose that might be consumed in a supplement. Criteria for deriving ULs have been described most recently by EFSA (EFSA, 2017b), and ULs have been established for most nutrients by the IOM (1998b). Because excessive intake carries higher risks during certain life stages, such as pregnancy, ULs can vary substantially across population subgroups. A general goal is to have less than 5 percent of a population subgroup with an intake greater than the UL, including intakes from supplements and fortified foods (IOM, 2000). This goal can be difficult to meet when planning fortification levels: the aim is to keep a low proportion of the population below the AR, yet the ULs of some population subgroups, such as children consuming fortified cereals, are easily exceeded. This problem is sometimes resolved by providing different types of fortified foods to meet the varying needs for specific nutrients among different population subgroups. There is no UL for some nutrients owing to insufficient data.
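The tension between the AR and UL goals can be illustrated by checking one fortification scenario against both goals for two subgroups. The sketch below assumes normally distributed usual intakes and uses invented means, SDs, ARs, ULs, and fortificant contributions. It takes 5 percent as the cutoff both for the share below the AR and for the share above the UL. `check_fortification` is a hypothetical helper, not an established tool.

```python
from statistics import NormalDist

def check_fortification(groups, ar_target=0.05, ul_target=0.05):
    """For each subgroup, report the share of usual intakes below the AR and
    above the UL after adding that group's expected intake from the fortified
    food, assuming normally distributed usual intakes (an assumption)."""
    results = {}
    for name, g in groups.items():
        dist = NormalDist(g["mean"] + g["added"], g["sd"])
        below_ar = dist.cdf(g["ar"])
        above_ul = 1.0 - dist.cdf(g["ul"])
        meets_both = below_ar <= ar_target and above_ul < ul_target
        results[name] = (below_ar, above_ul, meets_both)
    return results

# Hypothetical subgroups (mg/day); "added" is the extra intake from the
# fortified food, smaller for children because they eat smaller portions.
groups = {
    "women": {"mean": 6.0, "sd": 1.5, "ar": 7.0, "ul": 40.0, "added": 3.9},
    "children": {"mean": 4.0, "sd": 1.0, "ar": 3.0, "ul": 7.0, "added": 2.0},
}
for name, (low, high, ok) in check_fortification(groups).items():
    print(f"{name}: {low:.1%} below AR, {high:.1%} above UL, meets both goals: {ok}")
```

With these invented numbers, the women's subgroup meets both goals. In the children's subgroup, about 16 percent of intakes exceed the UL. That kind of result is what motivates offering different fortified foods to different subgroups.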

GUIDING PRINCIPLES IN SETTING NUTRIENT REFERENCE VALUES

New tools and methods, either previously unavailable or not used in the past, have created opportunities for harmonizing methodological approaches to deriving NRVs globally. Since the publication of the original organizing framework by King and Garza (2007), NRVs developed by the United States and Canada, for example for calcium and vitamin D, have added components to the original framework that introduced greater transparency and scientific rigor into the process and enhanced the accuracy and replicability of the values derived (IOM, 2011a). More recently, guidelines for the inclusion of chronic disease endpoints have been proposed by the U.S. National Academies (NASEM, 2017; Yetley et al., 2017). Before examining in depth how these changes have created an opportunity for the committee to propose a way toward harmonizing the nutrient review process, the core components and guiding principles of the current process for setting NRVs are described in brief below. Relevant components are further described and analyzed relative to the committee’s task in Chapter 3.

The Average Requirement and Upper Level: Core Components of the Organizing Framework

The two core reference values used to derive all other reference values in the King and Garza (2007) organizing framework are the AR and the UL. As described by Janet King at the Global Harmonization workshop (NASEM, 2018), the process for setting an AR should:

- Be based on the mean nutrient intake of a specific population;

- Be established for all essential nutrients and food components that have public health relevance;

- Include acceptable macronutrient distribution ranges for carbohydrates, protein, and fat that reduce chronic disease risks associated with the intake of these macronutrients;

- Consider nutrient–nutrient interactions and quantify them, if possible; and

- Consider subpopulations with special needs, keeping in mind, however, that reference values are intended for apparently healthy individuals.

As described by King and Garza (2007), the UL is not a recommended intake; rather, it is the highest level of usual daily nutrient intake that poses no risk of adverse health effects in most individuals in the population. Although the magnitude of uncertainty in the risk of adverse effects needs to be considered when setting the UL, it cannot be assumed that a selected uncertainty factor will be the same for all nutrients (NASEM, 2018).

The definition of the population to be covered by an NRV has evolved over the past several decades. Previously, NRVs were derived to meet the needs of an “apparently healthy” population. This definition excluded individuals with disease states requiring medical management, frank malnutrition, malabsorption syndromes, or energy requirements linked to medical or physical disability. Today, although obesity prevalence is correlated to some extent with a country’s wealth, recent data suggest that about one-third of the global population is overweight or obese (Ng et al., 2014).

The implication of a rising global prevalence of overweight and obesity is a corresponding increase in risk of chronic disease. Thus, across most developed countries, and increasingly among low- and middle-income countries, a healthy population has become difficult to characterize, and the definition of an “apparently healthy” population has come into question. Concerns about the applicability of future DRIs to the variability in the health status of the general U.S. population informed the National Academies’ report Guiding Principles for Developing Dietary Reference Intakes Based on Chronic Disease, which recommends that future DRI committees “characterize the health status of the population in terms of who is included and excluded for each DRI” (NASEM, 2017, p. 30). The committee recognizes the importance of chronic disease; however, most NRVs for pregnancy and children are aimed at meeting specific nutrient needs for the most relevant health outcomes (e.g., growth, cognitive development). For the target age groups in this report, chronic disease is not a priority outcome.

Overview of the Process for Setting Nutrient Reference Values

After an authoritative body convenes to identify a nutrient for review, the overarching steps for deriving NRVs, as described by most authoritative bodies, including the National Academies (NASEM, 2018) and EFSA,1 are:

- An existing systematic review is updated or a new review is initiated:

  - An indicator is selected for the systematic review;

- The appropriate method is selected:

  - Dose/intake–response assessment,

  - Factorial approach, or

  - Balance study;

- The usual intake characteristics and dietary patterns of the target population subgroup are assessed; and

- Implications or special concerns for the population are considered.

___________________

1 See https://www.efsa.europa.eu/en/topics/topic/dietary-reference-values-and-dietary-guidelines (accessed August 7, 2018).

Each of these steps is described in turn below. Relevant components are further described and analyzed relative to the committee’s task in Chapter 3.

Revise or Initiate and Carry Out a Systematic Review

Systematic reviews draw on evidence from a range of population subgroups; however, most of the included studies are of adult populations. Evidence from adults is frequently used as a basis for extrapolating values to children.

In the past, nutrient reviews were carried out using narrative evidence reviews. Now NRVs are derived from evidence gathered through systematic evidence-based reviews. The systematic review is a core tool for evaluating the strength and quality of evidence for associations between a nutrient under review and relevant health outcomes, as well as relevant endpoints. Generally, the systematic review is initiated once the nutrient for review has been identified by an authoritative body and before an expert nutrient review panel begins its work.

The process of systematically reviewing the literature in order to draw a conclusion about a scientific question hinges on the concept of evidence-based research. This concept promotes the systematic and transparent use of existing studies in order to increase validity, transparency, and accessibility of research results. It is based on the assertion that “to avoid waste of research, no new studies should be done without a systematic review of existing evidence” (Lund et al., 2016). Although originally developed to address intervention questions (Higgins and Green, 2011), over time, the systematic review approach has been adapted to broader questions and is now seen by various organizations as a way to strengthen the scientific value of their assessments (EFSA NDA Panel, 2010; IOM, 2011b; Slutsky et al., 2008).

In making his case at the Global Harmonization workshop that the systematic review should be a core component of the global harmonization of methodologies for NRVs (NASEM, 2018), Joseph Lau argued that because human physiology is essentially the same within comparable subpopulations around the world, the same evidence base can be used to inform nutrient intake recommendations. Moreover, the cost in expertise, time, and money of conducting a systematic review can be minimized through collaboration and sharing of resources. In addition, review panels now have at their disposal the Systematic Review Data Repository (SRDR), an open access, collaborative, Web-based repository of systematic review data established by the U.S. Agency for Healthcare Research and Quality (2013). The SRDR serves as an archive and a data extraction tool that is available to users worldwide to assist with the development of a systematic review. An advantage of this database is that it can be reviewed, revised, and supplemented on an ongoing basis (NASEM, 2017).

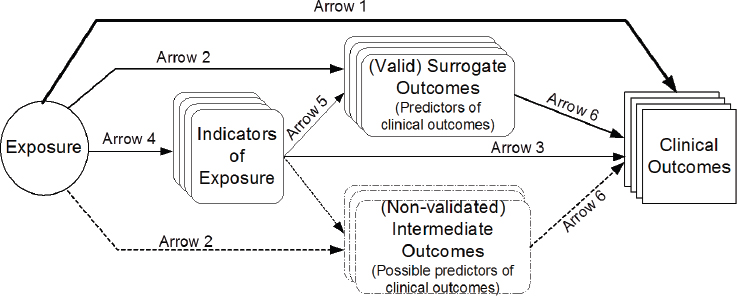

Also at the Global Harmonization workshop (NASEM, 2018), Lau emphasized that constructing a predefined analytic framework for a systematic review is a key step in the systematic review process (see Figure 2-2). The framework defines the scope of the evidence review, influences the selection of specific outcomes to assess, helps to establish a common set of research questions and review criteria for the systematic review, and can be used to construct an evidence map of the issues and questions to be addressed. Other key steps of the systematic review process are described below. The EFSA Guidance Document (EFSA NDA Panel, 2010) also describes key steps in the systematic review process.

Eligibility criteria The evidence to be included in a systematic review is selected according to eligibility criteria defined a priori. This reduces the risk of bias that could be introduced into the process if studies were selected according to their results, such as if only studies with statistically significant results were included. Eligibility criteria include study design

NOTES: Arrow 1: association of exposure with clinical outcomes of interest. Arrow 2: association of exposure with surrogate or intermediate outcomes. Arrow 3: association of indicators of exposure to clinical outcomes. Arrow 4: association between exposure and indicators of exposure. Arrow 5: association of indicators of exposure to surrogate or intermediate outcomes. Arrow 6: association between surrogate outcomes and clinical outcomes.

SOURCES: Presented by Joseph Lau at the Health and Medicine workshop, September 21, 2017. From Russell et al., 2009.

(e.g., randomized trials, observational studies, mechanistic studies, animal studies), age and sex of the study populations, and outcomes of interest as specified in the PICO/PECO tables (see Box 2-2). Depending on the indicator being set (e.g., AR or UL), an outcome can be a clinical condition associated with nutrient deficiency or excess; a measureable, reliable, and valid biomarker (e.g., plasma concentration) of the intake/status of the nutrient concerned; or a biomarker associated with a metabolic or inflammatory process, immune status, or other functional metrics of nutrient status.

Select an indicator The nutrient review process begins by selecting an indicator for the AR or UL (see Figure 2-2). This indicator is a health outcome or surrogate marker that serves as a measure of exposure to the nutrient of interest and becomes the foundation for estimating the reference values. In most cases, there is a single outcome measure for each nutrient’s AR or UL and for each age/sex group. Indicator selection is generally based on expert judgment and includes a thorough review of all relevant evidence, particularly when associations between a nutrient and health outcome are controversial.

Appraisal of internal and external validity (risk of bias) Evaluation of risk of bias is an inherent step in the systematic review process that requires assessment of limitations to the internal and external validity of each study included in a systematic review (Higgins and Green, 2011). Internal validity represents the extent to which a given study is able to provide accurate estimates of its outcomes of interest. External validity indicates the extent to which the results of a study can be extrapolated to a population that differs from the study population in some respect. Limitations to internal and external validity can also be expressed as risk of internal and external bias. Such limitations can arise, for example, from using samples from a limited number of countries to provide estimates for an entire population (a limitation to external validity), or from considering a limited number of food items as a proxy for an individual’s entire diet (a limitation to internal validity). The concept of risk of bias is different from that of study quality, since even a well-designed study can be affected by some type of risk of bias (e.g., for ethical reasons it is sometimes not possible to randomize the treatment to the individual). Risk of bias and ways to evaluate and manage it are discussed in more detail in Chapter 3.

Select an Appropriate Method: Intake–Response Assessment, Factorial Approach, or Balance Studies

Intake–response assessment The intake–response assessment describes how a known physiological outcome changes according to the intake of a nutrient. The physiological outcome may be a biomarker of function, disease, or other health outcome. Biomarkers are indicators on the causal pathway that link the intake of a nutrient to an endpoint or health outcome of interest and, as such, can be used to establish a causal relationship between nutrient intake and a deficiency disease. To illustrate, vitamin C is required to prevent scurvy, a disease characterized by fatigue, inflamed and bleeding gums, easy bruising, and poor wound healing (Medscape, 2017). Research in men recovering from scurvy has shown that vitamin C intakes of 60 to 100 mg/day elevate plasma ascorbate concentrations to about 50 μmol/L and body stores to between 1 and 1.5 grams (EFSA NDA Panel, 2013; Medscape, 2017). EFSA’s Panel on Dietetic Products, Nutrition and Allergies (NDA Panel) chose an average vitamin C requirement of 80 mg/day for women and 90 mg/day for men to maintain fasting plasma ascorbate concentrations of 50 μmol/L (EFSA NDA Panel, 2013).
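In its simplest form, reading a requirement off an intake–response curve means finding the intake at which the biomarker reaches its target value. The sketch below interpolates linearly between made-up (intake, plasma ascorbate) pairs, which are not the data EFSA used. It involves a simplification: interpolating a group-mean curve gives the intake at which the *average* response reaches the target, which only approximates the median individual requirement that defines an AR. The helper name is hypothetical.

```python
def intake_for_target(curve, target):
    """Linearly interpolate the intake at which a monotonically increasing
    intake-response curve reaches `target`; returns None if out of range."""
    points = sorted(curve)
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if y0 <= target <= y1:
            if y1 == y0:
                return float(x0)
            return x0 + (target - y0) * (x1 - x0) / (y1 - y0)
    return None

# Hypothetical (intake mg/day, mean fasting plasma ascorbate umol/L) pairs,
# made up for illustration; they are not the data EFSA used.
curve = [(30, 20.0), (60, 38.0), (90, 52.0), (120, 60.0)]
estimate = intake_for_target(curve, target=50.0)
print(f"{estimate:.0f} mg/day")
```

Published assessments typically fit a statistical model to individual-level data rather than interpolating between group means.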

As discussed below, biomarkers can also be used to establish an association between nutrient intake and risk of chronic disease. In addition to their use in preventing deficiency and reducing risk of chronic disease, intake–response assessments are also used to determine safe upper intake levels, as described below.

Preventing deficiency Avoiding nutrient deficiency was the original basis for establishing NRVs. In the United States, the first RDAs (1941) were used to inform food relief programs following the Great Depression, provide nutritionally adequate food provisions for the military in the Second World War, and serve as a basis for food fortification and other federal food guidance policies (Murphy et al., 2016; NRC, 1941). Establishing intake recommendations to prevent deficiency requires a thorough understanding of a population’s dietary patterns and adequacy of intake, as well as a sensitive and specific biomarker of nutritional status with an established cutoff value that defines deficiency. Even when the relationship between nutrient intake and clinical manifestations of deficiency is clear, deriving NRVs is often complicated by related questions, such as how to maintain reserves of the nutrient or ensure optimal biological activity.

Preventing risk of chronic disease Avoiding nutritional deficiency is still a more pressing public health concern than reducing risk of chronic disease in much of the world. However, although scarcity of data remains a problem, as scientific understanding of the relationship between diet and disease advances, it has become apparent that it is necessary to evaluate the influence of different nutrients and nonessential food substances on the long-term probability of developing chronic diseases such as cancer, cardiovascular disease, or diabetes (NASEM, 2017; Yetley et al., 2017). Yet, chronic diseases can take decades to develop, and many factors influence their etiology, including diet, with each factor playing only a small part in the causal pathway. Additionally, intake of a given nutrient or nonessential food substance varies widely over the latency period for a chronic disease; much of the uncertainty in assessing relationships between intake and chronic disease lies in accurately measuring dietary patterns (NASEM, 2017).

Although biomarkers of intake can be objective measures of diet, they are prone to random error. As such, using biomarkers of intake for chronic disease endpoints introduces uncertainty. Thus, a biomarker of intake must be validated (NASEM, 2017; Yetley et al., 2017). Another challenge lies in choosing meaningful and suitable outcome indicators for the chronic disease in question, or a valid surrogate for these outcomes (NASEM, 2017). The analytical strategy for incorporating chronic disease into consideration for deriving an NRV has to account for such challenges, and the experts responsible for such analysis, as with all such studies, must be clear about how uncertainty in the data is addressed.
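A simulation can show why random error in a biomarker of intake matters. It illustrates one well-known consequence of classical (purely random, additive) measurement error in an exposure measure: the estimated intake–outcome slope is attenuated toward zero by the factor var(true) / (var(true) + var(error)), often called regression dilution. Everything below is simulated. The error variance is set equal to the true-intake variance, so the expected attenuation factor is 0.5 and the biomarker-based slope should come out near half the true slope.

```python
import random

def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys on xs."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx

rng = random.Random(42)  # fixed seed for reproducibility
n, true_slope = 20_000, 0.5
true_intake = [rng.gauss(0.0, 1.0) for _ in range(n)]
outcome = [true_slope * x + rng.gauss(0.0, 1.0) for x in true_intake]
# Simulated biomarker of intake = true intake + random error of equal variance.
biomarker = [x + rng.gauss(0.0, 1.0) for x in true_intake]

slope_true = ols_slope(true_intake, outcome)
slope_biomarker = ols_slope(biomarker, outcome)
print(f"slope using true intake: {slope_true:.2f}")
print(f"slope using error-prone biomarker: {slope_biomarker:.2f}")
```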

Determining a safe upper level of intake NRVs usually include a safe upper intake level. As stated previously, ULs are not recommended intakes; rather, they are estimates of the highest level of daily intake that carries no appreciable risk of adverse health effects (EFSA NDA Panel, 2006; King and Garza, 2007). While setting the lower bound of a reference value is often a matter of estimating an intake that would avoid deficiency, the upper bound is both conceptually and analytically more complicated to derive. For this reason, the concept has been called ambiguous, “based more on what it is not, than on what it is” (Vieth, 2007). Either the no observed adverse effect level (NOAEL) or the lowest observed adverse effect level (LOAEL) is used to identify a UL, with adjustments made for uncertainty (also see Chapter 3).
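In arithmetic terms, this adjustment amounts to dividing a point of departure (the NOAEL, or the LOAEL if no NOAEL exists) by an uncertainty factor chosen for the specific nutrient. The sketch below is a minimal illustration with hypothetical values and a hypothetical helper name. It does not reproduce any published UL derivation, and in practice choosing the uncertainty factor is the hard part.

```python
def upper_level(noael=None, loael=None, uncertainty_factor=1.0):
    """UL = point of departure / uncertainty factor.

    The NOAEL is used when available; otherwise the LOAEL is used, which
    normally calls for a larger uncertainty factor. The factor is chosen
    nutrient by nutrient rather than fixed."""
    if uncertainty_factor < 1.0:
        raise ValueError("uncertainty factor should be at least 1")
    point_of_departure = noael if noael is not None else loael
    if point_of_departure is None:
        raise ValueError("a NOAEL or a LOAEL is required")
    return point_of_departure / uncertainty_factor

# Hypothetical values (mg/day), not taken from any published UL derivation.
print(upper_level(noael=3000, uncertainty_factor=1.5))  # 2000.0
print(upper_level(loael=1000, uncertainty_factor=5.0))  # 200.0
```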

An additional challenge with the UL is that when the usual dietary intake pattern results in a percentage of the population exceeding the UL, questions arise about the intake level at which toxicity is actually observed. Vitamin A intake in young children illustrates the problem. In 2002, roughly one-quarter of preschool-aged children in the United States exceeded the UL for vitamin A intake from dietary sources alone; among those who took multivitamins, three-quarters exceeded the UL (Allen and Haskell, 2002). In contrast, dietary vitamin A deficiency is common among children in South Asia and sub-Saharan Africa. In low-income countries, twice-yearly supplementation programs for young children are a common strategy to combat this problem (Kraemer et al., 2008; WHO, 2018), and there is growing interest in home and commercial fortification programs to improve intake of vitamin A and other micronutrients on a more regular basis (Kraemer et al., 2008). When faced with such variation in dietary intake patterns, policy makers all over the world need to know just how narrow the safe intake range is.

Even consumption at or near the upper bound of a nutrient intake is not necessarily desirable, especially if sustained over a long period of time. Yet, consumption of many nutrients at higher levels is not uncommon in affluent countries, partly because of supplement use. U.S. national survey data suggest that about 30 percent of children and one-quarter to one-half of adults take dietary supplements regularly (Bailey et al., 2013; Office of Dietary Supplements, 2015). Research from Europe suggests wider variation in supplement use, ranging from low prevalence in Greece to half of men and almost two-thirds of women in Denmark (Skeie et al., 2009).

Factorial approach The factorial approach is used when biochemical indicators are not representative of actual nutrient levels. Instead, this method uses quantification of nutrient losses as an estimate of the physiological requirement. Use of the factorial approach requires taking into consideration the efficiency of absorption, and accounting for phytates and other mineral-binding compounds in food that can affect absorption.
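Accounting for absorption means dividing the absorbed (physiological) requirement by the fraction of the nutrient that is absorbed from the diet. The sketch below uses a hypothetical helper, a made-up absorbed requirement, and made-up absorption fractions for diets lower and higher in phytate. The fractions are illustrative placeholders, not published estimates.

```python
def dietary_requirement(absorbed_need, fractional_absorption):
    """Convert a physiological (absorbed) requirement into the dietary intake
    needed to supply it, given the fraction of the nutrient that is absorbed."""
    if not 0.0 < fractional_absorption <= 1.0:
        raise ValueError("fractional absorption must be in (0, 1]")
    return absorbed_need / fractional_absorption

absorbed_need = 2.0  # hypothetical absorbed requirement, mg/day
# Hypothetical absorption fractions for diets lower and higher in phytate.
for diet, fraction in {"lower-phytate diet": 0.40, "higher-phytate diet": 0.20}.items():
    print(f"{diet}: {dietary_requirement(absorbed_need, fraction):.1f} mg/day")
```

With these placeholder values, halving the absorption fraction doubles the dietary intake needed to meet the same physiological requirement.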

Balance study Like the factorial approach, the balance study can be used when the nutrient under review has no biomarker that represents actual nutrient levels. Balance studies measure intake and excretion; when the two are equal, the body is assumed to be saturated. The approach also assumes that the size of the body pool of the nutrient is appropriate and that increasing intake provides no additional benefit. It is most often used to determine protein and mineral requirements. For protein, nitrogen balance studies are used to determine the amount of protein needed to replace losses without increasing total body nitrogen. As an example of using balance studies to estimate a mineral NRV, EFSA found that adults older than 25 years need only to replace the calcium lost in urine, feces, sweat, and skin cells (EFSA NDA Panel, 2015).
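The text does not specify how balance data are analyzed. One common approach, assumed here, is to regress balance (intake minus total measured excretion) on intake across several controlled intake levels and take the intake at which the fitted balance is zero. A straight-line fit is the simplest choice; nonlinear or breakpoint models are also used. The function `zero_balance_intake` and the data are hypothetical.

```python
from statistics import mean


def zero_balance_intake(intakes, excretions):
    """Intake at which the fitted balance (intake - excretion) equals zero.

    Hypothetical helper: fits balance = intercept + slope * intake by
    ordinary least squares and solves for the zero crossing.
    """
    balances = [i - e for i, e in zip(intakes, excretions)]
    x_bar, y_bar = mean(intakes), mean(balances)
    sxx = sum((x - x_bar) ** 2 for x in intakes)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(intakes, balances))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    if slope <= 0:
        raise ValueError("balance does not rise with intake; no zero crossing")
    return -intercept / slope


# Toy data (mg/day): excretion summed over urine, feces, sweat, and skin.
intakes = [400, 600, 800, 1000, 1200]
excretions = [420, 580, 740, 900, 1060]
print(round(zero_balance_intake(intakes, excretions), 1))  # 500.0
```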

Conduct a Dietary Intake Assessment of the Population

The dietary intake assessment is used to assess the prevalence of intakes that fall outside a reference value or range in a specific population subgroup. Population-based intake data, generally available from national surveys or other large population databases, are used to estimate the prevalence of deficiency or excess. Correcting for intraindividual (day-to-day) variability is important; this can be done by quantifying intake on one day for the full sample and on two or more nonconsecutive days for a representative subsample (NRC, 1986). Biomarkers may be useful for estimating and validating the adequacy of reported intakes relative to the reference value (Carriquiry, 1999).
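A minimal sketch of the adjustment and prevalence steps follows, under simplifying assumptions. Within-person variance is pooled from a two-day subsample. One-day intakes are shrunk toward the group mean so that only between-person spread remains (the idea behind the NRC, 1986, adjustment; more complete methods also transform skewed intake data first). Prevalence of inadequacy is then the share of adjusted intakes below the AR, as in the cut-point method, which is valid only under that method's own assumptions. All function names and data are hypothetical.

```python
from math import sqrt
from statistics import mean, pvariance


def within_person_variance(replicate_pairs):
    """Pooled day-to-day variance from a subsample observed on two nonconsecutive days."""
    # With two days per person, each person's sample variance is (d1 - d2)**2 / 2.
    return mean((d1 - d2) ** 2 / 2 for d1, d2 in replicate_pairs)


def adjust_to_usual(one_day_intakes, s2_within):
    """Shrink one-day intakes toward the group mean (simplified NRC-style adjustment).

    Deviations from the mean are scaled by s_between / s_observed, where
    s_between**2 = s_observed**2 - s_within**2, so the adjusted values keep
    the group mean but lose the day-to-day part of the spread.
    """
    grand_mean = mean(one_day_intakes)
    s2_obs = pvariance(one_day_intakes, grand_mean)
    s2_between = max(s2_obs - s2_within, 0.0)
    scale = sqrt(s2_between / s2_obs) if s2_obs > 0 else 0.0
    return [grand_mean + (x - grand_mean) * scale for x in one_day_intakes]


def prevalence_outside(usual_intakes, ar, ul):
    """Share of usual intakes below the AR (cut-point method) and above the UL."""
    n = len(usual_intakes)
    below_ar = sum(x < ar for x in usual_intakes) / n
    above_ul = sum(x > ul for x in usual_intakes) / n
    return below_ar, above_ul


# Toy data for illustration only (same units throughout).
day1 = [4, 6, 8, 10, 12]
replicates = [(5, 7), (9, 11)]
usual = adjust_to_usual(day1, within_person_variance(replicates))
print(prevalence_outside(day1, ar=6.2, ul=11.5))   # (0.4, 0.2) unadjusted
print(prevalence_outside(usual, ar=6.2, ul=11.5))  # (0.2, 0.0) adjusted
```

Without the adjustment, day-to-day noise widens the intake distribution and inflates both tails, overstating the prevalence of both inadequacy and excess.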

Consider Implications or Special Concerns for the Population of Interest

Reference values may need to be adjusted for particular populations, especially those consuming a plant-based diet. In the case of zinc, for example, NRVs are adjusted to account for reduced absorption when phytates are present in food sources (Gibson et al., 2010). Deriving reference values for vulnerable population subgroups also requires considering the uncertainties introduced by these adjustments. In addition, the public health policy implications of the reference values need to be considered. In Chapter 4, the committee examines how these adjustments, uncertainties, and implications are managed for young children (birth up to 5 years of age) and women of reproductive age.
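The effect of phytate on zinc is often expressed as a phytate:zinc molar ratio, computed from the molecular weight of phytic acid (about 660 g/mol) and the atomic weight of zinc (about 65.4 g/mol). The sketch below computes that ratio and then, as a hypothetical illustration, divides an absorbed-zinc need by an absorption fraction chosen from the ratio. The default cutoffs (5 and 15) and absorption fractions (50, 30, and 15 percent) are assumptions modeled on the three-band scheme in earlier FAO/WHO guidance and should be verified before use; `dietary_zinc_need` and the 1.5 mg figure are placeholders.

```python
PHYTATE_MW = 660.04  # g/mol, phytic acid
ZINC_AW = 65.38      # g/mol, zinc


def phytate_zinc_molar_ratio(phytate_mg, zinc_mg):
    """Molar ratio of phytate to zinc in a food, meal, or daily diet."""
    return (phytate_mg / PHYTATE_MW) / (zinc_mg / ZINC_AW)


def dietary_zinc_need(absorbed_need_mg, molar_ratio,
                      bands=((5, 0.50), (15, 0.30)), low_absorption=0.15):
    """Hypothetical helper: divide an absorbed-zinc need by an assumed absorption.

    `bands` holds (upper molar-ratio cutoff, fractional absorption) pairs,
    checked in order; ratios at or above the last cutoff use `low_absorption`.
    The defaults are illustrative assumptions, not endorsed values.
    """
    for cutoff, absorption in bands:
        if molar_ratio < cutoff:
            return absorbed_need_mg / absorption
    return absorbed_need_mg / low_absorption


ratio = phytate_zinc_molar_ratio(phytate_mg=600, zinc_mg=5)
print(round(ratio, 1))                            # 11.9
print(round(dietary_zinc_need(1.5, ratio), 2))    # 5.0
```

Keeping the cutoffs and absorption fractions as parameters makes the uncertainty in these adjustments explicit and easy to vary in a sensitivity analysis.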

CONCLUSION AND RECOMMENDATION

The committee came to the following conclusion:

The purpose of deriving NRVs is to ensure that the majority of a generally healthy population will have sufficient nutrient intake levels to prevent deficiency disease and avoid adverse effects of excessive intake. Additionally, when applicable, reference values may be determined to reduce the risk of chronic disease. The AR and UL are the two key values needed to carry out the necessary risk assessment and to develop public health policies, such as food fortification strategies. Statistical tools can be employed to calculate other values that represent the needs of a specific proportion of the population, for example, the intake value that meets the needs of 97.5 percent of the population (i.e., the RI). Information on the degree of uncertainty is also an important consideration when estimating these values.
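The statistical step from the AR to the RI can be sketched as follows, assuming requirements are normally distributed and their spread is summarized by a coefficient of variation (CV). The 97.5th percentile lies about 1.96 standard deviations above the mean; the common shorthand AR + 2 SD covers about 97.7 percent. With a CV of 10 percent, the value IOM panels assumed when data on variability were lacking, the RI is about 1.2 times the AR. The function name `reference_intake` and the AR of 100 are illustrative.

```python
from statistics import NormalDist


def reference_intake(ar, cv, coverage=0.975):
    """Intake that meets the needs of `coverage` of a population.

    Assumes requirements are normally distributed with mean `ar` and
    standard deviation cv * ar (cv = coefficient of variation).
    """
    z = NormalDist().inv_cdf(coverage)
    return ar * (1 + z * cv)


# With a 10 percent CV: exact 97.5th percentile vs. the AR + 2 SD convention.
print(round(reference_intake(100, 0.10), 1))  # 119.6
print(round(100 * (1 + 2 * 0.10), 1))         # 120.0
print(round(NormalDist().cdf(2), 4))          # 0.9772
```

Because the RI follows directly from the AR and the estimated variability of requirements, the quality of both inputs, and the uncertainty around them, determines how much confidence the RI deserves.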

Recommendation 1. Nutrient reference expert panels should make two values their priority: specifically, the population average requirement (AR) and safe upper levels of intake (UL). Their reports should estimate the interindividual variability of requirements and use it to derive the AR. The expert panel should also acknowledge the basis and uncertainty in estimation of both values.

REFERENCES

AHRQ (Agency for Healthcare Research and Quality). 2013. SRDR: Systematic Review Data Repository. Rockville, MD: Agency for Healthcare Research and Quality. http://www.ahrq.gov/cpi/about/otherwebsites/srdr.ahrq.gov/index.html (accessed May 31, 2018).

Allen, L. H. 2006. New approaches for designing and evaluating food fortification programs. The Journal of Nutrition 136:1055-1058.

Allen, L. H., and M. Haskell. 2002. Estimating the potential for vitamin A toxicity in women and young children. The Journal of Nutrition 132:2907S-2919S.

Allen, L. H., B. de Benoist, O. Dary, and R. Hurrell. 2006. Guidelines on food fortification with micronutrients. Geneva, Switzerland: World Health Organization, Food and Agriculture Organization.

Bailey, R. L., J. J. Gahche, P. R. Thomas, and J. T. Dwyer. 2013. Why US children use dietary supplements. Pediatric Research 74:737.

Barr, S. I., S. P. Murphy, and M. I. Poos. 2002. Interpreting and using the Dietary Reference Intakes in dietary assessment of individuals and groups. Journal of the American Dietetic Association 102:780-788.

Bourges, H., E. Casanueva, and J. Rosado. 2005. Recomendaciones de ingestión de nutrimentos para la población mexicana. Mexico: Instituto Danone.

Carriquiry, A. L. 1999. Assessing the prevalence of nutrient inadequacy. Public Health Nutrition 2(1):23-33.

EFSA (European Food Safety Authority). 2017a. Dietary reference values for nutrients. Summary report. EFSA supporting publication 2017:e15121.

EFSA. 2017b. Overview on tolerable upper intake levels as derived by the Scientific Committee on Food (SCF) and the EFSA Panel on Dietetic Products, Nutrition and Allergies (NDA). https://www.efsa.europa.eu/sites/default/files/assets/UL_Summary_tables.pdf (accessed January 11, 2018).

EFSA Dietetic Products, Nutrition, and Allergies (NDA) Panel. 2006. Tolerable upper intake levels for vitamins and minerals. EFSA Journal 1-480.

EFSA NDA Panel. 2010. Scientific opinion on principles for deriving and applying dietary reference values. EFSA Journal 8(3):1458-1488.

EFSA NDA Panel. 2013. Scientific opinion on dietary reference values for vitamin C. EFSA Journal 11(11):3418. https://doi.org/10.2903/j.efsa.2013.3418.

EFSA NDA Panel. 2015. Scientific opinion on dietary reference values for calcium. EFSA Journal 13(5):4101.

Gibson, R. S., K. B. Bailey, M. Gibbs, and E. L. Ferguson. 2010. A review of phytate, iron, zinc, and calcium concentrations in plant-based complementary foods used in low-income countries and implications for bioavailability. Food and Nutrition Bulletin 31(2 Suppl.):S134-S146.

Higgins, J. P. T., and S. Green (editors). 2011. Cochrane handbook for systematic reviews of interventions version 5.1.0. The Cochrane Collaboration. http://handbook.cochrane.org (accessed February 10, 2018).

IOM (Institute of Medicine). 1998a. Dietary Reference Intakes for thiamin, riboflavin, niacin, vitamin B6, folate, vitamin B12, pantothenic acid, biotin, and choline. Washington, DC: National Academy Press. https://doi.org/10.17226/6015.

IOM. 1998b. Dietary Reference Intakes: A risk assessment model for establishing upper intake levels for nutrients. Washington, DC: National Academy Press. https://doi.org/10.17226/6432.

IOM. 2000. Dietary Reference Intakes: Applications in dietary assessment. Washington, DC: National Academy Press. https://doi.org/10.17226/9956.

IOM. 2011a. Dietary Reference Intakes for calcium and vitamin D. Washington, DC: The National Academies Press. https://doi.org/10.17226/13050.

IOM. 2011b. Finding what works in health care: Standards for systematic reviews. Washington, DC: The National Academies Press. https://doi.org/10.17226/13059.

King, J. C., and C. Garza. 2007. Harmonization of nutrient intake values. Food and Nutrition Bulletin 28(1):S3-S12.

Kraemer, K., M. Waelti, S. de Pee, R. Moench-Pfanner, J. N. Hathcock, M. W. Bloem, and R. D. Semba. 2008. Are low tolerable upper intake levels for vitamin A undermining effective food fortification efforts? Nutrition Reviews 66(9):517-525.

Lund, H., K. Brunnhuber, C. Juhl, K. Robinson, M. Leenaars, B. F. Dorch, G. Jamtvedt, M. W. Nortvedt, R. Christensen, and I. Chalmers. 2016. Towards evidence based research. British Medical Journal 355:i5440.

Medscape. 2017. Scurvy: Practice essentials. https://emedicine.medscape.com/article/125350-overview (accessed November 9, 2017).

Murphy, S. P., A. A. Yates, S. A. Atkinson, S. I. Barr, and J. Dwyer. 2016. History of nutrition: The long road leading to the Dietary Reference Intakes for the United States and Canada. Advances in Nutrition 7(1):157-168.

NASEM (National Academies of Sciences, Engineering, and Medicine). 2017. Guiding principles for developing Dietary Reference Intakes based on chronic disease. Washington, DC: The National Academies Press. https://doi.org/10.17226/24828.

NASEM. 2018. Global harmonization of methodological approaches to nutrient intake recommendations: Proceedings of a workshop. Washington, DC: The National Academies Press. https://doi.org/10.17226/25023.

Ng, M., T. Fleming, M. Robinson, T. Blake, N. Graetz, C. Margono, E. C. Mullany, S. Biryukov, C. Abbafati, S. F. Abera, J. P. Abraham, et al. 2014. Global, regional, and national prevalence of overweight and obesity in children and adults during 1980–2013: A systematic analysis for the Global Burden of Disease Study 2013. Lancet 384(9945):766-781.

NRC (National Research Council). 1941. Recommended dietary allowances. Washington, DC: National Academy Press. https://doi.org/10.17226/13286.

NRC. 1986. Nutrient adequacy: Assessment using food consumption surveys. Washington, DC: National Academy Press. https://doi.org/10.17226/618.

Office of Dietary Supplements. 2015. Multivitamin/mineral supplements fact sheet for health professionals. https://ods.od.nih.gov/factsheets/MVMS-HealthProfessional (accessed November 30, 2017).

Paik, H. Y. 2008. Dietary reference intakes for Koreans (KDRIs). Asia Pacific Journal of Clinical Nutrition 17(Suppl. 2):416-419.

Russell, R., M. Chung, E. M. Balk, S. Atkinson, E. L. Giovannucci, S. Ip, A. H. Lichtenstein, S. T. Mayne, G. Raman, A. C. Ross, T. A. Trikalinos, K. P. West, Jr., and J. Lau. 2009. Opportunities and challenges in conducting systematic reviews to support the development of nutrient reference values: Vitamin A as an example. The American Journal of Clinical Nutrition 89(3):728-733.

Sasaki, S. 2008. Dietary reference intakes (DRIs) in Japan. Asia Pacific Journal of Clinical Nutrition 17:420-444.

Skeie, G., T. Braaten, A. Hjartåker, M. Lentjes, P. Amiano, P. Jakszyn, V. Pala, A. Palanca, E. M. Niekerk, H. Verhagen, K. Avloniti, et al. 2009. Use of dietary supplements in the European Prospective Investigation into Cancer and Nutrition Calibration Study. European Journal of Clinical Nutrition 63:S226-S238.

Slutsky, J., D. Atkins, S. Chang, and B. A. Sharp. 2010. Comparing medical interventions: AHRQ and the effective health care program. Journal of Clinical Epidemiology 63(5):481-483.

Vieth, R. 2006. Critique of the considerations for establishing the tolerable upper intake level for vitamin D: Critical need for revision upwards. The Journal of Nutrition 136:1117-1122.

WHO (World Health Organization). 2018. Vitamin A supplementation in infants and children 6-59 months of age. http://www.who.int/elena/titles/guidance_summaries/vitamina_children/en (accessed January 11, 2018).

Yetley, E. A., A. J. MacFarlane, L. S. Greene-Firestone, C. Garza, J. D. Ard, S. A. Atkinson, D. M. Bier, A. L. Carriquiry, W. R. Harlan, D. Hattis, J. C. King, D. Krewski, D. L. O’Connor, R. L. Prentice, J. V. Rodricks, and G. A. Wells. 2017. Options for basing Dietary Reference Intakes (DRIs) on chronic disease endpoints: Report from a joint US/Canadian-sponsored working group. American Journal of Clinical Nutrition 105(Suppl.):249S-285S.
