5

Building the Evidence Base: Research Approaches for Nutrients in Disease States

Session 5 was moderated by Alex Kemper, Chief of the Division of Ambulatory Pediatrics at the Nationwide Children’s Hospital and a member of the U.S. Preventive Services Task Force. The first presentation was delivered by Amanda MacFarlane, Research Scientist and Head of the Micronutrient Research Section in the Nutrition Research Division at Health Canada. MacFarlane discussed the type and strength of evidence needed to determine special nutrient requirements. The second talk, by Patrick Stover, Texas A&M AgriLife, focused on identifying and validating biomarkers in disease states. Nicholas Schork, Professor and Director of Human Biology at the J. Craig Venter Institute, delivered the next presentation, which examined innovative research designs aimed at determining efficacy of interventions. Two presentations then provided case examples of the issues involved in building an evidence base for special nutrient requirements for complex diseases. Gary Wu, Professor in Gastroenterology at the Perelman School of Medicine at the University of Pennsylvania and a Planning Committee member, discussed inflammatory bowel disease (IBD), and Steven Clinton, Professor in the Department of Internal Medicine at The Ohio State University and a Planning Committee member, discussed cancer. The session concluded with a moderated panel discussion and questions and answers with workshop participants.

TYPE AND STRENGTH OF EVIDENCE NEEDED FOR DETERMINING SPECIAL NUTRIENT REQUIREMENTS1

Dietary Reference Intake (DRI) values2 are based on nutrient intakes and indicators, especially when talking about indicators of adequacy. The public health orientation of the DRIs, MacFarlane said, is that nutritional deficiencies in a population can be avoided. She added that the DRIs are also focused on adverse effects of high levels of intakes of specific nutrients (i.e., Tolerable Upper Intake Levels [ULs]). The DRIs are therefore based on data from apparently healthy populations and they apply to apparently healthy populations.

The DRIs do take into consideration the concept of chronic disease risk reduction, and she clarified that this has always been done when sufficient data for efficacy and safety exist. Additional guidance has recently been provided in the 2017 National Academies consensus study report Guiding Principles for Developing Dietary Reference Intakes Based on Chronic Disease (NASEM, 2017).

Adapting the Dietary Reference Intake Approach to Special Nutrient Requirements

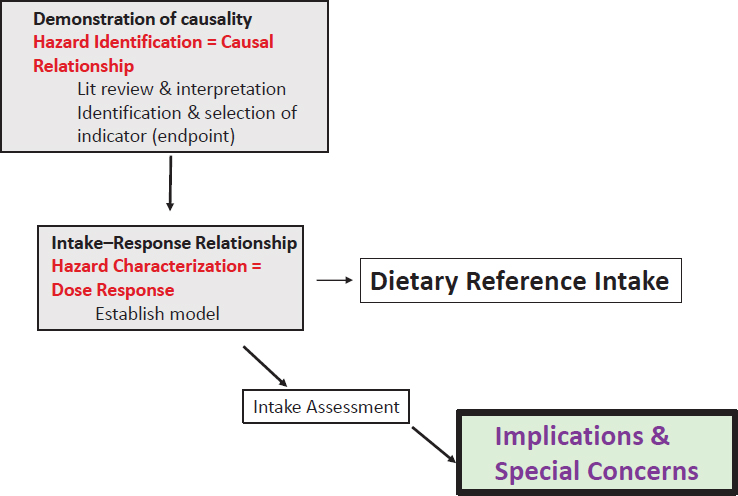

MacFarlane continued, saying that the DRI approach has some fundamental aspects that could be adapted and potentially applied to special nutrient requirements and disease states. However, knowing that a relationship between nutrient and disease state exists is not sufficient to develop standards. To develop standards, she said, a certain level of evidence is needed. The first step in the risk assessment approach for setting DRIs is the demonstration of causality, which is the hazard identification (see Figure 5-1 and the presentation by Patsy Brannon in Chapter 1).

This requires a systematic review and, if possible, a meta-analysis of the literature. It also means interpretation of the data. As part of the systematic review, this process also includes the identification and selection of the appropriate indicators or endpoints, which, in the context of the DRIs, are health outcomes.

Once a causal relationship between the intake of a particular nutrient and a disease of deficiency is established, the next step is to model the intake–response relationship. The data required for this step are different from those required to identify a causal relationship. Data are needed to show, at multiple doses, that an association with the endpoint of interest exists. Once that dose–response model is developed, it is possible to mathematically derive DRI values.

___________________

1 This section summarizes information presented by Amanda MacFarlane.

2 For DRI values, see http://nationalacademies.org/HMD/Activities/Nutrition/SummaryDRIs/DRI-Tables.aspx (accessed June 6, 2018).

SOURCE: As presented by Amanda MacFarlane, April 3, 2018.
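The derivation of intake values from a requirement distribution can be illustrated with a minimal sketch. It assumes the conventional DRI relationship in which a Recommended Dietary Allowance (RDA) is set two standard deviations above the Estimated Average Requirement (EAR), with a default coefficient of variation of 10 percent when the standard deviation is unknown. The function name and the numeric inputs are hypothetical and were not part of the presentation.

```python
def rda_from_ear(ear, cv=0.10):
    """Return EAR + 2 * SD, where SD is expressed as cv * EAR.

    cv=0.10 is the conventional default when the spread of
    requirements has not been measured (an assumption here).
    """
    if ear <= 0 or cv < 0:
        raise ValueError("ear must be positive and cv non-negative")
    return ear * (1 + 2 * cv)


# Hypothetical EAR of 100 units/day for an apparently healthy group.
healthy_rda = rda_from_ear(100.0)

# Hypothetical disease state with more variable requirements (cv = 0.20).
disease_rda = rda_from_ear(100.0, cv=0.20)
```

With these illustrative inputs, the same average requirement yields a higher recommended intake once the spread of requirements widens, which is one reason a paucity of dose–response data in a disease state matters for the derived values.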

Finally, DRI committees do not end their work with setting the intake values. They also look at the intake assessment of the two populations of concern, which are Canada and the United States, to assess the risk of deficiency or excess. Additionally, DRI committees discuss implications and special concerns, for example, among specific population groups.

Applying the Dietary Reference Intake Risk Assessment Framework to Disease States

MacFarlane then turned her attention to applying this framework to nutrient requirements in specific disease states, arguing that some aspects of the DRI risk assessment approach are fundamental to establishing special nutrient requirement intake values in disease states.

Establishing Causality and Selecting Health Outcomes

When establishing causality, it is necessary to have a high level of confidence that an association exists between the exposure or intake of a

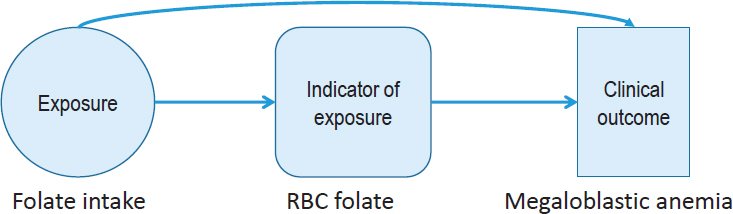

given nutrient and the clinical outcome (see Figure 5-2). Therefore, identifying and selecting appropriate indicators of intake, status, and health outcome is critical.

Using the example of folate, in this figure, she said that folate intake modifies red blood cell folate (the indicator of status), which is directly linked to the clinical outcome, which is megaloblastic anemia. Vitamin C and scurvy and vitamin D and rickets are two other examples where a high level of certainty exists that the nutrient has a causal relationship with the endpoint of concern.

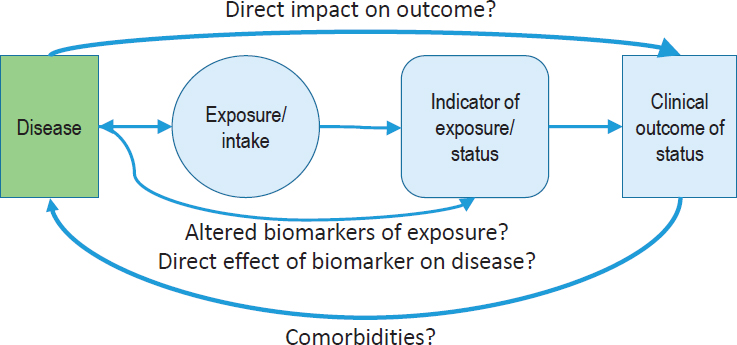

In the case of chronic disease, the aim is still to associate an exposure or intake with a clinical outcome, but the relationship is much more complicated. Essentially, a lower level of certainty of that relationship between the exposure and the clinical outcome or chronic disease exists, and it is often necessary to rely on the use of intermediate outcomes. MacFarlane then posed the question of how these relationships change when considering nutrient requirements for specific disease states. The direction of the relationship is different because instead of nutrient intake being associated with a disease of deficiency or chronic disease outcome, clinicians and investigators are looking at the disease state, which can affect exposure, and/or the indicator of status, and/or the clinical outcome associated with that indicator or status (see Figure 5-3). That is, the relationships are not always in one direction because the disease could have a direct impact on the outcome or vice versa, or because the disease itself could actually alter the biomarkers of status.

For example, IBD results in intestinal inflammation and malabsorption and is associated with low B vitamin and iron status and with anemia. The change in iron status could be the result of low iron intake or the result of the inflammation or malabsorption, that is, the disease itself. Another complicating factor in determining the outcome is the exposure.

NOTE: RBC = red blood cell.

SOURCE: As presented by Amanda MacFarlane, April 3, 2018.

NOTES: The direction of the nutrient–disease relationship differs depending on which outcome is addressed by nutrient intake and whether it demonstrates an intake–response relationship. The further away from intake, the higher the potential is for uncertainty of relationships.

SOURCE: As presented by Amanda MacFarlane, April 3, 2018.

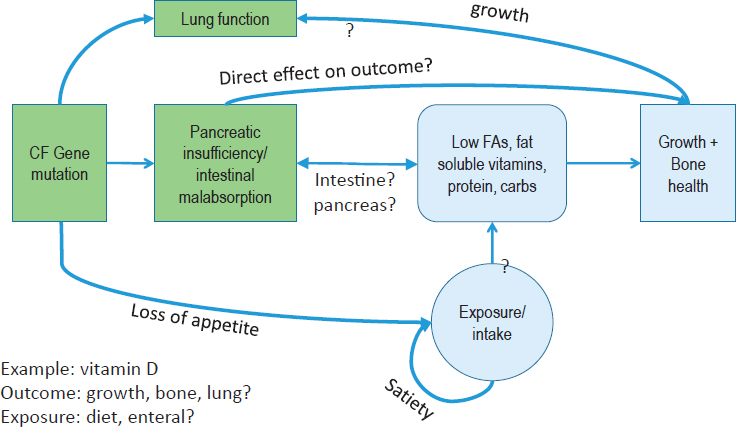

In the DRIs, the focus is dietary intake, but in disease states, the exposure could be intravenous, oral, or intraperitoneal. Every time the complexity increases, the level of uncertainty also increases. Cystic fibrosis (CF) is another good example of the complexities involved in establishing a special nutrient requirement for a disease (see Figure 5-4).

In this instance, the CF gene mutation is related to pancreatic insufficiency and to low status for fatty acids, fat soluble vitamins, proteins, and carbohydrates. All of these can be related to growth and bone health, with the latter being the traditional DRI health outcome associated with inadequate vitamin D. In this case, however, the CF presentation suggests that growth or lung function also could be the outcome of interest. It is critical to be transparent in these decisions, MacFarlane said, and to provide the data to support the decisions about selecting outcomes for establishing a special nutrient requirement for a disease.

Estimating Intake–Response Relationships

The next step is estimating intake–response relationships. For traditional diseases of deficiency, intakes are considered in terms of a threshold between what would be considered inadequate intakes and what would be considered safe and adequate. Traditionally, the gold standard for setting those thresholds is evidence from randomized controlled trials (RCTs) assessed through a systematic review and meta-analysis. Once those data have been obtained, it is possible to estimate the requirements. This becomes more difficult in the case of rare diseases, however, because evidence may be available on only a few people. Approaches to setting special nutrient requirements must consider the likelihood that a paucity of evidence exists. Future committees will have to grapple with questions about the acceptability of a lower level of evidence in the causal relationship and the ability to conduct dose–response modeling.

NOTES: What could be selected as the outcome of interest when establishing the requirements for a nutrient such as vitamin D? This figure shows proof of principle in nature and does not represent the likely higher complexity of the nutrient–disease relationship. CF = cystic fibrosis; FA = fatty acid.

SOURCE: As presented by Amanda MacFarlane, April 3, 2018.

The Concept of Harm as It Applies to Dietary Reference Intakes

The DRIs focus not only on the low end of intakes but also include the UL, above which adverse effects may occur. The DRIs also assume an interval of safe intakes between inadequacy and a UL. MacFarlane suggested that it could be argued that an interval of safe intakes may disappear when considering special nutrient requirements and disease states. Nutrient intakes in what would be considered the healthy range may actually pose harm for some, such as potassium in kidney disease, protein in phenylketonuria, or supplemental iron in hemochromatosis.

MacFarlane explained that it is even more complicated because the potential for overlap exists: an increase in nutrient intake could decrease risk of one disease endpoint (or health outcome) but increase risk of another. For example, ketogenic diets for people with mitochondrial disease may have beneficial effects for patients with epilepsy, but they could also have dyslipidemic effects and thus exacerbate existing dyslipidemia.

Defining a Distinct Nutrient Requirement

MacFarlane then posed a basic question: How is “special” or “distinct” defined when talking about nutrient requirements? Is it simply a matter of a shift in the intake distribution? If it is just a matter of shifting the Estimated Average Requirement (EAR) to a lower level, for example, how different does the EAR have to be to be considered distinct? In the context of setting nutrient requirement values in disease states, the reality of making decisions may depend not only on differences in distribution of requirements but on other considerations as well, such as prevalence and severity of disease; disease activity (i.e., whether a person is in an active disease state or remission); presence of inflammation; effective dose, method of administration, and matrix (i.e., diet, supplement, formulated diet); sex and life stage; nutrient form (i.e., synthetic or natural); genetics of the individuals; confidence that everyone is equally responsive if extrapolation from one group to another is done; and maintenance of nutritional balance (i.e., whether the removal of one nutrient will adversely affect the nutritional balance of other nutrients).

In summary, MacFarlane said that aspects of the DRI risk assessment approach are applicable and adaptable to determining special nutrient requirements in disease states, but that any adaptation of the approach must be transparent. Moreover, decisions about defining the clinical population for such nutrient requirements and the level of evidence that will be used to set the requirements must be defined very carefully. In addition, clear and transparent analysis of the risks and benefits is needed, especially when they overlap. Rationales for extrapolation, if they are even acceptable, are needed, as are the inclusion and definition of any special considerations when setting special nutrient values.

IDENTIFICATION AND VALIDATION OF BIOMARKERS IN DISEASE STATES3

Stover began his presentation by highlighting that when an individual has a chronic disease, the cutpoint between accumulated risk and actual disease onset is not well defined. The question therefore becomes whether the disease trajectory can be measured and how nutrition can modify that trajectory over the lifespan. The state of the art on knowledge of nutritional biomarkers has been covered in many excellent reports, Stover noted. For example, the BOND (Biomarkers of Nutrition for Development) Program report defined “biomarker” as a distinct biological or biologically derived molecule found in blood or other bodily fluids or tissues that is a sign of a process, event, condition, or disease (Raiten et al., 2011). Stover explained that measuring nutrient-specific biomarkers can determine exposure, identify changes in nutrient status, clarify function within the body, and indicate direct and indirect effects on systems.

When classifying and evaluating human nutrient needs in disease states, it is desirable to have a good indicator or biomarker of the disease itself and its severity. In addition, it is desirable to have measures that indicate whole body nutritional status, biomarkers for normal physiological function and clinical outcomes, and predictive biomarkers in terms of future chronic disease risk that may even be independent of the current disease state. In considering these measures, Stover noted that comorbidities must also be considered.

Evaluation of Biomarkers and Surrogate Endpoints in Chronic Disease, a report on the current state of evaluation of biomarkers and surrogate endpoints in chronic disease, discusses validated surrogate biomarkers, that is, biomarkers that directly relate a nutrient intake to a health outcome along a causal pathway (IOM, 2010). Because validated surrogate biomarkers are not common, non-validated intermediate functional biomarkers are often used, explained Stover. These biomarkers may not necessarily relate to a clinical outcome but indicate a dose–response relationship between a nutrient intake level and a physiological response.

The challenge in thinking about chronic disease, stated Stover, is that it can often be tissue specific. As a result, measuring the systemic nutrient status might not be appropriate. A good example, Stover noted, is the blood–brain barrier (BBB), where it may not be possible to detect what may be occurring in the brain if some disease process is occurring in brain tissue only. Functional biomarkers can provide insights into the physiology and pathology of a specific tissue.

___________________

3 This section summarizes information presented by Patrick Stover.

In the case of disease, Stover noted that it is important to determine what is being measured—the whole body, tissue, or the cell. Moreover, the major interest for biomarkers is in disease progression and/or comorbidities related to the disease-induced nutritional deficiency. For example, if certain proteins that are present in cerebrospinal fluid, such as s100beta and glial fibrillary acidic protein (GFAP), appear in the blood, this would potentially be an indication of a dysfunctional BBB, which could be used as a proxy for nutrient deficiencies in the brain. This type of biomarker could help in predicting nutrient deficiencies in certain diseases.

Precision Medicine

Predictive biomarkers are needed that can help clarify what nutrients will be affected by a disease, and whether increased input of that nutrient will affect a deficiency. Once those questions are answered, then other nutritional biomarkers can be used to determine the dose–response relationship that is required to meet that new requirement in the disease.

Stover noted that one of the challenges is that nutrient needs are altered as a disease progresses in severity. However, new, inexpensive microfluidic devices (the same technologies used for pregnancy tests) are being developed that can yield immediate real-time readouts of nutritional and disease status, allowing individuals to make real-time adjustments. Many companies are commercializing these point-of-care diagnostics, said Stover, showing that technology may be ahead of current science.

Disease Modifies Nutrition Status and Nutritional Biomarkers

Complex relationships sometimes exist between disease and biomarkers. For example, the BRINDA (Biomarkers Reflecting Inflammation and Nutritional Determinants of Anemia) project (https://brinda-nutrition.org), which seeks to examine the relationship between inflammation and biomarkers, contends that acute and chronic inflammation can modify nutrient biomarker measures and inflammation can have a direct effect on actual nutrient status. To support these points, BRINDA investigators concluded that the prevalence of low total body iron is underestimated if it is not adjusted for inflammation (Mei et al., 2017). That means, said Stover, that biomarkers in a disease state must be adjusted in order to fully understand actual nutritional status.
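The effect of adjusting a biomarker for inflammation can be illustrated with a small sketch. It assumes a generic log-scale regression correction of serum ferritin against C-reactive protein (CRP). The slope, the CRP reference value, the cutoff, and all sample values are hypothetical, and the code is not the BRINDA procedure itself.

```python
from math import exp, log


def adjust_ferritin(ferritin, crp, beta, crp_ref):
    """Remove the assumed inflammation-related rise in ferritin on the log scale.

    Values at or below the CRP reference are returned unchanged.
    """
    if crp <= crp_ref:
        return ferritin
    return exp(log(ferritin) - beta * (log(crp) - log(crp_ref)))


def prevalence_low(values, cutoff):
    """Fraction of values that fall below the cutoff."""
    return sum(v < cutoff for v in values) / len(values)


# Hypothetical (ferritin, CRP) pairs; units and values are illustrative only.
samples = [(10.0, 0.5), (20.0, 8.0), (18.0, 5.0),
           (40.0, 0.8), (30.0, 1.0), (16.0, 10.0)]
BETA = 0.3      # hypothetical regression slope
CRP_REF = 1.0   # hypothetical reference CRP
CUTOFF = 15.0   # illustrative cutoff for low iron stores

unadjusted = prevalence_low([f for f, _ in samples], CUTOFF)
adjusted = prevalence_low(
    [adjust_ferritin(f, c, BETA, CRP_REF) for f, c in samples], CUTOFF
)
```

Because inflammation raises measured ferritin in this toy data set, fewer individuals fall below the cutoff before adjustment than after it, which mirrors the direction of the underestimation described above.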

In conclusion, Stover pointed to the need for systems biomarkers. These are needed in part because many of the specific tissues of interest are not accessible for measurement. To illustrate, Stover described ongoing work by Karsten Hiller in Germany, who is investigating whether people with diabetes can be classified in terms of their Cori cycle functionality, which could in turn serve as a biomarker to prescribe either nutrients, diet, or drugs to treat that diabetes. Hiller and his team are now examining the potential for dietary interventions to see how diet can modify the Cori cycle in various disease states.

INNOVATIVE CAUSAL DESIGNS FOR EFFICACY: WHAT TYPE OF EVIDENCE IS NEEDED?4

New legislation, such as the 21st Century Cures Act, will have substantial implications for how evidence is brought to the U.S. Food and Drug Administration (FDA). The 21st Century Cures Act, explained Schork, calls for a reconsideration of the RCT, and perhaps even the substitution of RCTs with data summaries and real-world evidence. FDA has released a number of reports that advocate for these changes, including the idea to substitute Phase III and Phase IV clinical trials with “learning systems.” These systems provide a way to collect data on people, through approaches such as mining electronic medical records to look for trends that might be clinically useful for patients in the future.

N-of-1 Trials

Schork then described N-of-1 trials, a good example of the trend toward real-world evidence that has been gaining attention in recent years. In N-of-1 trials, a clinician takes a baseline measure, then provides an intervention to the patient and records clinical measures. The clinician may decide at some point to stop that intervention and try an alternative. The intervention(s) are done some number of times, with the clinician recording the clinical measure throughout the cycle of alternating treatments. This focus on helping a patient get better, rather than solely on evaluating an intervention, allows clinicians to test multiple interventions, and so long as the condition is not acute and life threatening, the clinician can see which one performs optimally for the patient. It is possible to provide this trial to multiple patients, where one patient may respond to one intervention, and another may respond to a different intervention. Data can be collected on these individuals to enable claims about correlations between the changes in the clinical parameter and certain measures. These trials leverage big data for individual patients to allow conclusions about health status in response to many kinds of interventions.

___________________

4 This section summarizes information presented by Nicholas Schork.

Many of the initial N-of-1 trials were not done with a great deal of rigor, and guides on conducting these studies have since been published. Schork emphasized that the power of N-of-1 studies is not due to the number of participants. It is due to the number of observations that can be made and the study designs that are used to make causal claims about the influence of an intervention on a clinically meaningful parameter. This approach is fundamentally different from the way historical population-based trials have been pursued.

Schork illustrated the methodology of N-of-1 trials by showing several different study designs. For example, a sequential nature design could be used if the clinician wants to minimize the amount of time that a patient is on one therapy because evidence is building that another therapy is more beneficial. Trial designs can also be optimized to minimize the amount of time a patient is on a less beneficial therapy but still allow enough data to be collected to make objective claims about the efficacy of a particular intervention. For example, if a clinician is contrasting two interventions and wants to optimize the distribution of a finite number of measurements, one way to optimize the design of having the patient be on alternating treatments is to rotate the number of times the patient is on each intervention. In this way, the data that are generated are not influenced by the overt serial correlation between the observations. Collecting data on a single individual will result in strong correlations between the measures, and with an assumption about the strength of the serial correlation, it is possible to optimize these designs to maximize the power to bring out one effect over another.
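The role of serial correlation in an N-of-1 comparison can be sketched as follows. The example assumes a first-order autoregressive, AR(1), correlation between successive measurements and uses the standard large-sample approximation for the effective number of independent observations. The function names, the correlation value, and the readings are hypothetical and were not part of the presentation.

```python
from math import sqrt
from statistics import mean, variance


def effective_n(n, rho):
    """Approximate count of independent observations under AR(1) correlation rho."""
    if not -1 < rho < 1:
        raise ValueError("rho must lie strictly between -1 and 1")
    return n * (1 - rho) / (1 + rho)


def treatment_contrast(obs_a, obs_b, rho):
    """Return (mean difference A - B, approximate standard error)."""
    diff = mean(obs_a) - mean(obs_b)
    se = sqrt(
        variance(obs_a) / effective_n(len(obs_a), rho)
        + variance(obs_b) / effective_n(len(obs_b), rho)
    )
    return diff, se


# Hypothetical readings pooled over alternating periods (A, B, A, B).
on_a = [132.0, 130.0, 129.0, 131.0, 128.0, 130.0]
on_b = [124.0, 125.0, 123.0, 126.0, 124.0, 122.0]

diff, se_independent = treatment_contrast(on_a, on_b, rho=0.0)
_, se_correlated = treatment_contrast(on_a, on_b, rho=0.5)
```

In this sketch, positive serial correlation shrinks the effective sample size and widens the standard error of the treatment contrast, which is why an assumption about the strength of that correlation feeds into how many observations and how many treatment alternations a design needs.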

Explaining further, Schork said that it is also possible to aggregate N-of-1 studies into clusters and identify trends and factors in common (e.g., a genotype or a specific environmental exposure) to predict the best intervention for future patients.

Personal Versus Population Thresholds

Schork continued with another aspect of N-of-1 trials, which illustrates personal versus population thresholds. He used the example of a clinician with cholesterol measures on 25 individuals. Some of these individuals may have a cholesterol number that is greater than the population threshold (e.g., 200 mg/dL).

If one of those individuals has fairly low cholesterol values, a personal average for that patient could be established, and perhaps error bounds around it as well. Over time, it could be observed that the patient’s cholesterol level has deviated significantly from the initial values but is still below the population threshold. The increasing trend, however, might indicate a health status change, despite the fact that the cholesterol level is below the population threshold. This idea has been used to illustrate the identification of ovarian cancer about 1 year before population thresholds would have indicated the disease (Speake et al., 2017).
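The contrast between a personal and a population threshold can be sketched in a few lines. The example assumes a simple rule that flags a new reading when it exceeds the patient's own baseline mean by more than a chosen number of baseline standard deviations. The rule, the multiplier, and the readings are hypothetical illustrations rather than a method described in the presentation.

```python
from statistics import mean, stdev

POPULATION_THRESHOLD = 200.0  # population cutoff used in the example above


def exceeds_personal_threshold(baseline, new_value, k=3.0):
    """True if new_value is more than k baseline SDs above the baseline mean."""
    return new_value > mean(baseline) + k * stdev(baseline)


# Hypothetical cholesterol readings for one patient (illustrative values only).
baseline = [150.0, 148.0, 153.0, 151.0, 149.0]
latest = 175.0

below_population = latest < POPULATION_THRESHOLD
flagged_personally = exceeds_personal_threshold(baseline, latest)
```

Here the latest reading stays under the population cutoff yet sits far outside the patient's own historical range, so only the personal rule would prompt a closer look.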

Identifying, Verifying, and Vetting Nutrition Strategies for Individuals

The use of vetting algorithms to match nutritional interventions to patient profiles is also gaining ground, Schork said. This approach uses an entire battery of omics profiling to determine what kind of perturbations might be present in any one individual’s profile; the results can be used to identify an optimal diet or supplementation intervention.

In this approach, it is important to identify a strategy for matching the profiles to the nutritional interventions, recognizing that what is actually being tested is an algorithm, not an individual intervention. To illustrate, Schork noted that individual cancer patients’ tumors exhibit perturbations and certain drugs can uniquely counteract the pathophysiology induced by those specific tumor mutations. To identify matches between tumor perturbations that might be unique to a small set of the population and specific drugs, cancer clinicians would have to run many trials with small numbers of patients, and that would be inefficient. The cancer community has developed an alternative strategy, called “bucket” or “basket” or “umbrella” trials, in which a treatment basket is given to an individual based on the perturbations profile of the tumor. It is not the individual drugs, but the perturbations that are being tested, a crucial difference in the approach to vetting interventions.

Schork then reviewed the following questions raised by this approach:

- What happens if the trial fails? For example, in a recent basket trial, patients experienced no better outcomes than people who received the standard of care. Although these results generated considerable concern about the approach of personalized oncology, a key message was that the community must look critically at the basis for the matching algorithm before discarding the whole field of personalized oncology or personalized nutrition.

- How should insights that arise external to the trial be accommodated? For example, what happens if the day after a basket trial begins, Nature publishes a paper saying people with the HER2 perturbation should get a different drug than the one prescribed in the trial? The solution may be to allow the trial rules to be adapted over time, even though that solution would be difficult for the biostatisticians analyzing the data.

Other issues to consider relate to whether the perturbations in the tumor can be assessed properly, the nature of the algorithm itself, and the role of the Tumor Board, which decides whether oncology drugs that were recommended by the algorithm are likely to benefit the patient. Similar issues could occur if algorithms were used to guide nutrition interventions. Schork concluded his presentation by noting that these issues raise the question of whether all the algorithms that are being developed by different groups should be vetted in a regulated environment.

EXAMPLES OF A COMPLEX DISEASE: INFLAMMATORY BOWEL DISEASE5

In wet bench research that uses animal model systems, animals have defined environmental conditions and genetics and a monotonous diet. Results from studies with these animals have a high signal-to-noise ratio, and it is possible to demonstrate proof-of-concept cause-and-effect relationships with a modest-sized cohort. In contrast, Wu explained, humans are free-living in a highly variable environment, with genetic diversity and a variable diet. The resulting inter-subject variability produces a very low signal-to-noise ratio in many of the topics studied, making research in humans immensely complicated.

Given this reality, Wu said, an increasingly desired approach is to embrace the complexity of human biology through the use of high dimensional analytic technologies together with advanced computational biostatistical platforms. When doing human research, the goal is to match a physiological response with many different biospecimens, then run the data through very high throughput analytic technologies. Enormous databases are then generated that can be analyzed using the best medical approaches, bioinformatics, biostatistics, and computational biology to understand patterns and to identify and characterize biological mechanisms that drive the human response to an intervention.

The Example of Inflammatory Bowel Disease

The prevalence of IBD is high in the United States and worldwide. The genetic contribution to the development of Crohn’s disease is at most 30 to 40 percent, and about 10 percent for ulcerative colitis. Environmental factors, including industrialization, are the largest contributor to the pathogenesis of IBD. Consideration, therefore, could be given to engineering the environment of the gut through diet, through the microbiome, or perhaps both to improve the efficacy of current therapeutic strategies focused on host immunosuppression. The gut microbiota is deeply dysbiotic in IBD, as the microbial community is responding to an environmental stress (i.e., inflammation of the intestinal tract). It is thought that this dysbiosis plays a role in perpetuating IBD. As a result, strategies have been developed to change the environment of the gut to alter dysbiosis in ways that favor the health of the host.

___________________

5 This section summarizes information presented by Gary Wu.

Dietary Intervention in Inflammatory Bowel Disease

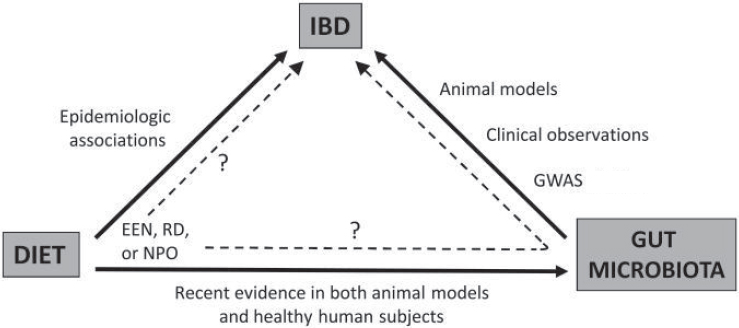

Current treatments for Crohn’s disease, such as metronidazole and ciprofloxacin, and fecal microbiota transplantation, do not work as robustly and as consistently as desired. This provides an opportunity to use the best technologies in human subject research to examine how altering the environment of the gut, perhaps through dietary interventions, might be beneficial in treating IBD. The paradigm being used to support this notion, said Wu, is that diet is epidemiologically associated with IBD, gut microbiota unquestionably play a role in the pathogenesis of IBD, and diet can shape the composition of the microbiota, which leads to the production of many different types of metabolites.

With this paradigm in mind, researchers have examined whether understanding the biological principles by which a specific diet is beneficial would inform efforts to design better diets for patients with IBD (see Figure 5-5). For example, defined formula diets for the treatment of Crohn’s disease are most effective when they are consumed entirely in place of a normal diet (i.e., exclusive enteral nutrition [EEN]). This raises the question, explained Wu, of whether EEN provides something good, or excludes something bad, for patients with IBD that is different from the regular whole food diet. To understand EEN diets and their impact on IBD, investigators can study the microbiome by using DNA sequencing technologies or by identifying the metabolites that are made by these microbes because they may have an impact on the host.

Wu then described the Food and Resulting Microbial Metabolites (FARMM) study (part of the Crohn’s & Colitis Foundation’s Microbiome Initiative6), which explored the relationship between dietary composition, gut microbiome composition, and the metabolic products that are ultimately present in the gut lumen and in the plasma of humans. Healthy vegans and omnivores were randomized to consume an EEN diet. In the first period of the study, the researchers looked at the impact of these diets on the gut microbiome composition. Halfway through the intervention, the subjects’ guts were purged, reducing bacterial load very substantially.

___________________

6 See http://www.crohnscolitisfoundation.org/science-and-professionals/research/current-research-studies/microbiome-initiative.html (accessed June 14, 2018).

NOTE: EEN = exclusive enteral nutrition; GWAS = genome-wide association study; IBD = inflammatory bowel disease; NPO = nil per os (i.e., complete bowel rest); RD = restriction diet.

SOURCES: As presented by Gary Wu, April 3, 2018; Albenberg et al., 2012. Reprinted with permission from Wolters Kluwer: Albenberg, L. G., J. D. Lewis, and G. D. Wu. 2012. Food and the gut microbiota in inflammatory bowel diseases: A critical connection. Current Opinion in Gastroenterology 28(4):314–320. https://journals.lww.com/co-gastroenterology/pages/default.aspx (accessed June 14, 2018).

Comparing the two time points would reveal differences in metabolites produced and consumed by microbes. As the microbiota reconstituted itself, the researchers could then correlate increases or decreases in metabolites with the occurrence of microbes and diet to identify key drivers.

Throughout the study, the team completed shotgun metagenomic sequencing of all the different stool samples. The team also performed fecal and plasma metabolomics and collected rectal biopsies to isolate lamina propria mononuclear cells for analysis by a mass cytometry platform, to understand how the mucosal immune system was responding at each of the study intervals.

Wu then presented results showing numbers of different types of microbes in the gut environment based on the three different diets. Judged by the ability of the gut microbiome to reconstitute itself after flushing of the gut, the vegan microbiome was more resilient to environmental stress than the omnivore microbiome. The gut microbiota of study participants on an EEN diet was the least resilient. Wu also presented data using a novel statistical approach suggesting that EEN excludes some metabolites that are in whole food diets (some that plausibly could be bad for patients with IBD) and includes other metabolites that may not be present in whole food diets (some that plausibly could be beneficial for patients with IBD).

In a second study of diet, the plasma metabolome, and the microbiota of vegans and omnivores, a heat map of different nutrients in diets showed, not surprisingly, substantial differences between the vegans and the omnivores. The plasma metabolome showed similar differences. A random forest algorithm, a machine learning tool that helps identify features that determine a dichotomous outcome, was able to predict with 94 percent accuracy whether an individual was a vegan or an omnivore, simply by looking at 30 small molecules in blood, only 5 of which are generated by the gut microbiota. These results suggest that the main impact of diet on the human plasma metabolome is a direct effect on the host, with a smaller contribution working through the gut microbiota.
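As a rough illustration of the classification approach described above, the sketch below trains a stripped-down random-forest-style ensemble on synthetic data. It is not the study's analysis: the data, the function names (make_data, fit_stump, fit_forest, predict), and all parameter values are hypothetical, and each "tree" is a single-split stump rather than a full decision tree, which keeps the example short while retaining the bootstrap sampling, random feature subsets, and majority vote that characterize a random forest.

```python
import random
from collections import Counter

def make_data(n, rng, n_features=6, informative=(0, 1), shift=3.0):
    """Synthetic 'metabolite' table (hypothetical): label 1 shifts the informative features."""
    X, y = [], []
    for _ in range(n):
        label = rng.randint(0, 1)
        X.append([rng.gauss(shift * label if j in informative else 0.0, 1.0)
                  for j in range(n_features)])
        y.append(label)
    return X, y

def fit_stump(X, y, features):
    """Find the single-threshold rule (feature, threshold, polarity) with the fewest training errors."""
    best = None
    for j in features:
        values = sorted(set(row[j] for row in X))
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2.0
            correct = sum((row[j] > t) == bool(label) for row, label in zip(X, y))
            # polarity 1 predicts label 1 above the threshold; polarity 0 predicts it below
            for polarity, score in ((1, correct), (0, len(y) - correct)):
                if best is None or score > best[0]:
                    best = (score, j, t, polarity)
    return best[1:]

def fit_forest(X, y, n_trees, n_sub, rng):
    """Fit each stump on a bootstrap sample using a random subset of features."""
    forest, n = [], len(X)
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]
        feats = rng.sample(range(len(X[0])), n_sub)
        forest.append(fit_stump([X[i] for i in idx], [y[i] for i in idx], feats))
    return forest

def predict(forest, row):
    """Majority vote across all stumps."""
    votes = sum(int((row[j] > t) == bool(p)) for j, t, p in forest)
    return int(votes * 2 > len(forest))

rng = random.Random(0)
X_train, y_train = make_data(120, rng)
X_test, y_test = make_data(200, rng)
forest = fit_forest(X_train, y_train, n_trees=41, n_sub=3, rng=rng)

accuracy = sum(predict(forest, r) == l for r, l in zip(X_test, y_test)) / len(y_test)
usage = Counter(j for j, _, _ in forest)  # how often each feature was selected
top_features = sorted(j for j, _ in usage.most_common(2))
print(f"held-out accuracy: {accuracy:.2f}")
print(f"most frequently selected features: {top_features}")
```

Counting how often each feature is selected is a crude stand-in for the feature-importance measures that full random forest implementations report; in this synthetic setup it should single out the two features that were constructed to differ between groups.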

EXAMPLES OF A COMPLEX DISEASE: CANCER7

The World Cancer Research Fund and the American Institute for Cancer Research (WCRF-AICR) reports are the most authoritative global reports on food, nutrition, nutrients, weight control, and physical activity.

Clinton stated that WCRF-AICR reports were published in 1997 and 2007 (WCRF-AICR, 1997, 2007). Since then, WCRF-AICR has conducted a continuous update program to review the data for three or four cancers per year. All of these will be compiled into a new report that will be published in 2018. An international panel of 20 to 25 leaders in the field and more than 250 reviewers and contributors around the world participated in its development, which was based on systematic reviews and meta-analyses. Clinton summarized the WCRF-AICR nutrition-related recommendations as the following:

- Body fatness: Be as lean as possible within the normal range of body weight.

- Foods and drinks that promote weight gain: Limit consumption of energy-dense foods. Avoid sugary drinks.

- Plant foods: Eat mostly foods of plant origin.

- Animal foods: Limit intake of red meat and avoid processed meat.

- Alcoholic drinks: Limit alcoholic drinks.

- Preservation, processing, preparation: Limit consumption of salt. Avoid moldy grains or legumes.

- Dietary supplements: Aim to meet nutritional needs through diet alone.

___________________

7 This section summarizes information presented by Steven Clinton.

The WCRF-AICR reports make no specific nutrient requirement recommendations, such as DRIs related to cancer outcomes, because the data have not reached a sufficient level of scientific rigor to make such recommendations. WCRF-AICR categorizes the strength of the evidence behind its recommendations as convincing, probable, limited but suggestive, or substantial effect unlikely.

The Dietary Guidelines for Americans policy document, which was based in large part on an expert committee’s technical report, also makes no specific nutrient requirement recommendations regarding cancer. Rather, the focus of the 2015–2020 Dietary Guidelines for Americans was dietary patterns. Overall, the dietary patterns recommended in the Dietary Guidelines for Americans are consistent with the WCRF-AICR recommendations.

Effect of Nutrients on the Cancer Continuum

Clinton then addressed how different phases of the cancer continuum have implications for nutrition and nutrient requirements. For example, all of the factors that enhance cancer risk will require unique and specific nutritional interventions; cancer treatment has numerous nutrition-related impacts; and the end-of-life phase has significant nutrition implications, including malnutrition related to pain management, cachexia, and sarcopenia. Nutrition is also a critical issue for the millions of cancer survivors, as cancer therapies increase the risk of obesity, metabolic syndrome, and fitness issues. Depending on the therapy received, survivors commonly experience impaired hearing and taste, neuropathies, gastrointestinal dysfunction, cardiopulmonary disease, problems with long-term heart function, and reproductive and renal issues. Clinton stated that nutritional interventions clearly may play a role in many of these processes.

The Complexity of Host Genetics

Clinton then briefly discussed how host genetics affect many aspects of cancer biology within an individual. These include the more than 50 inherited cancer syndromes and cancer susceptibility genes, such as BRCA1 for breast cancer, adenomatous polyposis coli (APC) for polyposis coli, retinoblastoma, and p53 for Li-Fraumeni syndrome. Many of these mutations are of low prevalence and contribute to only a small proportion of the human cancer burden.

In contrast, host susceptibility genes, such as polymorphisms and variations in cytochrome p450s, have high prevalence. The absolute risk associated with any one of these genes is low, but the attributable risk can be much higher. One example is p53, the gene related to Li-Fraumeni syndrome, which can lead to sarcomas, leukemia, and breast, lung, brain, and adrenal cancers.
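The contrast between individual risk and population-level burden drawn above can be illustrated with Levin's formula for the population attributable fraction (PAF), where p is the prevalence of a variant and RR is the relative risk it confers. This is a standard epidemiological expression offered here only as an illustration; it was not part of the presentation, and the prevalence and relative-risk values below are hypothetical.

```latex
\mathrm{PAF} = \frac{p\,(\mathrm{RR} - 1)}{1 + p\,(\mathrm{RR} - 1)}

% Hypothetical common, low-penetrance variant: p = 0.40, RR = 1.5
\mathrm{PAF} = \frac{0.40 \times 0.5}{1 + 0.40 \times 0.5} = \frac{0.20}{1.20} \approx 0.17

% Hypothetical rare, high-penetrance mutation: p = 0.001, RR = 20
\mathrm{PAF} = \frac{0.001 \times 19}{1 + 0.001 \times 19} = \frac{0.019}{1.019} \approx 0.019
```

Under these assumed values, the common variant with a modest relative risk accounts for a larger share of cases in the population than the rare mutation with a very high relative risk.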

Clinton then explained that simple studies in murine models have demonstrated that nutritional status can alter the biology of inherited cancer syndromes. For example, a 1994 study of caloric restriction and spontaneous tumorigenesis in p53-knockout mice found a fairly strong inhibitory effect of calorie restriction on the development of this disease (Hursting et al., 1994). Translating to a human system, Clinton noted that similar results could easily mean 20 to 30 years of added life for an individual with Li-Fraumeni syndrome. Clinton concluded that dozens of nutrients may affect the cancer process and human studies are desperately needed to define DRIs that may be relevant to inherited cancer syndromes.

The Complexity of Cancer Genetics

Moving on to the genetics of cancer itself, Clinton said that this is where the real complexity lies. This is illustrated by the National Institutes of Health’s Cancer Genome Pan-Cancer Atlas (https://cancergenome.nih.gov), a national effort to define the landscape of the mutational signatures across human cancers, through integrating omics across platforms from human samples.

Even though the mutational landscape is large, the array of driver mutations is more limited. These mutations drive certain critical biological processes, such as evading growth suppression, avoiding immune recognition and destruction, tumor-associated inflammation, and angiogenesis. Addressing these “hallmarks of cancer” is key to successful treatment. Cancer evolves with more and different mutations as it goes through the therapeutic and metastatic process, and although driver mutations may be present in the early stages up through diagnosis, treatment may eliminate certain clones and allow others to grow and progress. Achieving cures in such types of malignancy where different metastatic sites with very different mutational patterns exist in a single individual is very difficult.

Special Nutrient Requirements in Cancer

Clinton concluded his presentation with the following comments about DRIs and cancer:

- Developing DRIs for cancer prevention using the public health approach should and will continue.

- The complexity of cancer and the unique aspect of each individual’s experience will drive efforts toward personalized nutrition therapy. New study designs, such as N-of-1 trials and personalized treatment, are very relevant to the food and nutrition aspects of cancer.

- Improved technology permits a more precise definition of nutritional status in each individual in real time, and this will allow for more efficient, effective, and meaningful applications to each person. Much more research is needed to define efficacy and safety of interventions.

- Currently, most cancer patients do not have optimal access to nutrition support services. This requires changes in reimbursement and full integration of the expertise from registered dietitians (RDs) into programs at hospitals. RD researchers are desperately needed to do the science to define future interventions.

- A category of bioactive food components should be formally established to encompass components such as fiber and polyphenols. The term nutrient should be limited to those compounds that have a defined deficiency syndrome that is immediately reversed by replacing that component. Efforts should be pursued to define optimal intake of these bioactive food components.

MODERATED PANEL DISCUSSION AND Q&A

As the presenters gathered for the panel discussion, Kemper reflected on the theme of tension, which emerged across the presentations. This included the tension of the single nutrient versus the dietary pattern, the tension of genes versus the environment, the tension between understanding things at the population level versus personalized medicine, the tension of traditional RCTs versus N-of-1 study designs, and the tension between looking at final health outcomes versus intermediate biomarkers.

Patient Perspectives on Developing Nutrient Recommendations

In response to a question about the patient perspective in establishing nutrient requirements and the feasibility of individuals changing their diet, MacFarlane said that a regulatory body’s responsibility would be to have good standards based on good evidence of what disease responds to what particular nutrient intake. Other groups with other responsibilities, such as health care providers, the food industry, and the insurance industry, would also need to be involved.

Dietary Recommendations When Good Biomarkers Are Not Available

A discussion was initiated on the question of how to pursue research when good biomarkers are scarce for some diseases, such as irritable bowel syndrome or even osteoarthritis. Wu responded by suggesting that biomarkers might not be available because researchers have not looked hard enough. For example, a somewhat controversial dietary intervention for the treatment of IBD is a low fermentable oligosaccharides, disaccharides, monosaccharides, and polyols (FODMAP) diet. If one were to ask what happens to the metabolome on the low FODMAP diet, and the diet is matched to bacterial taxa, or one asks what happens to the metabolome of plasma and the feces, it might be possible to identify biomarkers that predict somebody’s response to the low FODMAP diet.

Schork added that to discover such biomarkers, better designs to get at causal relationships between, for example, variables that are measured routinely on individuals and outcomes of relevance might be needed. For example, very large observational studies are valuable, but this design is not able to separate the findings that might be secondary to the intervention being used.

Wu then added that researchers have increasingly begun to conduct association studies using omic technologies. Even though the reasons for the association may not be clear, these studies are enormously valuable because if a wet bench researcher can phenocopy the association in a culture system and/or in an animal model, it might help show more about cause-and-effect relationships that drive the biological process in human biology.

What Level of Evidence Is Necessary?

Wu then followed up this comment with a question for MacFarlane about the necessary level of evidence. If a study shows a very strong association between certain biomarkers and a certain outcome, would some type of cause-and-effect evidence still be necessary? If a laboratory were able to provide very strong evidence of a biological process in an animal model, must it also be proved in humans, or is the animal evidence a sufficient level of evidence? MacFarlane said that validation in humans still must be done. Animal models are critical for looking into the mechanisms underlying the associations being seen. Without validation in humans, however, the modeling would include a level of uncertainty that might not be acceptable.

Stover added to the discussion by saying that researchers would want to make sure, obviously, that a cause-and-effect relationship exists. He noted that the prevention of neural tube defects with folic acid comes to mind as an example. The reason why folic acid prevents neural tube defects is still unknown, but early clinical observations showed that at a certain level of exposure, 70 percent of these defects can be prevented. That level of exposure then became the standard of care. The World Health Organization (WHO) had to respond to countries that wanted to know how much folic acid should be put in the food supply. WHO used a big data approach, drawing on observational data and large datasets to create a computed dose–response curve. The fortification amount was computed entirely from observational data and then validated in very small clinical studies. However, no biomarkers that relate the exposure to the disease exist. In the ensuing discussion, MacFarlane responded that a causal effect was still demonstrated in an intervention trial first. A workshop participant added that decisions on folic acid fortification made by the FDA Folic Acid Subcommittee of the Food Advisory Committee were challenging because of the variability in dose response and level of toxicity, even though it was clear that a dose response existed. Clinton joined the conversation by pointing out that in some situations the rules can be bent; for example, public health recommendations around tobacco use in the 1960s were never based on an RCT of cigarette smoking and lung cancer because the evidence from observational studies was so strong. A comment was made that in contrast to the case of tobacco, in nutritional studies, the relative risks are almost invariably less than two, and given the noise of food intake assessments, variability in composition of diets, and other unaccounted-for variables in the environment, it is difficult to know when the signals are greater than the noise.

Bridging the Gap Between Evidence and Clinical Practice

A question was brought up related to the reluctance of some gastroenterologists to accept the contribution of diet to IBD when substantial evidence is accumulating in actual clinical practice. Wu added that the most frequent questions gastroenterologists hear from patients with IBD are related to diet. Although evidence exists, studies are often anecdotal or not well controlled or rigorous. Wu said that he thinks the defined formula diets probably reflect the best currently available evidence. Gastroenterologists and scientists have finally accepted the fact that diet does have an impact and if more funding becomes available, the field will be able to make progress and provide evidence-based recommendations about diet. He added that the only current evidence-based recommendation is that patients with Crohn’s disease who may have intestinal strictures should avoid fibrous food products because they might lead to intestinal obstruction. Wu responded to a question related to noncompliance in following diets and implications for the specificity and sensitivity of the measurements with these metabolomic differences. Wu said that he attempts to control as many variables as possible so as to reduce the noise level and still see associations with a biomarker, which is then used in outpatient studies in a much larger group. A useful study to validate results would be to take various types of biomarkers that are seen in an inpatient setting, track them in an outpatient setting, and then ask several hundred individuals to complete a food frequency questionnaire or a dietary recall. This approach would allow researchers to assess methods that are currently considered to be the best way to understand what people are actually eating.

RCTs and N-of-1 Trials in an Era of Big Data

A comment was made related to increasing the power of N-of-1 studies by aggregating and using the results toward personalized nutrition categories over time. Schork replied that the NIH-funded Clinical and Translational Science Awards Program is pursuing efforts in this regard, for example, in having the nodes in the network interact to collect and share data. For many conditions, he said, the data to make good clinical decisions are insufficient, but an RCT in some cases may not be the best design to use to determine a better clinical course. One idea is to collect records on patients that could be predictive of a particular outcome and publish the results after a peer review process. On this basis, trends could be identified to help make decisions about optimal treatments. In the current era of big data, this approach would be feasible. A concern with the N-of-1 studies would be that an investigator justifies something post hoc. The idea of using algorithms has been challenged on the grounds that the consistency of many studies with a particular algorithm does not necessarily reveal causality. Schork agreed with this point, but also commented that RCTs rarely collect enough data on any one participant to say unequivocally whether that person responded to the treatment or not. The N-of-1 study designs are intended to bring out the response phenotype in ways that are more compelling than is possible in standard RCTs. In this era of personalized medicine and nutrition, the objective, Schork said, is to identify the responders and non-responders. Marriott added the final comment of the discussion session by noting that many states are now collecting very large datasets of individual data across hospitals, but protection of the data is essential for the public to be confident that their own personalized information will not be jeopardized.
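The idea of aggregating N-of-1 results raised at the start of this discussion can be sketched with simple fixed-effect, inverse-variance pooling. This is one possible approach under stated assumptions, not a method described at the workshop: the symptom scores, participant labels, and function names (individual_effect, pooled_effect) are hypothetical, and the sketch treats repeated periods as independent, ignoring carryover, autocorrelation, and between-person heterogeneity.

```python
from math import sqrt
from statistics import mean, stdev

# Hypothetical symptom scores (lower is better) for three participants,
# each measured over repeated periods on a test diet and on a usual diet.
trials = {
    "participant_a": {"test": [3.1, 2.8, 3.4, 2.9], "usual": [4.6, 4.9, 4.2, 4.8]},
    "participant_b": {"test": [5.0, 4.4, 5.3, 4.7], "usual": [5.2, 5.6, 4.9, 5.5]},
    "participant_c": {"test": [2.2, 2.9, 2.5, 2.4], "usual": [3.8, 3.1, 3.6, 3.9]},
}

def individual_effect(test, usual):
    """Mean difference (test minus usual) and its standard error for one participant."""
    diff = mean(test) - mean(usual)
    se = sqrt(stdev(test) ** 2 / len(test) + stdev(usual) ** 2 / len(usual))
    return diff, se

def pooled_effect(effects):
    """Fixed-effect pooling: weight each participant's estimate by 1 / SE^2."""
    weights = [1.0 / se ** 2 for _, se in effects]
    estimate = sum(w * diff for w, (diff, _) in zip(weights, effects)) / sum(weights)
    return estimate, sqrt(1.0 / sum(weights))

effects = {name: individual_effect(d["test"], d["usual"]) for name, d in trials.items()}
pooled_diff, pooled_se = pooled_effect(list(effects.values()))

for name, (diff, se) in effects.items():
    print(f"{name}: difference = {diff:.2f}, standard error = {se:.2f}")
print(f"pooled: difference = {pooled_diff:.2f}, standard error = {pooled_se:.2f}")
```

The pooled standard error is always smaller than any single participant's, which is the sense in which aggregation adds power. The per-participant estimates remain available for separating apparent responders from non-responders, and a random-effects or hierarchical model would be a natural next step when responses are expected to differ across individuals.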

REFERENCES

Albenberg, L. G., J. D. Lewis, and G. D. Wu. 2012. Food and the gut microbiota in inflammatory bowel diseases: A critical connection. Current Opinion in Gastroenterology 28(4):314–320.

Hursting, S. D., S. N. Perkins, and J. M. Phang. 1994. Calorie restriction delays spontaneous tumorigenesis in p53-knockout transgenic mice. Proceedings of the National Academy of Sciences of the United States of America 91(15):7036–7040.

IOM (Institute of Medicine). 2010. Evaluation of biomarkers and surrogate endpoints in chronic disease. Washington, DC: The National Academies Press.

Mei, Z., S. M. Namaste, M. Serdula, P. S. Suchdev, F. Rohner, R. Flores-Ayala, O. Y. Addo, and D. J. Raiten. 2017. Adjusting total body iron for inflammation: Biomarkers Reflecting Inflammation and Nutritional Determinants of Anemia (BRINDA) project. The American Journal of Clinical Nutrition 106(Suppl. 1):383S–389S.

NASEM (National Academies of Sciences, Engineering, and Medicine). 2017. Guiding principles for developing Dietary Reference Intakes based on chronic disease. Washington, DC: The National Academies Press.

Raiten, D. J., S. Namaste, B. Brabin, G. Combs, Jr., M. R. L’Abbe, E. Wasantwisut, and I. Darnton-Hill. 2011. Executive summary—Biomarkers of nutrition for development: Building a consensus. The American Journal of Clinical Nutrition 94(2):633S–650S.

Speake, C., E. Whalen, V. H. Gersuk, D. Chaussabel, J. M. Odegard, and C. J. Greenbaum. 2017. Longitudinal monitoring of gene expression in ultra-low-volume blood samples self-collected at home. Clinical and Experimental Immunology 188(2):226–233.

WCRF-AICR (World Cancer Research Fund and American Institute for Cancer Research). 1997. Food, nutrition and the prevention of cancer: A global perspective. Washington, DC: American Institute for Cancer Research.

WCRF-AICR. 2007. Food, nutrition, physical activity, and the prevention of cancer: A global perspective. Washington, DC: American Institute for Cancer Research.
