5

Quantitative Dose-Response Assessment and Derivation of Occupational Exposure Levels

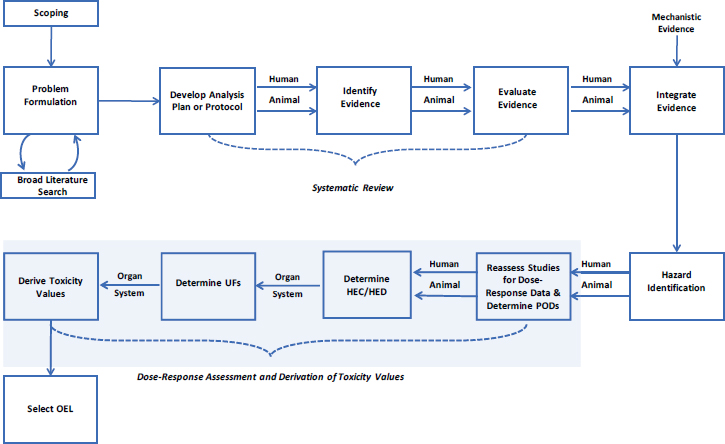

This chapter presents the committee’s evaluation of the U.S. Department of Defense’s (DOD’s) process for quantitative dose-response assessments and derivation of an occupational exposure level (OEL) for inhalation exposures to trichloroethylene (TCE). These elements, highlighted in Figure 5-1, include the selection of studies advanced for dose-response assessment, identification of points of departure (PODs), adjustment of dose(s) for human equivalent inhalation exposures for the workplace, and addressing uncertainty in the derivation of an OEL for each noncancer end point.

SELECTION OF STUDIES FOR DOSE-RESPONSE ASSESSMENT AND DETERMINATION OF POINTS OF DEPARTURE

After hazard identification, a subset of studies is selected for dose-response assessment. The studies must contain adequate quantitative data on dose-response relationships that are either suitable for benchmark dose modeling or provide a no-observed-adverse-effect level (NOAEL) or lowest-observed-adverse-effect level (LOAEL) to establish PODs for adverse effects. Additional considerations for advancing studies might include weighing human versus animal data, choice of animal species, exposure route and duration, accuracy of exposure data, the relevance of exposure timing, reliable outcome measurement, and the precision of results.

DOD’s draft report states that “After scoring all studies, points of departure (PODs) were derived for each critical endpoint from each study” (Sussan et al. 2019, p. 49). The strategy for selecting the POD from among the candidate values was not explicitly discussed, although it appeared that the general strategy was to choose from the lower end of the POD range in each outcome domain. PODs were presented graphically to illustrate the range of candidate PODs, along with each study’s applicability score. The committee recommends that DOD describe its process for selecting PODs in more detail, perhaps with an example. The committee also found the presentation of the applicability scores in this step of the process to be inappropriate, because study evaluation techniques should already have been used to exclude studies that were irrelevant, poorly conducted, or had unsuitable data. Separation of study evaluation from selection of PODs will make the process more transparent and defensible. For example, DOD presented six candidate PODs for immunological end points. The lowest POD, based on increased relative thymus weight reported by Keil et al. (2009), was two to three orders of magnitude lower than the other candidate POD values. This POD was discounted by DOD because it was deemed to be based on a “low value” study and thymus weight was considered a poor predictor of immunotoxicity. Although these might be valid reasons for excluding a study, the exclusion could be viewed as having been influenced by knowledge of the POD value. If the studies had been evaluated first, using criteria to assess study quality and relevance for dose-response assessment, no irrelevant or poorly conducted studies would have been used to identify candidate PODs. Thus, it is important that criteria be established and studies evaluated before determining PODs. This will increase transparency and reduce the possibility of having study selection be influenced, consciously or subconsciously, by the POD value results. One option would be to restrict dose-response assessments to studies included in bodies of evidence rated with moderate to high levels of certainty in the systematic review (NRC 2014). Following this restriction, individual studies within those bodies of evidence could be excluded from dose-response assessment based on risk of bias, study quality, availability of suitable data, or other concerns.

The DOD draft report indicates that dose-response assessments for TCE were based on inhalation studies, and that oral and other routes of exposure were only used if there were insufficient inhalation data. DOD used oral data in the assessment of immunological, reproductive, and developmental effects, and used a well-developed physiologically based pharmacokinetic (PBPK) model for route-to-route extrapolations. By not considering oral data for the other end points, DOD is not taking advantage of the substantial database on TCE, especially because a PBPK model is available. There are clearly uncertainties when using other routes of exposure for setting an inhalational OEL, but they can be lessened with the application of PBPK modeling and Bayesian uncertainty adjustment approaches for studies determined to be of otherwise sufficient quality for dose-response assessments.

Another approach DOD could consider is performing dose-response meta-analyses to derive a composite POD for each end point. A composite POD is desirable, as there can be less uncertainty with the use of multiple data points than there is with a POD from a single study, which is often subject to low statistical power and possibly unknown errors. Sensitivity analyses could be performed to assess the impact of including or excluding outlier data.
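As a rough illustration of the composite-POD idea and the accompanying sensitivity analysis, the sketch below pools study-level PODs on the log scale with inverse-variance weights and then recomputes the result with each study left out. This is a deliberately simplified stand-in: a full dose-response meta-analysis would pool the underlying dose-response data rather than the PODs themselves. The function names, POD values, and standard errors are all hypothetical and are not taken from the DOD report.

```python
import math

def pooled_pod(pods, log_sds):
    """Inverse-variance weighted mean of log(POD).

    pods    -- study-level PODs in common units (hypothetical inputs)
    log_sds -- standard error of each log(POD) (hypothetical inputs)
    Returns (pooled POD, standard error of the pooled log(POD)).
    """
    weights = [1.0 / sd ** 2 for sd in log_sds]
    total = sum(weights)
    log_mean = sum(w * math.log(p) for w, p in zip(weights, pods)) / total
    return math.exp(log_mean), math.sqrt(1.0 / total)

def leave_one_out(pods, log_sds):
    """Pooled POD recomputed with each study dropped in turn."""
    results = []
    for i in range(len(pods)):
        kept_pods = pods[:i] + pods[i + 1:]
        kept_sds = log_sds[:i] + log_sds[i + 1:]
        results.append(pooled_pod(kept_pods, kept_sds)[0])
    return results

# Hypothetical PODs (ppm); the last value plays the role of an outlier.
pods = [12.0, 9.0, 15.0, 0.05]
log_sds = [0.4, 0.5, 0.6, 0.9]
composite, se_log = pooled_pod(pods, log_sds)
sensitivity = leave_one_out(pods, log_sds)
```

Comparing `composite` with the entries of `sensitivity` shows how strongly a single low outlier pulls the pooled value, which is the kind of check the sensitivity analysis is meant to provide.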

DOSE-RESPONSE ASSESSMENT

DOD used standard approaches for dose-response assessment. Where the study data were suitable, benchmark dose (BMD) modeling using the U.S. Environmental Protection Agency’s (EPA’s) Benchmark Dose Software (BMDS Version 2.7) was conducted. The benchmark responses (BMRs) modeled were typically one standard deviation for continuous outcomes and 10% response for dichotomous outcomes, although in some cases different BMRs were used for “frank” health effects (e.g., developmental abnormality that impacts survival). The POD was taken as the 95% lower confidence limit on the BMD (the BMDL). For datasets where a BMDL could not be properly derived, the POD was identified as the study-reported NOAEL or LOAEL. DOD followed modeling practices established by Haber et al. (2018), and characteristics of the final model(s) used for determination of each POD are noted in Appendix E of the DOD draft report.
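To make the two typical BMR definitions concrete, the sketch below computes a BMD for a 10% extra risk under a quantal-linear dichotomous model and for a one-standard-deviation shift under a linear continuous model. It is not BMDS and does not reproduce DOD's fits: the model forms are chosen only because they have closed-form solutions, and the slope, background, and standard deviation values are hypothetical. It yields the BMD only; the BMDL additionally requires a lower confidence limit from the fitting software.

```python
import math

def extra_risk(dose, background, slope):
    """Extra risk over background for a quantal-linear model.

    P(d) = background + (1 - background) * (1 - exp(-slope * d))
    Extra risk = (P(d) - P(0)) / (1 - P(0)) = 1 - exp(-slope * d)
    """
    p0 = background
    pd = background + (1.0 - background) * (1.0 - math.exp(-slope * dose))
    return (pd - p0) / (1.0 - p0)

def bmd_quantal_linear(bmr, slope):
    """Dose at which extra risk equals the BMR (closed form for this model)."""
    return -math.log(1.0 - bmr) / slope

def bmd_continuous_linear(control_sd, slope, n_sd=1.0):
    """Dose at which a linear mean response shifts by n_sd control SDs."""
    return n_sd * control_sd / abs(slope)

# Hypothetical slope (per ppm) for a dichotomous end point, BMR = 10% extra risk.
bmd10 = bmd_quantal_linear(bmr=0.10, slope=0.01)

# Hypothetical continuous end point: control SD of 2.0 units, slope of -0.5 units per ppm.
bmd_1sd = bmd_continuous_linear(control_sd=2.0, slope=-0.5)
```

Plugging `bmd10` back into `extra_risk` returns the BMR regardless of the background rate, which is why extra risk is a convenient definition when control incidence differs across studies.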

The Haber et al. (2018) paper describes various practices that sometimes differ from EPA with regard to the choice of BMR, model restriction, model selection, and model averaging. DOD’s draft report was not always clear in stating which practice was followed. The table in Appendix E of the DOD report listing PODs and model descriptions indicates that various models were “restricted” and there were studies where the POD was based on an “average.” However, details are missing from this shorthand notation, such as the typical restrictions employed and the approach for obtaining an average. More description of the general BMD modeling approaches and exceptions taken is warranted. It should be clear, for example, how the POD was derived if multiple BMD models fit one set of data adequately. In this situation, the POD may have been selected from the model with the best fit (using a metric like the Akaike information criterion) or from the model with the lowest BMDL. Another possibility is BMD model averaging, which weighs multiple models to develop a unified model (Haber et al. 2018). In addition, continuous data for some outcomes were dichotomized to enable model fitting (e.g., liver lymphocyte infiltration from Griffin et al. [2000]), and the approach to dichotomization should be explicitly stated. These choices have implications for the POD derived from any one study, as well as for the range of PODs identified across studies.
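The three options described above can lead to different PODs from the same data, as the following sketch illustrates. The fit results are hypothetical, and the weighting shown (Akaike weights applied to each model's BMD) is a simplification used only for illustration: model averaging as usually implemented averages the fitted dose-response curves and derives the BMDL from the averaged model, and the DOD report does not state which averaging method was used.

```python
import math
from dataclasses import dataclass

@dataclass
class Fit:
    model: str   # model name
    aic: float   # Akaike information criterion (lower is better)
    bmd: float   # benchmark dose
    bmdl: float  # lower confidence limit on the BMD

def best_by_aic(fits):
    """Option 1: take the POD from the best-fitting model."""
    return min(fits, key=lambda f: f.aic)

def lowest_bmdl(fits):
    """Option 2: take the most health-protective (lowest) BMDL."""
    return min(fits, key=lambda f: f.bmdl)

def akaike_weights(fits):
    """Option 3 (ingredient): relative weight of each model from AIC differences."""
    best = min(f.aic for f in fits)
    raw = [math.exp(-0.5 * (f.aic - best)) for f in fits]
    total = sum(raw)
    return [r / total for r in raw]

# Hypothetical fits of three adequately fitting models to one data set.
fits = [
    Fit("log-logistic", aic=101.2, bmd=14.0, bmdl=9.1),
    Fit("Weibull", aic=101.9, bmd=12.5, bmdl=7.4),
    Fit("gamma", aic=104.8, bmd=16.0, bmdl=10.3),
]
weights = akaike_weights(fits)
weighted_bmd = sum(w * f.bmd for w, f in zip(weights, fits))
```

With these inputs the best-AIC rule and the lowest-BMDL rule select different models, which is why the report should state the rule that was applied.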

APPLICATION OF PBPK MODELING FOR HEC DETERMINATIONS

The committee fully supports DOD’s decision to refine and verify the EPA (2011) PBPK model for TCE as the basis for deriving an OEL and views its application as a strength of the overall process that is in keeping with recommendations made in other National Academies reports (NRC 2006, 2011, 2014). The modifications made by DOD to the EPA model following its initial evaluation included translating the PBPK model format specific to MCSim to run in the commercial code acslX (The Aegis Technologies Group, Huntsville, Alabama). Adding equations to verify mass and flow balance and resolving prior instabilities during certain Monte Carlo simulations were also appropriate and well-justified (Covington et al. 2017, 2019). Furthermore, the extensive verification against experimental data, evaluating consistencies with EPA (2011) results, and sensitivity analyses are also considered critical components to model evaluation for regulatory purposes, including DOD’s OEL determinations. Determining human equivalent concentrations (HECs) for each POD assuming an 8 hour per day, 5 day per week exposure regimen for 13 weeks (to ensure week-to-week stability in internal dose metric [periodicity] was achieved) was considered appropriate for a TCE OEL. In addition, DOD should be commended for providing detailed appendices to the Covington et al. (2019) report that should enable users and evaluators to replicate results in the future.

The committee offers a few suggestions that could provide additional support and ultimately improve confidence in several of the potential internal dose-metrics and HEC derivations. Specifically, the PBPK model does not have a component designed for pregnant or lactating animals, which is a potential limitation for internal dose metric determinations for pregnant or nursing mothers exposed to TCE in the workplace. PBPK models have been developed for pregnant rats, lactating rats, and nursing pups (e.g., Fisher et al. 1989, 1990). DOD should improve transparency in its choice of the PBPK model by discussing the reasons for, and potential impact of, not accounting for anatomic, physiologic, and metabolic changes that vary between species during pregnancy and nursing of infants. Given the general lack of significant parameter impacts (aside from ventilation and cardiac output rates for inhalation exposures) following sensitivity analyses and other toxicity end points driving the OEL, the committee does not expect major changes to the ultimate outcomes of OEL determinations. Regardless, additional discussion or evaluation of the potential impact of model changes for gestational or lactational exposures could improve confidence in the assessment for this potentially sensitive population.

With regard to choices in internal dose metrics used for each POD (see Tables 8-10 of Sussan et al. 2019), it would be useful for DOD to briefly summarize the key experimental toxicokinetic (dosimetry) data that support the pathways that are directly associated with each internal dose-metric calculation as part of the mechanistic arguments currently presented (i.e., how strong is the experimental evaluation for each predicted dose metric for each POD). Additional explanation is also needed for using different internal dose metrics across studies for common end points (e.g., use of either total TCE metabolized by liver versus oxidative metabolism versus area under the curve for TCE in liver for liver toxicity). By including a presentation of the overall behavior of the model across potential exposures, breathing profiles, and species, DOD could also demonstrate conditions where species-specific non-linearities occur in each of the key pathways and potential dose-surrogates, aiding in the assessment of confidence in DOD’s choices of internal dose for HEC and OEL determinations.

Lastly, all PBPK-based derivations of HECs were performed using resting ventilation and associated cardiac output physiological profiles. This may be appropriate for clerical or other office workers (e.g., vapor intrusion within an office building) but for other DOD occupations where ventilation and cardiac output are elevated by more strenuous exertion for extended durations, the resulting HECs may not be sufficiently protective. If such workplace exposure cases are considered relevant to DOD, the committee recommends incorporating exercise (work) physiology and realistic durations from actual job profiles into PBPK simulations for selected end points most likely to drive the OEL. This will allow DOD to take advantage of the PBPK capabilities rather than using estimations that assume all processes involved in estimating internal dose metrics for each POD and HEC are linearly related.

USE OF EXTRAPOLATION TOOLS FOR DERIVATION OF UNCERTAINTY FACTORS

Uncertainty factors (UFs) are used to account for uncertainties associated with calculating toxicity values from the dose adjustment (e.g., HEC). Five UFs are considered to account for uncertainties associated with intrahuman variability, extrapolation of animal data to humans, extrapolation of subchronic exposure data to chronic exposure scenarios, use of a LOAEL instead of a NOAEL, and database deficiencies. Ideally, data are used to determine the value of the UFs; default values of 1, 3, or 10 can be used in the absence of data. NRC (2014) recommended using a Bayesian statistical approach to determine the UF where possible. This approach assumes each UF is defined by a distribution further defined by a central value (adjustment) and deviation from the standard value (variability). A major advantage of the Bayesian approach is the ability to combine data from multiple sources. Simon et al. (2016) proposed an approach that assumes UFs follow a lognormal distribution. For example, the approach was applied to data from Pieters et al. (1998) that calculated the geometric mean (4.5) and geometric standard deviation (1.7) of the LOAEL/NOAEL ratio values from 175 chronic studies to determine the value of the UF for LOAEL to NOAEL extrapolation. The committee concurs with DOD’s use of the Bayesian method to apply UFs to the OEL. The committee also concurs with DOD’s use of the BMDL rather than the method recommended by Simon et al. (2016) of using the BMD with a lognormal distribution-based UF. Although Simon et al. (2016) recommended applying the UFs in a stage-wise fashion, the committee supports DOD’s decision to apply all UFs after derivation of the HEC.
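The lognormal treatment of a UF can be illustrated with the geometric mean (4.5) and geometric standard deviation (1.7) quoted above. The sketch below computes a chosen percentile of that distribution and shows how several lognormal factors combine when they are assumed to be independent. It is a generic calculation, not a reproduction of the Simon et al. (2016) procedure or of DOD's implementation; the function names and the choice of the 95th percentile are assumptions made for illustration.

```python
import math
from statistics import NormalDist

def lognormal_percentile(gm, gsd, p):
    """Percentile p (0-1) of a lognormal with geometric mean gm and geometric SD gsd."""
    z = NormalDist().inv_cdf(p)
    return gm * gsd ** z

def combine_lognormal(factors):
    """Distribution of a product of independent lognormal factors.

    factors -- list of (gm, gsd) pairs
    Log-means add and log-variances add, so the product is again lognormal.
    Returns the (gm, gsd) of the product.
    """
    mu = sum(math.log(gm) for gm, _ in factors)
    var = sum(math.log(gsd) ** 2 for _, gsd in factors)
    return math.exp(mu), math.exp(math.sqrt(var))

# LOAEL-to-NOAEL ratio distribution quoted in the text: GM 4.5, GSD 1.7.
uf_median = lognormal_percentile(4.5, 1.7, 0.50)
uf_95th = lognormal_percentile(4.5, 1.7, 0.95)
```

For these parameters the 95th percentile falls a little above 10, close to the conventional default of 10 for LOAEL-to-NOAEL extrapolation, while the median is the geometric mean of 4.5.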

There is a growing body of literature on how to evaluate and express uncertainty. The committee recommends that DOD keep current with developing methods, particularly the probabilistic framework developed by the World Health Organization (Chiu and Slob 2015; WHO 2017; Chiu et al. 2018), which provides a more comprehensive and rigorous framework for evaluating uncertainties than that of Simon et al. (2016).

CANCER EXPOSURE-RESPONSE ASSESSMENT

The DOD draft report appropriately included studies on the carcinogenicity of TCE in its literature review, under section 9.4 for subacute/chronic target organ toxicities. The DOD draft also acknowledged the evidence for carcinogenicity of TCE, in line with previous reviews (NRC 2006; EPA 2011), and estimated cancer risks to evaluate the protectiveness of the OEL for this end point. Although this comparison is similar to other OEL-setting approaches (e.g., ACGIH), the committee is concerned because the cancer data were not evaluated using the same approach as the noncancer end points; for example, the cancer studies were not assessed for risk of bias (see Chapter 4). Because of this, the quality of the cancer assessment was diminished.

Additional methodological considerations are warranted beyond no-threshold linear models to estimate unit risk, such as re-evaluating the mode of action for each cancer end point to determine whether there is a likely threshold effect and deciding whether to apply UFs or slope adjustment factors for non-threshold effects (NRC 2009a; Deveau et al. 2015; Bevan and Harrison 2017). The committee’s recommendation, consistent with NRC (2009a), is to include all health end points within a unified framework for dose-response assessment, with an emphasis on distinguishing risk assessment models by mode of action rather than cancer versus noncancer.

DOD estimated risks for kidney cancer, and for kidney cancer and non-Hodgkin’s lymphoma (NHL) combined. It selected these types of cancer based on its own review of the literature, as well as prior conclusions of the National Research Council (NRC 2009b), EPA (2011), and the International Agency for Research on Cancer (2014). The evidence for a relationship between TCE and liver cancer was considered inconclusive and/or not relevant; therefore, liver cancer was not assessed. The dose-response relationship for kidney cancer with occupational exposure to TCE was derived using epidemiologic data from Charbotel et al. (2006). The DOD report does not explain the rationale for selecting this study over others. A cancer slope and corresponding confidence limits were estimated from the odds ratios (ORs) and exposure data in the study, using a linear regression model, with weighting by the inverse variance of the OR estimates. DOD acknowledged that the assumption of a no-threshold linear response for kidney cancer might be conservative, given an uncertain mode of action for TCE and kidney cancer. Using the upper confidence limit of the cancer slope, DOD then estimated excess risk of cancer for 1 part per million (ppm) exposure to TCE using a life-table approach, and subsequently applied this analysis to estimate various cancer risks. DOD’s approach to kidney cancer risk assessment for TCE closely follows the methods used by EPA in its Integrated Risk Information System (IRIS) assessment (EPA 2011), and is based on the same data from the Charbotel et al. (2006) study. DOD also estimated the excess risk from TCE exposure for kidney cancer and NHL combined. For this estimation, DOD did not conduct a dose-response assessment for NHL directly; instead it estimated combined risk for the two cancers by using relative risks for each type of cancer from two meta-analyses by Karami et al. (2012, 2013) to estimate the ratio of excess NHL risk relative to that for kidney cancer. This approach of estimating excess risk of one type of cancer relative to another is similar to an approach used by EPA (2011) in its IRIS assessment for TCE, although DOD used different data than EPA for this purpose.
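The slope estimation step can be sketched as an inverse-variance weighted least-squares fit. The version below assumes a linear relative-risk form, OR = 1 + b × exposure, with the intercept fixed at 1; the DOD report as summarized here does not say whether the intercept was fixed, so that choice is an assumption, as are the exposure values, ORs, and variances, which are hypothetical and not the Charbotel et al. (2006) data. The subsequent life-table step is not shown.

```python
import math
from statistics import NormalDist

def weighted_slope(exposures, odds_ratios, variances):
    """Slope b in OR = 1 + b * exposure, weighted by 1 / variance of each OR.

    Returns (slope, standard error), treating the supplied variances as known.
    """
    weights = [1.0 / v for v in variances]
    sxx = sum(w * x * x for w, x in zip(weights, exposures))
    sxy = sum(w * x * (y - 1.0)
              for w, x, y in zip(weights, exposures, odds_ratios))
    slope = sxy / sxx
    se = math.sqrt(1.0 / sxx)
    return slope, se

def upper_limit(slope, se, level=0.95):
    """One-sided upper confidence limit on the slope (normal approximation)."""
    return slope + NormalDist().inv_cdf(level) * se

# Hypothetical cumulative exposure categories (ppm-years), ORs, and OR variances.
exposures = [50.0, 150.0, 400.0]
odds_ratios = [1.2, 1.5, 2.4]
variances = [0.10, 0.20, 0.45]
slope, se = weighted_slope(exposures, odds_ratios, variances)
slope_ucl = upper_limit(slope, se)
```

Using `slope_ucl` rather than `slope` in the downstream risk calculation is what makes the resulting unit risk an upper-bound estimate.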

DOD did not work with a pre-defined “acceptable” cancer risk level for the OEL, although the report notes the wide range of cancer risk at OELs derived by standard-setting bodies such as the Occupational Safety and Health Administration and the American Conference of Governmental Industrial Hygienists, spanning from 1 in 100 to 1 in 100,000 or lower risk (based on DOD’s observations but also discussed in the literature [Deveau et al. 2015; Bevan and Harrison 2017]). The DOD draft report presents the OELs associated with risks of 1 in 100, 1 in 1,000, and 1 in 10,000 for kidney cancer, and for kidney cancer and NHL combined, based on 30 years or 45 years of occupational exposure. A 1 in 1,000 risk for kidney cancer was estimated to occur at exactly the suggested OEL of 0.9 ppm, with 45 years exposure (see Table 18, Sussan et al. 2019). The DOD report discusses several reasons why the estimated risks might be overestimated, and following this discussion, the report concludes, “we expect our OEL would be protective of cancer as well as noncancer effects. Even with the assumption that cancer unit risks have no threshold, these ranges reflect the varying levels of risk that may be deemed acceptable” (Sussan et al. 2019, p. 89).

In addition to considering that cancer risk might be overestimated, DOD should also consider implications of continuous workplace exposure if the predicted risks are accurate, consistent with its stated goal of setting an OEL that is adequately protective across all health end point domains. Furthermore, it would be prudent to balance the discussion by considering the possibility that the cancer risk was underestimated. For example, DOD estimated the 1 in 1,000 excess risk for kidney cancer at the proposed OEL using background cancer rates from 2005-2006, similar to the time frame of the Charbotel et al. (2006) study. DOD acknowledges that these background rates are lower than current/recent rates in the United States, and this could underestimate risk in the intended setting. This 1 in 1,000 cancer risk considers kidney cancer only and does not include possible increases in other types of cancer, such as NHL. Although the total risk for kidney cancer and NHL combined is not shown at the proposed OEL of 0.9 ppm, a risk of 1 in 1,000 was estimated for the two cancers with 45 years of exposure at an occupational exposure level of 0.25 ppm, a level less than one-third of the proposed OEL. To better enable evaluation of the proposed OEL, the committee recommends a straightforward presentation of excess cancer risks at the proposed OEL (for each type of cancer, for each assumed number of years of exposure), in addition to the current presentation of occupational exposure levels associated with various benchmark cancer risks. Following this, an explicit discussion of the acceptability of estimated cancer risk levels at the proposed OEL is warranted.
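A rough sense of the combined risk at the proposed OEL can be obtained by scaling the figures quoted above under the same no-threshold linear assumption. The sketch below is a back-of-the-envelope calculation only: it assumes risk is proportional to concentration over this range, which a life-table calculation satisfies only approximately, and it is not a substitute for the presentation the committee recommends. The function name is hypothetical.

```python
def scaled_risk(target_ppm, ref_ppm, ref_risk):
    """Excess risk at target_ppm, assuming risk is proportional to concentration."""
    return ref_risk * target_ppm / ref_ppm

PROPOSED_OEL_PPM = 0.9

# Reference points quoted in the text (45 years of exposure):
# kidney cancer alone, 1 in 1,000 at 0.9 ppm; kidney cancer + NHL, 1 in 1,000 at 0.25 ppm.
kidney_at_oel = scaled_risk(PROPOSED_OEL_PPM, ref_ppm=0.9, ref_risk=1e-3)
combined_at_oel = scaled_risk(PROPOSED_OEL_PPM, ref_ppm=0.25, ref_risk=1e-3)
```

Under this assumption the combined kidney cancer and NHL risk at 0.9 ppm is 0.9/0.25 = 3.6 times the 1 in 1,000 benchmark, or roughly 3.6 in 1,000 for 45 years of exposure.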

In summary, DOD’s estimated cancer risks lack the rigor of estimates derived from data identified through a defined protocol for study evaluation and dose-response assessment as recommended in previous chapters. The process of data selection for cancer risk estimation was not transparent and the selection could be viewed as arbitrary. It is also possible that with updated evidence driven by evaluations using a well-defined protocol, other types of cancer may be identified as related to TCE, which could lead to different cancer risk estimates. Inclusion of cancer through all of the steps of the evaluation and dose-response process shown in Figure 5-1 will increase confidence in the adequacy of DOD’s cancer risk assessment results.

FINDINGS

DOD incorporated many best practices in its dose-response assessments and derivation of OELs for TCE consistent with recommendations from prior National Academies committees. These included:

- The use of BMD modeling where the data permit, or NOAELs or LOAELs when the data did not, to derive PODs;

- The use of graphical displays of PODs derived from each of the studies advanced for dose-response assessment;

- The use of PBPK modeling to derive HECs for inhalation exposures; and

- The use of Bayesian approaches to derive data-driven UFs for each POD.

The committee also identified potential weaknesses in DOD’s approach that, if addressed, could lead to increased transparency and confidence in the derivation of an OEL for TCE inhalation exposures. Key improvements include:

- Establishing and applying criteria to evaluate studies for relevance and quality for dose-response assessment before PODs are determined;

- Including human epidemiology studies in all steps of hazard identification and dose-response assessment (where possible), along with animal studies. Without the same level of evaluation, DOD’s use of human studies to either support decisions on PODs or assess cancer risks appears arbitrary, lacks transparency, and reduces confidence in the assessment;

- Because DOD has a PBPK model, it should consider including both oral and inhalation studies for all end points considered in the dose-response assessment. This can strengthen DOD’s choices of internal dose metrics for cross-species comparisons and improve confidence in the overall approach to deriving HECs;

- Quantitative scores should not be used to rate studies (also see Chapter 4);

- DOD should address that its PBPK model does not allow for internal dose comparisons to pregnant or nursing mothers in the workplace. To improve transparency and confidence in the HECs for this potentially sensitive population of workers, DOD should either discuss the impact of using its model for dose comparisons of this population or specifically address the anatomic, physiologic, and metabolic changes that vary between species during pregnancy and nursing; and

- If certain DOD occupations or tasks involve strenuous exertion for extended durations, DOD should consider using the PBPK model to adjust HECs using realistic exposure and physiological conditions for end points that are most likely to drive the OEL.

REFERENCES

Bevan, R.J., and P.T. Harrison. 2017. Threshold and non-threshold chemical carcinogens: A survey of the present regulatory landscape. Regul. Toxicol. Pharmacol. 88:291-302.

Charbotel, B., J. Fevotte, M. Hours, J.L. Martin, and A. Bergeret. 2006. Case-control study on renal cell cancer and occupational exposure to trichloroethylene. Part II: Epidemiological aspects. Ann. Occup. Hyg. 50:777-787.

Chiu, W.A., and W. Slob. 2015. A unified probabilistic framework for dose-response assessment of human health effects. Environ. Health Perspect. 123(12):1241-1254.

Chiu, W.A., D.A. Axelrad, C. Dalaijamts, C. Dockins, K. Shao, A.J. Shapiro, and G. Paoli. 2018. Beyond the RfD: Broad application of probabilistic approach to improve chemical dose-response assessments for noncancer effects. Environ. Health Perspect. 126(6):067009.

Covington, T.R., J.M. Gearhart, T.R. Sterner, and D.R. Mattie. 2017. Evaluation of a physiologically based pharmacokinetic (PBPK) model used to develop health protective levels for trichloroethylene. Air Force Research Laboratory, Wright-Patterson AFB, OH. Report No. AFRL-SA-WP-TR-2017-0014. February 2017.

Covington, T.R., J.M. Gearhart, T.R. Sterner, D.R. Mattie, H.A. Pangburn, and D.K. Ott. 2019. Translation of a physiologically based pharmacokinetic (PBPK) model used to develop health protective levels for trichloroethylene. Air Force Research Laboratory, Wright-Patterson AFB, OH. Report No. AFRL-SA-WP-TR-2019-0006. February 2019.

Deveau, M., C.P. Chen, G. Johanson, D. Krewski, A. Maier, K.J. Niven, S. Ripple, P.A. Schulte, J. Silk, J.H. Urbanus, and D.M. Zalk. 2015. The global landscape of occupational exposure limits—Implementation of harmonization principles to guide limit selection. J. Occup. Environ. Hyg. 12(Suppl. 1):S127-S144.

EPA (U.S. Environmental Protection Agency). 2011. Toxicological Review of Trichloroethylene (CAS No. 79-01-6) in Support of Summary Information on the Integrated Risk Information System (IRIS), September 11, 2011. EPA/635/R-09/011F. Washington, DC: EPA [online]. Available: https://www.epa.gov/iris/supporting-documents-trichloroethylene [accessed April 24, 2019].

Fisher, J., T. Whittaker, D. Taylor, H. Clewell, III, and M. Andersen. 1989. Physiologically based pharmacokinetic modeling of the pregnant rat: A multiroute exposure model for trichloroethylene and its metabolite, trichloroacetic acid. Toxicol. Appl. Pharmacol. 99:395-414.

Fisher, J., T. Whittaker, D. Taylor, H. Clewell, III, and M. Andersen. 1990. Physiologically based pharmacokinetic modeling of the lactating rat and nursing pup: A multiroute exposure model for trichloroethylene and its metabolite, trichloroacetic acid. Toxicol. Appl. Pharmacol. 102:497-513.

Haber, L.T., M.L. Dourson, B.C. Allen, R.C. Hertzberg, A. Parker, M.J. Vincent, A. Maier, and A.R. Boobis. 2018. Benchmark dose (BMD) modeling: Current practice, issues, and challenges. Crit. Rev. Toxicol. 48(5):387-415.

Karami, S., Q. Lan, N. Rothman, P.A. Stewart, K.M. Lee, R. Vermeulen, and L.E. Moore. 2012. Occupational trichloroethylene exposure and kidney cancer risk: A meta-analysis. Occup. Environ. Med. 69(12):858-867.

Karami, S., B. Bassig, P.A. Stewart, K.M. Lee, N. Rothman, L.E. Moore, and Q. Lan. 2013. Occupational trichloroethylene exposure and risk of lymphatic and haematopoietic cancers: A meta-analysis. Occup. Environ. Med. 70(8):591-599.

Keil, D.E., M.M. Peden-Adams, S. Wallace, P. Ruiz, and G.S. Gilkeson. 2009. Assessment of trichloroethylene (TCE) exposure in murine strains genetically-prone and non-prone to develop autoimmune disease. J. Environ. Sci. Health A Tox. Hazard Subst. Environ. Eng. 44(5):443-453.

NRC (National Research Council). 2006. Assessing the Human Health Risks of Trichloroethylene: Key Scientific Issues. Washington, DC: The National Academies Press.

NRC. 2009a. Science and Decisions: Advancing Risk Assessment. Washington, DC: The National Academies Press.

NRC. 2009b. Contaminated Water Supplies at Camp Lejeune: Assessing Potential Health Effects. Washington, DC: The National Academies Press.

NRC. 2011. Review of the Environmental Protection Agency’s Draft IRIS Assessment of Formaldehyde. Washington, DC: The National Academies Press.

NRC. 2014. Review of EPA’s Integrated Risk Information System (IRIS) Process. Washington, DC: The National Academies Press.

Pieters, M.N., H.J. Kramer, and W. Slob. 1998. Evaluation of the uncertainty factor for subchronic-to-chronic extrapolation: Statistical analysis of toxicity data. Regul. Toxicol. Pharmacol. 27(2):108-111.

Simon, T.W., Y. Zhu, M.L. Dourson, and N.B. Beck. 2016. Bayesian methods for uncertainty factor application for derivation of reference values. Regul. Toxicol. Pharmacol. 80:9-24.

Sussan, T.E., G.J. Leach, T.R. Covington, J.M. Gearhart, and M.S. Johnson. 2019. Trichloroethylene: Occupational Exposure Level for the Department of Defense. January 2019. U.S. Army Public Health Center, Aberdeen Proving Ground, MD.

WHO (World Health Organization). 2017. Guidance Document on Evaluating and Expressing Uncertainty in Hazard Characterization. 2nd edition. Geneva, Switzerland: World Health Organization.