The task statement for this study charges the committee to develop a roadmap built on the goals and objectives of the 2008 NEHRP Strategic Plan. In this context, a roadmap is a plan that describes the actions and activities that will be needed to achieve the plan’s objectives. Further, the charge requires an estimate of costs, recognizing that some activity costs can be specified fairly accurately (e.g., based on previous detailed studies), whereas others can only be estimated roughly. Also, some activities are scalable, that is, they can be conducted at varying levels of effort or units.

At the outset of its work, the committee was briefed on the NEHRP Strategic Plan and subsequently reviewed the plan, with supporting documents, in detail. The committee then considered the steps that would be required to make the nation and its communities more resilient to the impacts of earthquakes, based on the collective expertise of committee members and taking into account the substantial input from a community workshop (see Appendix D), but without constraining its thinking to the specifics of the Strategic Plan. In the end, 18 broad, integrated tasks, or focused activities, were identified as the elements of a roadmap to achieve earthquake resilience. These tasks are focused on specific outcomes that could be achieved in a 20-year timeframe, with substantial progress realizable within 5 years. We consider these tasks to be critical to achieving a nation of more earthquake-resilient communities.

Although the committee did not set out to explicitly ratify the elements of the Strategic Plan, in the end the committee embraced and supported these elements. The goals address loss reduction by expanding knowledge, developing enabling technologies, and applying them in vulnerable communities. The objectives identify the logical elements in fulfilling these goals.

The committee endorses the 2008 NEHRP Strategic Plan, and identifies 18 specific task elements required to implement that plan and materially improve national earthquake resilience.

The tasks identified are:

1. Physics of Earthquake Processes

2. Advanced National Seismic System

3. Earthquake Early Warning

4. National Seismic Hazard Model

5. Operational Earthquake Forecasting

6. Earthquake Scenarios

7. Earthquake Risk Assessments and Applications

8. Post-earthquake Social Science Response and Recovery Research

9. Post-earthquake Information Management

10. Socioeconomic Research on Hazard Mitigation and Recovery

11. Observatory Network on Community Resilience and Vulnerability

12. Physics-based Simulations of Earthquake Damage and Loss

13. Techniques for Evaluation and Retrofit of Existing Buildings

14. Performance-based Earthquake Engineering for Buildings

15. Guidelines for Earthquake-Resilient Lifeline Systems

16. Next Generation Sustainable Materials, Components, and Systems

17. Knowledge, Tools, and Technology Transfer to/from the Private Sector

18. Earthquake-Resilient Community and Regional Demonstration Projects

The tasks generally cross cut the goals and objectives described in the 2008 NEHRP Strategic Plan because they are formulated as coherent activities that span from knowledge building to implementation. The linkage between the goals and objectives, on the one hand, and the tasks on the other, is shown in the following matrix (Table 3.1). The matrix is richly populated, illustrating the cross-cutting nature of the tasks.

Each of the 18 tasks is described below under a series of subheadings: proposed activity and actions, existing knowledge and current capabilities, enabling requirements, and implementation issues.

TASK 1: PHYSICS OF EARTHQUAKE PROCESSES

Goal A of the 2008 NEHRP Strategic Plan is to “improve understanding of earthquake processes and impacts.” Earthquake processes are difficult to observe; they involve complex, multi-scale interactions of matter and energy within active fault systems that are buried in the solid, opaque earth. These processes are also very difficult to predict. In any particular region, the seismicity can be quiescent for hundreds or even thousands of years and then suddenly erupt as energetic, chaotic cascades that rattle through the natural and built environments. In the face of this complexity, research on the basic physics of earthquake processes and impacts offers the best strategy for gaining new knowledge that can be implemented in mitigating risk and building resiliency (NRC, 2003).

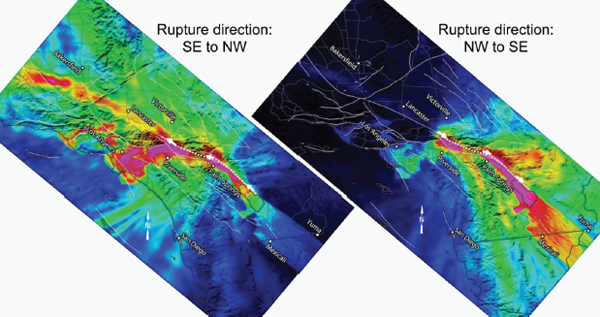

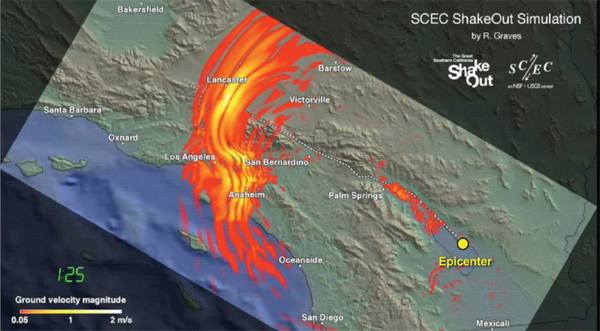

The motivation for such research is clear. Earthquake processes involve the unusual physics of how matter and energy interact during the extreme conditions of rock failure. No theory adequately describes the basic features of dynamic rupture and seismic energy generation, nor is one available that fully explains the dynamical interactions within networks of faults. Large earthquakes cannot be reliably and skillfully predicted in terms of their location, time, and magnitude. Even in regions where we know a big earthquake will eventually strike, its impacts are difficult to anticipate. The hazard posed by the southernmost segment of the San Andreas Fault is recognized to be high, for example—more than 300 years have passed since its last major earthquake, which is longer than a typical interseismic interval on this particular fault. Physics-based numerical simulations show that, if the fault ruptures from the southeast to the northwest—toward Los Angeles—the ground motions in the city will be larger and of longer duration, and the damage will be much worse, than if the rupture propagates in the other direction (Figure 3.1). Earthquake scientists cannot yet predict which way the fault will eventually go, but credible theories suggest that such predictions might be possible from a better understanding of the rupture process. Clearly, basic research in earthquake physics will continue to extend the practical understanding of seismic hazards.

Proposed Actions

To move further toward NEHRP Goal A and improve the predictive capabilities of earthquake science, the National Science Foundation (NSF) and the U.S. Geological Survey (USGS) should strengthen their current research programs on the physics of earthquake processes. Bolstering research in this area will “advance the understanding of earthquake phenomena and generation processes,” which is Objective 1 of the 2008 NEHRP Strategic Plan. Many of the outstanding problems can be grouped into four general research areas:

TABLE 3.1 Matrix Showing Mapping of the 18 Tasks Identified in This Report Against the 14 Objectives in the NEHRP Strategic Plan (NIST, 2008)

Rows: Tasks 1 through 18, as listed above. Columns: the 14 objectives, grouped by goal:

| Goal | Objective |
|---|---|
| A. Improved Understanding: Processes and Impacts | 1. Advance understanding of earthquake phenomena and generation processes |
| | 2. Advance understanding of earthquake effects on the built environment |
| | 3. Advance understanding of the social, behavioral, and economic factors linked to implementing risk reduction and mitigation strategies in the public and private sectors |
| | 4. Improve post-earthquake information acquisition and management |
| B. Develop Cost-Effective Measures to Reduce Impacts | 5. Assess earthquake hazards for research and practical application |
| | 6. Develop advanced loss estimation and risk assessment tools |
| | 7. Develop tools that improve the seismic performance of buildings and other structures |
| | 8. Develop tools that improve the seismic performance of critical infrastructure |
| C. Improve Community Resilience | 9. Improve the accuracy, timeliness, and content of earthquake information products |
| | 10. Develop comprehensive earthquake risk scenarios and risk assessments |
| | 11. Support development of seismic standards and building codes and advocate their adoption and enforcement |
| | 12. Promote the implementation of earthquake-resilient measures in professional practice and in private and public policies |
| | 13. Increase public awareness of earthquake hazards and risks |
| | 14. Develop the nation’s human resource base in earthquake safety fields |

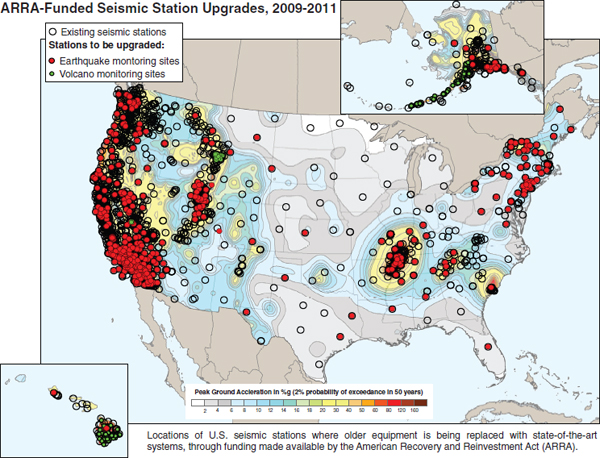

FIGURE 3.1 Maps of Southern California showing the ground motions predicted for a magnitude-7.7 earthquake on the southern San Andreas Fault; high values of shaking are purple to red, low values blue to black. The left panel shows faulting that begins at the southeast end and ruptures to the northwest. The right panel shows faulting that begins at the northwest end and ruptures to the southeast. The ground motions predicted in the Los Angeles region are much more intense and have longer duration in the former case. SOURCE: Courtesy of K. Olsen and T.H. Jordan.

• Fault system dynamics: how tectonic forces evolve within complex fault networks over the long term to generate sequences of earthquakes. The tectonic forces that drive earthquakes are still poorly understood. They cannot be directly measured and are influenced by unknown heterogeneities within the seismogenic upper crust as well as by slow deformation processes. The latter include intriguing new discoveries—aseismic transients such as “silent earthquakes,” as well as newly discovered classes of episodic tremor and slip. How these slow phenomena are coupled to the earthquake cycle is currently unknown; a better understanding could potentially provide new types of data for improving time-dependent earthquake forecasting. A major issue is how the distribution of large earthquakes depends on the geometrical complexities of fault systems, such as fault bends, step-overs, branches, and intersections. In many cases, fault segmentation and other geometrical irregularities appear to control the lengths of fault ruptures (and thus earthquake magnitude), but large ruptures often break across segment boundaries and branch to and from subsidiary faults. For example, the magnitude-7.9 Denali earthquake in Alaska initiated as a rupture on

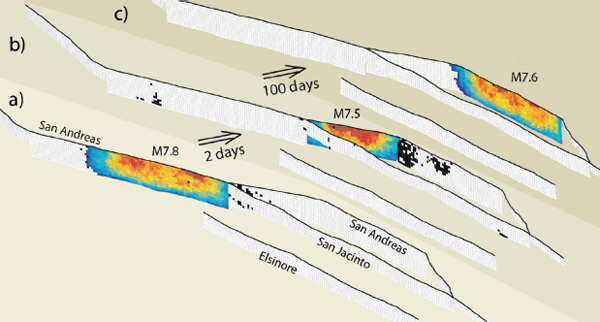

the Susitna Glacier Thrust Fault; the rupture branched onto the main strand of the Denali Fault, and then branched again onto the subsidiary Totschunda Fault. A key objective is to develop numerical models of a brittle fault system that can simulate earthquakes over many cycles of stress accumulation and fault rupture for the purpose of constraining the earthquake probabilities used in time-dependent forecasts (see Task 5). An example of a sequence of earthquakes on the San Andreas Fault system from such an “earthquake simulator” is shown in Figure 3.2.

FIGURE 3.2 Example output from an earthquake simulator showing a sequence of large earthquakes during a 4-month period on the southern San Andreas Fault. There were 72 aftershocks in the 2-day interval between the magnitude-7.8 and magnitude-7.5 events, and 183 aftershocks during the 100-day interval before the magnitude-7.6 event. The three snapshots displayed here were part of a longer simulation that included 227 earthquakes greater than magnitude 7. Of these, 137 were isolated by at least 4 years; 34 were pairs, and 5 were triplets such as this one. SOURCE: Courtesy of J. Dieterich.

• Earthquake rupture dynamics: how forces produce fault ruptures and generate seismic waves during an earthquake. The nucleation, propagation, and arrest of fault ruptures depend on the stress response of rocks approaching and participating in failure. In these regimes, rock behavior can be highly nonlinear, strongly dependent on temperature, and sensitive to minor constituents such as water. A major problem is to understand how the microscopic processes of fault weakening control the dynamics of rupture. Are mature faults statically weak because of their compositions and elevated pore pressures, or are they statically strong but slip at low average shear stress because of dynamic weakening during rupture? Many potential weakening mechanisms have been identified (the thermal pressurization of pore fluids, thermal decomposition, flash heating of contact asperities, partial melting, elasto-hydrodynamic lubrication, silica gel formation, and normal-stress changes due to bimaterial effects), but the physics of these processes, and their interactions, remains poorly understood. A combination of better laboratory experiments, field observation of exhumed faults, and numerical models will be required, including studies of how ruptures propagate along geometrically complex faults with distributed damage zones and off-fault plastic deformation. A priority is to validate models for application in ground motion forecasting (see Tasks 4 and 5).

• Ground motion dynamics: how seismic waves propagate from the rupture volume and cause shaking at sites distributed over a strongly heterogeneous crust. Seismic hazard analysis currently relies on empirical attenuation relationships to account for event magnitude, fault geometry, path effects, and site response. These generic relationships do not adequately represent the physical processes that control ground shaking: rupture complexity and directivity, basin effects, the role of small-scale crustal heterogeneity, and the nonlinear response of the surface layers (such as soft soils). Physics-based numerical simulations of the generation and propagation of seismic radiation have now advanced to the point where they are becoming useful in predicting the strong ground motions from anticipated earthquake sources (e.g., Figure 3.1). The physics needs to account for the complexities of rupture propagation along the fault, wave propagation through the heterogeneous crust, response of the surface rocks and soils, and response of the buildings embedded in those soils. An important objective is to couple numerical models of these physical processes in end-to-end (“rupture-to-rafters”) earthquake simulations (see Task 12).

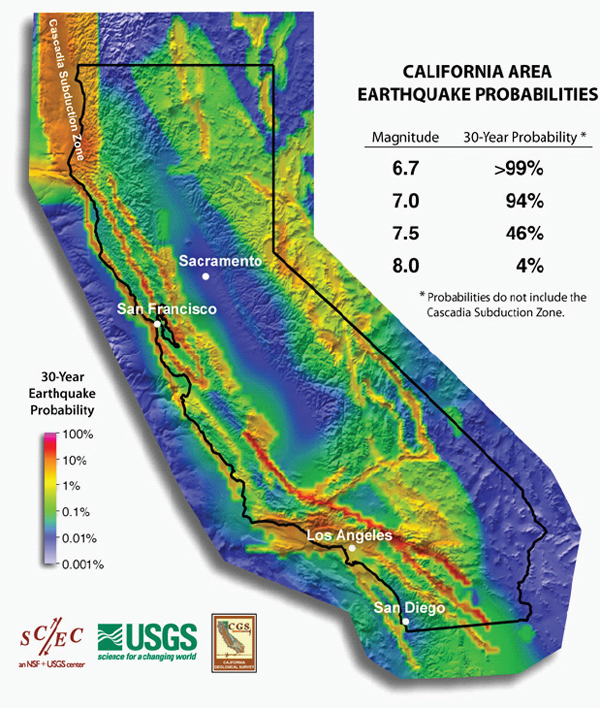

• Earthquake predictability: the degree to which the future occurrence of earthquakes can be determined from the observable behavior of earthquake systems. Because earthquakes cannot be deterministically predicted, forecasting requires a probabilistic (i.e., statistical) characterization of earthquake sources in terms of space, time, and magnitude (Jordan et al., 2009). Long-term earthquake forecasting is the basis for seismic hazard analysis. Current forecasts, such as those used in all three iterations of the National Seismic Hazard Maps (Frankel et al., 1996, 2002; Petersen et al., 2008), are time-independent; i.e., they assume earthquakes occur randomly in time and are independent of past seismic activity. This assumption is known to be false—almost all large earthquakes have many aftershocks, some of which can be damaging, and they often occur in clustered sequences. For example, the three largest earthquakes in the historical record of the central United States—each magnitude-7.5 or larger—occurred in the New Madrid region between mid-December, 1811, and mid-February, 1812, within a period of just 2 months.

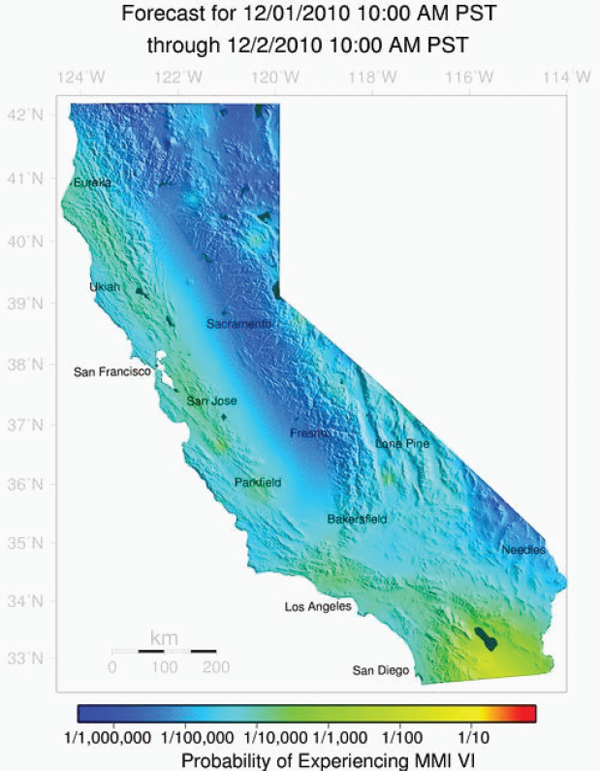

Time-dependent forecasts that account for the occurrence of past earthquakes using stress renewal models have been developed for California (see Figure 3.10 under Task 5). However, in these long-term models a large earthquake on a major fault decreases the probability of additional events on that fault, so the models cannot adequately represent the increased probability of event sequences, such as New Madrid or the hypothetical sequence illustrated in Figure 3.2. The goal of research on earthquake predictability is to develop a consistent set of probabilistic models that span the full range of forecasting timescales: long-term (centuries to decades), medium-term (years to months), and short-term (weeks to hours). Bridging the current gap between long-term renewal models, such as the Uniform California Earthquake Rupture Forecast, Version 2 (UCERF2; see Task 5), and short-term models based on triggering and clustering statistics, such as the USGS Short-Term Earthquake Probability (STEP) forecast for California (Gerstenberger et al., 2007; see Figure 3.11 under Task 5), will require a better understanding of how earthquake probabilities depend on the quasi-static stress transfer caused by permanent fault slip and related relaxation of the crust and mantle, as well as the dynamic stress triggering caused by the passage of seismic waves.
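The contrast between time-independent and renewal-type forecasts can be illustrated with a minimal numerical sketch. It assumes a Poisson process for the time-independent case and, purely for illustration, a lognormal distribution of recurrence intervals for the renewal case; the source does not specify the distribution used in the California models, and the recurrence parameters below (200-year median, shape parameter 0.5, 30-year window) are hypothetical values, not estimates for any real fault.

```python
import math

def poisson_prob(mean_recurrence_yr, window_yr):
    """Time-independent (Poisson) probability of at least one event in the window."""
    rate = 1.0 / mean_recurrence_yr
    return 1.0 - math.exp(-rate * window_yr)

def lognormal_cdf(t, median, sigma):
    """CDF of a lognormal recurrence-interval distribution (illustrative choice)."""
    if t <= 0.0:
        return 0.0
    z = math.log(t / median) / (sigma * math.sqrt(2.0))
    return 0.5 * (1.0 + math.erf(z))

def renewal_prob(elapsed_yr, window_yr, median, sigma):
    """Conditional probability of an event in the next window_yr years,
    given that elapsed_yr years have passed since the last event."""
    f_now = lognormal_cdf(elapsed_yr, median, sigma)
    survived = 1.0 - f_now
    if survived <= 0.0:
        return 1.0
    f_later = lognormal_cdf(elapsed_yr + window_yr, median, sigma)
    return (f_later - f_now) / survived

# Hypothetical parameters, chosen only to show the qualitative behavior.
MEDIAN_YR, SIGMA, WINDOW_YR = 200.0, 0.5, 30.0

p_poisson = poisson_prob(MEDIAN_YR, WINDOW_YR)            # same at any time
p_just_after = renewal_prob(1.0, WINDOW_YR, MEDIAN_YR, SIGMA)    # right after an event
p_overdue = renewal_prob(300.0, WINDOW_YR, MEDIAN_YR, SIGMA)     # long after an event

print(f"Poisson: {p_poisson:.3f}")
print(f"Renewal, 1 yr elapsed: {p_just_after:.4f}")
print(f"Renewal, 300 yr elapsed: {p_overdue:.3f}")
```

In this sketch the renewal probability drops almost to zero immediately after an event and rises as time elapses, which is why such a model, used alone, cannot represent clustered sequences; the Poisson probability stays the same regardless of history.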

Many of the potential advances in earthquake forecasting, seismic hazard characterization, and dissemination of post-earthquake information will depend on harnessing the predictive power of earthquake physics.

• Physics-based earthquake simulations can be used as tools to improve the rapid delivery of post-earthquake information for emergency management and to enable the new technology of earthquake early warning (Task 3).

• Ground motion dynamics can be used to transform long-term seismic hazard analysis into a physics-based science that can characterize earthquake hazard and risk with better accuracy and geographic resolution (Task 4).

• Research on earthquake predictability can yield better models for operational earthquake forecasting, which can help communities live with natural seismicity and prepare for potentially destructive earthquakes (Task 5).

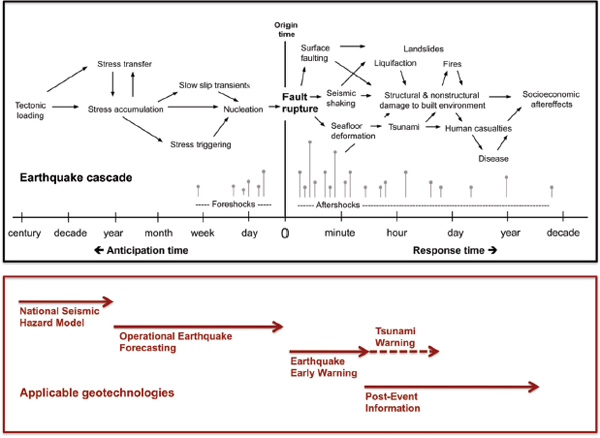

Taken together, the technologies of Tasks 3-5 can deliver timely information needed to improve societal resilience during all phases of the earthquake cascade (Figure 3.3).

FIGURE 3.3 A diagram of the earthquake cascade showing the time domains of four geotechnologies that can improve earthquake resilience (as described under Tasks 3, 4, 5, and 8). A better understanding of earthquake physics will be needed to implement and improve these technologies. SOURCE: Courtesy Southern California Earthquake Center.

Research on earthquake physics can also contribute directly to four other NEHRP objectives. Better dynamical models of earthquake ruptures and seismic wave propagation can advance the understanding of earthquake effects on the built environment (Objective 2). A better understanding of earthquake predictability can guide the development of improved forecasting models needed for assessing earthquake hazards for research and practical application (Objective 5). Physics-based models capable of tracking earthquake cascades in real time can be used to improve the accuracy, timeliness, and content of earthquake information products (Objective 9). And more accurate earthquake simulations can provide a physical basis for developing comprehensive earthquake risk scenarios and risk assessments (Objective 10).

Existing Knowledge and Current Capabilities

Since its inception in 1977, NEHRP has been organized to gain new knowledge about earthquake hazards and risks and to implement this knowledge through effective risk mitigation and rapid earthquake response. Some of the advances in understanding earthquake processes have been highlighted in the 2008 NEHRP Strategic Plan (see Chapter 1 and Appendix A), as well as in the Earthquake Engineering Research Institute (EERI) (2003b) report (see Appendix B). The National Research Council (NRC) (2003) report, Living on an Active Earth: Perspectives on Earthquake Science, provides one of the most expansive treatments of earthquake science and its rise under NEHRP.

The science of earthquakes, like the study of many other complex natural systems, is still in its juvenile stages of exploration and discovery. Until recently, research was focused on two primary problems: (a) earthquake complexity and how it arises from the brittle response of the lithosphere to deep-seated forces and (b) the forecasting of earthquakes and their site-specific effects. Investigations of the first problem began with attempts to place earthquake occurrence in a global framework and contributed to the discovery of plate tectonics, while work on the second addressed the needs of earthquake engineering and led to the development of seismic hazard analysis. The historical separation between these two lines of inquiry has been narrowed by progress on dynamical models of earthquake occurrence, fault ruptures, and strong ground motions. This research has transformed the field from a haphazard collection of disciplinary activities into a more coordinated “earthquake system science” that seeks to describe seismic activity not just in terms of individual events, but also as an evolutionary process involving dynamical interactions within networks of interconnected faults. Such a system-level approach recognizes that earthquakes are emergent phenomena that depend on a wide range of interactions, from the microscopic inner scale (frictional contact asperities breaking over microseconds) to the fault-system outer scale (regional tectonic loading and relaxation over hundreds of kilometers and thousands of years).

Much has been learned from multidisciplinary investigations coordinated in the aftermath of large earthquakes, and this experience makes clear the importance of standardized instrumental data and geologic field work. Research has been accelerated through the development of new observational and computational technologies. Subsurface imaging can now be applied with sufficient resolution to delineate the deep architecture of fault systems and the three-dimensional earth structure that controls the propagation of seismic waves. In well-studied regions of the western United States, neotectonic research has improved constraints on fault geometries and long-term slip rates, and paleoseismology has furnished an extended record of past earthquakes (McCalpin, 2009), providing evidence for the clustering of large events in “seismic storms.” The Global Positioning System (GPS) and Interferometric Synthetic Aperture Radar (InSAR) satellites are mapping, with unprecedented resolution, the crustal deformations associated with individual earthquakes, long-term tectonic loading, and the stress interactions among nearby faults. Networks of broadband seismometers have been deployed to record earthquake ground motions faithfully at all frequencies and amplitudes (see Figure 3.4 under Task 2). By using high-performance computing and communications, scientists now have the means to process massive streams of observations in real time and, through numerical simulation, to quantify the many aspects of earthquake physics that have been resistant to standard analysis. New discoveries include slow slip transients that propagate at velocities systematically lower than ordinary fault ruptures.

Large earthquakes can be forecast on timescales of decades to centuries by combining the information from the geological record with data from seismic and geodetic monitoring (see Figure 3.10 under Task 5). Earthquake scientists have begun to understand how geological complexity controls the strong ground motion during large earthquakes (Figure 3.1) and, working with engineers, how to predict the site-specific response of buildings, lifelines, and critical facilities to seismic excitation. The long-term expectations for potentially destructive shaking have been quantified in the form of seismic hazard maps, which display estimates of the maximum shaking intensities expected at each locality in the United States (see Figure 3.8 under Task 4). Once a large earthquake has occurred, automated systems can rapidly and accurately compute hypocenter location, fault-plane orientation, and other source parameters. Predicted distributions of the extent of strong ground motions can be broadcast in near real time, helping to anticipate damage and guide emergency response (e.g., Figure 3.1). In the case of distant, sub-oceanic earthquakes, post-event predictions of the earthquake-generated sea waves (tsunamis) can warn coastal communities with sufficient lead times to permit evacuations. All of these advances have benefited from NEHRP-sponsored research in earthquake physics.

Enabling Requirements

Knowledge of earthquake processes is highly data limited, and there is an urgent and continuing need for better observations of earthquakes, especially through remote sensing of deformation and seismicity, and detailed field-based studies of fault rupture processes. Essential observations are provided by seismology, tectonic geodesy, and earthquake geology. The general objectives recommended in NRC (2003) have not yet been achieved:

• An Advanced National Seismic System (ANSS) capable of recording all earthquakes down to moment magnitude-3 and up to the largest anticipated magnitude with fidelity across the entire seismic bandwidth and with sufficient density to determine the source parameters of these events. The location threshold for regional networks should reach down to magnitude-1.5 in areas of high seismic risk. Full implementation of the current ANSS plan (see Task 2) would provide this capability.

• Geodetic instrumentation for observing crustal deformation within active fault systems with high enough spatial and temporal resolution to measure all significant motions, including aseismic events and the transients before, during, and after large earthquakes. Critical new data on earthquakes are coming from the denser networks of GPS receivers and strainmeters that have been deployed since 2005 in the Plate Boundary Observatory of NSF’s EarthScope Program (EarthScope, 2007). Spatial imaging of differential motions by satellite-based InSAR has demonstrated its potential for the study of fault deformation (e.g., Helz, 2005; Pritchard, 2006). However, an InSAR satellite for collecting crustal deformation data, proposed in the original EarthScope plan (NRC, 2001), has still not been launched by the United States, and as a result researchers remain dependent on data from European and Japanese satellites (Williams et al., 2010). This reinforces the importance of the planned NASA DESDynI (Deformation, Ecosystem Structure, and Dynamics of Ice) mission, proposed to launch in 2018,² to provide a dedicated InSAR platform optimized for studying hazards and global environmental change.

• Programs of geologic field study to locate active faults, quantify fault slip rates, and determine the history of fault rupture over many earthquake cycles. Light Detection and Ranging (LiDAR) techniques are now capable of high-resolution topographic imaging of fault-controlled surface morphology. For example, airborne LiDAR mapping has been used to revise downward by 40% the slip along the Carrizo section of the San Andreas Fault previously ascribed to the 1857 Fort Tejon earthquake (magnitude-7.9), implying a higher medium-term probability that another large earthquake will occur on this section of the fault (Zielke et al., 2010). However, LiDAR data have been collected on a synoptic scale for only a few major faults. The methods for dating rock on neotectonic timescales of hundreds to thousands of years have been greatly improved in the past decade, but well-constrained geologic slip rates are still not available for many of the faults known to be active in the United States. Again, only a few have been studied with the paleoseismic techniques needed to resolve the slip history of fault ruptures over many earthquake cycles.

_________________

2 See eospso.gsfc.nasa.gov/eos_homepage/mission_profiles/show_mission.php?id=75.

Large earthquakes are rare events, and the strong motion data from them are sparse. Numerical simulations of large earthquakes in well-studied, seismically active areas are important tools for basic earthquake science, because they provide a quantitative basis for comparing hypotheses about earthquake behavior with the limited observations. Simulations are playing an increasingly crucial role in our understanding of regional earthquake hazard and risk. This convergence of basic and applied science is comparable to the situation in climate studies, where the largest, most complex general circulation models are being used to predict the hazards and risks of anthropogenic global change. Considerable computational power will be needed to fully realize this scientific transformation and put it to practical use. Earthquakes are among the most complex terrestrial phenomena, and modeling of earthquake dynamics is one of the most difficult computational problems in science. Taken from end to end, the problem comprises the loading and eventual failure of tectonic faults, the generation and propagation of seismic waves, the response of surface sites, and—in its application to seismic risk—the damage caused by earthquakes to the built environment (see Task 12). This chain of physical processes involves a wide variety of interactions, some highly nonlinear and multi-scale. For example, long-term fault dynamics is coupled to short-term rupture dynamics through the nonlinear processes of brittle and ductile deformation, which requires earthquake simulators that can span this range of scales (see Figure 3.2).
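One simple way that simulator output can be compared with observations is by tallying how often large events occur in isolation versus in clusters, as in the counts of isolated events, pairs, and triplets quoted in the caption of Figure 3.2. The following is a minimal sketch of such a tally. The grouping rule (consecutive events less than 4 years apart belong to the same cluster) is an assumption extrapolated from the caption's "isolated by at least 4 years", and the event times are made-up values, not simulator results.

```python
def tally_clusters(event_times_yr, min_gap_yr=4.0):
    """Count clusters by size in a catalog of large-event times.

    Consecutive events separated by less than min_gap_yr are placed in the
    same cluster (assumed rule). Returns {cluster_size: number_of_clusters}.
    """
    times = sorted(event_times_yr)
    counts = {}
    if not times:
        return counts
    size = 1  # size of the cluster currently being built
    for prev, cur in zip(times, times[1:]):
        if cur - prev < min_gap_yr:
            size += 1  # still inside the same cluster
        else:
            counts[size] = counts.get(size, 0) + 1  # close the cluster
            size = 1
    counts[size] = counts.get(size, 0) + 1  # close the final cluster
    return counts

# Hypothetical catalog (years): two isolated events, one pair, one triplet.
catalog = [10.0, 150.0, 150.3, 400.0, 400.1, 400.4, 900.0]
print(tally_clusters(catalog))
```

Under this rule a key of 1 counts isolated events, 2 counts pairs, and 3 counts triplets; a different clustering definition would give different tallies.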

The implementation of physics-based ground-motion prediction using numerical simulations requires estimates of the three-dimensional structure of the fault network and the material properties—seismic velocities, attenuation parameters, and density distribution—within the tectonic blocks. These structures are interrelated, because material property contrasts are often governed by fault displacements. Therefore, the development of unified structural representations requires cross-disciplinary collaboration between geologists and seismologists.

The key research issues of earthquake science are true system-level problems: they require an interdisciplinary approach to understand the nonlinear interactions among many fault-system components, which themselves are often complex subsystems. Because the behavior of each fault system is contingent on its structure, earthquake studies are necessarily conducted in a system-specific context (e.g., the Cascadia subduction zone or the San Andreas transform-fault system). Therefore, a generic understanding of earthquake processes requires a synthesis of the knowledge obtained from different regions. International collaborations can promote such a synthesis by bringing together data from many fault systems around the world.

Implementation Issues

NSF and USGS already have well-developed research programs in earthquake physics, and strengthening those programs along the lines described here poses no major implementation issues. That said, these agencies will have to work together more closely to foster highly integrated collaborations that are (1) coordinated across scientific disciplines and research institutions, (2) enabled by high-performance computing and advanced information technology, (3) capable of assimilating new theories and data into system-level models, and (4) able to partner with earthquake engineering and risk-management organizations in delivering practical knowledge to society. An additional implementation issue, of course, is the need for information from major earthquakes that can only be provided by the monitoring systems described in Task 2.

TASK 2: ADVANCED NATIONAL SEISMIC SYSTEM

Seismic monitoring is vital for meeting the nation’s needs for timely and accurate information about earthquakes, tsunamis, and volcanic eruptions—information to determine their locations and magnitudes and estimate their potential effects. As well as guiding response efforts, this information also provides the basis for research on the causes and effects of earthquakes. ANSS is the USGS initiative to broadly improve the monitoring and reporting of earthquakes in the United States by integrating and modernizing the prior patchwork of state, local, and academic regional seismic networks, and coupling the seismological data with a modern earthquake information center. Begun in 2000, ANSS is modernizing and expanding capabilities nationally by establishing an integrated national system of 7,100 sensors providing data to national and regional centers. ANSS provides real-time information on the distribution and intensity of earthquake shaking to emergency responders so that they can rapidly assess the full impact of an earthquake and speed disaster relief to the most heavily affected areas. ANSS also provides engineers and designers with the information they need to improve building design standards and engineering practices to mitigate the impact of earthquakes, and provides scientists with high-quality data to understand earthquake processes and solid earth structure and dynamics. After analyzing the economic benefits of seismic monitoring, NRC (2006b; p. 8) concluded that

Full deployment of the ANSS offers the potential to substantially reduce earthquake losses and their consequences by providing critical information for land-use planning, building design, insurance, warnings, and emergency preparedness and response. In the committee’s judgment, the potential benefits far exceed the costs—annualized buildings and building-related earthquake losses alone are estimated to be about $5.6 billion, whereas the annualized cost of the improved seismic monitoring is about $96 million, less than 2 percent of the estimated losses. It is reasonable to conclude that mitigation actions—based on improved information and the consequent reduction of uncertainty—would yield benefits amounting to several times the cost of improved seismic monitoring.
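The comparison in the quoted passage is simple arithmetic and can be checked directly. The sketch below uses only the two annualized figures given by NRC (2006b); the variable names are illustrative and are not taken from that report.

```python
# Annualized figures as quoted from NRC (2006b); names are illustrative only.
annualized_losses_usd = 5.6e9           # building and building-related earthquake losses
annualized_monitoring_cost_usd = 96e6   # cost of improved seismic monitoring

# Monitoring cost expressed as a share of the estimated annualized losses.
cost_share = annualized_monitoring_cost_usd / annualized_losses_usd
print(f"Monitoring cost as a share of losses: {cost_share:.1%}")

# Benefits equal costs once mitigation enabled by monitoring avoids
# this same fraction of the annualized losses.
break_even_loss_reduction = cost_share
```

Under these figures, monitoring would pay for itself if the mitigation it enables avoided a little under 2 percent of annualized losses, which is the sense in which the quoted passage expects benefits of several times the cost.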

Proposed Actions

The rate at which ANSS was deployed was relatively modest between 2000 and 2008, but because of substantially increased investment as part of the ARRA (American Recovery and Reinvestment Act) economic stimulus, ANSS will be about 25 percent complete by the end of 2011 (Figure 3.4). By that time, ANSS will consist of more than 1,500 modern digital seismic stations, upgraded regional seismic networks, and a National Earthquake Information Center that is operated 24×7 and delivers information for emergency response to state and local officials, operators of lifeline facilities, the Federal Emergency Management Agency (FEMA), and other critical users.

Deployment of the remaining 75 percent of ANSS is a critical requirement for national resilience, reflected by the many tasks listed in this chapter that require full ANSS deployment. One of the important components of ANSS that is still needed is an expansion of the building instrumentation component to provide crucial information on how common buildings respond to earthquake shaking.

Existing Knowledge and Current Capabilities

The ANSS plans were developed through a broad consultative process that resulted in a comprehensive description of the infrastructure elements and a detailed deployment strategy (USGS, 1999). Implementation of the plan has been approved through the USGS appropriation process, with availability of funding being the only impediment to full deployment. Because the system is already partially deployed, the technical and scientific knowledge base for ANSS is fully developed and tested.

As part of its monitoring activities, ANSS includes:

• A national “backbone” network of seismological stations.

• The National Earthquake Information Center (NEIC), the central focus for analysis and dissemination of earthquake information.

• The National Strong Motion Project, to monitor and understand the effects of earthquakes on man-made structures in densely urbanized areas to improve public earthquake safety.

• Fifteen regional seismic networks operated by USGS and its partners.

The range of products produced by the USGS Earthquake Hazards Program derived from the ANSS network has grown steadily over recent years, as the network elements have been deployed, and these products now serve a diverse scientific, emergency management, and community base:

• Descriptions of Recent Earthquakes. Automatic maps and event information are available online from the Earthquake Hazards Program website within minutes of an earthquake occurring.

• Did You Feel It Maps and Reports. Present a compilation of community reports of shaking in the form of a Community Internet Intensity Map (CIIM) that summarizes the questionnaire responses provided by Internet users.

• ShakeMaps. Provide near-real-time maps of ground motion and shaking intensity following significant earthquakes, for use by federal, state, and local organizations, both public and private, for post-earthquake response and recovery, public and scientific information, as well as for preparedness exercises and disaster planning.

• ShakeCasts. Critical users, e.g., lifeline utilities, can receive automatic notifications within minutes of an earthquake, indicating the level of shaking and the likelihood of impact to their own facilities.

• Hazard Maps. National Seismic Hazard Maps show earthquake ground motions for various probability levels across the United States, for application in the seismic provisions of building codes, insurance rate structures, risk assessments, and other public policy uses (see Task 4).

• PAGER Earthquake Notification. Automated notifications of earthquakes through e-mail, pager, or cell phone. Rapid information and updates to first responders, and resources for media and local government.

A broad range of additional data and resources is also available: information about earthquake hazards, historical seismicity, faults, etc., by state; an online searchable earthquake catalog providing downloadable information and technical data; QuickTime movies created from the recordings of fully instrumented structures during earthquakes; and real-time waveforms and spectrograms.


Enabling Requirements

To be fully functional, the ANSS will require the following additional components:

• Structural instrumentation. ANSS requirements call for extensive instrumentation of buildings, bridges, and other structures in areas of high earthquake risk. This is the least developed component of ANSS; 9,000 data channels are needed, and instrumentation installed to date amounts to fewer than 1,000 channels.

• Expanded urban monitoring. ARRA funding is targeted for the modernization of existing seismic stations, but not for an expansion of the networks. To meet the ANSS requirements, an additional 1,700 stations are needed for deployment in the highest risk urban areas.

• Data management. Currently, a large proportion of the data management needs of the system are being accommodated through the IRIS Data Management System, funded by NSF. At full implementation, USGS needs to assume this funding responsibility, as well as the task of developing seamless data and product access for ANSS.

Implementation Issues

Full implementation of ANSS simply requires additional funding; there are no technical issues.

TASK 3: EARTHQUAKE EARLY WARNING

ANSS, when fully implemented, will provide the infrastructure necessary for development of earthquake early warning (EEW) systems. The goal of network-based EEW is to detect earthquakes in the early stages of fault rupture, rapidly predict the intensity of the future ground motions, and warn people before they experience the intense shaking that might cause damage. The most damaging shaking is usually caused by seismic shear and surface waves, which travel at only half the speed of the fastest seismic waves, and much slower than an electronic warning message. EEW systems detect strong shaking at an earthquake's epicenter and transmit alerts ahead of the damaging earthquake waves.
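As a rough illustration of why an alert can outrun the damaging waves, the sketch below estimates the warning time for a user at a given distance from the epicenter. It is a simplification under stated assumptions, not a description of any operational system: uniform P- and S-wave speeds of 6.0 and 3.5 km/s, a single detecting station 10 km from the epicenter, a 5-second allowance for processing and alert delivery, and zero hypocentral depth are all assumed values chosen for illustration.

```python
def alert_time_s(station_distance_km=10.0, vp_km_s=6.0, latency_s=5.0):
    """Time after origin at which the alert reaches users (assumed values).

    The fast P wave must first reach the detecting station; processing and
    electronic delivery are lumped into a single assumed latency.
    """
    return station_distance_km / vp_km_s + latency_s


def warning_time_s(user_distance_km, vs_km_s=3.5, **alert_kwargs):
    """Seconds between alert delivery and S-wave arrival at the user.

    A negative value means the strong shaking arrives before the alert.
    """
    s_wave_arrival = user_distance_km / vs_km_s
    return s_wave_arrival - alert_time_s(**alert_kwargs)


def blind_zone_radius_km(vs_km_s=3.5, **alert_kwargs):
    """Distance inside which the S wave arrives before the alert."""
    return vs_km_s * alert_time_s(**alert_kwargs)


if __name__ == "__main__":
    print(f"No-warning radius: {blind_zone_radius_km():.1f} km")
    for distance_km in (10, 50, 100, 200):
        print(f"{distance_km:>4} km: {warning_time_s(distance_km):6.1f} s")
```

With these assumed values, no warning is possible within roughly 23 km of the epicenter, while a site 200 km away would receive on the order of 50 seconds of warning. Real systems must also account for source depth, rupture extent, and variable network geometry.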

Potential warning times depend primarily on the distance between the user and the earthquake epicenter. There is a “blind zone” near an earthquake epicenter where early warning is not feasible, but at more distant sites, warnings can be issued from a few seconds up to about 1 minute prior to the strong ground shaking (Figure 3.5). Such warnings can be used to reduce the harm to people and infrastructure during earthquakes. Potential applications include alerting people to “drop, cover, and hold-on,” move to safer locations, or otherwise prepare for shaking (e.g., surgeons in operating rooms), as well as many types of automated actions: stopping elevators at the nearest floor, opening firehouse doors, slowing rapid-transit vehicles and high-speed trains to avoid accidents, shutting down pipelines and gas lines to minimize fire hazards, shutting down manufacturing operations to decrease potential damage to equipment, saving vital computer information to avoid losses of data, and controlling structures by active and semi-active systems to reduce building damage.

FIGURE 3.5 Snapshot of the seismic waves produced by a simulated magnitude-7.8 earthquake on the southern San Andreas Fault (dashed white line) with an epicenter at the southeastern end of the fault (yellow point). The snapshot is taken 85 seconds after the earthquake origin time, just as strong surface waves are arriving in downtown Los Angeles. In this scenario, an EEW system deployed along the southern San Andreas Fault could provide up to a minute of warning at sites in the most urbanized regions of Los Angeles. This particular earthquake simulation was used to define the hazard for the 2008 Great Southern California ShakeOut.

SOURCE: Courtesy of R. Graves, G. Ely, and T.H. Jordan.

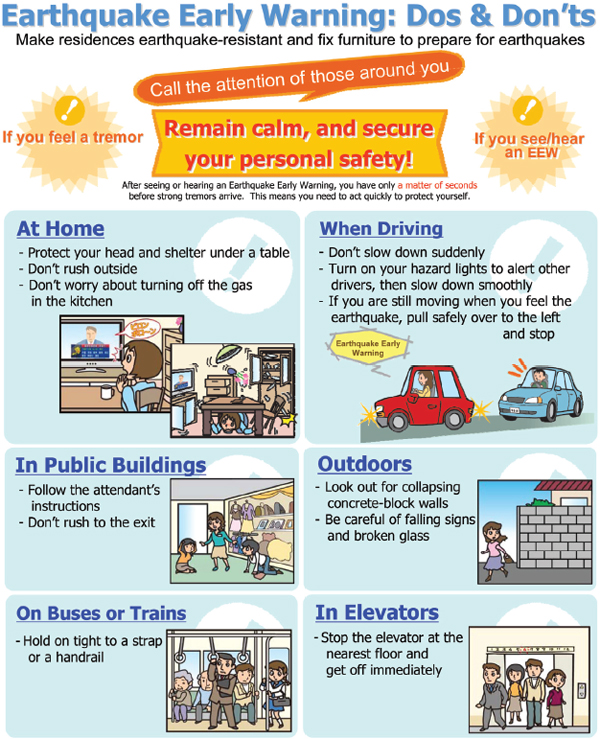

Operational EEW systems have been deployed in at least five countries—Japan, Mexico, Romania, Taiwan, and Turkey. Japan is the only country with a nationwide system that provides public alerts. The Japan Meteorological Agency uses a national seismic network of about 1,000 seismological stations to detect earthquakes and issue warnings, which are transmitted via the Internet, satellite, and wireless networks to cell phone users, to desktop computers, and to automated control systems that stop trains, place sensitive equipment in a safe mode, and isolate hazards while the public takes cover (Figure 3.6). Mexico City and Istanbul also have public warning systems.

Proposed Actions

EEW has been identified as an ANSS objective (USGS, 1999), and it will be an important outcome of ANSS implementation. The NEHRP 2008 Strategic Plan recommended the evaluation and testing of EEW systems as part of Objective 9, to “Improve the accuracy, timeliness, and content of earthquake information products.” Some activities are under way in California, where a USGS-sponsored demonstration project is testing several EEW algorithms using real-time data from the California Integrated Seismic Network (CISN), a component of ANSS. ARRA stimulus funding is being used to upgrade many of the older seismic instruments throughout the CISN and reduce the time delays in gathering data and issuing alerts. When completed, this prototype system, called the CISN ShakeAlert System, will provide warnings to a small group of test users including emergency response groups, utilities, and transportation agencies (USGS, 2009). While in the testing phase, the system will not provide public alerts. If these tests are successful, high priority should be given to the development and deployment of an ANSS-based operational earthquake early warning system that can issue public alerts through various types of public media. The most suitable location for the first fully operational deployment of EEW would be the San Andreas Fault system, where the risk level is high and early detection of large strike-slip ruptures can provide up to a minute of early warning (e.g., Figure 3.5). If sufficient funding is made available, upgrading the prototype CISN ShakeAlert System to a fully operational, public system should be possible within 5 years.

FIGURE 3.6 Portion of a leaflet prepared by the Japan Meteorological Agency describing simple instructions on how to react when an EEW alert is received. SOURCE: Japan Meteorological Agency. Available at www.jma.go.jp/jma/en/Activities/EEWLeaflet.pdf.

Planning should also begin for an EEW system for the Cascadia region of the northwestern United States. Earthquakes with magnitudes greater than 8 (and as large as 9) are anticipated on the offshore megathrust of the Cascadia subduction zone. In favorable situations, EEW could provide more than a minute of warning for urban centers such as Seattle and Portland. For example, the megathrust faulting that caused the great Sumatra-Andaman Islands earthquake of December 26, 2004 (magnitude-9.2), had a total rupture duration that exceeded 500 seconds (Shearer and Bürgmann, 2010). Moreover, an EEW capability would complement and improve the accuracy of the tsunami warning systems already operated by the National Oceanic and Atmospheric Administration (NOAA) and USGS (see NRC, 2010).

EEW systems should include the capability for enhanced alerts during periods of aftershock activity following major earthquakes, which can warn rescue personnel operating in dangerous and unstable conditions. The enhancements could be based on existing dense urban seismic networks with directed annunciation of the warning to the exposed individuals, or on fully mobile aftershock monitoring networks that can be rapidly installed in sparsely monitored locales.

Current EEW systems are based on earthquake detection and forecasting by seismometer networks such as the CISN. However, as described in the following section, continuously recording GPS networks can also provide real-time information on large fault displacements that is potentially valuable for EEW, especially in subduction zones such as Cascadia (Hammond et al., 2010). Additional research and development is needed to facilitate the rapid integration of GPS network data with seismometer network data.

Existing Knowledge and Current Capabilities

Three basic seismographic strategies have been developed for earthquake early warning (Allen et al., 2009):

• on-site or single-station warning: predicting the peak shaking from the P wave recorded at the site,

• front detection: detecting strong ground shaking at one location and transmitting a warning ahead of the seismic energy, and

• network-based warnings: using seismic networks to locate and estimate the size of a growing fault rupture.

Research indicates that dense seismometer arrays in the vicinity of shallow hypocenters can determine whether an event will grow into a large earthquake (magnitude > 6) using only several seconds of recorded P-wave data (Allen and Kanamori, 2003; Lancieri and Zollo, 2008). However, whether such measurements saturate above magnitude-7 is an unresolved problem that is related to fundamental issues of earthquake predictability.

Operational EEW systems have been deployed in at least five countries—Japan, Mexico, Romania, Taiwan, and Turkey (see review by Allen et al., 2009). The most highly developed systems are in Japan. Japan Railways began using alarm-seismometers in the 1960s and then front-detection EEW systems in 1982 to shut off power to the Shinkansen bullet trains. An onsite system (Urgent Earthquake Detection and Alarm System, UrEDAS) started operation along the Shinkansen lines in 1992 and was improved after the 1995 Kobe earthquake. The system demonstrated its effectiveness during the magnitude-6.6 Niigata Ken Chuetsu earthquake of 2004, when it issued an alert that stopped a Shinkansen train. Although the train derailed, all but one car remained on the tracks. Japan has also developed a technology for network-based EEW that now provides public alerts (Kamigaichi et al., 2009). The Japan Meteorological Agency (JMA) employs a network of 1,000 seismic instruments to detect earthquakes and predict the intensity of the resulting ground motions. Warnings are sent via TV and radio and go out over public address systems in schools, some shopping malls, and train stations. Alerts of impending shaking are also transmitted via the Internet, satellite, and wireless networks to cell phone users, to desktop computers, and to automated control systems that stop trains, place sensitive equipment in a safe mode, and isolate hazards while the public takes cover (Figure 3.6). Mexico, Taiwan, Istanbul, and Bucharest have active systems providing warning to one or more users.

The finite bandwidth and the dynamic range of current seismometers limit their accuracy in measuring ground displacements near large earthquake ruptures. Complementary information can be obtained from geodetic observations using GPS networks. Continuously monitoring GPS stations can provide total displacement waveforms at sampling intervals on the order of 1 second, which can be used directly to estimate earthquake source parameters (Crowell et al., 2009). This sampling rate is lower than that of the seismic observations, and the noise levels of the GPS data are higher. Because the strengths of the two instrument types are complementary, an integrated network of seismometers and GPS receivers can provide better performance for EEW than either type of instrument alone.

Enabling Requirements

Full implementation of ANSS, as recommended in Task 2, will provide the instrumental platform for the development of EEW systems. As noted above, development of the CISN ShakeAlert prototype is already underway. A fully operational, end-to-end system will require the densification of seismic networks in the likely epicentral regions of large earthquakes, such as along California’s San Andreas Fault, which can be guided by the long-term earthquake rupture forecasts discussed under Task 4. Upgrades to the equipment currently used to record, transmit, and process seismic signals will be necessary to reduce the latencies in the automated broadcasting of alerts. The robustness of the ANSS components such as the CISN will need to be improved through redundant telecommunication paths and software enhancements.

Substantial research and development will be needed on the algorithms used to detect earthquakes in the early stages of fault rupture, to predict future ground motions, and to automatically issue alerts. The basic science requirements are described under Task 1. Particularly important is a better understanding of the earthquake rupture physics, including the processes that govern the nucleation, propagation, and arrest of seismic ruptures. Short-term earthquake rupture forecasts can improve the efficacy of EEW algorithms by adjusting the a priori rupture probabilities to reflect current seismic activity (see Task 5). Automated algorithms will have to recognize and map finite-fault sources, including multi-fault ruptures, in real time.

Large uncertainties in EEW alerts of prospective shaking can arise from uncertainties in the ground motion prediction equations. The ground motion predictions will have to account for three-dimensional geologic structures, particularly near-surface heterogeneities such as sedimentary basins, and to account for rupture propagation effects such as directivity and slip complexity. Physics-based numerical simulations of strong ground motions have the potential for substantially improving these predictions (see Tasks 1 and 12).

EEW algorithms will have to be verified and validated by extensive field-testing, such as that now under way in California. This testing will need to evaluate the quality and consistency of the ground motion predictions, as well as the costs and benefits to potential users. Because of the latter requirement, the design, operation, and testing of EEW systems will have to involve end users.

Implementation Issues

Private-sector service providers will be needed to adapt EEW information for utilization in automated control and response systems. In Japan, private providers offer a variety of services ranging from simple translation of the JMA information into a site-specific predicted intensity and warning time to more sophisticated systems that incorporate local seismometers to provide additional on-site warning. Engineering and construction companies are also using the warning systems both to enhance building performance during earthquakes and to protect construction workers. An effective public-private partnership will be necessary in developing “best practices” for EEW users.

Although there have been limited studies addressing the social science context of earthquake early warning (e.g., Bourque, 2001; Tierney, 2001), implementation of EEW will require additional research to determine optimal ways to interact with the public and a broad education campaign to inform the public about the availability and use of earthquake alerts. The experience of the Japanese (e.g., Figure 3.6) will be useful in this regard.

TASK 4: NATIONAL SEISMIC HAZARD MODEL

The National Seismic Hazard Maps produced by USGS are the authoritative reference for earthquake ground motion hazard in the United States. These maps are the basis of the probabilistic portion of the NEHRP Recommended Provisions, are a resource for the model building codes, and are used in seismic retrofit guidelines, earthquake insurance, land-use planning, and the design of highway bridges, dams, and landfills. They are also used in nationwide earthquake risk and loss assessment and development of credible earthquake scenarios for planning and emergency preparedness.

Proposed Actions

Improved mapping of seismic hazard, at both local and national scales, can reduce the uncertainty in earthquake probabilities and ground motion values and provide a more scientifically credible basis for engineering and policy decision-making. Seismic hazard mapping directly benefits from the advances in earthquake science described in Tasks 1, 2, and 3. Continued interaction between NEHRP researchers and the user-community will also serve to identify new earthquake hazard and risk information products of value to the community.

• Continue the development of National Seismic Hazard Maps. Three focus areas for the next generation of National Seismic Hazard Maps are (a) the improved characterization of faults capable of producing magnitude-6.5 to 7 earthquakes (Category B faults) using field investigations and seismic monitoring, (b) the development of improved ground motion attenuation models for the eastern and central United States, and (c) the development of, and improvements to, numerical ground motion simulations.

• Create hazard maps for urban areas. Expand the Urban Seismic Hazard Mapping program, with the goal of mapping all of the major U.S. urban areas at risk over the next 20 years. Providing greater detail about the geographic distribution of strong ground motion, geologic site conditions, and potential ground failure (fault rupture, landslides, and liquefaction) is a critical component of the earthquake risk applications discussed in Tasks 6 and 7 as well as the building and lifeline guidelines discussed in Tasks 13, 14, and 15. The development of urban seismic hazard maps involves partnerships between state and local agencies, local government, universities, and the NEHRP agencies. Integration of enhanced local hazard information with the national-scale engineering design guidance provided in the National Seismic Hazard Maps will need to be addressed by NEHRP as well as by the standards and code developing organizations. Both the San Francisco, CA, and Evansville, IN, examples described in Chapter 2 provide valuable case histories describing how such partnerships can be established.

Existing Knowledge and Current Capabilities

The current knowledge of earthquakes, active faults, crustal deformation and seismic wave generation/propagation must be integrated and translated into a form that can be used by others in order to be effective in reducing earthquake losses. The National Seismic Hazard Maps and related information products produced by USGS accomplish this critical information transfer.

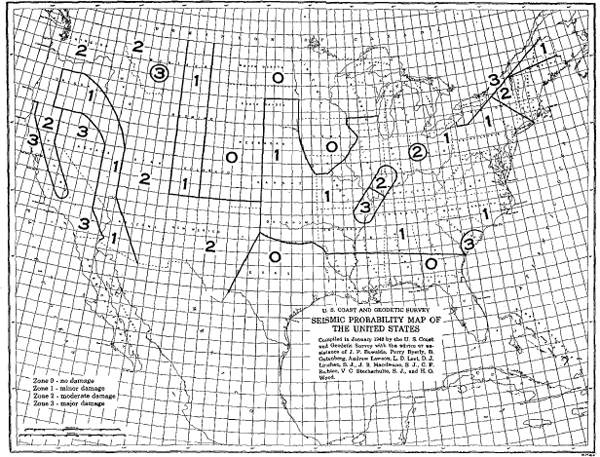

Seismic Hazard Maps

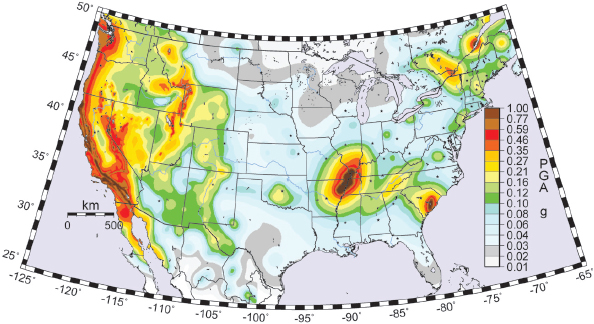

During the past 60 years, the National Seismic Hazard Maps have evolved from a series of broad zones depicting 4 damage levels (none, minor, moderate, and major) based on Modified Mercalli Intensity, a qualitative measure of earthquake shaking (see Figure 3.7; Roberts and Ulrich, 1950; Algermissen, 1969), to the current series of USGS maps that provide earthquake engineering-based parameters such as spectral acceleration (Sa, at multiple periods, 0.1, 0.2, 0.3, 0.5, and 1.0 sec) and Peak Ground Acceleration (PGA) for ~150,000 sites across the country (see Figure 3.8). The current USGS hazard maps are based on a combination of state-of-the-art probabilistic methodology, ANSS earthquake monitoring, and the latest NEHRP research findings that provide a long-term geologic perspective for earthquake activity (Crone and Wheeler, 2000). These hazard maps have been developed through a scientifically defensible and repeatable process that involves input and peer review at both regional and national levels by expert and user communities (Petersen et al., 2008).

FIGURE 3.7 Seismic probability map of the United States in 1950. SOURCE: Roberts and Ulrich (1950). © Seismological Society of America.

FIGURE 3.8 U.S. National Seismic Hazard Map showing Peak Ground Acceleration (PGA) with a 2 percent chance of exceedance in 50 years (or a 2,475-year return period). SOURCE: USGS (2008).
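The correspondence between a probability of exceedance over an exposure time and a return period, as in the caption above, follows if exceedances are assumed to occur as a Poisson process. That assumption is conventional in probabilistic seismic hazard analysis, but it is stated here as an assumption because the text does not spell it out. A minimal sketch, with illustrative function names:

```python
import math


def return_period_years(prob_exceedance, exposure_years):
    """Return period T implied by P = 1 - exp(-t / T), assuming Poisson occurrence."""
    return -exposure_years / math.log(1.0 - prob_exceedance)


def annual_exceedance_probability(return_period):
    """Probability of at least one exceedance in any single year."""
    return 1.0 - math.exp(-1.0 / return_period)


if __name__ == "__main__":
    for prob, years in ((0.02, 50), (0.10, 50)):
        period = return_period_years(prob, years)
        annual = annual_exceedance_probability(period)
        print(f"{prob:.0%} in {years} years: return period of about {period:.0f} years, "
              f"annual probability of about {annual:.2%}")
```

Under the Poisson assumption, 2 percent in 50 years corresponds to a return period of about 2,475 years (roughly a 0.04 percent chance in any year), and 10 percent in 50 years corresponds to about 475 years.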

The USGS National Seismic Hazard Maps are the basis of the probabilistic portion of the NEHRP Recommended Provisions, a resource for the model building codes developed by the Building Seismic Safety Council and published by FEMA (FEMA, 2009b). These design maps are adopted by the International Building Code and national consensus standards such as ASCE-7 Minimum Design Loads for Buildings and Other Structures, ASCE-31 Seismic Evaluation of Existing Buildings, ASCE-41 Seismic Rehabilitation of Existing Buildings, and the NFPA 5000 Building Construction and Safety Code. Through these codes and standards, the National Seismic Hazard Maps affect billions of dollars of construction and represent one of the principal economic benefits of seismic monitoring in the United States (NRC, 2006b). In addition to new construction, they are used in seismic retrofit guidelines, earthquake insurance, land-use planning, and the design of highway bridges (AASHTO, 2009), dams, and landfills. The national maps were used in a nationwide Hazards U.S. (HAZUS) earthquake risk assessment by FEMA (2001, 2008), and provide the basis for developing credible earthquake scenarios for planning and emergency preparedness and earthquake risk and loss assessments in the United States.

Continued NEHRP research has resulted in a new generation of earthquake hazard and risk maps that provide more specific information to support community decision-making. Urban Seismic Hazard Maps address strong ground shaking and ground failure at the community level. Seismic Risk Maps address the earthquake hazard to specific building types.

Urban Seismic Hazard Maps

Urban seismic hazard maps provide the foundation for developing realistic earthquake loss and damage estimates. By incorporating the effects of local geology, probabilistic and scenario earthquake maps provide a credible basis for community stakeholders to identify and prioritize community mitigation activities. Site and soil conditions vary geographically, and regional or local seismic hazard maps are needed to provide a higher spatial resolution to account for these differences and more accurately estimate strong ground motion effects.

A number of successful pilot programs around the United States have demonstrated the value of the NEHRP Urban Seismic Hazards Mapping Program. USGS initiated a program to develop urban seismic hazard maps in 1998 for three pilot areas (San Francisco Bay region; Seattle, WA; Memphis, TN) and has since expanded the program in the central United States (greater St. Louis area; Evansville, IN) and in southern California. These urban seismic hazard mapping programs involve state geological surveys, emergency management organizations, as well as local universities and consulting firms. In southern California, USGS is partnered with the Southern California Earthquake Center.

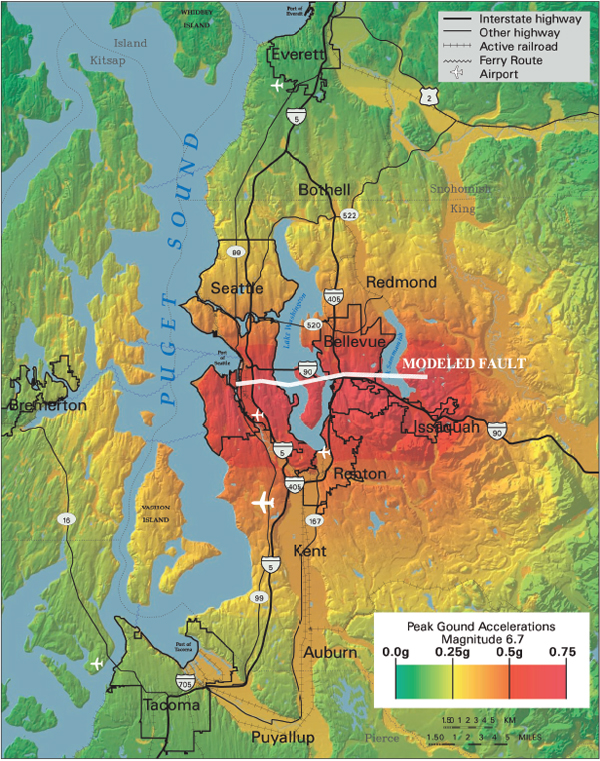

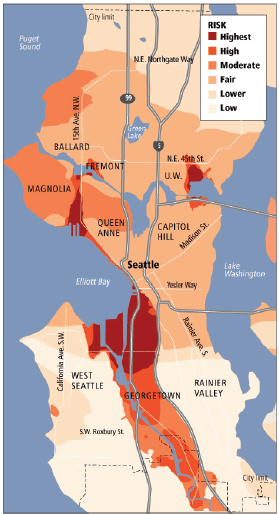

FIGURE 3.9 Seattle Urban Seismic Hazard Map, showing ground motions for the 10 percent chance of exceedance in 50 years (a return period of about 475 years). SOURCE: Hearst Corporation. Available at seattlepi.com/U.S.G.S.

Seismic hazard maps for Seattle, WA, were improved following the magnitude-6.8 Nisqually, WA, earthquake in 2001. These maps provide a high-resolution view of potential ground shaking, which is particularly important because much of Seattle is sited on a sedimentary basin that strongly affects patterns of ground shaking and damage (see Figure 3.9). In the Nisqually earthquake, unreinforced masonry (URM) damage was disproportionately large compared with other building types, with the greatest damage occurring in areas of soft soils. Improved earthquake information for the Seattle area guided elected officials toward policy decisions about the need to mitigate hazards from URM buildings. Seattle is currently considering a URM retrofit ordinance4 that would be the first mandatory retrofit program outside California.

_________________

4 See www.cityofseattle.net/dpd/Emergency/UnreinforcedMasonryBuildings/default.asp.

Seismic Risk Maps

Earthquake risk, expressed as a level of building damage or economic loss, is dependent on both the type of building or structure and the geographic location of the structure with respect to strong ground shaking. Mapping uniform earthquake ground motions (e.g., 2 percent in 50 years, or 1 chance in 2,475 (0.04%) of exceedance in any year) does not necessarily result in identifying a uniform earthquake risk. A new series of earthquake risk maps combines hazard information from the National Seismic Hazard Maps with building fragility curves from FEMA’s HAZUS-Multi-Hazard earthquake loss estimation model to show mean annual frequencies of exceeding different structural damage states (Luco and Karaca, 2007). This type of information is fundamental to seismic risk assessment (see Task 7) and can be used by communities to make risk-informed decisions and identify performance targets for specific building types based on local hazards and local building practices (e.g., 1 percent annual likelihood of collapse). Additionally, integration of this risk-map approach with USGS ShakeMaps would provide emergency responders with accurate “damage maps” for use following an earthquake that affects a risk-mapped urban area.
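The combination of a hazard curve with a fragility curve described above can be sketched numerically. The example below is illustrative only and is not the procedure or data of Luco and Karaca (2007), the National Seismic Hazard Maps, or HAZUS: the power-law hazard curve, the lognormal fragility curve, and every parameter value are hypothetical placeholders chosen to show the form of the calculation.

```python
import math


def hazard_rate(sa_g, k0=1e-4, k=2.5):
    """Hypothetical hazard curve: annual frequency of exceeding spectral acceleration sa_g."""
    return k0 * sa_g ** (-k)


def fragility(sa_g, median_g=1.0, beta=0.6):
    """Hypothetical lognormal fragility: P(damage state reached or exceeded | sa_g)."""
    z = math.log(sa_g / median_g) / beta
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def mean_annual_frequency(sa_min=0.01, sa_max=10.0, n_bins=2000):
    """Sum P(damage | shaking) times the annual rate of shaking in each bin.

    Bins are logarithmically spaced between sa_min and sa_max (both in g).
    """
    ratio = (sa_max / sa_min) ** (1.0 / n_bins)
    total = 0.0
    lower = sa_min
    for _ in range(n_bins):
        upper = lower * ratio
        midpoint = math.sqrt(lower * upper)
        # Annual rate of ground motions falling between lower and upper.
        rate_in_bin = hazard_rate(lower) - hazard_rate(upper)
        total += fragility(midpoint) * rate_in_bin
        lower = upper
    return total


if __name__ == "__main__":
    frequency = mean_annual_frequency()
    prob_50yr = 1.0 - math.exp(-50.0 * frequency)
    print(f"Mean annual frequency of exceeding the damage state: {frequency:.2e}")
    print(f"Probability of at least one exceedance in 50 years: {prob_50yr:.1%}")
```

Because the result depends on the fragility curve as well as the hazard curve, two building types at the same site, or the same building type at two sites with equal 2-percent-in-50-year ground motions but differently shaped hazard curves, can carry different risk. This is the sense in which uniform hazard does not imply uniform risk.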

Enabling Requirements

The National Seismic Hazard Maps integrate knowledge of earthquakes, historic earthquakes, active faults, crustal deformation, and seismic wave generation/propagation. The availability of on-line design and analysis tools has enabled engineers and earth science professionals to determine ground motion values for specific building codes as well as create customized hazard maps. The scientific credibility of these maps is based on basic geologic and seismologic research that includes:

Earthquake Monitoring

The National Seismic Hazard Maps use the basic earthquake data collected by ANSS, and, as discussed above under Task 2, ANSS is the “backbone” of seismology research in NEHRP.

Geologic Research

NEHRP-supported paleoseismic research has provided the necessary long-term geologic constraints on earthquake activity to validate probabilistic seismic hazard assessments. Paleoseismic information for major fault systems capable of producing earthquakes with magnitude > 7 (Category A faults such as the San Andreas, San Jacinto, Elsinore, Imperial, and Rodgers Creek) has been well developed during the past 30 years. These techniques need to be extended to other faults lacking sufficient paleoseismic data to constrain their recurrence intervals (defined as Category B faults). Recent examples of destructive earthquakes occurring on Category B faults include the 1971 San Fernando, CA (magnitude-6.7), and 1994 Northridge, CA (magnitude-6.7), events. In areas where time-dependent models of earthquake activity may be more appropriate, paleoseismic research on the variability of inter-event times can help identify aleatory uncertainties and help improve the overall resolution of earthquake hazard estimates.

Wave Propagation

Better ground motion attenuation models help improve structural design and construction. The introduction of the Next Generation Attenuation (NGA) models into the 2008 hazard maps (Petersen et al., 2008) modified ground motion values in many areas of the United States, significantly changing earthquake damage and loss estimates. Continued improvement of attenuation relations for the central and eastern United States through the use of physics-based numerical simulations (see discussion in Task 1) can advance understanding of earthquake effects on the built environment and help reduce uncertainties in areas of infrequent seismicity. Significant improvements to the empirical attenuation relations may be possible through the use of numerical simulations of ground motions that incorporate realistic models of source dynamics and three-dimensional geological structure (see Figure 3.1).

Site Conditions

Active geotechnical research and mapping programs by federal, state, and local agencies, universities, and consultants continue to improve our knowledge of subsurface and geologic site effects at the community scale. The COSMOS Geotechnical Virtual Data Center5 (Swift et al., 2004), for example, provides a distributed system for archiving and web dissemination of geotechnical data collected and stored by various agencies and organizations.

Coordination

Seismic hazard products developed by the states and university groups need to be coordinated with national maps through national and regional peer review processes to provide nationally consistent information to users. One example is the coordination of the UCERF2 seismic hazard study maps for California with the USGS National Seismic Hazard Mapping Program (WGCEP, 2008).

_________________

5 See www.cosmos-eq.org/.

Implementation Issues

As discussed in Tasks 14 and 15, predictive models of ground shaking and deformation are required for performance-based earthquake engineering. Yet, in many areas, these types of models still exhibit large uncertainties. In those regions of our nation where earthquake data are sparse or nonexistent, earthquake-physics simulations should be used to build or augment the dataset. Continued deployment of ANSS in urban environments to collect strong motion recordings and site response information is essential to validate these simulation models. Systematic expansion of hazard mapping products and the development of national- and local-scale hazard maps for liquefaction (including lateral spreading and settlement), surface fault rupture, and landslide potential are needed to complement the maps already available for ground shaking.

Although the adoption of the USGS National Seismic Hazard Maps into the model building codes is a major NEHRP success story, the actual implementation and enforcement of these codes remain a community choice. A clearer understanding by community policy-makers and stakeholders of the role that both the National Seismic Hazard Maps and the building codes play in community safety is essential for the development of earthquake-resilient communities.

TASK 5: OPERATIONAL EARTHQUAKE FORECASTING

With the current state of scientific knowledge, individual large earthquakes cannot be reliably predicted in future intervals of years or less; i.e., “deterministic” earthquake prediction is not yet possible. Nevertheless, the public needs up-to-date information about the likelihood of future events, especially following widely felt earthquakes, even if the probabilities of a strong earthquake are too small to warrant high-cost preparedness actions such as mass evacuations. The goal of operational earthquake forecasting is to provide communities with authoritative information on how seismic hazards change with time, including a consistent set of earthquake forecasts that range from the long term (centuries to decades) to the short term (hours to weeks) (Jordan et al., 2009; Jordan and Jones, 2010).

Seismic hazards are known to change on short timescales, because earthquake occurrences suddenly alter the conditions within the fault system that lead to future earthquakes. One earthquake can trigger others nearby; the probability of such triggering increases with the initial shock’s magnitude and decays with elapsed time according to simple (and nearly universal) scaling laws. Statistical models of earthquake triggering can explain much of the observed spatio-temporal clustering in seismicity catalogs, such as aftershocks, and the models can be used to construct forecasts that estimate future earthquake probabilities based on prior seismic activity. These short-term models have demonstrated significant skill in forecasting future earthquakes; the probability gain factors achieved in several-day intervals can range up to 100-1,000 relative to the long-term forecasts typically used in hazard estimation described under Task 4. However, although these gain factors can be high, the forecasting probabilities for large earthquakes usually remain low in an absolute sense, rarely reaching more than a few percent for intervals of a week or less.
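One common way to express such scaling laws, offered here only as an illustrative sketch, combines a modified Omori decay in time with Gutenberg-Richter scaling in magnitude, in the form used by Reasenberg and Jones (1989). The parameter values below are the generic California values published in that study; they are an assumption brought in for illustration, not values given in this report, and the background rate of one magnitude-6 event per 50 years is purely hypothetical.

```python
import math

# Generic California parameters from Reasenberg and Jones (1989).
# Assumption for illustration; not taken from this report.
A, B, C, P = -1.67, 0.91, 0.05, 1.08   # C is in days

def expected_count(mainshock_mag, min_mag, start_day, end_day):
    """Expected number of triggered events with magnitude >= min_mag between
    start_day and end_day after the mainshock (time integral of the rate
    10**(A + B*(mainshock_mag - min_mag)) * (t + C)**(-P))."""
    productivity = 10.0 ** (A + B * (mainshock_mag - min_mag))
    q = 1.0 - P
    return productivity * ((end_day + C) ** q - (start_day + C) ** q) / q

def triggering_probability(mainshock_mag, min_mag, start_day, end_day):
    """Probability of at least one such event, assuming Poisson occurrence."""
    return 1.0 - math.exp(-expected_count(mainshock_mag, min_mag, start_day, end_day))

def probability_gain(short_term_prob, background_rate_per_day, days):
    """Ratio of the short-term probability to the long-term (background)
    probability over a window of the same length."""
    long_term_prob = 1.0 - math.exp(-background_rate_per_day * days)
    return short_term_prob / long_term_prob

# Chance of a magnitude >= 6 event in the week after a magnitude-5 shock.
first_week = triggering_probability(5.0, 6.0, 0.0, 7.0)
# Same window length, starting one month later: the probability has decayed.
later_week = triggering_probability(5.0, 6.0, 30.0, 37.0)
# Hypothetical background: one magnitude >= 6 event per 50 years.
gain = probability_gain(first_week, 1.0 / (50.0 * 365.25), 7.0)

print(f"first week: {first_week:.3%}, one month later: {later_week:.3%}")
print(f"probability gain in first week: {gain:.0f}")
```

With these assumed values the first-week probability is on the order of 1 percent while the gain over the hypothetical background is more than tenfold, which illustrates how a large probability gain can coexist with a low absolute probability.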

Nevertheless, short-term forecasts, properly applied, can be used to improve resilience. Authoritative statements about the increase in seismic hazard following a significant earthquake allow emergency management agencies, as well as the population at large, to anticipate aftershocks. Such advisories also fulfill the public’s need for current information during periods of anomalous seismic activity, which can help to reduce the concern about amateur predictions and rumors that overly inflate the hazard.

Under the Stafford Act (P.L. 93-288), USGS has the federal responsibility for earthquake monitoring and forecasting. Its National Earthquake Prediction Evaluation Council (NEPEC) provides advice and recommendations on earthquake forecasts and related scientific research to the USGS director, in support of the director’s delegated responsibility to issue timely warnings of potential geologic disasters. Thus far, USGS and NEPEC have not established protocols for operational forecasting on a national level.

Proposed Actions

USGS should develop a national plan, coordinated with state and local agencies, for the implementation of operational earthquake forecasting. In formulating the plan, USGS should consider the following elements:

• Support for research. Through its internal research program and external grants program, USGS should continue to support research on the scientific understanding of earthquakes and earthquake predictability.

• Coordination of earthquake information. USGS should continue to coordinate across federal and state agencies to improve the flow of earthquake information, particularly the real-time processing of seismic and geodetic data and the timely production of high-quality earthquake catalogs. Full support of ANSS operations will allow substantial improvements in the real-time seismic information needed for short-term forecasting.

• Development of operational systems. USGS should support the development of earthquake forecasting methods—based on seismicity changes detected by ANSS—to quantify short-term probability variations, and it should deploy the infrastructure and expertise needed to utilize this probabilistic information for operational purposes. Working with local agencies, USGS should provide the public with authoritative, scientific information about the short-term probabilities of future earthquakes. The source of this information needs to properly convey the epistemic uncertainties in these forecasts.

• Operational qualification of forecasts. All operational procedures involved with the creation, delivery, and utility of forecasts should be rigorously reviewed by experts. Earthquake forecasting procedures should be qualified for usage according to the three criteria commonly applied in weather forecasting (Jordan and Jones, 2010): they should display quality, a good correspondence between the forecasts and actual earthquake behavior; consistency, compatibility among procedures used at different spatial or temporal scales; and value, realizable benefits (relative to costs incurred) by individuals or organizations who use the forecasts to guide their choices among alternative courses of action.

o Operational forecasts should incorporate the results of validated short-term seismicity models that are consistent with the authoritative long-term forecasts and demonstrate reliability (correspondence to observations collected over many trials) and skill (performance relative to the long-term forecast).

o Verification of reliability and skill requires objective evaluation of how well the forecasting model corresponds to data collected after the forecast has been made (prospective testing), as well as checks against data previously recorded (retrospective testing). All operational models should be subject to continuous prospective testing against established long-term forecasts and a wide variety of alternative, time-dependent models.

o Experience has shown that such evaluations are most diagnostic when the testing procedures conform to rigorous standards, and the prospective testing is blind (Field et al., 2007). In this regard, advantage can be taken of the Collaboratory for the Study of Earthquake Predictability (CSEP),6 which has begun to establish standards and an international infrastructure for the comparative, prospective testing of earthquake forecasting models (Zechar et al., 2010). Regional experiments are now under way in California, New Zealand, Japan, and Italy, and will soon be started in China; a program for global testing has also been initiated.

_________________

6 See www.cseptesting.org/.

o Continuous testing in a variety of tectonic environments will be critical for demonstrating the reliability and skill of the operational forecasts, and for quantifying their uncertainties. At present, seismicity-based forecasts can display order-of-magnitude differences in probability gain, depending on the methodology, and there remain substantial issues about how to assimilate the data from ongoing seismic sequences into the models.

• Assessment of forecast utility. Most previous work on the public utility of earthquake forecasts has anticipated that they would deliver high probabilities of large earthquakes, i.e., deterministic predictions would be possible. This expectation has not been realized. Current forecasting policies need to be adapted to applications in a “low-probability environment”—one in which earthquake forecasting probabilities can vary by several orders of magnitude, but remain low in an absolute sense (< 10 percent in the short term).
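The notion of skill used in the qualification criteria above (performance relative to the long-term forecast) can be made concrete with a small sketch. It assumes that each forecast gives an expected number of earthquakes per space-time bin and that counts in each bin are Poisson distributed, then scores a forecast by its joint log-likelihood and compares two forecasts by the information gain per earthquake. The function names and the four-bin data are hypothetical, and this is a simplified illustration rather than the testing procedure of any particular center.

```python
import math

def poisson_log_likelihood(forecast_rates, observed_counts):
    """Joint log-likelihood of the observed counts, treating each bin as an
    independent Poisson variable. All forecast rates must be positive."""
    total = 0.0
    for rate, count in zip(forecast_rates, observed_counts):
        total += -rate + count * math.log(rate) - math.lgamma(count + 1)
    return total

def information_gain_per_event(model_rates, reference_rates, observed_counts):
    """Log-likelihood difference between model and reference, per observed
    earthquake. Positive values mean the model outperformed the reference."""
    n_events = sum(observed_counts)
    difference = (poisson_log_likelihood(model_rates, observed_counts)
                  - poisson_log_likelihood(reference_rates, observed_counts))
    return difference / n_events

# Hypothetical four-bin experiment with one earthquake, in the first bin.
observed = [1, 0, 0, 0]
long_term = [0.01, 0.01, 0.01, 0.01]     # time-independent reference
short_term = [0.2, 0.01, 0.005, 0.005]   # model raised the rate in bin 1

gain = information_gain_per_event(short_term, long_term, observed)
print(f"information gain per event: {gain:.2f}")
print(f"equivalent probability gain: {math.exp(gain):.1f}")
```

A single experiment of this kind says little on its own; reliability and skill emerge only from many prospective trials, which is why continuous testing is emphasized.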

The implementation of operational earthquake forecasting will enable cost-effective measures to reduce earthquake impacts on individuals, the built environment, and society-at-large—Goal B in NIST (2008). A national plan for operational forecasting will address NEHRP Objective 5 (assess earthquake hazards for research and practical application), and it will provide new information tools for Goal C (improve the earthquake resilience of communities nationwide), particularly for Objective 9 (improve the accuracy, timeliness, and content of earthquake information products).

Existing Knowledge and Current Capabilities

Existing knowledge and capabilities in earthquake forecasting and prediction are the subject of an extensive recent review by the International Commission on Earthquake Forecasting (ICEF), which was convened by the Italian government following the magnitude-6.3 L’Aquila earthquake of April 6, 2009 (Jordan et al., 2009). The statements in this section are based on this review.