Wastewater Reclamation Technology

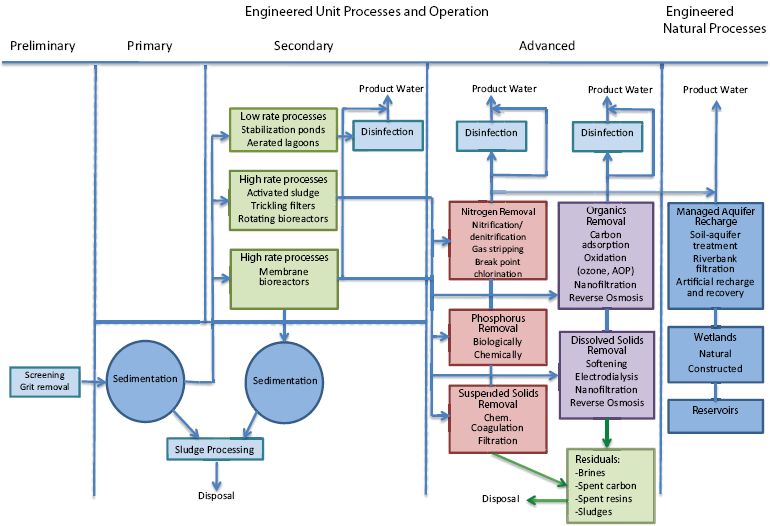

Treatment processes in wastewater reclamation are employed either singly or in combination to achieve reclaimed water quality goals. Considering the key unit processes and operations commonly used in water reclamation (see Figure 4-1), an almost endless number of treatment process flow diagrams can be developed to meet the water quality requirements of a certain reuse application.

Many factors may affect the choice of water reclamation technology. Key factors include the type of water reuse application, reclaimed water quality objectives, the wastewater characteristics of the source water, compatibility with existing conditions, process flexibility, operating and maintenance requirements, energy and chemical requirements, personnel and staffing requirements, residual disposal options, and environmental constraints (Asano et al., 2007). Decisions on treatment design are also influenced by water rights, economics, institutional issues, and public confidence. The relative importance of some of these factors is likely to change in the future. With the current desire to limit greenhouse gas emissions and the introduction of carbon taxes, energy-intensive processes will likely be viewed much less favorably than they are today. This chapter focuses on treatment processes (characterized as preliminary, primary, secondary, and advanced, and including both natural and engineered processes) that can be used to meet the water quality objectives of a reuse project, and on their treatment effectiveness. The efficiency in removing certain constituent classes, energy requirements, residual generation, and costs of these treatment processes are qualitatively summarized in Table 4-1. Economic, social, and institutional considerations that also influence the choice of reclamation technologies are addressed in Chapters 9 and 10.

PRELIMINARY, PRIMARY, AND SECONDARY TREATMENT

Wastewater treatment in the United States typically includes preliminary treatment steps in addition to primary and secondary treatment. Preliminary steps include measuring the flow coming into the plant, screening out large solid materials, and grit removal to protect equipment against unnecessary wear. Primary treatment targets settleable matter and scum that floats to the surface. As shown in Table 2-1, only 1.3 percent of wastewater treatment plant effluents in the United States are discharged after receiving less than secondary treatment because of site-specific waivers (EPA, 2008b).

Secondary treatment processes are employed to remove total suspended solids, dissolved organic matter (measured as biochemical oxygen demand), and, with increasing frequency, nutrients. Secondary treatment processes usually consist of aerated activated sludge basins with return activated sludge or fixed-media filters with recycle flow (e.g., trickling filters; rotating biocontactors), followed by final solids separation via settling or membrane filtration and disinfection (Figure 4-1) (Tchobanoglous et al., 2002).

Advances over the past 20 years in membrane bioreactor (MBR) technologies have resulted in an alternative to conventional activated sludge processes that does not require primary treatment and secondary sedimentation (LeClech et al., 2006). Instead, raw wastewater can be directly applied to a bioreactor with submerged microfiltration or ultrafiltration membranes. These applications may only employ a fine screen as a preliminary treatment step. MBR processes combine the advantages of complete solids removal, a significant disinfection capability, high-rate and high-efficiency organic and nutrient removal, and a small footprint (Stephenson et al., 2000). In the past 10 years, reductions in the cost of membrane modules, extended life expectancy of the membranes, and advances in process design and operation have resulted in many domestic and industrial applications using MBRs. Their integrated design, which can be scaled down more easily than conventional secondary treatment processes, can facilitate decentralized water reclamation. However, membrane fouling and its consequences regarding plant maintenance and operating costs limit the widespread application of MBRs (LeClech et al., 2006; van Nieuwenhuijzen et al., 2008). Challenges that require research relate to maintaining productivity (or flux, i.e., the amount of water produced per membrane area) and minimizing the effects of membrane fouling. Other MBR research needs include the effluent quality that can be achieved and improvements in oxygen transfer and membrane aeration to lower operational costs of MBRs (van Nieuwenhuijzen et al., 2008).

FIGURE 4-1 Treatment processes commonly used in water reclamation. Note that some or all of the numerous steps represented under advanced processes may be employed, depending on the end-product water quality desired and whether engineered natural processes are also used. Not all possible combinations are displayed here.

In the United States, 45 percent of wastewater treatment plant effluent as of 2004 received only primary and secondary treatment (see Table 2-1). EPA (2008b) reported that 49 percent of all wastewater treatment plant effluent received “greater than secondary” treatment. This could include MBR treatment or any combination of the treatment processes described in the following sections.

TABLE 4-1 Treatment Processes and Efficiencies to Remove Constituents of Concern during Water Reclamation

| Process | Pathogens: Protozoa | Pathogens: Bacteria | Pathogens: Viruses | Nitrate | TDS | Boron | Bromate and Chlorate | Metals | DBPs | Trace Organics: Nonpolar | Trace Organics: Polar | Energy Requirements | Residual Generationᵃ | Cost |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Engineered Systems: Physical | | | | | | | | | | | | | | |
| Filtration | Moderate | Moderate | Low | None | None | None | None | Low | None | None | None | Low | Low | Low |
| PAC/GAC | Low | Low | Low | None | None | None | Low | Low | Moderate | High | Low | Low | Low | Moderate |
| MF/UF | High | Moderate | Low | None | None | None | Low | Low | Low | Low | None | Moderate | Low | Moderate |
| NF/RO | High | High | High | High | High | Moderate | High | High | Moderate | High | High | High | High | High |
| Engineered Systems: Chemical | | | | | | | | | | | | | | |
| Chloramine | Low | Moderate | Low | None | None | None | None | None | None | None | None | Low | None | Low |
| Chlorine | Moderate | High | High | None | None | None | None | None | None | Low to moderate | Low to moderate | Low | None | Low |
| Ozone | Moderate | High | High | None | None | None | None | None | Low | High | High | High | None | High |
| UV | High | High | Moderate | None | None | None | None | None | None | None | None | Moderate | None | Low |
| UV/H2O2 | High | High | High | None | None | None | None | None | Low | High | High | High | None | High |
| Engineered Systems: Biological | | | | | | | | | | | | | | |
| BAC | Low | Low | Low | None to low | None | None | Low | Low | Low to moderate | Moderate | Moderate | Low | None to low | Low |
| Natural Systems | | | | | | | | | | | | | | |
| SAT | High | High | Moderate | High | None | None | Low to moderate | High | High | High | Moderate to high | Low | None | Low |
| Riverbank Filtration | High | High | Moderate | High | None | None | Low to moderate | High | High | High | Moderate to high | Low | None | Low |
| Direct injection | Moderate | Low | Low | Low | None | None | Low to moderate | High | Moderate | Low | None | Moderate | None | Low to moderateᵇ |
| ASR | Moderate | Moderate | Moderate | Moderate | None | None | Low | High | Moderate | Moderate | Low to moderate | Low | None | Low |
| Wetlands | Low to moderate | Low to moderate | Low | Moderate | None | None | Low | Moderate to high | Low | Low to moderate | Low | Low | None | Low |
| Reservoirs | Low to moderate | Low | Low | Low to moderate | None | None | Low | Moderate to high | Low | Low | Low | Low | None | Low |

NOTE: The qualitative values in the table represent the consensus best professional judgment of the committee.

ᵃ Low represents little generation of residuals; high represents significant amounts of residual generation.

ᵇ High when required pretreatment is considered.

Disinfection processes are those that are deliberately designed for the reduction of pathogens. Pathogens generally targeted for reduction are bacteria (e.g., Salmonella, Shigella), viruses (e.g., norovirus, adenovirus), and protozoa (e.g., Giardia, Cryptosporidium) (see also Chapter 3).

Common agents used for disinfection in wastewater reclamation are chlorine (applied as gaseous chlorine or liquid hypochlorite) and ultraviolet (UV) irradiation. Only chlorine is purchased as a chemical in commerce. Chlorine dioxide, ozone, and UV are generated on-site. In drinking water applications, chlorine and hypochlorite remain the most common disinfectants, although they are decreasing in prevalence (Table 4-2). Chloramines are formed from either chlorine or hypochlorite if appropriate amounts of ammonia are present (as in wastewater) or if ammonia is deliberately added. Although chlorine or hypochlorites are still the most prevalent disinfection processes used in wastewater applications, UV is much more common and chlorine dioxide and ozone are less common than in drinking water applications (Asano et al., 2007). Membrane processes can also remove many pathogens, although they are not considered reliable stand-alone methods for disinfection, as discussed later in this chapter.

TABLE 4-2 Drinking Water Disinfection Practices According to 1998 and 2007 AWWA Surveys

| Disinfectant | Utilities Using in 1998 (%) | Utilities Using in 2007 (%) |
| --- | --- | --- |
| Chlorine gas | 70 | 61 |
| Chloramines | 11 | 30 |
| Sodium hypochlorite | 22 | 31 |
| Onsite generation of hypochlorite | 2 | 8 |
| Calcium hypochlorite | 4 | 8 |
| Chlorine dioxide | 4 | 8 |
| Ozone | 2 | 9 |
| UV | 0 | 2 |

NOTE: Percentages sum to more than 100 because some utilities use multiple disinfectants.

SOURCE: AWWA Disinfection Systems Committee (2008); AWWA Water Quality Division Disinfection Systems Committee (2000).

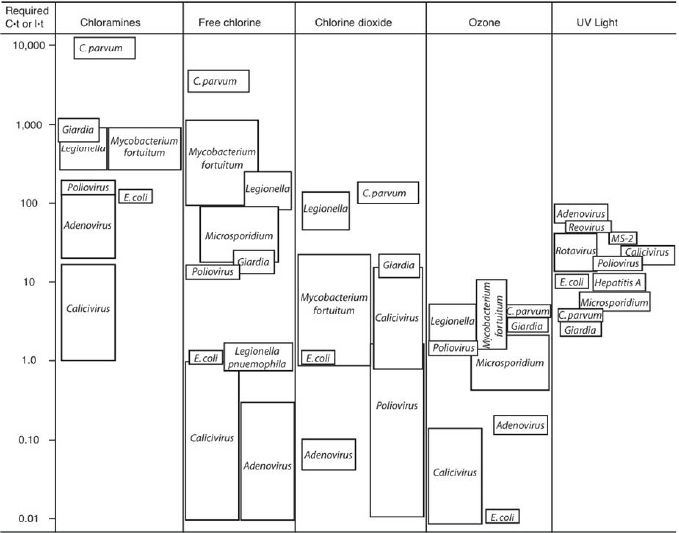

The effectiveness of each of the disinfectants against pathogens is a function of the amount of disinfectant added, the contact time provided, and water quality variables that may compete for the disinfectant or modulate its effectiveness. Once decay (or in the case of UV, absorbance of energy) is taken into account, a first approximation to effectiveness is the product of residual concentration (C) (or in the case of UV delivered power intensity [I]) and contact time (t). There is a relationship between the C·t “product” (actually integrated over the contact time of a disinfection reactor, taking into account hydraulic imperfections) and the degree of microbial inactivation. This concept is schematically illustrated in Figure 4-2.
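As a rough illustration of the C·t concept described above, the sketch below compares the simple product of a constant residual and contact time with an integrated C·t for a residual that decays during contact. The first-order decay law, the rate constant, and the function names are assumptions made for illustration only; the text does not specify a decay model, and the sketch ignores the hydraulic imperfections mentioned above (it treats the contactor as ideal plug flow).

```python
import math


def ct_constant_residual(c_mg_per_l: float, t_min: float) -> float:
    """C·t (mg·min/L) when the residual is assumed constant over the contact time."""
    return c_mg_per_l * t_min


def ct_first_order_decay(c0_mg_per_l: float, k_per_min: float, t_min: float) -> float:
    """Integrated C·t (mg·min/L) assuming C(t) = C0 * exp(-k * t).

    The first-order decay law is an illustrative assumption, not a model
    taken from the source text.
    """
    if k_per_min == 0.0:
        # No decay: the integral reduces to the simple product.
        return c0_mg_per_l * t_min
    # Closed-form integral of C0 * exp(-k * t) from 0 to t_min.
    return c0_mg_per_l * (1.0 - math.exp(-k_per_min * t_min)) / k_per_min


if __name__ == "__main__":
    # Hypothetical values: 2 mg/L initial residual, 30 min contact, k = 0.05 per min.
    print(ct_constant_residual(2.0, 30.0))
    print(ct_first_order_decay(2.0, 0.05, 30.0))
```

With decay, the integrated C·t is always lower than the product of the initial concentration and the contact time, which is why the residual (rather than the applied dose) is used in the approximation.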

The relationships between C·t and microbial inactivation may be affected by water quality (e.g., temperature, turbidity, pH). For chlorine in particular, there is a strong effect of pH, with disinfection being more effective below pH 7.6 (when hypochlorous acid [HOCl] predominates) than above pH 7.6 (where hypochlorite [OCl–] predominates) (Fair et al., 1948). The impact of turbidity on disinfection has been known for a long time and is particularly problematic in disinfection of wastewater effluents (Hejkal et al., 1979). However, in drinking water, when the turbidity is <1 turbidity unit (TU), the effect of turbidity on disinfection is minimal (LeChevallier et al., 1981). This has also been confirmed on experiments with actual waters, demonstrating that 0.45-µm filtration had minimal effect on disinfection of water (by chlorine or chlorine dioxide) in waters with initial turbidity <2 TU (Barbeau et al., 2005). Other water quality factors, the nature of which remains unknown, may modulate disinfection effectiveness for both chlorine (Haas et al., 1996) and ozone (Finch et al., 2001). It should also be noted that disinfection and the competing decay and demand processes are nonlinear. Therefore, a more detailed consideration of these nonlinearities as coupled to hydraulics is needed for a full engineering design (Bellamy et al., 1998; Bartrand et al., 2009).
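The pH effect on chlorine can be made concrete with the acid-base equilibrium between HOCl and OCl–. The sketch below is a minimal illustration that assumes a dissociation constant (pKa) of 7.6, chosen only to match the crossover pH quoted in the text; the actual value varies with temperature, and the function name is hypothetical.

```python
def hocl_fraction(ph: float, pka: float = 7.6) -> float:
    """Fraction of free chlorine present as HOCl at a given pH.

    Derived from the equilibrium HOCl <-> H+ + OCl-.
    pka = 7.6 is an assumed value matching the crossover pH in the text.
    """
    return 1.0 / (1.0 + 10.0 ** (ph - pka))


if __name__ == "__main__":
    for ph in (6.6, 7.6, 8.6):
        print(f"pH {ph}: {hocl_fraction(ph):.2f} of free chlorine as HOCl")
```

Under this assumption, roughly 90 percent of the free chlorine is HOCl one pH unit below the pKa and roughly 10 percent one unit above it, which is consistent with the stronger disinfection observed at lower pH.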

In general, in most disinfection approaches except UV, bacteria are more easily disinfected (lower required C·t) than viruses, which are in turn more easily disinfected than protozoa. With UV, protozoa are somewhat more sensitive than viruses (particularly adenovirus, the most UV-resistant class of viruses) (Jacangelo et al., 2002).

FIGURE 4-2 Required “C·t” or “I·t” for 99 percent inactivation of different organisms by different disinfectants at pH 7, 20-25 °C.

SOURCE: Crittenden et al. (2005).

Chemical disinfectants (i.e., chlorine, ozone, chlorine dioxide) are known to produce characteristic disinfection byproducts (Minear and Amy, 1996; see also Chapter 3). The spectrum of these will not be reviewed in this report, but in general, chlorine and ozone can react with organic materials to produce stable disinfection byproducts (which may or may not be halogenated). For chlorine, these include trihalomethanes, trihaloacetic acids, haloaldehydes, and haloamines. Ozone can react with bromide that may be present to produce bromine and, in turn, brominated byproducts, including bromate. Chlorine dioxide can produce chlorite and chlorate, and depending on the mode of production of chlorine dioxide, chlorine may also be present, which can produce disinfection byproducts analogous to those produced by chlorination (Tibbets, 1995; Richardson et al., 1994; van Nieuwenhuijsen et al., 2000; Hua and Reckhow, 2007).

Advanced engineered unit processes and operations can be grouped into engineering systems targeting the removal of nutrients and organic constituents, reduction of total dissolved solids (TDS) or salinity, and provision of additional treatment barriers to pathogens (Figure 4-1). Nutrients can be reduced by biological nitrification/denitrification processes, gas stripping, breakpoint chlorination, and chemical precipitation. Organic constituents can be further removed by various advanced processes, including activated carbon, chemical oxidation (ozone, advanced oxidation processes [AOPs]), nanofiltration (NF), and reverse osmosis (RO). Dissolved solids are retained during softening, electrodialysis, NF, and RO. Various processes can be combined to produce the desired effluent water quality depending on the reuse requirements, source water quality, waste disposal considerations, treatment cost, and energy needs.

Nutrient Removal

Nutrient removal is often required in reuse applications where streamflow augmentation or groundwater recharge is practiced to prevent eutrophication or nitrate contamination of shallow groundwater. Nutrient removal can be either an integral part of the secondary biological treatment system or an add-on process to an existing conventional treatment scheme.

All of the biological processes for nitrogen removal include an aerobic zone in which biological nitrification occurs. An anoxic zone and proper retention time are then provided to allow biological denitrification (conversion to nitrogen gas), which reduces nitrate concentrations to less than 8 mg N/L, as illustrated in Table 3-2 (Tchobanoglous et al., 2002). Gas stripping for removal of ammonia or breakpoint chlorination as the primary means for nitrogen removal is not commonly employed in wastewater reclamation applications in the United States.

To accomplish biological phosphorus removal via phosphorus-storing bacteria, a sequence of an anaerobic zone followed by an aerobic zone is required (for more detailed information see Tchobanoglous et al., 2002). Phosphorus removal can also be achieved by chemical precipitation by adding metal salts (e.g., Ca(II), Al(III), Fe(III)) with a subsequent filtration following the activated sludge system. Although chemical precipitation for phosphorus removal is practiced in many water reclamation facilities, biological phosphorus removal requires no chemical input. Biological phosphorus removal, however, requires a dedicated anaerobic zone and modifications to the activated sludge process, which usually is more costly during a plant retrofit than an upgrade to chemical precipitation. A biological phosphorus removal process is also more challenging to control and maintain because it depends upon a more consistent feedwater quality and steady operational conditions. Biological and chemical phosphorus removal can result in effluent concentrations of less than 0.5 mg P/L (see Table 3-2).

Suspended Solids Removal

Filtration is a key unit operation in water reclamation, providing a separation of suspended and colloidal particles, including microorganisms, from water. The three main purposes of filtration are to (1) allow a more effective disinfection; (2) provide pretreatment for subsequent advanced treatment steps, such as carbon adsorption, membrane filtration, or chemical oxidation; and (3) remove chemically precipitated phosphorus (Asano et al., 2007). Filtration operations most commonly used in water reclamation are depth, surface, and membrane filtration.

Depth filtration is the most common method used for the filtration of wastewater effluents in water reclamation. In addition to providing supplemental removal of suspended solids including any sorbed contaminants, depth filtration is especially important as a conditioning step for effective disinfection. At larger reuse facilities (>1,000 m3/d or >4 MGD), mono- and dual-media filters are most commonly used for wastewater filtration with gravity or pressure as the driving force. Both mono- and dual-media filters using sand and anthracite have typical filtration rates between 2,900 and 8,600 gal/ft2 per day (4,900–14,600 L/m2 per hour) while achieving effluent turbidities between 0.3 and 4 nephelometric turbidity units (NTU). Because large plants with many filters usually do not practice wasting of the initial filtrate after backwash (filter-to-waste), effluent qualities with elevated initial turbidity are commonly observed, and as a consequence, the overall effluent quality can be less consistent in granular media filtration plants compared with reclaimed water provided by a membrane filtration plant.

As an alternative to depth filtration, surface filtration can be used as pretreatment for membrane filtration or UV disinfection. In surface filtration, particulate matter is removed by mechanical sieving as water passes through thin filter material composed of cloth fabrics of different weaves, woven metal fabrics, or a variety of synthetic materials with openings from 10 to 30 µm or larger. Surface filters can be operated at higher filtration rates (3,600–30,000 gal/ft2 per day; 6,100–51,000 L/m2 per hour) while achieving lower effluent turbidities than conventional sand filters.

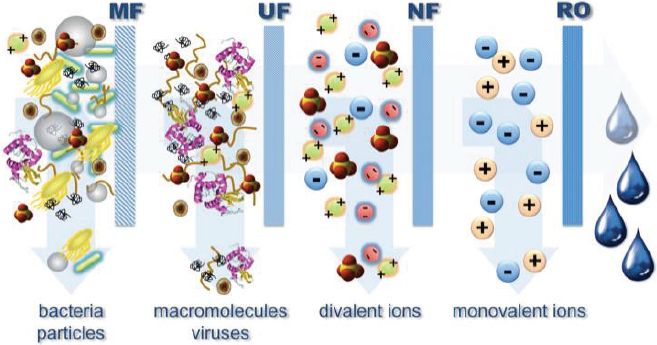

Membrane filters, such as microfiltration (MF) and ultrafiltration (UF), are also surface filtration devices, but they exhibit pore sizes in the range from 0.08 to 2 µm for MF and 0.005 to 0.2 µm for UF. In addition to removing suspended matter, MF and UF can remove large organic molecules, large colloidal particles, and many microorganisms. The advantages of membrane filtration as compared with conventional filtration are the smaller space requirements, reduced labor requirements, ease of process automation, more effective pathogen removal (in particular with respect to protozoa and bacteria), and potentially reduced chemical demand. An additional advantage is the generation of a consistent effluent quality with respect to suspended matter and pathogens. This treatment usually results in effluent turbidities well below 1 NTU (Asano et al., 2007). The drawbacks of this technology are potentially higher capital costs, the limited life span of membranes requiring replacement, the complexity of the operation, and the potential for irreversible membrane fouling that reduces productivity. Unlike robust conventional media filters, membrane systems require a higher degree of maintenance and strategies directed to achieve optimal performance. More detail about MF and UF membranes and their operation in reuse applications is provided in the following sections as well as in Asano et al. (2007).

Removal of Organic Matter and Trace Organic Chemicals

The following sections describe processes that are designed to remove organic matter and trace organic chemicals from reclaimed water. These processes include membrane filtration (MF, UF, NF, and RO), adsorption onto activated carbon, biological filtration, and chemical oxidation (chlorine, chloramines, ozone, and UV irradiation).

Microfiltration and Ultrafiltration

MF and UF membrane processes can be configured using pressurized or submerged membrane modules. In the pressurized configuration, a pump is used to pressurize the feedwater and circulate it through the membrane. Pressurized MF and UF units can be operated in two hydraulic flow regimes, either cross-flow or dead-end filtration mode. In a submerged system, membrane elements are immersed in the feedwater tank and permeate is withdrawn through the membrane by applying a vacuum. The key operational parameter that determines the efficiency of MF and UF membranes and operating costs is flux, which is the volume of water produced per unit membrane area per unit time. Factors affecting the flux rate include the applied pressure, fouling potential, and reclaimed water characteristics (Zhang et al., 2006). Flux can be maintained by appropriate cross-flow velocities, backflushing, air scouring, and chemical cleaning of membranes. Typically, MF and UF processes operate at flux rates ranging from 28 to 110 gal/ft2 per day (48 to 190 L/m2 per hour) (Asano et al., 2007).
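Because flux is reported in both U.S. customary and metric units in this chapter, a short conversion sketch may help when comparing figures. The function names below are hypothetical; the conversion factors are the standard definitions of the U.S. gallon and the square foot.

```python
LITERS_PER_GALLON = 3.785411784       # U.S. liquid gallon
SQUARE_METERS_PER_SQUARE_FOOT = 0.09290304
HOURS_PER_DAY = 24.0


def gfd_to_lmh(flux_gfd: float) -> float:
    """Convert flux from gal/ft2 per day to L/m2 per hour."""
    liters_per_m2_per_day = flux_gfd * LITERS_PER_GALLON / SQUARE_METERS_PER_SQUARE_FOOT
    return liters_per_m2_per_day / HOURS_PER_DAY


def flux_lmh(permeate_l_per_h: float, membrane_area_m2: float) -> float:
    """Flux (L/m2 per hour) as permeate flow divided by membrane area."""
    return permeate_l_per_h / membrane_area_m2


if __name__ == "__main__":
    # The MF/UF range quoted in the text: 28 to 110 gal/ft2 per day.
    for gfd in (28.0, 110.0):
        print(f"{gfd} gal/ft2 per day = {gfd_to_lmh(gfd):.1f} L/m2 per hour")
```

The converted values round to the 48 to 190 L/m2 per hour range given in the text.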

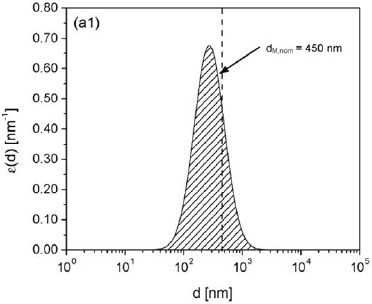

MF and UF membranes are effective in removing microorganisms (Figure 4-3). It is generally believed that MF can remove 90 to 99.999 percent (1 to 5 logs) of bacteria and protozoa, and 0 to 99 percent (0 to 2 logs) of viruses (EPA, 2001; Crittenden et al., 2005). However, filtration efficiencies vary with the type of membrane and the physical and chemical characteristics of the wastewater, resulting in a wide range of removal efficiencies for pathogens (NRC, 1998). MF and certain UF membranes should not be relied upon for complete removal of viruses for several reasons (Asano et al., 2007). First, whereas the terms micro- and ultrafiltration nominally refer to pore sizes that have cutoff characteristics as shown in Figure 4-3, the actual pore sizes in today’s commercial membranes often vary over a wide range. Second, experience has shown that today’s membrane systems sometimes experience problems with integrity during use for a variety of reasons. Although membrane integrity tests have been developed and are widely used, they are not suitable for detecting imperfections small enough to allow viruses to pass.

FIGURE 4-3 Pore size distribution of a microfiltration membrane.

SOURCE: Pera-Titus and Llorens (2007).
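The removal figures quoted for MF are given both as percentages and as "logs". The two are related by a base-10 logarithm, as the following minimal sketch shows. The function names are hypothetical and introduced only for illustration.

```python
import math


def log_removal_to_percent(log_removal: float) -> float:
    """Percent of organisms removed for a given log10 removal value."""
    return 100.0 * (1.0 - 10.0 ** (-log_removal))


def percent_to_log_removal(percent_removed: float) -> float:
    """Log10 removal value for a given percent removal (must be below 100)."""
    if not 0.0 <= percent_removed < 100.0:
        raise ValueError("percent_removed must be in [0, 100)")
    return -math.log10(1.0 - percent_removed / 100.0)


if __name__ == "__main__":
    for logs in (1, 2, 5):
        print(f"{logs} log(s) = {log_removal_to_percent(logs):.3f} percent removal")
```

Each additional log corresponds to a further tenfold reduction in the concentration passing the barrier, so 1 log is 90 percent, 2 logs is 99 percent, and 5 logs is 99.999 percent.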

Nevertheless, it is generally believed that the new generation of filtration systems has significantly improved performance for microbial removal. For example, Orange County Water District (OCWD) compared the MF filtration results of its current groundwater replenishment system (GWRS) operation, initiated in 2008 (see Table 2-3), with data collected during Interim Water Factory 21 (IWF21), the precursor to the GWRS project, started in 2004. Although the influent water quality was similar for both projects, IWF21 MF filtrate showed breakthrough of total coliform in 58 percent of the samples and Giardia cysts in 23 percent of samples, whereas both were absent in the GWRS MF filtrates (OCWD, 2009). However, MF did not eliminate viruses. Coliphages were present in GWRS after MF treatment. The geometric mean of male-specific coliphage was 134 plaque-forming units (pfu)/100 mL in MF-treated water (OCWD, 2009). Combining MF with chlorination is likely to improve the rate of virus removal. The OCWD reports a significant reduction of coliphage in the MF feed in the presence of a chloramine residual. Male-specific coliphage dropped from a geometric mean of 1,800 pfu/100 mL in the previous year to 28 pfu/100 mL in the MF feed, and they were absent in the MF filtrate (OCWD, 2010).

MF and UF membranes, sometimes in combination with coagulation, can also physically retain large dissolved organic molecules and colloidal particles. Effluent organic matter and hydrophobic trace organic chemicals can also adsorb to virgin MF and UF membranes, but this initial adsorption capacity is quickly exhausted. Thus, adsorption of trace organic chemicals is not an effective mechanism in steady-state operation of low-pressure membrane filters.

Nanofiltration or Reverse Osmosis

For reuse projects that require removal of dissolved solids and trace organic chemicals and where a consistent water quality is desired, the use of integrated membrane systems incorporating MF or UF followed by NF or RO may be required. RO and NF are pressure-driven membrane processes that separate dissolved constituents from a feedstream into a concentrate and permeate stream (Figure 4-4). Treating reclaimed water with RO and NF membranes usually results in product water recoveries of 70 to 85 percent. Thus, the use of NF or RO results in a net loss of water resources through disposal of the brine concentrate. RO applications in water reuse have been favored in coastal settings where the RO concentrate can be conveniently discharged to the ocean, but inland applications using RO are restricted because of limited options for brine disposal (see NRC [2008b] for an in-depth discussion of alternatives for concentrate disposal and associated issues). Thus, existing inland water reuse installations employing RO membranes are limited in capacity and commonly discharge brine to the sewer or a receiving stream provided that there is enough dilution capacity.
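The recovery figures above determine how much concentrate must be disposed of and how concentrated it becomes. The sketch below is a simple mass balance that assumes, for illustration only, that the membrane rejects the solute completely (real NF and RO membranes pass some solute, so actual concentrate concentrations are somewhat lower). The function names and the example feed flow are hypothetical.

```python
def concentrate_flow(feed_flow: float, recovery: float) -> float:
    """Concentrate (brine) flow for a given feed flow and fractional recovery."""
    if not 0.0 <= recovery < 1.0:
        raise ValueError("recovery must be in [0, 1)")
    return feed_flow * (1.0 - recovery)


def concentration_factor(recovery: float) -> float:
    """Ratio of concentrate to feed concentration.

    Assumes 100 percent solute rejection (an idealization), so all solute
    in the feed ends up in the concentrate stream.
    """
    if not 0.0 <= recovery < 1.0:
        raise ValueError("recovery must be in [0, 1)")
    return 1.0 / (1.0 - recovery)


if __name__ == "__main__":
    feed_m3_per_day = 10_000.0  # hypothetical feed flow
    for recovery in (0.70, 0.85):
        brine = concentrate_flow(feed_m3_per_day, recovery)
        factor = concentration_factor(recovery)
        print(f"recovery {recovery:.0%}: brine {brine:.0f} m3/d, "
              f"concentration factor {factor:.2f}")
```

Under this idealization, raising recovery from 70 to 85 percent halves the brine volume but doubles its concentration, which illustrates the tradeoff behind the concentrate disposal constraints described above.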

Most commonly used RO and NF membranes provide apparent molecular weight cutoffs of less than 150 and 300 Daltons, respectively, and are therefore highly efficient in the removal of organic matter and selective for trace organic chemicals. Some of the organic constituents that are only partially removed by NF and RO membranes while still achieving total organic carbon (TOC) concentrations of less than 0.5 mg/L are low-molecular-weight organic acids and neutrals (e.g., N-nitrosodimethylamine [NDMA], 1,4-dioxane) as well as certain disinfection byproducts (e.g., chloroform) (Bellona et al., 2008). Recent advances in membrane development have resulted in low-pressure RO membranes and NF membranes that can be operated at significantly lower feed pressure while providing approximately the same product water quality. However, certain monovalent ions (e.g., Cl–, Na+, NO3–) are only partially rejected by NF, and NF membranes result in product water with higher TDS than RO (Bellona et al., 2008).

Today, most integrated membrane systems applied in reuse employ RO rather than NF. However, certain low-pressure NF membranes offer opportunities for wider applications in water reclamation projects because they have lower energy requirements and can achieve selective rejection of salts and organic constituents that results in less concentrated brine streams. For wastewater applications, RO and ultra-low-pressure RO membrane facilities typically operate at feed pressures between 1,000 and 2,100 kPa (approximately 150–300 psi) in order to produce between 8.5 and 12.5 gal/ft2 per day (13.5 and 20 L/m2 per hour) of permeate (Lopez-Ramirez et al., 2006). NF membranes, while achieving a similar product water quality with respect to TOC and trace organic chemicals, can be operated at 2 to 4 times lower feed pressures, resulting in significantly greater energy savings than conventional RO membranes (Bellona and Drewes, 2007).

FIGURE 4-4 Substances and contaminants nominally removed by pressure-driven membrane processes.

SOURCE: Cath (2010).

RO and NF membranes, in theory, should remove all pathogens from the feedwater because they are designed to remove relatively small molecules. However, some earlier testing results have shown that the removal of virus surrogates (coliphage) seeded in front of RO is sometimes incomplete. For example, studies conducted by the City of San Diego noted coliphage breakthrough in the permeate of the RO system at concentrations up to 10³ pfu/100 mL (Adham et al., 1998). Early tests showed inadequate removal of protozoa and bacteria as well. Leaks around the seals and connectors were suspected as the cause of reduced microbial removal efficiency. Once faulty connectors and an obviously flawed membrane element were identified and replaced, rejection of bacteria and protozoa seemed absolute, but the removal of the surrogate coliphage MS2 remained slightly above 2.5 logs (99.7 percent). Expansion of both bench- and pilot-scale testing to include a variety of manufacturers revealed that the quality of brackish water RO membranes ranged widely, with one manufacturer consistently demonstrating complete rejection in both types of tests. Though systematic tests are not available, newer RO systems may have significantly improved performance for microbial removal. Recent tests have shown promising results (Lozier et al., 2006), and data collected in 2008 at OCWD’s GWRS revealed the absence of native coliphage in 1-L samples of RO effluent,¹ which indicated an improvement from the earlier pilot study (27 percent RO breakthrough rates) using an older generation of membranes (OCWD, 2010).

_____________

1 Randomly selected RO permeate samples taken from each of 15 RO trains, each sampled three times (M. Wehner, OCWD, personal communication, 2011)

Activated Carbon

In water reclamation, adsorption processes are sometimes used to remove dissolved constituents by accumulation on a solid phase. Activated carbon is a common adsorbent, which is employed as powdered activated carbon (PAC) with a grain diameter of less than 0.074 mm or granular activated carbon (GAC), which has a particle diameter greater than 0.1 mm. During water reclamation, PAC can be added directly to the activated sludge process or solids contact processes, upstream of a tertiary filtration step. GAC is used in pressure and gravity filtration. Activated carbon is efficient for the removal of many regulated synthetic organic compounds as well as unregulated trace organic chemicals exhibiting properties of high and moderate hydrophobicity (e.g., steroid hormones, triclosan, bisphenol A) (Snyder et al., 2006a). Although PAC needs to be disposed of after its adsorption capacity is reached, GAC can be regenerated either on- or offsite, provided this practice is more cost-effective than disposing of it in landfills. Onsite GAC regeneration is only cost-effective for large installations and is currently not practiced by any water reclamation facility in the United States. GAC adsorbents are characterized by short empty-bed contact times (i.e., 5 to 30 min) and preferably a large throughput volume (i.e., bed volumes of 2,000 to 20,000 m3/m3) (Asano et al., 2007).
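The two GAC design figures quoted above, empty-bed contact time (EBCT) and throughput in bed volumes, together imply how long a bed can run before regeneration or replacement. The sketch below uses the standard definition of EBCT as empty bed volume divided by flow rate. The function names and the example bed size and flow are hypothetical values chosen for illustration.

```python
MINUTES_PER_HOUR = 60.0
MINUTES_PER_DAY = 1440.0


def empty_bed_contact_time_min(bed_volume_m3: float, flow_m3_per_h: float) -> float:
    """EBCT in minutes: empty bed volume divided by volumetric flow rate."""
    return MINUTES_PER_HOUR * bed_volume_m3 / flow_m3_per_h


def run_time_days(bed_volumes_treated: float, ebct_min: float) -> float:
    """Operating time (days) needed to pass a given number of bed volumes.

    Each bed volume takes one EBCT to pass through the bed, so the run time
    is simply bed volumes multiplied by EBCT.
    """
    return bed_volumes_treated * ebct_min / MINUTES_PER_DAY


if __name__ == "__main__":
    # Hypothetical adsorber: 10 m3 of GAC treating 60 m3/h.
    ebct = empty_bed_contact_time_min(10.0, 60.0)
    print(f"EBCT = {ebct:.0f} min")
    for bed_volumes in (2_000, 20_000):
        days = run_time_days(bed_volumes, ebct)
        print(f"{bed_volumes} bed volumes = {days:.1f} days of operation")
```

For a 10-minute EBCT, the quoted throughput range of 2,000 to 20,000 bed volumes corresponds to roughly two weeks to about four and a half months of continuous operation.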

Biological Filtration

As mentioned previously in this chapter, the use of strong oxidants, such as ozone or ozone/peroxide and UV/peroxide, results in the formation of various biodegradable byproducts (Wert et al., 2007). For instance, simple aldehydes, ketones, and carboxylic acids are produced as ozone oxidizes organic matter in water. The aggregate measurements commonly employed to assess the biodegradability of transformation products are assimilable organic carbon (AOC) (Hammes and Egli, 2005) and biodegradable dissolved organic carbon (BDOC) (Servais et al., 1987). This readily biodegradable carbon has been implicated in the acceleration and promotion of biofilm growth in distribution systems. Thus, drinking water treatment facilities usually employ biofiltration after ozonation to reduce BDOC with the aid of indigenous bacteria present in the feedwater. Additionally, the use of biofiltration after ozone has been shown to reduce the formation of some byproducts formed during secondary disinfection with chlorine (Wert et al., 2007). Some studies have also demonstrated that the byproducts from ozonation of trace organic chemicals, such as steroid hormones and pharmaceuticals, are largely biodegradable (Stalter et al., 2010); therefore, there is growing support for the use of biofiltration after ozone or AOP. Although biofiltration alone may provide some direct benefit in terms of removing trace organic chemicals, it has generally been shown to be only marginally effective without a prior oxidation step (Juhna and Melin, 2006).

Biological filtration can be accomplished using traditional media (i.e., sand/anthracite) or using activated carbon (biologically activated carbon [BAC]). Although some studies have suggested that activated carbon is superior for supporting biological growth, mainly because of superior adherence of the biofilm to the GAC, there are some conflicting reports that show approximately equal performance using anthracite (Wert et al., 2008). Some studies have demonstrated that BAC is capable of adsorption as well as biological degradation; however, the adsorptive capacity of the BAC will eventually be reduced as the micropores in the carbon structure become blocked and the adsorptive capacity subsequently becomes exhausted. At this point, fresh GAC will be required to restore the adsorptive capacity, but effective biological activity as measured by reduction of AOC or BDOC will take time to establish. The amount of time needed to develop a biologically active filter will depend on water quality, water temperature, and operational parameters. An important factor in establishing and maintaining an active biofilm is the backwash frequency with chlorinated water.

One major disadvantage of using biological filtration is the detachment of biofilm and likely detection of bacteria in filtered water. Although these bacteria are not harmful, the detection of heterotrophic bacteria could in some cases lead to regulatory violations. In those cases, biofiltration would generally be followed by a disinfection step, such as chlorination or UV irradiation.

Chemical Oxidation

Chemical oxidation is commonly employed in water treatment to achieve disinfection, as described previously in this chapter; however, oxidants are also used to remove tastes, odors, and color and to improve the removal of metals (Singer and Reckhow, 2010). Oxidants used for water treatment include chlorine, chloramine, ozone, permanganate, chlorine dioxide, and ferrate. Advanced oxidation relies upon formation of powerful radical species, primarily hydroxyl radicals (OH·) and is rapidly gaining in use for the oxidation of more resistant chemicals, such as many trace organic chemicals and industrial solvents (Esplugas et al., 2007). The most commonly employed advanced oxidation techniques in water reclamation use hydrogen peroxide coupled with UV light or ozone gas. The UV light itself is not strictly an oxidant but it does selectively transform a small group of compounds sensitive to direct photolysis (e.g., NDMA, iohexol, triclosan, acetaminophen, diclofenac, sulfamethoxazole) (Pereira et al., 2007; Snyder et al., 2007; Yuan et al., 2009; and Sanches et al., 2010).

Very few oxidative technologies are employed at operational conditions capable of mineralizing organic materials in water. Even the most promising advanced oxidation techniques using ozone and UV irradiation combined with peroxide will result in only a minor (if any) measurable reduction of dissolved organic carbon (DOC). Regardless of the oxidation technique deployed and superior performance of trace organic chemical removal, some transformation products will result that are often uncharacterized (see Chapter 3 for additional discussions of transformation byproducts). The most commonly used oxidation methods for the removal of trace organic contaminants are described below.

Chlorine. Chlorine, defined here as the combination of chlorine gas, HOCl, and OCl–, reacts selectively with electron-rich bonds of organic chemicals (e.g., double bonds in aromatic hydrocarbons) (Minear and Amy, 1996). Recently, several reports have shown that many trace organic chemicals containing reactive functional groups can be oxidized by free chlorine (Adams et al., 2002; Deborde et al., 2004; Lee et al., 2004; Pinkston and Sedlak, 2004; Westerhoff et al., 2005), while ketone steroids (e.g., testosterone and progesterone) are not as effectively oxidized (Westerhoff et al., 2005). However, the ability of chlorine to effectively oxidize trace organic chemicals, including steroid hormones, is a function of contact time and dose. More importantly, chlorine is not expected to mineralize trace organic chemicals, but rather to transform them into new products (Vanderford et al., 2008), which may in fact be more toxic than the original molecule.

Chloramines. Chloramines are not nearly as effective as oxidants and thus play a much smaller role in trace organic chemical oxidation. Snyder (2007) demonstrated that a dose of 3 mg/L chloramines and a contact time of 24 hours was able to effectively oxidize phenolic steroid hormones (e.g., estrone, estradiol, estriol, ethinyl estradiol) as well as triclosan and acetaminophen; however, the vast majority of trace organic chemicals studied were not significantly oxidized. Therefore, although chloramines play an important role in reduction of membrane fouling and disinfection, they are expected to provide only minimal benefit in the oxidation of trace organic chemicals. Moreover, careful evaluation of nitrosamine formation should be undertaken when using chloramines, considering the carcinogenic potency of these byproducts (see Choi et al., 2002; Mitch et al., 2003; Haas, 2010).

Ozone. Ozone (O3) is a powerful oxidant and disinfectant that decays rapidly and leaves no appreciable residual in reclaimed water during storage and distribution. Ozone-enriched oxygen is generally added to water through diffusers producing fine bubbles, and once dissolved in water, ozone quickly undergoes a cascade of reactions, including decomposition into hydroxyl radicals (OH·), hydroperoxyl radical (HO2), and superoxide ion (O2–). These radicals along with molecular ozone will rapidly react with organic matter, carbonate, bicarbonate, reduced metals, and other constituents in water. The reactions mediated by the hydroxyl radical are relatively nonselective, whereas molecular ozone is more selective (Elovitz et al., 2000).

Because of ozone’s ability to oxidize organic chemicals, it has been widely applied in water treatment for taste and odor control, color removal, and to reduce concentrations of trace organic chemicals. At dosages commonly employed for disinfection, the vast majority of contaminants can be effectively converted into transformation products (Snyder et al., 2006c). Although several studies have shown that ozone effectively reduces estrogenic potency in reclaimed water (Snyder et al., 2006c), recent publications have suggested that biologically active filters be included after the ozone process in order to remove biodegradable byproducts formed during ozonation (Stalter et al., 2010). For potable reuse applications, ozonation could also be applied after soil aquifer treatment (SAT), which combines the benefits of a more selective oxidation of remaining chemicals persistent to biodegradation and a lower ozone demand due to reduced DOC concentrations in the recovered water.

It is well known that in the presence of bromide, ozone can form bromate, a toxic byproduct. There are steps that can be employed to mitigate the formation of bromate, such as the use of chlorine and ammonia before ozone addition (von Gunten, 2003). Some reports have shown that ozone applied before chloramination also results in the oxidation of nitrosamine precursors (Lee et al., 2007). However, ozone also has been shown to form some nitrosamines directly (von Gunten et al., 2010).

Ozone can play an important role in water reclamation, but the process is more energy intensive and operationally complex than chlorination. In cases where trace organic chemical removal (e.g., pharmaceuticals, steroid hormones) is important, ozone is a viable option and does not result in a residuals stream like NF or RO membrane processes or in spent media as with activated carbon. However, ozone does not provide a complete barrier to trace organic chemicals, and there are certain chemicals that are not amenable to oxidation (e.g., chlorinated flame retardants; artificial sweeteners) (Snyder et al., 2006c).

UV irradiation. UV light at doses commonly employed for disinfection (40–80 mJ/cm2) is largely ineffective for trace organic chemical removal. In a recent study that investigated the removal of trace organic chemicals from water, none of the target compounds investigated were well removed (>80 percent oxidized) using UV at disinfection doses (Snyder, 2007). However, when UV doses are significantly increased (generally by 10-fold) and high doses of hydrogen peroxide (5 mg/L and higher) are added, most trace organic chemicals were effectively oxidized (Snyder et al., 2006c). Activated carbon is sometimes employed to catalytically remove hydrogen peroxide, and other chemicals can be used to remove excess peroxide from the UV-AOP effluent. Although UV-AOP does form transformation products (i.e., it does not result in mineralization of organic compounds), it does not form bromate. Additionally, UV alone at elevated dosages or in combination with hydrogen peroxide (UV-AOP) effectively removes NDMA.

UV-AOP efficacy, however, is quite susceptible to water quality and requires proper pretreatment. In many potable reuse applications, UV-AOP is applied after RO treatment to negate the detrimental impacts of water quality, such as suspended and particulate matter and DOC. UV-AOP applications generally will require extensive pretreatment to increase UV transmittance; however, recent studies have demonstrated that UV-AOP can also be effective in advanced-treated effluents (Rosario-Ortiz et al., 2010).

Removal of Dissolved Solids

Domestic and commercial uses of public water supplies result in an increase in the mineral content of municipal wastewater. This increase can be problematic where drinking water supplies are already elevated in TDS and regional water reuse is already occurring, resulting in partially closed water and salt cycles. Hard water can also be a problem because it results in the proliferation of self-regenerating water softeners, which discharge their regenerant into the wastewater collection system. To mitigate salinity problems associated with local water reuse activities, especially in inland applications, partial desalination of reclaimed water especially for potable reuse projects may be required.

In addition to pressure-driven membrane-based separation processes, such as NF and RO, as discussed above, current-driven membrane processes, such as electrodialysis (ED) or electrodialysis reversal (EDR), can be used to separate salts. Nevertheless, ED and EDR are not commonly employed in water reclamation and currently only one facility in Southern California is using EDR to remove TDS at a demonstration-scale facility. Precipitative softening can also be used for partial demineralization (mainly to remove hardness) and is currently employed for this purpose in the City of Aurora’s Prairie Waters Project, Colorado (see also Box 4-1).

BOX 4-1

Prairie Waters Project, Aurora, Colorado

The Prairie Waters Project, established by the City of Aurora, Colorado, in 2010, is a potable water reuse augmentation project that will increase Aurora’s water supply by 20 percent; delivering up to 9 MGD (34,000 m3/d). The project is using return flows discharged to the South Platte River downstream of Denver. This water is recovered through a series of 17 vertical riverbank filtration wells, followed by artificial recharge and recovery (ARR), providing a retention time of approximately 30 days in the subsurface. The water is subsequently pumped to an advanced water treatment plant. The water treatment plant consists of precipitative softening, UV-AOP, biologically active carbon filtration, and granular activated carbon (GAC) filtration. At a ratio of 2:1, the final product water is blended with Aurora’s current supply using mountain runoff water prior to disinfection and final distribution. Precipitative softening is employed to maintain a hardness level that is similar to Aurora’s current supply. Riverbank filtration and ARR are very efficient in removing pathogens, organic carbon, trace organic chemicals, and nitrate (Hoppe-Jones et al., 2010). UV-AOP and GAC serve as an additional barrier for trace organic chemicals that might survive after the natural treatment process. The treatment scheme was selected because alternatives such as reverse osmosis with zero liquid discharge of brine or wetland treatment instead of riverbank filtration were cost-prohibitive or not viable.

SOURCE: http://www.prairiewaters.org.

Natural processes in water reclamation are usually employed in combination with aboveground engineered processes and consist of managed aquifer recharge systems and natural or constructed wetlands (Figure 4-1). Natural systems can be considered as multiobjective treatment processes targeting the removal of pathogens, particulate and suspended matter, DOC, trace organic chemicals, and nutrients, either as the key treatment process or as an add-on polishing step. All natural treatment processes combine the advantage of a low carbon footprint (i.e., little to no chemical input, low energy needs) with little to no residual generation. The drawbacks of these processes are the required footprint and a suitable geology, which might not be available where the use of natural treatment systems is desired. Examples of managed natural processes in reuse systems and the general role of environmental buffers in potable reuse projects are described in Chapter 2, but water quality improvements provided by these surface and subsurface natural systems are described in the subsequent sections.

Subsurface Managed Natural Systems

Subsurface managed natural systems can be used to enhance water quality and/or to provide natural storage for reclaimed water. These systems include surface spreading basins, vadose zone wells, and riverbank filtration wells, which take advantage of attenuation processes that occur in the vadose zone and saturated aquifer. Other processes, such as aquifer storage and recovery (ASR) and direct injection wells, introduce highly treated reclaimed water directly into a potable aquifer.

In general, subsurface treatment applications offer numerous advantages. These systems typically require a low degree of maintenance, and the energy requirements are low. The input of chemicals usually is not required, and the operation is residual free. Temperature equilibration of water is achieved during subsurface storage, and excursions in water quality are buffered due to dispersion in the subsurface and dilution with native groundwater. However, subsurface applications require that a substantial aquifer be available and that it be characterized by an extensive site assessment. Although the advantages seem to outweigh the disadvantages from an operational standpoint, the lack of clear and standardized guidance for design and operation of these systems limits wider establishment of managed subsurface treatment systems. Lack of process understanding can result in less-than-optimal performance or physical footprints or retention times that are larger than needed for the desired water quality improvements. Some installations might also exhibit deterioration of water quality in the recovered water due to biogeochemical reactions in the subsurface that were not anticipated.

Surface Spreading or Soil Aquifer Treatment

Surface spreading basins allow reclaimed water to infiltrate slowly through the vadose zone, where sorption, filtration, and biodegradation can enhance the water quality (also called soil aquifer treatment). Recharge basins for surface spreading operations are often located in, or adjacent to, floodplains, characterized by soils with high permeability. In some instances, excavation is necessary to remove surface soils of low permeability. For mosquito control and to maintain permeability during operation with reclaimed water, recharge basins are usually operated in alternate wet and dry cycles. As the recharge basin dries out, dissolved oxygen penetrates into the subsurface, facilitating biochemical transformation processes, and organic material accumulated on the soil surface will desiccate, allowing for the recovery of infiltration rates (Fox et al., 2001).

The removal of organic matter during SAT is highly efficient and largely independent of the level of aboveground treatment. Biodegradable organic carbon that is not attenuated during wastewater treatment represents an electron donor for microorganisms in the subsurface and is readily removed during groundwater recharge (Drewes and Fox, 2000; Rauch-Williams and Drewes, 2006). Monitoring efforts revealed consistent removal of TOC between 70 and 90 percent at full-scale SAT facilities that were in operation for several decades (Quanrud et al., 2003; Drewes et al., 2006; Amy and Drewes, 2007; Lin et al., 2008; Laws et al., 2011). The removal of easily biodegradable organic carbon in the infiltration zone usually results in depletion of oxygen and the creation of anoxic conditions. Although this transition is advantageous to achieve denitrification, it might also lead to the solubilization of reduced manganese, iron, and arsenic from native aquifer materials. If these interactions occur, appropriate post-treatment is required after recovery of the recharged groundwater.

Previous studies have characterized the transformation and removal of select trace organic chemicals during SAT for travel times ranging from ~1 day to 8 years (Drewes et al., 2003a; Montgomery-Brown et al., 2003; Snyder et al., 2004; Grünheid et al., 2005; Massmann et al., 2006; Amy and Drewes, 2007). Several studies also report efficient removal of NDMA and other nitrosamines under both oxic and anoxic subsurface conditions (Sharp et al., 2005; Drewes et al., 2006; Nalinakumari et al., 2010). A case study conducted at a facility in Southern California (Box 4-2) illustrates the efficiency of short-term SAT for the attenuation of trace organic chemicals in reclaimed water (Laws et al., 2011).

Previous studies have demonstrated that the combination of filtration and biotransformation processes during subsurface treatment is very efficient for the inactivation of pathogens, especially viruses (Schijven et al., 2000, 2002; Quanrud et al., 2003; Azadpour-Keeley and Ward, 2005; Gupta et al., 2009). Attenuation of pathogens depends primarily on three mechanisms: straining, inactivation, and attachment to aquifer grains (McDowell-Boyer et al., 1986). Findings from field studies demonstrated that infiltration into a relatively homogeneous sandy aquifer can achieve up to 8 log virus removal over a distance of 30 m in about 25 days (Dizer et al., 1984; Yates et al., 1985; Powelson et al., 1990; Schijven et al., 1999, 2000). During SAT in the Dan Region Project, Israel, Icekson-Tal et al. (2003) measured 5.3 log removal of total coliform and 4.5 log removal of fecal coliform bacteria. The efficient removal of fecal and total coliform bacteria during subsurface treatment and essentially their absence in groundwater abstraction wells after SAT or riverbank filtration was confirmed by various other studies (Fox et al., 2001; Hijnen et al., 2005b; Levantesi et al., 2010). Other field studies have focused on attenuation of protozoa, and findings suggest that efficient removal occurs during passage across the surface water–groundwater interface and lesser removal is observed during groundwater transport away from this interface (Schijven et al., 1998). Further details on pathogen attenuation during SAT are provided in Chapter 7. An example of the degree of attenuation for various microbial and chemical constituents that can be achieved in SAT systems is illustrated in Tables A-7 and A-9 (Appendix A).
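Because pathogen attenuation is reported here in log units, it can help to see how a log removal value relates to influent and effluent concentrations and to percent removal. The following is a minimal sketch; the function names and the example concentrations are illustrative assumptions, while the log removal values passed to the conversion are those quoted in the paragraph above.

```python
import math


def log_removal(c_in: float, c_out: float) -> float:
    """Log10 removal value computed from influent and effluent concentrations."""
    return math.log10(c_in / c_out)


def percent_removal(lrv: float) -> float:
    """Convert a log10 removal value into percent removal."""
    return (1.0 - 10.0 ** (-lrv)) * 100.0


# Hypothetical concentrations (assumed, not from the source), in organisms per 100 mL.
print(f"LRV for 1e6 -> 10: {log_removal(1e6, 10.0):.1f}")

# Log removal values quoted in the text above.
for lrv in (4.5, 5.3, 8.0):
    print(f"{lrv} log removal = {percent_removal(lrv):.6f} percent")
```

Each additional log unit removes 90 percent of what remained, which is why values above about 4 logs all appear as "99.99 percent or better" when expressed as percentages.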

Nitrogen removal needs to be carefully managed when reclaimed water is applied with total nitrogen concentrations in excess of 20 mg N/L. At such high concentrations, the wetting and drying cycles of the spreading basins cannot meet the nitrogenous oxygen demand (in excess of 100 mg/L), resulting in incomplete nitrification. Ammonium is usually removed by cation exchange onto soil particles during wetting cycles, followed by nitrification of the adsorbed ammonium during drying cycles. Nitrate is not adsorbed to soils, but if sufficient carbon is present to create anoxic conditions, nitrate can be removed via denitrification during subsequent passage in the subsurface (Fox et al., 2001). Reclaimed water with nitrate concentrations in excess of 10 mg N/L can result in incomplete denitrification when applied to groundwater recharge basins because the biodegradable organic carbon usually present in a secondary or advanced-treated effluent will be insufficient to achieve complete denitrification.
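The order of magnitude of the nitrogenous oxygen demand mentioned above can be checked with a simple stoichiometric estimate. This sketch assumes that all of the applied nitrogen is in reduced form (ammonium) and is fully nitrified to nitrate, using the commonly cited theoretical factor of about 4.57 mg of oxygen per mg of nitrogen; neither assumption is stated in the source, and actual demand depends on nitrogen speciation and cell synthesis.

```python
# Theoretical oxygen requirement for complete nitrification (NH4+ -> NO3-),
# in mg O2 per mg N. Assumed stoichiometric value, ignoring cell synthesis.
O2_PER_MG_N = 4.57


def nitrogenous_oxygen_demand(reduced_n_mg_per_l: float) -> float:
    """Oxygen demand (mg O2/L) for fully nitrifying the given reduced nitrogen."""
    return O2_PER_MG_N * reduced_n_mg_per_l


# Illustrative nitrogen concentrations around the 20 mg N/L level cited in the text.
for n in (10.0, 20.0, 25.0):
    nod = nitrogenous_oxygen_demand(n)
    print(f"{n:.0f} mg N/L -> about {nod:.0f} mg O2/L")
```

Under these assumptions, nitrogen concentrations slightly above 20 mg N/L correspond to an oxygen demand on the order of 100 mg/L, roughly an order of magnitude more than the dissolved oxygen that water in equilibrium with air can carry, which is consistent with the reliance on drying cycles to reintroduce oxygen.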

For potable reuse projects, different regulatory requirements exist regarding the minimum retention time of reclaimed water in the subsurface prior to extraction. The primary intent of these regulations is to provide additional protection against pathogens in groundwater recharge projects and to provide time for corrective action in the event that substandard water is inadvertently recharged. Regulations in the state of Washington require a minimum of 6 months of hydraulic retention time in the subsurface for surface spreading operations and a minimum of 12 months for direct injection projects before the water can be recovered as a potable water source (Washington Department of Health and Washington Department of Ecology, 1997), while California’s draft groundwater recharge regulations require a minimum of 2 months in the subsurface for both surface spreading and injection projects to provide time for corrective action if substandard water is inadvertently recharged (CDPH, 2011). Others have defined minimum setbacks (i.e., horizontal separation) between reclaimed water surface spreading operations and potable wells (e.g., 500 ft [150 m] in Florida; 2,000 ft [610 m] in Washington) (FDEP, 2006; Washington Department of Health and Washington Department of Ecology, 1997). However, these setbacks or minimal retention times are frequently not based on scientific findings but represent a conservative estimate to provide additional removal credits for pathogens in case of a failure in the aboveground treatment train. Reuse regulations are discussed in more detail in Chapter 10.

Riverbank Filtration

Riverbank filtration has been practiced in the United States for more than 50 years for domestic drinking water supplies utilizing streams that might have been compromised in their quality due to the discharge of wastewater effluents or other waste streams (Ray et al., 2008). Recently, water reuse projects have integrated riverbank filtration into their treatment process train to take advantage of the benefits of this natural treatment system (see Box 4-1). Aquifers used for riverbank filtration usually consist of alluvial sand and gravel deposits, with thickness ranging from 15 to 200 ft (5 to 60 m) and a hydraulic conductivity higher than 10⁻⁴ m/s. In riverbank filtration, constant scour forces due to streamflow prevent the accumulation of particulate and colloidal organic matter in the infiltration layer.
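To give a sense of what a hydraulic conductivity of 10⁻⁴ m/s implies for subsurface travel time, the sketch below applies Darcy's law to estimate the average linear (seepage) velocity. The hydraulic gradient and effective porosity are hypothetical values chosen for illustration and are not taken from the source; site-specific values, particularly the gradient induced by pumping, can differ substantially.

```python
SECONDS_PER_DAY = 86_400.0


def seepage_velocity_m_per_day(k_m_per_s: float, gradient: float, porosity: float) -> float:
    """Average linear velocity from Darcy's law: v = K * i / n_e, in m/day."""
    return k_m_per_s * gradient / porosity * SECONDS_PER_DAY


def travel_time_days(distance_m: float, velocity_m_per_day: float) -> float:
    """Advective travel time over a flow path of the given length."""
    return distance_m / velocity_m_per_day


# K from the text; gradient and effective porosity are assumed for illustration.
velocity = seepage_velocity_m_per_day(k_m_per_s=1e-4, gradient=0.01, porosity=0.25)
print(f"Seepage velocity: {velocity:.2f} m/day")

# Hypothetical flow-path lengths between river and well.
for distance in (30.0, 150.0):
    days = travel_time_days(distance, velocity)
    print(f"{distance:.0f} m flow path -> about {days:.0f} days")
```

This estimate considers advection only; dispersion, heterogeneity, and preferential flow paths mean that some water arrives earlier than the mean travel time.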

Biodegradation of organic matter represents a key attenuation mechanism of riverbank filtration processes (Kühn and Müller, 2000; Hoppe-Jones et al., 2010). A bioactive filtration layer forms near the water/sediment interface where dissolved oxygen concentrations are highest, which can cause significant removal of DOC during the initial phase of infiltration (first meter). Conditions can quickly transition from oxic to anoxic as the water travels with increasing distance from the river through the subsurface, although the oxidation-reduction gradient depends on site specific conditions, such as DOC and ammonia concentrations in the river (Hiscock and Grischek, 2002; Ray et al., 2008).

More than 5-log removal of pathogen surrogate microorganisms (e.g., bacteria, viruses, and parasites) has been reported in riverbank filtration under steady-state conditions, with variations of ±1-log removal efficiency associated with individual microorganism characteristics (Medema et al., 2000). Havelaar et al. (1995) reported removal in excess of 5 logs for total coliform during transport of river water over a 30-m distance from the Rhine River and over a 25-m distance from the Meuse River to a well. Total coliforms were rarely detected in riverbank-filtered waters, with 5.5- and 6.1-log reductions in average concentrations in wells relative to river water (Weiss et al., 2005). Havelaar et al. (1995) reported 3.1-log removal of protozoa surrogates during transport over a 30-m distance from the Rhine River to a well and 3.6-log removal over a 25-m distance from the Meuse River to a well. Schijven et al. (1998) measured 1.9-log removal for protozoa surrogates over a 2-m distance from a canal. This finding is consistent with field monitoring results from a riverbank filtration site in Wyoming, where Gollnitz et al. (2005) observed a 2-log removal of Cryptosporidium surrogates in groundwater wells characterized by flowpaths between 20 and 984 ft (6 and 300 m). At a riverbank filtration site at the Great Miami River, Gollnitz et al. (2003) reported a 5-log removal of protozoa surrogates in a production well located 98 ft (30 m) off the river.

Numerous research projects have documented the removal of trace organic compounds during riverbank filtration. For example, Ray et al. (1998) and Vers-

BOX 4-2

Montebello Forebay Groundwater Recharge Operation, California

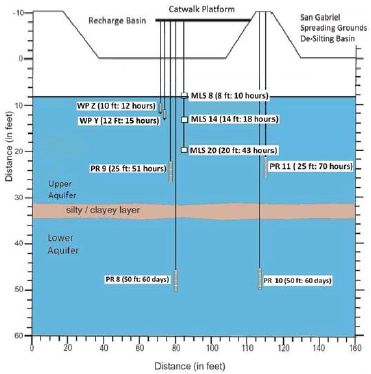

In the United States, drinking water augmentation with reclaimed water was pioneered by the County Sanitation Districts of Los Angeles County (CSDLAC) and the Water Replenishment District of Southern California (WRD) by establishing groundwater recharge spreading operations with reclaimed water in Pico Rivera, California in the early 1960s. Laws et al. (2011) studied the fate and transport of bulk organic matter and a suite of 22 trace organic chemicals during the surface-spreading recharge operation using a smaller but well-instrumented test basin at this facility. Two monitoring wells were located at the side of the recharge basin and lysimeters were installed beneath the basin (see figure below). Based on ion signatures it appeared that all of the samples collected originated from reclaimed water that was applied to the basin; however, the samples from the deeper wells (PR 8 and 10) appeared to have been diluted by native groundwater.

Instrumentation of groundwater recharge test basin associated with monitoring data provided in table below.

SOURCE: Laws et al. (2011).

traeten et al. (1999) reported 50 to 75 percent removal of the herbicide atrazine during riverbank filtration, although the underlying removal mechanisms were not clear. Despite the success of riverbank filtration in removing numerous compounds, certain trace organic chemicals have been regularly found in the product water of riverbank filtration systems, including urotropin (an aliphatic amine) and 1,5-naphthalindisulfonate (an aromatic sulfonate) (Brauch et al., 2000), antiepileptic drugs (e.g., carbamazepine, primidone), a blood-lipid regulator (e.g., clofibric acid), antibiotics (e.g., sulfamethoxazole), and x-ray contrast media were present in river water and bank-filtered water (Kühn and Müller, 2000; Schmidt et al., 2004; Hoppe-Jones et al., 2010; Maeng et al., 2010). A partial reduction in concentration was only achieved under certain redox conditions and through dilution with local groundwater.

Direct Injection

Direct injection of reclaimed water may occur in both saturated and unsaturated aquifers using wells that are constructed like regular pumping wells. In the United States, OCWD pioneered direct injection of

Over a travel time of less than three days in the upper aquifer, approximately 55 percent of the total organic carbon was removed (from 7.8 mg/L to 3.5±0.3 mg/L), and overall removal increased to 79 percent with increased travel time (60 days). Most of the observed removal occurred in the vadose zone (<2.4 m) because of its aerobic conditions. Attentuation of trace organic chemicals also occurred in the vadose zone, where concentrations decreased within the first 2.4 m (~10 hours). After 60 days travel time, the concentrations of monitored trace organic chemicals decreased further (see table below). Concentrations of primidone, carbamazepine, trimethoprim, N,N-diethyl-meta-toluamide (DEET), meprobamate, tris (2-chloroethyl) phosphate (TCEP), tris (2-chloroisopropyl) phosphate (TCPP), and triclosan were reduced less than 10 percent in the upper aquifer but contaminant attenuation increased with travel time (Laws et al., 2011).

Concentration of Select Trace Organic Chemicals in Reclaimed Water After Surface Spreading

| Compound | Basin | Avg. (MLS 8-PR 11) (10-70 hrs) | Avg. (PR8, PR10) (60 days) |

| Atrazine | <5 | 5±0.2 | 4.0±0.1 |

| TCEP | 400 | 402±15 6,483±87 | 128±39 |

| TCPP | 7,200 | 5 | 797±188 |

| Benzophenone | <1000 | 68±27 | <50 |

| DEET | 320 | 238±60 | 50±12 |

| Musk Ketone | <25 | <MRL | <25 |

| Triclosan | 6.5 | 6±3 | <1 |

| Atenolol | 830 | 31±34 | <1 |

| Atrovastatin | <10 | <MRL | <0.5 |

| Carbamazepine | 330 | 302±28 | 170±0 |

| Diazepam | <5 | 2±0.3 | 1.5±0.3 |

| Diclofenac | 24 | 10±2 | <0.5 |

| Fluoxetine | 13 | 0.57±0.16 | <0.5 |

| Gemfibrozil | 880 | 70±63 | 32±2.9 |

| Ibuprofen | 10 | 12±8 | 1.3±0 |

| Meprobamate | 430 | 375±45 | 132±31 |

| Naproxen | 32 | 6±3 | 2.4±0 |

| Phenytoin | 150 | 103±13 | 85±8 |

| Primidone | 150 | 168±45 | 90± 2.6 |

| Sulfamethoxazole | 460 | 390±129 | 207±12 |

| Trimethoprim | 54 | 58±33 | 3.5±2.6 |

| Iopromide | 2,700 | 60±41 | 89±18 |

SOURCE: Laws, et al. (2011).

NOTE: Monitoring wells (shown in the figure to the left) represent different travel times.

highly treated reclaimed water in 1976 for seawater intrusion barriers in Southern California (see Chapter 2 and Box 2-11). Direct injection wells may also be used as ASR wells where the same well serves for both injection and recovery (see also NRC, 2008c). For direct injection projects leading to drinking water augmentation, the reclaimed water is required to meet drinking water standards in addition to project-specific water quality criteria before it is injected into a potable aquifer. In these systems, the additional treatment provided in the subsurface is usually limited to temperature equilibration and blending with ambient groundwater. Storing reclaimed water after direct injection in the subsurface may also provide additional inactivation of any remaining viruses. During a deep-well (~100 ft [300 m] below surface) injection study, Schijven et al. (2000) spiked pretreated surface water with bacteriophage (MS2 and PRD1) and observed a 6-log removal within the first 8 m of travel, followed by an additional 2-log removal during the subsequent 98 ft (30 m) of travel. The degree of water quality transformations can vary with the flow path and contact time in the subsurface. Depending on the geological conditions of the subsurface, water quality degradation is possible;

for example, redox change can result in dissolution of certain constituents from the soil matrix, including iron, manganese, or arsenic.

Infiltration rates of direct injection wells are much higher than infiltration rates in spreading basins, although direct injection wells can become clogged at the interface of the gravel envelope of a well and the aquifer. Considerable research has been conducted to understand factors that contribute to clogging and to develop approaches to evaluate the clogging potential due to biological activity and suspended solids (Asano et al., 2007). These approaches can assess the relative clogging potential of different waters, but they cannot provide an absolute prediction of clogging in injection wells. Therefore, more reliable design and operational criteria are needed for a sustainable operation. The costs of direct injection wells can also be significant where deep aquifers are used for storage, which increases the well construction costs as well as the energy costs for injecting water to maintain proper infiltration rates (Asano et al., 2007).

A high level of pretreatment is usually employed to minimize the risk of clogging and to avoid costs for redeveloping clogged injection wells. In several potable reuse applications in the United States, RO treatment is employed prior to direct injection (Table 2-3, Chapter 2). This degree of treatment, however, reduces the biodegradable organic carbon, thereby limiting the biological activities in the subsurface environments and reducing the effectiveness of the natural subsurface treatment with respect to achieving attenuation of contaminants. At OCWD in the early 2000s, low-molecular-weight compounds, such as NDMA, were present in RO permeate and persisted after direct injection during subsurface transport, presumably because co-metabolic reactions that can remove these compounds were not adequately stimulated in the aquifer (Drewes et al., 2006; Sharp et al., 2007).

Surface Managed Natural Systems

In addition to offering aesthetic benefits, habitat, and recreational opportunities, managed natural surface water systems can provide benefits with respect to water quality. One of the main differences between surface and subsurface managed natural systems is that managers of surface water systems must frequently satisfy competing demands and multiple objectives. For example, in addition to providing water quality benefits, engineered treatment wetlands frequently serve as habitat for birds and provide recreational and educational benefits for the community. At the same time, they have the potential to serve as breeding grounds for mosquitoes and other vectors.

Another important difference between surface and subsurface systems is the way in which the water flows. With the exception of fractured bedrock, the soil and groundwater systems used in managed subsurface treatment processes lead to predictable flow patterns and residence times in the subsurface. In addition, the high surface area of soil and geological materials supports microbial growth, facilitating biological attenuation processes. In contrast, managed surface systems often exhibit preferential flow and lower biological activity. As a result, a poorly managed natural surface system has a higher potential for providing less-effective treatment than expected, with hydraulic short-circuiting and low biological activity leading to little contaminant attenuation.

Treatment Wetlands

Treatment wetlands have been used to treat reclaimed water for nonpotable and potable reuse (see Box 2-10). Treatment wetlands are built as either subsurface-flow or surface-flow systems. Subsurface-flow wetlands consist of plants growing within a gravel bed through which reclaimed water flows, whereas surface-flow systems consist of wetland plants growing in anywhere from 0.5 to 2 feet (0.15 to 0.6 m) of flowing surface water with occasional deeper areas to enhance mixing and provide habitat (Kadlec and Knight, 1996). Subsurface-flow wetlands are more common in colder climates and in locations where there are concerns about contact with contaminants in the reclaimed water (e.g., when wetlands are used for treatment of primary effluent). With respect to water reclamation, subsurface-flow wetlands may be better suited for decentralized treatment of primary or secondary effluent (e.g., septic tank effluent) than for wastewater from full-scale treatment plants.

Surface-flow wetlands are less expensive to build and maintain and provide better habitat and aesthetic benefits and are therefore more common in warmer climates. Ammonia is usually removed from reclaimed water through nitrification prior to discharge to surface-flow wetlands because ammonia toxicity affects the growth of plants and can be detrimental to resident fish that control mosquitoes.

Surface-flow wetlands frequently provide good removal of contaminants present in wastewater effluent. In particular, ample data indicate that surface-flow wetlands remove nitrate through denitrification in anoxic zones, and phosphorus through settling of particulate phosphate and uptake by growing plants (Kadlec and Knight, 1996). Wetlands also are effective in the removal of particles that settle out at the low flow velocities encountered in the wetland. As a result, wetlands provide removal of particle-associated pathogens and metals. Aerobic microorganisms living near the air–water interface and nitrate-reducing microbes below the surface also can transform organic contaminants as they metabolize decaying plants and organic matter present in the reclaimed water. Concentrations of certain trace organic chemicals, such as trihalomethane disinfection byproducts, also can decrease in treatment wetlands through volatilization (Rostad et al., 2000). Laboratory microcosm studies demonstrate the ability of microorganisms and organic compounds in wetlands to transform numerous trace organic chemicals (Gross et al., 2004; Matamoros et al., 2005; Matamoros and Bayona, 2006; Waltman et al., 2006).

Comparison of results from laboratory- or pilot-scale wetland studies with full-scale systems often indicates that hydraulic short-circuiting can result in significant decreases in treatment efficacy. For example, between 30 and 40 percent of the steroid hormones entering a pilot-scale surface treatment wetland were removed over a hydraulic residence time of approximately 2 days (Gray and Sedlak, 2005). The associated full-scale wetland, which had nearly identical plant species and a nominal hydraulic residence time of over a week, should have achieved removals exceeding 90 percent, but monitoring of the inflow and outflow of the full-scale system failed to show significant removals. Presumably, this apparent discrepancy is due to hydraulic short-circuiting, which has been observed in tracer tests of the full-scale system (Lin et al., 2003). These types of findings are consistent with tracer studies of full-scale treatment wetlands that frequently show that preferential flow paths can become dominant within wetlands, meaning that a large fraction of the flow receives little treatment (Lightbody et al., 2008). Therefore, active management of surface-flow treatment wetlands is crucial to achieving effective treatment.
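The effect of short-circuiting on removal can be illustrated with a simple two-path calculation. The Python sketch below is not the analysis used in the cited studies. It assumes first-order decay, ideal plug flow along each path, and a wetland split into one fast and one slow flow path that together preserve the nominal mean residence time. The 10-day nominal residence time, the 60 percent fast-path fraction, and the 1-day fast-path travel time are hypothetical values chosen only for illustration.

```python
import math


def first_order_rate(removal: float, residence_days: float) -> float:
    """Rate constant k (1/day) implied by a removal fraction over a residence
    time, assuming first-order decay: removal = 1 - exp(-k * t)."""
    return -math.log(1.0 - removal) / residence_days


def plug_flow_removal(k: float, residence_days: float) -> float:
    """Removal fraction for ideal plug flow with first-order decay."""
    return 1.0 - math.exp(-k * residence_days)


def two_path_removal(k: float, nominal_days: float,
                     fast_fraction: float, fast_days: float) -> float:
    """Flow-weighted removal when part of the flow short-circuits.

    The slow-path residence time is chosen so that the flow-weighted mean
    residence time still equals the nominal value.
    """
    if fast_fraction <= 0.0:
        return plug_flow_removal(k, nominal_days)
    slow_fraction = 1.0 - fast_fraction
    slow_days = (nominal_days - fast_fraction * fast_days) / slow_fraction
    remaining = (fast_fraction * math.exp(-k * fast_days)
                 + slow_fraction * math.exp(-k * slow_days))
    return 1.0 - remaining


# Rate constant from the upper end of the pilot-scale range (40% in 2 days).
k = first_order_rate(0.40, 2.0)

NOMINAL_DAYS = 10.0   # hypothetical nominal residence time ("over a week")
FAST_FRACTION = 0.6   # hypothetical share of flow on the preferential path
FAST_DAYS = 1.0       # hypothetical residence time on that path

ideal = plug_flow_removal(k, NOMINAL_DAYS)
short = two_path_removal(k, NOMINAL_DAYS, FAST_FRACTION, FAST_DAYS)
print(f"ideal plug flow:       {ideal:.0%} removal")
print(f"with short-circuiting: {short:.0%} removal")
```

Under these assumptions, the same nominal residence time yields markedly lower removal once a large share of the flow follows the fast path, which is the qualitative pattern the tracer studies describe.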

Reservoirs

As mentioned previously, surface-water reservoirs frequently are managed to preserve or enhance water quality. Procedures for proper management of reservoirs that receive reclaimed water are not well established because there are a limited number of reservoirs that receive reclaimed water and the contribution of reclaimed water to the overall volume of the reservoirs is typically small. Concentrations of trace organic chemicals usually are quite low and it is difficult to assess the potential for removal from reservoirs. There is a clear research need to better understand the contribution of various attenuation processes (i.e., biotransformation, photolysis, sorption to particulate matter, and dilution) for trace organic chemicals and pathogens in surface reservoirs receiving reclaimed water.

Despite these limitations, insight into the potential importance of contaminant attenuation in reservoirs can be gained from data on reservoirs and lakes that receive discharges of wastewater effluent. For example, Poiger et al. (2001) demonstrated that the pharmaceutical diclofenac underwent photolysis in the surface waters of Lake Greifensee in Switzerland, which receives a significant fraction of its overall flow from wastewater treatment plants. Monitoring data and models of the stratified lake demonstrated that diclofenac concentrations were significantly lower in the epilimnion of the lake because photolysis rapidly transformed the compound. Thus, for those compounds that undergo photolysis (e.g., diclofenac, sulfamethoxazole) as well as waterborne pathogens that are inactivated by sunlight, the surface-to-volume ratio of the reservoir and the depth of the drinking water plant intake both could be important to the concentration of contaminants in the water entering the treatment plant.
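One way to see why the surface-to-volume ratio matters is to average a photolysis rate over the depth of a well-mixed layer. The Python sketch below is an illustration and not the model used by Poiger et al. (2001). It assumes that light decays exponentially with depth (Beer-Lambert attenuation) and that the first-order photolysis rate is proportional to the local light intensity. The near-surface rate and the attenuation coefficient are hypothetical values.

```python
import math


def depth_averaged_rate(k_surface: float, attenuation: float,
                        depth_m: float) -> float:
    """Mean first-order photolysis rate (1/day) in a well-mixed layer.

    Averages k_surface * exp(-attenuation * z) over z from 0 to depth_m,
    which gives k_surface * (1 - exp(-a * H)) / (a * H).
    """
    optical_depth = attenuation * depth_m
    if optical_depth == 0.0:
        return k_surface
    return k_surface * -math.expm1(-optical_depth) / optical_depth


K_SURFACE = 1.0    # 1/day, hypothetical near-surface photolysis rate
ATTENUATION = 1.5  # 1/m, hypothetical light attenuation coefficient

for depth in (1.0, 5.0, 20.0):
    k_mean = depth_averaged_rate(K_SURFACE, ATTENUATION, depth)
    half_life = math.log(2.0) / k_mean
    print(f"mixed depth {depth:4.1f} m: mean rate {k_mean:.3f}/day, "
          f"half-life {half_life:.1f} days")
```

Under these assumptions the depth-averaged rate falls roughly in inverse proportion to the mixed depth once the layer is much deeper than the light penetration depth, so shallow reservoirs, or intakes drawing from a shallow sunlit layer, would see faster photolytic loss.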

In recent years, there has been increasing federal attention to the impacts of nutrients on surface water ecosystems. EPA has encouraged states to develop and adopt numeric nutrient criteria for nitrogen and phosphorus, which could affect the viability of surface discharge of reclaimed water without nutrient removal.2

A portfolio of treatment options, including engineered and managed natural treatment processes, exists to mitigate microbial and chemical contaminants in reclaimed water, facilitating a multitude of process combinations that can be tailored to meet specific water quality objectives. Advanced treatment processes are also capable of addressing contemporary water quality issues related to potable reuse involving emerging pathogens or trace organic chemicals. Ways to integrate these technologies through alternative system designs that ensure water quality are discussed in Chapter 5.

Advances in membrane filtration have made membrane-based processes particularly attractive for reuse applications. Membrane advances have resulted in treatment approaches for nonpotable and potable reuse applications that are associated with a smaller space requirement, reduced labor requirement, ease of process automation, more effective pathogen removal (in particular with respect to protozoa and bacteria), consistent effluent quality, and potentially reduced chemical demand. The drawbacks of this technology are potentially higher capital costs, the limited life span of membranes, the complexity of the operation, and the potential for irreversible membrane fouling that reduces productivity. Compared with robust conventional media filters, membrane systems require a higher degree of maintenance and operating strategies directed at achieving optimal performance.

Environmentally sustainable and cost-effective options for brine disposal are limited in inland areas. Many of the earliest potable water reuse projects were established in coastal communities where brine concentrate from RO systems could be disposed of by ocean discharge. As a result, many coastal utilities still favor RO to mitigate salinity and risks from trace organic chemicals to produce high-quality water for potable reuse. However, limited cost-effective concentrate disposal alternatives hinder the application of membrane technologies for water reuse in inland communities. Instead, inland potable water reuse projects are increasingly relying on treatment trains that do not include RO, such as process combinations that involve managed natural treatment systems, activated carbon, ozonation, or AOP.

The lack of clear and standardized guidance for design and operation of engineered natural systems is the biggest deterrent to their expanded use, in particular for potable reuse applications. Engineered natural systems that replace certain advanced treatment unit processes are compelling from an operational standpoint, but little is known about how operating conditions could be modified and retention times shortened to achieve a predictable water quality while using a smaller footprint. Additional research is needed to elucidate key attenuation processes in engineered natural systems and quantify their effects on microbial and chemical constituents of concern so that guidance for design and operation can ultimately be developed. Although each application will still require a thorough site-specific assessment, general design standards and operating procedures as well as appropriate monitoring approaches can foster a wider application of natural systems as part of reuse schemes.

_____________

2 See http://water.epa.gov/scitech/swguidance/standards/criteria/nutrients/progress.cfm.