Ensuring Water Quality in Water Reclamation

A consistent reclaimed water quality can be achieved through appropriate treatment strategies (e.g., high-level disinfection, process redundancy), technical controls (e.g., alarm shutdowns, frequent inspection procedures), online monitoring devices (e.g., effluent turbidity, residual chlorine concentration), and/or operational controls to react to upsets and variability. Similar to drinking water practices, quality control in potable reuse projects is provided by monitoring and operational response plans, whereas quality assurance embeds the principle of establishing multiple barriers and an assessment and provision of treatment reliability. This chapter discusses the state of the science of water reuse design and operational principles to ensure water quality. Additionally, the chapter includes discussion of the role of an environmental buffer within the multiple-barrier concept. The committee then summarizes these considerations by presenting 10 steps that can be taken to ensure water quality in potable and nonpotable water reuse projects.

DESIGN PRINCIPLES TO ENSURE QUALITY AND RELIABILITY

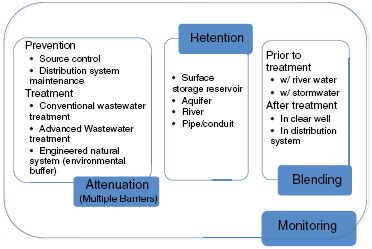

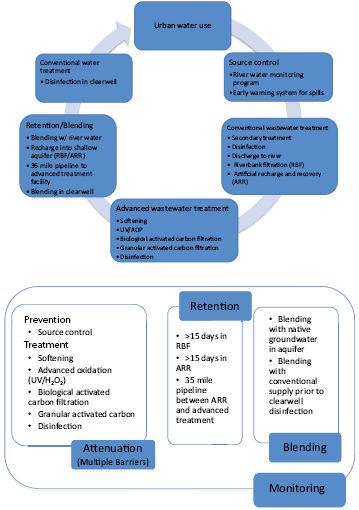

The primary goal of any reuse project is that public health is protected continually and the finished water quality is acceptable to consumers. Four elements—monitoring, attenuation, retention, and blending—are typically embedded into the design of both nonpotable and potable reuse schemes to ensure a reclaimed water quality that is suitable for the desired use at all times (see Figure 5-1). The extent of monitoring, contaminant attenuation, retention, and blending required for a particular water reuse application (e.g., industrial, agricultural, potable) will depend on project-specific water quality objectives and the potential impacts from system failure. The following discussions focus primarily on potable reuse applications, for which rigorous quality assurance is essential, although the design concepts can be adapted to nonpotable applications as well.

FIGURE 5-1 Four elements often used in the design of nonpotable and potable reuse schemes: monitoring, attenuation, retention, and blending.

Water Quality Monitoring

As with conventional drinking water supplies, water quality monitoring for potable water reuse is composed of a combination of online monitoring devices (e.g., filter effluent turbidity, chlorine residual, pH) and discrete measurements using grab or composite samples (e.g., ammonia, nitrate, dissolved organic carbon [DOC], Escherichia coli) to ensure the quality of the finished product water. These practices usually follow standards and protocols similar to those applied in drinking water treatment. Although these monitoring controls can fail, the acknowledged imperfection of the monitoring technology is comparable to that of drinking water treatment facilities. In some states potable reuse systems are required to include water retention after discharge from the treatment plant (e.g., in surface or subsurface storage of the product water). In theory, this retention allows time for additional contaminant attenuation and for water to be diverted from the distribution system if water quality problems are detected. However, significant water retention is often not cost-effective for potable reuse projects. Additionally, past experience with water reuse has demonstrated that unanticipated contaminants can be detected in final product water, even when state-of-the-art treatment and monitoring programs are employed (e.g., see Box 3-2 on NDMA).

An idealized monitoring program would measure critical microbial and chemical contaminants in real time in the finished product water before it leaves the reclamation plant. The availability of instantaneous monitoring techniques could allow significant reduction of required reclaimed water retention times. Water quality goals would need to be well defined, and measuring techniques would need to be selected with sensitivity suitable for confirming that water treatment goals have been achieved. Although several new techniques to monitor pathogens and a diverse set of chemicals in real time have recently been proposed (Panguluri et al., 2009; Cahill et al., 2010; Puglisi et al., 2010), significant additional research is required to develop reliable and appropriate approaches to real-time monitoring that are suitable for water reclamation settings. Also, to be truly protective of public health, such monitoring programs would need to be comprehensive enough to include all potential contaminants that pose significant risks in the anticipated reuse applications. Real-time monitoring techniques that are both sufficiently comprehensive and sensitive are unlikely to be available in the next decade. Thus, in the meantime, alternative approaches to quality assurance are needed to address shortcomings in real-time monitoring of contaminants.

The problem of ensuring the quality of an ongoing production operation is not new. The food and drinking water industries have faced it for some time, particularly where pathogens are concerned. Where drinking water is concerned, this need has been addressed by a three-part strategy: (1) characterizing critical elements that control the performance of unit processes in removing specific contaminants, (2) identifying parameters that can be reliably monitored and used to confirm that these elements are in place and that the processes are performing as expected, and (3) routine analysis of certain constituents in samples taken from the finished water to confirm that the previous measures are reliable.

Recently, a monitoring approach with similar components has been proposed for management of trace organic chemicals in potable reuse schemes (Drewes et al., 2008). This approach combines the monitoring of bulk parameters (i.e., surrogates) and a select number of indicator chemicals to ensure proper performance of unit processes. In this work, performance indicators and surrogate parameters are defined as follows:

• Indicator: “An indicator compound is an individual chemical occurring at a quantifiable level, that represents certain physicochemical and biodegradable characteristics of a family of trace organic constituents that are relevant to fate and transport during treatment. It provides a conservative assessment of removal” (Drewes et al., 2008).

• Surrogate—“A surrogate parameter is a quantifiable change of a bulk parameter that can measure the performance of individual unit processes or operations in removing trace organic compounds” (Drewes et al., 2008). Surrogates can often be used in real time.

As an analogy, the measurement of indicators plays a similar role to the measurement of E. coli in drinking water, and the monitoring of surrogates plays a role similar to the monitoring of chlorine residual and contact time. This analogy makes it clear that the indicators and surrogates concept can be extended to address virtually any constituent targeted by a treatment train.
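As a minimal sketch of how the surrogate and indicator definitions above might be turned into an operational check, the Python below computes the fractional removal of a parameter across a unit process and compares it with a minimum expected removal. The function names, parameters, example measurements, and thresholds are hypothetical illustrations and are not values taken from Drewes et al. (2008).

```python
def fractional_removal(influent: float, effluent: float) -> float:
    """Fraction of a parameter removed across a unit process (1.0 = complete removal)."""
    if influent <= 0:
        raise ValueError("influent value must be positive")
    return (influent - effluent) / influent


def process_performing(influent: float, effluent: float, min_removal: float) -> bool:
    """True when the observed removal meets the minimum expected removal."""
    return fractional_removal(influent, effluent) >= min_removal


# Hypothetical surrogate: a bulk parameter measured online before and after a process.
surrogate_ok = process_performing(influent=0.20, effluent=0.08, min_removal=0.5)

# Hypothetical indicator chemical measured in grab samples (ng/L).
indicator_ok = process_performing(influent=400.0, effluent=20.0, min_removal=0.9)

print(surrogate_ok, indicator_ok)
```

In this sketch the surrogate check could run continuously, while the indicator check would run only as often as grab samples are analyzed, which mirrors the complementary roles described above.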

In 2010, an independent scientific advisory panel appointed by the California State Water Resources Control Board endorsed this concept to ensure proper performance of water reclamation processes that remove trace organic chemicals. The panel suggested a combination of appropriate surrogate parameters and performance-based and health-based indicator chemicals for monitoring reclaimed water quality of surface spreading operations (i.e., soil aquifer treatment) and direct injection projects in California (Anderson et al., 2010). Indicator chemicals were selected with a range of properties in an attempt to account for unknown chemicals and newly developed compounds that may be released to the environment in the future, provided they fall within the range of chemical properties covered. This committee encourages further development of this concept.

Monitoring requirements usually become more stringent (e.g., more frequent sampling and more constituents to be monitored) as the potential for human contact with the reclaimed water increases. Municipal wastewater can contain thousands of chemicals originating from consumer products (e.g., household chemicals, personal care products, pharmaceutical residues), human waste (e.g., natural hormones), industrial and commercial discharges (e.g., solvents, metals), or chemicals that are generated during water treatment (e.g., transformation products; see also Chapter 3). Thus, it is appropriate for monitoring programs for reclaimed water used for potable applications to be more comprehensive than programs commonly used for monitoring water quality for conventional drinking water supplies.

Attenuation

Attenuation of microbial and chemical contaminants of concern can be achieved by establishing multiple barriers. A reuse scheme usually is composed of a combination of treatment barriers that are suitable to reduce the concentrations of compounds of concern and preventive measures that control exposure to certain contaminants, although the actual number of barriers differs among different reuse projects (Drewes and Khan, 2010). Tailored source control programs that limit the discharge from industrial activities to a municipal sewer system or the maintenance of a reclaimed water distribution system are examples of preventive barriers. Attenuation of water quality constituents of concern can occur through conventional wastewater treatment, advanced water treatment, or engineered natural systems.

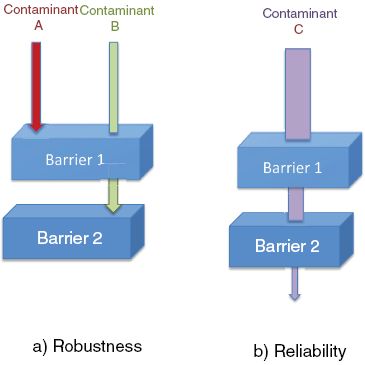

Multiple barriers are an important concept in ensuring that performance goals are met. Multiple barriers accomplish this objective in two ways: (1) by expanding the variety of contaminants the process train can effectively address (i.e., robustness) and (2) by improving the degree to which the process can be relied upon to remove any one of them (i.e., reliability, or the extent of consistent performance of a unit process to attenuate a contaminant). These principles are illustrated in Figure 5-2. Multiple barriers can also provide redundancy (defined as a series of unit processes that is capable of attenuating the same type of contaminant) so that if one process fails another is still in the line (Haas and Trussell, 1998; NRC, 1998). Additionally, even when true redundancy is not provided, multiple barriers can reduce the consequences of a failure when it does occur (Olivieri et al., 1999; Crittenden et al., 2005).

FIGURE 5-2 Multiple barriers function in two ways: (a) robustness (increasing the variety of contaminants addressed) and (b) reliability (decreasing the likelihood that any one contaminant will fail to be removed), in this example by incorporating redundancy.

Given the nature of the associated risk, the performance criteria of multiple barriers are generally different for pathogens, which can cause acute (sudden and severe) health effects, as compared with organic chemicals, which can cause chronic health effects after prolonged or repeated exposures in drinking water scenarios (see also Chapter 6). Acute health effects from exposure to organic chemicals in drinking water or reclaimed water are highly unlikely absent cross connections or backflows. From a public health standpoint, disinfection, which addresses acute risks, is the process element that requires the highest degree of reliability for applications involving significant human contact. In the case of pathogens in potable reuse projects, the performance expectation is that the overall objective for pathogen reduction needs to be met even if a single treatment barrier fails (NRC, 1998). The level of redundancy applied to address microbial constituents is typically not applied in the same way to multiple barriers for chemicals, because significantly elevated risks from most chemical constituents are associated with long-term exposure. Instead, multiple barriers for organic contaminants are designed to encompass a sequence of different processes capable of targeting classes of chemicals with different physicochemical properties, given the wide range of different chemicals present in reclaimed water (Drewes and Khan, 2010). For example, multiple barriers for chemical contaminants might consist of an advanced oxidation process followed by granular activated carbon (GAC), in which the GAC attenuates chemicals that are not amenable to oxidation.
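The expectation that the pathogen reduction objective be met even if a single barrier fails can be illustrated with a short calculation. The sketch below assumes that log reduction credits of barriers in series are additive and that a failed barrier contributes no credit; the barrier names, credit values, and target are hypothetical and do not represent any regulatory requirement.

```python
from typing import Mapping


def total_log_reduction(credits: Mapping[str, float]) -> float:
    """Sum of log10 reduction credits for barriers operating in series."""
    return sum(credits.values())


def meets_target_with_one_failure(credits: Mapping[str, float], target_log: float) -> bool:
    """True if the target is still met when any single barrier provides zero credit."""
    total = total_log_reduction(credits)
    return all(total - credit >= target_log for credit in credits.values())


# Hypothetical treatment train and virus log reduction credits (illustration only).
train = {
    "membrane filtration": 4.0,
    "reverse osmosis": 2.0,
    "advanced oxidation": 6.0,
    "chlorination": 4.0,
}

print(total_log_reduction(train))
print(meets_target_with_one_failure(train, target_log=10.0))
```

A train that only just meets its target with every barrier in service would fail this check, which is the practical difference between adequate treatment and redundant treatment.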

Retention

Within a water reuse context, retention time may serve two purposes: (1) to allow additional opportunities for attenuation of contaminants and (2) to provide time to respond to system failures or upsets. Retention time can be provided by storing reclaimed water in a surface storage reservoir, storing it in an engineered storage tank, recharging it to an unconfined or confined aquifer, releasing it into a segment of a river, or conveying it through a pipeline system. Proper documentation should be provided of how the water provider would be able to respond to specific types of upsets, including strategies for diverting compromised product water to avoid contaminated water reaching consumers and to ensure that the desired retention time is actually provided.
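The second purpose of retention, providing time to respond, can be checked with simple arithmetic. The following sketch assumes an idealized nominal retention time (storage volume divided by flow, with no short-circuiting) and hypothetical durations for detecting a problem, deciding on a response, and diverting water; none of these values come from the report.

```python
def nominal_retention_hours(storage_volume_m3: float, flow_m3_per_h: float) -> float:
    """Nominal hydraulic retention time, assuming ideal flow with no short-circuiting."""
    if flow_m3_per_h <= 0:
        raise ValueError("flow must be positive")
    return storage_volume_m3 / flow_m3_per_h


def can_respond_in_time(retention_h: float, detection_h: float,
                        decision_h: float, diversion_h: float) -> bool:
    """True if the full response sequence fits within the available retention time."""
    return detection_h + decision_h + diversion_h <= retention_h


# Hypothetical engineered storage tank and response plan (illustration only).
retention = nominal_retention_hours(storage_volume_m3=12_000.0, flow_m3_per_h=500.0)
print(retention)
print(can_respond_in_time(retention, detection_h=8.0, decision_h=2.0, diversion_h=1.0))
```

Because real reservoirs and aquifers rarely behave as ideal reactors, the fastest-moving water may arrive well before the nominal retention time, so a check of this kind is only a starting point for documenting that the desired retention time is actually provided.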

Blending

Blending of reclaimed water with a water source other than wastewater (e.g., surface water, stormwater, native groundwater) may occur prior to treatment of reclaimed water in engineered processes or after treatment prior to a distribution system. For advanced treatment processes that demineralize reclaimed water and remove trace chemicals, it may be necessary to balance the water chemistry by blending after treatment for public health concerns (e.g., absence of magnesium and calcium), to enhance taste, to prevent downstream corrosion (e.g., calcium saturation index), and to minimize damage to soils (e.g., sodium adsorption ratio) and crops (e.g., magnesium deficiency) (Tchobanoglous et al., 2011). Blending with traditional sources can also ensure some degree of contaminant dilution if a treatment system failure occurs. It is noteworthy that in many cases the blending water might actually represent a lower quality source. Therefore, a careful evaluation of the water quality prior to and after blending is warranted to avoid any degradation of the final product water.
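The effect of blending on a conservative (nonreactive) constituent follows from a flow-weighted mass balance. The sketch below is a minimal illustration under that assumption; the function name and all concentrations are hypothetical.

```python
def blended_concentration(c_reclaimed: float, c_other: float,
                          reclaimed_fraction: float) -> float:
    """Flow-weighted concentration of a conservative constituent after blending."""
    if not 0.0 <= reclaimed_fraction <= 1.0:
        raise ValueError("reclaimed_fraction must be between 0 and 1")
    return reclaimed_fraction * c_reclaimed + (1.0 - reclaimed_fraction) * c_other


# Hypothetical calcium levels (mg/L): demineralized reclaimed water vs. another source.
calcium = blended_concentration(c_reclaimed=2.0, c_other=60.0, reclaimed_fraction=0.5)

# Hypothetical contaminant (ug/L) that is higher in the blending water than in the
# reclaimed water, so blending degrades rather than improves the product.
contaminant = blended_concentration(c_reclaimed=0.1, c_other=1.0, reclaimed_fraction=0.5)

print(calcium, contaminant)
```

The second case shows why the water quality before and after blending needs to be evaluated: the same mass balance that restores minerals can also raise contaminant concentrations when the blending source is of lower quality.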

Balancing Monitoring, Attenuation, Retention, and Blending

The need for using retention and/or blending to ensure water quality is dependent on the reliability and robustness of the measures taken for attenuation and monitoring. Early projects using limited technologies for attenuation and monitoring depended heavily on retention and blending. In the future, as more advanced technologies are used for attenuation that address a broader variety of contaminants with greater reliability and as these technologies are supported by improved techniques for monitoring and control, retention and blending will have less significance. However, an overarching comprehensive monitoring program tailored to the specific barriers and local conditions of a reuse scheme is necessary in all water reuse systems to ensure proper performance of each barrier.

Role of the Environmental Buffer in the Multiple-Barrier Concept

Up to the present time, the environmental buffer has often been a core element of the multiple-barrier concept in potable reuse projects. As discussed in Chapter 2, an environmental buffer is a water body or aquifer that is perceived by the public as natural and serves to eliminate the connection between the water and its past history. It also may provide some or all of the following design elements discussed in the previous section: (1) attenuation of contaminants of concern, (2) provision of retention time, and (3) blending (or dilution). The performance of various environmental buffers is discussed in Chapter 4.

Attenuation of contaminants can occur in certain environmental buffers (e.g., wetlands, soil aquifer treatment, riverbank filtration). In this function, an engineered natural treatment system can be used before or after an aboveground water reclamation plant. However, the role of environmental buffers in attenuation of contaminants is not well documented. As detailed in Chapter 4, contaminant attenuation has been reported for some environmental buffers. However, considering site-specific differences, environmental buffers are likely to exhibit some variability in performance with respect to contaminant attenuation.

There is no widely accepted standard for retention time in environmental barriers for potable reuse systems. The retention provided by various examples discussed in this report varies from days to more than 6 months. Retention is particularly uneven where de facto reuse is concerned. Additionally, relying on environmental buffers as the only means of lengthening response times is questionable, especially in systems with short hydraulic residence times.

For potable reuse projects implemented through groundwater recharge, blending or dilution of reclaimed water with water deemed not to be of wastewater origin can occur before application or in aquifers. For surface water augmentation, blending typically occurs in a raw drinking water reservoir. The extent of dilution varies with the different natural systems, and can range from substantial dilution (<1 percent reclaimed water) to minimal dilution (>50 percent reclaimed water). As mentioned before, the need for blending depends heavily on the nature of the process train employed for attenuation.

Currently, the use and application of an environmental buffer for potable reuse is based on regulatory guidance and current practice rather than specific scientific evidence. Sufficient science does not currently exist to determine if current guidance is, in fact, appropriately protective, overprotective, or underprotective of public health. From a public outreach perspective, environmental buffers have often been perceived as important for gaining public acceptance as they create the perception of a “natural” system and provide time to respond to potential problems should they arise (Ruetten et al., 2004). NRC (1998) described a “loss of identity” that occurs in an environmental buffer, although the committee noted that “loss of identity is an issue that seems more relevant to public relations than public health protection” (NRC, 1998).

During the past decade, extensive research on the performance of reuse operations using modern engineered systems (Ternes et al., 2003; Drewes et al., 2003b; Snyder et al., 2006c; Bellona et al., 2008) as well as those using environmental buffers (Fox et al., 2001; Laws et al., 2011; Maeng et al., 2011) has demonstrated that some engineered systems can perform as well as some existing environmental buffers in diluting and attenuating contaminants. The proper use of indicators and surrogates in the design of reuse systems also offers the potential to address many concerns regarding quality assurance (Drewes et al., 2008). This committee concludes that the practice of classifying potable reuse projects as indirect and direct based on the presence or absence of an environmental buffer is not meaningful to an assessment of the final product water quality because it cannot be demonstrated that such “natural” barriers provide any public health protection that is not also available by other means. Moreover, the science required to design for uniform protection from one environmental buffer to the next is not available.

Accordingly, although the committee does view environmental buffers as useful elements of design that should be considered along with other processes and management actions in formulating potable water reuse projects, the committee does not consider environmental buffers to be an essential element of potable reuse projects. Rather than relying on environmental buffers to provide public health protection that is poorly defined, the level of quality assurance required for public health protection needs to be better defined so that potable reuse systems can be designed to provide it, with or without environmental buffers. A more quantitative understanding of the protections provided by different environmental buffers will allow engineered natural systems to be more effectively designed and operated.

Case Studies for System Design

The role of the design elements mentioned earlier (monitoring, attenuation, retention, blending) can be illustrated using three case studies that practice drinking water augmentation. The three case studies employ different treatment processes, including engineered unit processes as well as engineered natural treatment systems that provide attenuation of contaminants, and they provide a final quality of drinking water that is considered safe by public health agencies and accepted by the public. It is noteworthy that the sequence and location of the individual treatment barriers within the potable reuse scheme also differ.

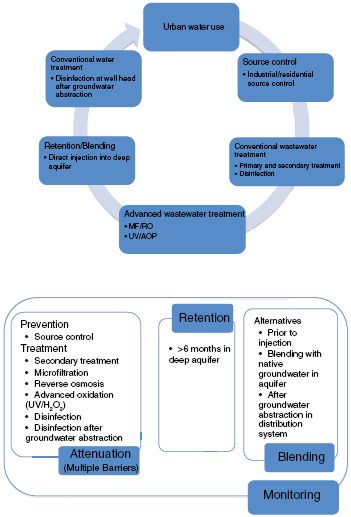

• Case Study 1 describes a groundwater recharge project favoring direct injection of reclaimed water into a potable aquifer after advanced treatment (Figure 5-3). This case study is similar to the practice of groundwater recharge established by the Orange County Water District (see also Box 2-11) or West Basin Municipal Water District in California.

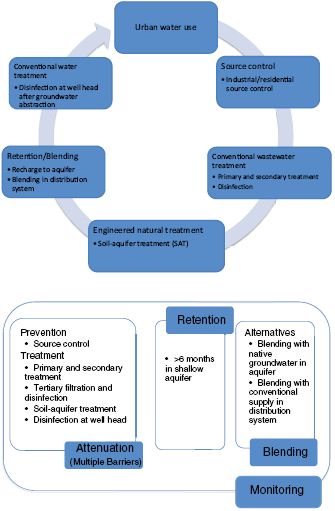

• Case Study 2 (Figure 5-4) illustrates a groundwater recharge project employing surface spreading followed by soil aquifer treatment. The case study is similar to the groundwater recharge operation in the Montebello Forebay operated by the County Sanitation Districts of Los Angeles County and the Water Replenishment District of Southern California (see also Box 4-2).

• Case Study 3 (Figure 5-5) represents a groundwater recharge scenario using a combination of engineered natural treatment systems with advanced engineered unit processes for drinking water augmentation, similar to that established by the Prairie Waters Project of the City of Aurora, Colorado (see also Box 4-1).

For each case study, the key processes that provide attenuation of contaminants are highlighted, the retention process is identified, and the role of blending in these projects is characterized. These examples reveal that multiple combinations and sequences of treatment processes can be selected for a potable reuse scheme resulting in comparable qualities of finished drinking water.

OPERATIONAL PRINCIPLES TO ASSURE QUALITY AND RELIABILITY

Treatment plant reliability is defined as the probability that a system can operate consistently over extended periods of time (Olivieri et al., 1987). In the case of a water reclamation plant, reliability might be defined as the likelihood of the plant achieving an effluent that matches or is superior to predetermined water reuse quality objectives. Traditional drinking water treatment plants consider reliability in their operations, but even greater attention to reliability is necessary in water reclamation facilities that supply water for potable reuse or other applications with significant human exposures. Failure of wastewater reclamation treatment processes could result in exposure of the population served by nonpotable or potable reuse applications to considerable health risk, particularly from acute illnesses caused by microbial pathogens (see Chapters 3 and 6). It is therefore important to minimize the probability of failure, or, in other words, to increase reliability. Although appropriate design is necessary to ensure reliable delivery of a product such as reclaimed water (as discussed in the previous section), it is also necessary to maintain an operational protocol to cope with intrinsic variability and react to process and conveyance upsets.
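One simple way to put a number on reliability as defined above (the likelihood of achieving an effluent that meets predetermined quality objectives) is the fraction of historical samples that met the objective. The sketch below assumes an upper-limit objective; the turbidity values and the limit are hypothetical.

```python
from typing import Sequence


def empirical_reliability(values: Sequence[float], upper_limit: float) -> float:
    """Fraction of samples at or below the water quality objective."""
    if not values:
        raise ValueError("at least one sample is required")
    compliant = sum(1 for v in values if v <= upper_limit)
    return compliant / len(values)


# Hypothetical daily filter effluent turbidity readings (NTU) and objective.
turbidity_ntu = [0.08, 0.10, 0.12, 0.09, 0.25, 0.11, 0.10, 0.07, 0.13, 0.10]
print(empirical_reliability(turbidity_ntu, upper_limit=0.2))
```

This estimate only reflects variability observed while the plant was running; it says nothing about periods when the plant was out of service, which is the distinction between water quality variability and operational reliability.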

Some definitions of reliability only encompass the variability associated with treatment processes and assume that the plant is properly designed, operated, and maintained. Expansion of the definition of reliability to include the probability that the plant will be nonfunctional at any given time requires an evaluation of plant operational reliability, separate from reclaimed water quality variability. Operational reliability is affected by mechanical, design, process, or operational failures, which may be triggered by a wide range of causes, including human error or severe weather events. Previous sections of this chapter discuss ways to incorporate reliability into project design.

FIGURE 5-3 Case Study 1: Potable reuse design elements (including attenuation, retention, and blending) used for groundwater recharge of reclaimed water.

NOTE: Residential source control could include voluntary programs to reduce the discharge of potentially problematic chemicals.

Reliability analysis can also be used to reveal weak points in the process so that corrections and/or modifications can be made. Even a well-maintained, well-operated plant is not perfectly reliable, and some variation will necessarily be inherent in any system (e.g., variations in influent flow and quality can lead to variation in effluent characteristics). Other factors, including power outages, equipment failure, and operational (human) error also affect plant reliability and need to be incorporated into the reliability analysis (Olivieri et al., 1987). There are a number of formal techniques for assessing reliability by looking at historical performance of individual components (e.g., pumps, valves, electric supply) and the potential for various hazards (e.g., storms, wind, earthquakes) to occur. By using historical data of these individual events (including data from individual components in other applications), failure or event trees can be constructed (Rasmussen, 1981; Kumamoto and Henley, 1996), and the probability distribution of consequences of different levels of severity can be illustrated.
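A very small failure tree shows how component-level probabilities can be combined into a system-level estimate. The sketch below assumes that all basic events are statistically independent, which real analyses often cannot assume (a storm, for example, may disable several components at once). The tree structure and every probability are hypothetical.

```python
from math import prod
from typing import Iterable


def and_gate(probabilities: Iterable[float]) -> float:
    """Probability that all independent events occur (e.g., duty and standby both fail)."""
    return prod(probabilities)


def or_gate(probabilities: Iterable[float]) -> float:
    """Probability that at least one of several independent events occurs."""
    return 1.0 - prod(1.0 - p for p in probabilities)


# Hypothetical probabilities of failure on demand for individual components.
power_loss = and_gate([0.01, 0.05])    # grid supply AND backup generator fail
dosing_loss = and_gate([0.02, 0.02])   # duty pump AND standby pump fail

# Top event: disinfection is lost if either power or dosing is lost.
disinfection_loss = or_gate([power_loss, dosing_loss])
print(disinfection_loss)
```

Working through a tree like this makes weak points visible: the branch that contributes most to the top-event probability is the first candidate for added redundancy or maintenance attention.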

FIGURE 5-4 Case Study 2: Potable reuse design elements (including attenuation, retention, and blending) used for surface spreading of reclaimed water followed by soil aquifer treatment.

Strategies for Incorporating Reliability into System Operation

No matter how well designed a treatment system is, there will be inevitable fluctuations in performance due to intrinsic variability of processes, variability in the influent stream, equipment failures, and human error. Therefore, systems delivering potable reclaimed water need to incorporate deliberate strategies to ensure reliable operation. The centrality of the operational plan in ensuring water quality has been emphasized by the World Health Organization (WHO, 2005) in its concept of water safety plans.

FIGURE 5-5 Case Study 3: Potable reuse design elements (including attenuation, retention, and blending) used for riverbank filtration of reclaimed water followed by softening, advanced oxidation, and carbon adsorption.

One formal approach for ensuring operational reliability is the hazard analysis and critical control points (HACCP) framework. HACCP was developed in the late 1950s to ensure adequate food quality for the nascent National Aeronautics and Space Administration program. HACCP was further developed by the Pillsbury Corporation and ultimately codified by the National Advisory Committee on Microbiological Criteria for Foods (NACMC, 1997). The resulting framework consists of a seven-step sequence outlined in Box 5-1. These principles are important parts of the international food safety protection system. The development of HACCP ended reliance on testing of the final product as the key determinant of quality and instead emphasized the importance of understanding and controlling each step in a processing system (Sperber and Stier, 2009).

Havelaar (1994) was one of the first to note that the drinking water supply, treatment, and distribution

BOX 5-1

Steps of the HACCP Framework and Application to Potable Reuse

The following seven steps represent the key components of the HACCP framework (adapted from NACMC, 1997), which was originally developed for food safety but has been applied to other areas, including drinking water quality.

1. Conduct a hazard analysis. Under HACCP, hazards are chemical or microbial constituents likely to cause illness if not controlled.

2. Determine the critical control points (CCPs). Defined originally for the food sector, a critical control point is “any point in the chain of food production from raw materials to finished product where the loss of control could result in unacceptable food safety risk” (Unnevehr and Jensen, 1996).

3. Establish critical limit(s). Critical limits are performance criteria—specific maximum or minimum values of biological, chemical, or physical parameters that are readily measurable—that must be attained in each process (at the CCPs) to prevent occurrence of a hazard or reduce it to an acceptable level. These parameters will be process-specific and determined through experimentation, computational models, quantitative risk analysis, or a combination of such methods (Havelaar, 1994; Notermans et al., 1994).

4. Establish a system for monitoring the CCPs.

5. Establish the corrective action(s) that will be taken when monitoring signals that a CCP is not under control.

6. Establish verification procedures to confirm that the HACCP system is working effectively.

7. Document all procedures and records relevant to these HAACP principles and their application.

Application to Potable Reuse

As an illustration of the use of HACCP, the committee developed the following set of steps that might be followed to implement this framework in the potable reuse context using an example of managing risks from pathogenic organisms:

1. Identify the critical organisms of interest, considering the type of source water used, that are likely to cause illness if not controlled. Determine the overall log reductions needed after treatment, given the nature of an incoming water to achieve the targeted final acceptable risk level and allocate these reductions among individual treatment processes.

2. Enumerate CCPs for water reclamation, considering each particular treatment process in the treatment train as well as the overall treatment method.

3. Given criteria in the finished reclaimed water, determine the minimum performance criteria for each treatment process. Note that these performance criteria should be based on easily measurable parameters (e.g., surrogates, residual chlorine) that can be used for operational control.

4. Establish a monitoring system to track the identified performance criteria at the critical control points. The finished product of a reclamation system is only acceptable for utilization when the performance criteria are all within the acceptable bounds.

5. Establish an operational procedure for implementing appropriate corrective actions at a particular installed process should a performance criterion be outside acceptable limits. These actions might include additional holding time, recirculating the water to allow for additional treatment, or some other measure. These procedures would also include actions to protect public health in the case of systemwide failure (e.g., natural disaster leading to extended power failure).

6. Establish a quality assurance process for periodic validation and auditing (e.g., by an independent third-party organization) to assess that the procedures are working effectively.

7. Document all procedures and records.

chain has a formal analogy to the food supply, processing, transport, and sale chain and that HACCP could be applicable to water treatment. The U.S. Surface Water Treatment Rule under the Safe Drinking Water Act (SDWA; 40 CFR Parts 141-142) and its subsequent amendments incorporate a HACCP-like process. Under this framework, an implicitly acceptable level of viruses and protozoa in treated water was defined. Based on this level, specific processes operated under certain conditions (e.g., filter effluent turbidity for granular filters) were “credited” with certain removal efficiencies, and a sufficient number of removal credits needed to be in place, depending on an initial program of monitoring the microbial quality of the supply itself. The use of a treatment technique in drinking water regulation is an option when it is not “economically or technically feasible to set an MCL” (SDWA § 1412(b)(7)(A)). HACCP has also been used as a framework for the Australian Drinking Water Guidelines (NHMRC, 2004), which have been expanded to address potable reuse (see Box 5-2; NRMMC/EPHC/NHMRC, 2008). Box 5-1 highlights an example of how the HACCP approach might be applied in the context of reclaimed water to ensure operational reliability.
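The crediting approach described above lends itself to simple arithmetic, because removal credits expressed as log10 reductions add across processes in series (the corresponding removal fractions multiply). The sketch below is illustrative only: the function names, the process names, the credit values, and the target are hypothetical and are not taken from the rule. It assumes a credit counts only while the process is operating within the conditions under which the credit was granted.

```python
def credited_log_removal(train):
    """Sum log10 removal credits for processes that are currently in spec.

    `train` is a list of (process_name, log_credit, in_spec) tuples.
    """
    return sum(credit for _name, credit, in_spec in train if in_spec)


def fraction_remaining(log_removal):
    """Convert a total log10 removal into the fraction of organisms remaining."""
    return 10.0 ** (-log_removal)


# Hypothetical credits and target, for illustration only.
TRAIN = [
    ("granular filtration", 2.0, True),
    ("chlorine disinfection", 2.0, True),
]
REQUIRED_LOG_REMOVAL = 4.0

total = credited_log_removal(TRAIN)
print("credited log removal:", total)
print("target met:", total >= REQUIRED_LOG_REMOVAL)
```

If the filter in this example drifted out of its operating condition, its `in_spec` flag would become `False`, the credited total would drop to 2.0, and the target would no longer be met.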

BOX 5-2

Australian Potable Reuse Guidelines

The Australian Guidelines for Water Recycling: Augmentation of Drinking Water Supplies (Phase 2) (NRMMC/EPHC/NHMRC, 2008) were developed to complement the Australian Drinking Water Guidelines (NHMRC, 2004). The approach to risk management for potable reuse is modeled on the approach developed for the Australian Drinking Water Guidelines and incorporates a generic framework applicable to any system that is reusing water based on 12 elements focusing on ensuring safety and reliability, rather than verification monitoring. The framework incorporates HACCP principles, based on a risk management approach designed to assure water quality at the point of use. The guidelines also provide detailed information on topics such as setting health-based targets for microorganisms and chemicals, the effectiveness of various treatment processes, CCPs, and monitoring.

In the Australian potable reuse guidelines, approaches for calculating contaminant guideline values based on toxicological data and specific guideline values for individual contaminants, as outlined in the Australian Drinking Water Guidelines, are applied to potable reuse. Microbial risk is evaluated using disability adjusted life years (DALYs), performance targets, and reference pathogens (based on WHO, 2008; see also Box 10-4). The tolerable microbial risk adopted in the potable reuse guidelines is 10⁻⁶ DALYs per person per year, which is roughly equivalent to 1 diarrheal illness per 1,000 people per year. The approach adopted in these guidelines for chemical parameters is based on approaches and guideline values outlined in the Australian Drinking Water Guidelines. The potable reuse guidelines also describe an approach, using thresholds of toxicological concern, for addressing chemicals without guideline values or those that lack sufficient toxicological information for guideline derivation (see also Chapter 6 for further discussion of this and other methods).

STEPS TO ENSURE WATER QUALITY IN WATER REUSE

In the following section, the committee identifies reasonable steps that can and should be taken to ensure water quality in potable and nonpotable water reuse projects. These steps address potential public health impacts from microbial pathogens and chemical contaminants found or likely to be found in reclaimed water and include considerations of reliability and quality assurance, and therefore merit careful consideration from designers and managers of reuse projects. The extent of each activity will depend on the type of reuse (nonpotable vs. potable) and degree of exposure:

1. Implement and maintain an effective source control program.

2. Utilize the most appropriate technology in wastewater treatment that is tailored to site-specific conditions.

3. Utilize multiple, independent barriers, especially for the removal of microbiological and organic chemical contaminants.

4. Employ quantitative reliability assessments to monitor and assess performance including major and minor process failures (i.e., both process control and final water quality monitoring and assessment as well as assessment of mechanical reliability).

5. Establish a trace organic chemical monitoring program that goes beyond currently regulated contaminants.

6. Document a strategy to provide retention time necessary to allow time to respond to system failures or upsets (e.g., this could be based, in part, on turnaround time to receive water quality monitoring results).

7. Provide alternative means of diverting product water that does not meet required standards.

8. Avoid “short-circuiting” in environmental buffers to ensure maintenance of appropriate retention times within the buffers (e.g., groundwater, wetlands, reservoirs).

9. Train and certify operators of advanced water reclamation facilities regarding the principles of operation of advanced treatment processes, and educate them on the pathogenic organisms and chemical contaminants likely to be found in wastewaters and the relative effectiveness of the various treatment processes in reducing microbial and chemical contaminant concentrations. This is important because, in general, operators at water reclamation facilities have not received training on the operation of advanced water treatment processes or the public health aspects associated with drinking water.

10. Institute formal channels of coordination between water reclamation agencies, regulatory agencies, and agencies responsible for public water systems. This will, for example, allow for rapid communication and immediate corrective action(s) to be taken by the appropriate agency (or agencies) in the event that the reclaimed water does not meet regulatory requirements.
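Step 6 ties the required retention time to the time needed to detect and respond to a failure. One way to reason about this is sketched below. The function names, the three-term breakdown, and the numbers are hypothetical. The sketch assumes the worst case, in which a failure begins immediately after a sample is taken, so a full sampling interval passes before the next sample, followed by the turnaround time for results and the time needed to carry out a corrective action such as diversion (step 7).

```python
def worst_case_response_h(sampling_interval_h, result_turnaround_h, action_h):
    """Worst-case hours from the onset of a failure to a completed response.

    Assumes the failure starts just after a sample was collected.
    """
    return sampling_interval_h + result_turnaround_h + action_h


def retention_shortfall_h(available_retention_h, sampling_interval_h,
                          result_turnaround_h, action_h):
    """Hours by which available retention falls short (0.0 if adequate)."""
    needed = worst_case_response_h(sampling_interval_h, result_turnaround_h, action_h)
    return max(0.0, needed - available_retention_h)


# Hypothetical values: daily sampling, 48-hour laboratory turnaround,
# 6 hours to divert flow.
needed_h = worst_case_response_h(24.0, 48.0, 6.0)
print("retention needed (h):", needed_h)  # 24 + 48 + 6 = 78.0
print("shortfall with 72 h of storage:", retention_shortfall_h(72.0, 24.0, 48.0, 6.0))
```

Under these assumptions, shortening the monitoring turnaround (for example, with online surrogate measurements) reduces the retention time that must be provided.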

CONCLUSIONS AND RECOMMENDATIONS

In both nonpotable and potable reuse schemes, monitoring, contaminant attenuation processes, post-treatment retention time, and blending can be effective tools for achieving quality assurance. Today, most reuse projects find it necessary to employ all these elements. Attenuation can be achieved through the establishment of multiple barriers (consisting of treatment and prevention approaches) to minimize public health risks. Over the last 15 years, several potable reuse projects of significant size have been developed in the United States. Although these projects share the design principle of multiple barriers, the type and sequence of water treatment processes employed in these schemes differ significantly. All these schemes have demonstrated that different configurations of unit processes can achieve similar levels of water quality and reliability. In the future, as new technologies improve capabilities for both monitoring and attenuation, it is expected that retention and blending requirements currently imposed on many potable reuse projects will become less significant in quality assurance.

Reuse systems should be designed with treatment trains that include reliability and robustness. Redundancy strengthens the reliability of contaminant removal, which is particularly important for contaminants with acute effects, while robustness employs combinations of technologies that address a broad variety of contaminants. Reuse systems designed for applications with possible human contact should include redundant barriers for pathogens that cause waterborne diseases. Potable reuse systems should employ diverse processes that can function as barriers for many types of chemicals, considering the wide range of physicochemical properties of chemical contaminants.
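The value of redundancy can be illustrated with a simple probability calculation. The sketch below is a simplification under a strong assumption: barrier failures are treated as statistically independent, so the chance that every barrier fails at once is the product of the individual failure probabilities. Common-cause events, such as an extended power failure, violate this assumption. The function name and the probabilities are hypothetical.

```python
import math


def prob_all_barriers_fail(failure_probs):
    """Probability that every barrier fails at the same time.

    Assumes failures are independent, which common-cause events
    (e.g., a systemwide power failure) would violate.
    """
    if not failure_probs:
        raise ValueError("at least one barrier is required")
    for p in failure_probs:
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    return math.prod(failure_probs)


# Hypothetical per-barrier failure probabilities, for illustration only.
single = prob_all_barriers_fail([0.01])
redundant = prob_all_barriers_fail([0.01, 0.01])
print("one barrier:", single)
print("two independent barriers:", redundant)
```

With these illustrative numbers, adding a second independent barrier lowers the probability of simultaneous failure by a factor of about 100. This is why the independence of barriers matters as much as their number.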

Reclamation facilities should develop monitoring and operational plans to respond to variability, equipment malfunctions, and operator error to ensure that reclaimed water released meets the appropriate quality standards for its use. Redundancy and quality reliability assessments, including process control, water quality monitoring, and the capacity to divert water that does not meet predetermined quality targets, are essential components of all reuse systems. Particularly in potable reuse, systems need to be designed to be “fail-safe.” The concept of HACCP, water safety plans, or their equivalent may be used as a guide for such operational plans. A key aspect involves the identification of easily measurable performance criteria (e.g., surrogates), which are used for operational control and as a trigger for corrective action.

Natural systems are employed in most potable water reuse systems to provide an environmental buffer. However, it cannot be demonstrated that such “natural” barriers provide public health protection that is not also available by other engineered processes. Environmental buffers in potable reuse projects may fulfill some or all of three design elements: (1) provision of retention time, (2) attenuation of contaminants, and (3) blending (or dilution), although the extent of these three factors varies widely across different environmental buffers. In some cases engineered natural systems, which are generally perceived as beneficial to public acceptance, can be substituted for engineered unit processes. However, the science required to design for uniform protection from one environmental buffer to the next is not available.

The potable reuse of highly treated reclaimed water without an environmental buffer is worthy of consideration, if adequate protection is engineered within the system. Historically, the practice of adding reclaimed water directly to the water supply without an environmental buffer (a practice referred to as direct potable reuse) has been rejected by water utilities, by regulatory agencies in the United States, and by previous National Research Council committees. However, research during the past decade on the performance of several full-scale advanced water treatment operations indicates that some engineered systems can perform as well as or better than some existing environmental buffers in diluting (if necessary) and attenuating contaminants, and the proper use of indicators and surrogates in the design of reuse systems offers the potential to address many concerns regarding quality assurance. Environmental buffers can be useful elements of design that should be considered along with other processes and management actions in formulating the composition of potable water reuse projects. However, environmental buffers are not essential elements to achieve quality assurance in potable reuse projects. Additionally, the classification of potable reuse projects as indirect (i.e., includes an environmental buffer) and direct (i.e., does not include an environmental buffer) is not productive from a technical perspective because the terms are not linked to product water quality.