Evaluating the Risks of Potable Reuse in Context

In this chapter, the committee summarizes the findings of previous National Research Council (NRC) committees as they examined the question of the safety of water reuse. Building on the risk assessment methodologies presented in Chapter 6, the committee then presents a comparative analysis of potential health risks of potable reuse in the context of the risks of a common present-day circumstance: a conventional drinking water supply derived from surface water that receives a small percentage of treated wastewater (see Chapter 2). By means of this analysis, the committee compares the estimated risks of a drinking water source generally perceived as safe (i.e., de facto potable reuse) against the estimated risks of two other potable reuse scenarios.

PREVIOUS NRC ASSESSMENTS OF REUSE RISKS

The 1982 Committee on Quality Criteria for Water Reuse, citing the many unknowns in making an assessment of the health effects of potable reuse, adopted the view that “the quality of reused water could be compared to that of conventional drinking water supplies, which are assumed to be safe” (NRC, 1982). That committee was providing advice for an extensive testing program being undertaken by the U.S. Army Corps of Engineers on the treatment of an effluent-dominated Potomac River as part of an evaluation of the future water supply for Washington, D.C., and it outlined specific testing procedures for evaluating the treated water based on the state of science in 1982. A second NRC committee reviewed the results of the Corps’ testing program and found them inconclusive, primarily because the Corps had not included all the tiers of toxicological testing recommended (NRC, 1984).

In the years that followed, more extensive toxicological tests were conducted for other proposed potable reuse projects, particularly in Tampa (CH2M Hill, 1993) and Denver (Lauer and Rogers, 1996). In reviewing those data (see Box 6-2), which did not demonstrate any adverse health effects, a third NRC committee concluded that such tests provide a database too limited to draw general conclusions about the safety of potable reuse (NRC, 1998). NRC (1998) also pointed out new concerns that had arisen since the earlier NRC reports and outlined new testing techniques that had been developed. The committee also recommended that new toxicity tests be conducted, particularly long-term fish exposure testing, which were partially implemented in subsequent evaluations in Orange County and Singapore. Advice on testing methods will continue to evolve as science advances, but based on the progression of this research, it is evident that, although such testing might be used to show evidence of potential health risk, it cannot be used to establish the absence of risk.

Examining this history from the vantage point of 2011, the most profound contribution of the 1982 committee was the idea that the quality of the water in potable reuse scenarios should be compared with the quality of conventional drinking waters, which are assumed safe. Advice on specific tests that might be useful in making these comparisons will continue to follow developments in the underlying science, but it is unlikely that any laboratory test will ever establish the absence of health risk in a drinking water from any source. This chapter builds on that foundation, comparing the estimated health risk of water from potable reuse projects with that of conventional supplies using established tools of risk assessment.

The 1982 committee went on to say that the comparison should be made with the highest quality water that can be obtained from that locality, even if that source may not be in use. In a similar vein, the 1998 committee, after concluding that planned potable reuse is viable, suggested that planned potable reuse should be “an option of last resort—to be adopted only if all the alternatives are technically or economically infeasible” (NRC, 1998). All three committees (NRC, 1982, 1984, and 1998) also took the view that U.S. drinking water regulations were not intended to protect public health when raw water supplies were heavily contaminated with municipal and industrial wastewater.

In the committee’s judgment, current circumstances call for a reassessment of those views. First, as shown in Chapter 1, the United States has been operating near the limit of its water supply for several decades, since about the time of the first study. As a result of further stress from continued population growth and climate change, this report is being written with a view to providing useful advice to the nation as it comes to terms with this new world, where pristine water is ever less abundant even as the domestic wastewater from an increasing population is discharged into the nation’s waterways. Second, as demonstrated in Chapter 2, de facto reuse (i.e., when a drinking water source consists of some significant percentage of treated wastewater effluent from an upstream discharger) is becoming increasingly common in the United States. Finally, it has become evident to the committee that, in many communities, today’s drinking water regulations are already being employed to address the quality of drinking water prepared from water supplies that have substantial wastewater content (see also Chapter 10 for a discussion of regulations). Although this fact does not imply that the regulations are adequate for that charge, it does reflect a notable shift in perspectives since the prior NRC reuse reports were written.

Under these conditions, the committee judges that it is appropriate to compare the risk associated with potable reuse projects with the risk associated with de facto reuse scenarios that are representative of the supplies that are widely experienced today. The committee chose to construct a “risk exemplar” to examine how these comparisons might be made. The analysis in this exemplar uses the quantitative risk assessment methods originally proposed for organic chemicals by the NRC (1983) as expanded for microbial contaminants (Haas et al., 1999) and more recently updated (NRC, 2009b). Other methods recently developed to address pharmaceuticals, personal care products, and other anthropogenic contaminants (Rodriquez et al., 2007a,b; Snyder et al., 2008a; Bull et al., 2011) are also used in the analysis to address the risk of classes of contaminants for which rigorous toxicological data are lacking. In the committee’s judgment, these risk assessment techniques represent the best means available at this time for estimating the relative risk in such circumstances (see Chapter 6) and offer a method for evaluating the relative merits of various options for managing health risks from chemical and microbial contaminants in reclaimed water.

The committee chose to develop an exemplary comparison of risks associated with various potable reuse scenarios, including de facto potable reuse, modeled upon circumstances encountered in the United States today. Based on the discussion in Chapter 2, the committee concluded that it would be appropriate to compare the quality of the water in potable reuse scenarios with the quality of a de facto reuse scenario in which a conventional water supply has an average annual wastewater content of 5 percent. This situation is commonly found among current surface water supplies (see Box 2-4). As shown in the figure in Box 2-4, there are many circumstances where de facto reuse exceeds 5 percent, and the committee discussed at length the appropriate wastewater content for use in the exemplar. In the end, 5 percent was selected as a wastewater content that can reasonably be viewed as commonplace and not exaggerated. Swayne et al. (1980) reported that more than 24 million of the 76 million people surveyed were using drinking water supplies with a wastewater content of 5 percent or more under low-flow conditions (see figure in Box 2-4). Although no comparable current data exist, anecdotal evidence based on population growth in urban areas suggests that wastewater content is often higher today.

The comparative risk approach used in this analysis was designed to examine the presence of selected pathogens and trace organic chemicals in final product waters from de facto reuse and two potable reuse scenarios. Contaminant occurrence data, compiled from several sources, were critically evaluated for each scenario. The data were then analyzed to assess whether there are likely to be significantly greater human health concerns from exposure to contaminants in these hypothetical reuse scenarios, compared with a common de facto reuse scenario. For the chemicals in each of the scenarios, a risk-based action level was used, such as the U.S. Environmental Protection Agency’s maximum contaminant levels (MCLs), Australian drinking water guidelines, or World Health Organization drinking water guideline values. Also, a margin of safety is reported, defined as the ratio between a risk-based action level (such as an MCL) and the actual concentration of a chemical in reclaimed water. The resulting ratio between these two values (i.e., the margin of safety) can be used to characterize potential health risks associated with exposure to a chemical (Illing, 2006). For microbials, the dose-response relationships were used to compute risk from a single day of exposure. Additional underlying assumptions are described below.
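The margin-of-safety calculation described above is a simple ratio. The Python sketch below is illustrative only: the function names and example numbers are hypothetical, not values from the exemplar. When a chemical was not detected, it substitutes the detection limit for the concentration, which turns the ratio into a lower bound on the margin of safety, consistent with the ">" entries in Table 7-1.

```python
from typing import Optional, Tuple


def margin_of_safety(action_level: float,
                     concentration: Optional[float] = None,
                     detection_limit: Optional[float] = None) -> Tuple[float, bool]:
    """Return (MOS, is_lower_bound) for one chemical in one product water.

    MOS = risk-based action level / concentration. Both values must use the
    same units (e.g., ng/L). For a non-detect, pass concentration=None and the
    detection limit; the result is then only a lower bound on the true MOS.
    """
    if concentration is not None:
        if concentration <= 0:
            raise ValueError("a detected concentration must be positive")
        return action_level / concentration, False
    if detection_limit is None or detection_limit <= 0:
        raise ValueError("a non-detect needs a positive detection limit")
    return action_level / detection_limit, True


def is_potential_concern(mos: float) -> bool:
    """An MOS below 1 means the concentration exceeds the action level."""
    return mos < 1.0


# Hypothetical example: action level 80 ug/L, measured concentration 20 ug/L.
mos, lower_bound = margin_of_safety(80.0, 20.0)
```

Note that `is_potential_concern` is only conclusive for a measured value; when the MOS is a lower bound and falls below 1, the data neither confirm nor rule out a concern.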

It was beyond the means of the committee to conduct an analysis of every possible contaminant in reclaimed water. In addition, certain assumptions were made to simplify the analysis. The committee focused on four pathogens commonly of concern in reuse applications and selected 24 chemicals representing different classes of contaminants (i.e., nitrosamines, disinfection byproducts (DBPs), hormones, pharmaceuticals, antimicrobials, flame retardants, and perfluorochemicals), for which occurrence and toxicological data were available in the published literature.

Potable Reuse Scenarios Considered in the Exemplar

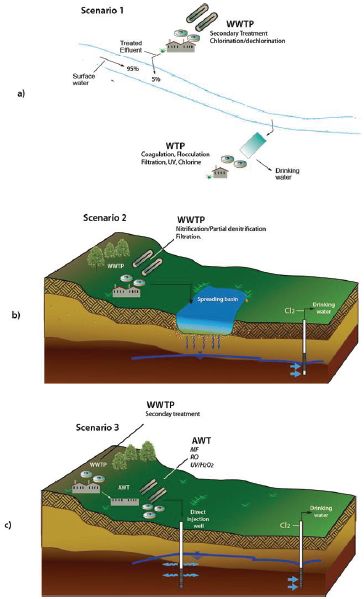

Three hypothetical scenarios were evaluated to compare the relative risk from exposure to pathogens and trace organic chemicals in the conventional water supply and potable reuse scenarios. Scenario 1, the conventional water supply scenario, considers de facto reuse with a conventional drinking water treatment plant drawing water from a supply that receives a 5 percent contribution of disinfected wastewater effluent upstream of the intake for the drinking water plant (Figure 7-1a). The two reuse cases describing drinking water supplies derived from planned potable reuse projects include one with groundwater recharge to a potable aquifer via surface spreading basins with subsequent soil aquifer treatment (SAT; Scenario 2, Figure 7-1b) and one with groundwater recharge to a potable aquifer by direct injection of reclaimed water that has received advanced water treatment (Scenario 3, Figure 7-1c).

Scenario 1: De Facto Reuse (Common Surface Water Supply)

In Scenario 1, a surface water supply that serves as a drinking water source receives discharge from secondary treated wastewater effluent that is disinfected and dechlorinated prior to discharge to meet a standard of 200 fecal coliforms per 100 mL. The surface water is assumed to be free of pathogens, with no measurable trace organic chemicals, prior to effluent discharge.

Attenuation of contaminants after wastewater discharge can vary widely as a function of the distance between the discharge point and the raw drinking water withdrawal (i.e., retention time), stream geometry (i.e., depth, mixing), and environmental conditions such as temperature, ultraviolet light penetration, particulate matter, and biological activity. In this scenario, a worst case is assumed in which no inactivation or attenuation of pathogens or chemicals occurs in the surface water body. The wastewater discharged constitutes 5 percent of the flow in the source at the point where water is abstracted for the drinking water treatment plant. This water is then treated in a conventional drinking water plant employing coagulation/flocculation, followed by granular media filtration with disinfection designed to meet the requirements of the Long Term 2 Enhanced Surface Water Treatment Rule (EPA, 2006a).
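The Scenario 1 assumptions can be written as a one-line mass balance. In this sketch (function and parameter names are hypothetical), the upstream surface water contributes none of the contaminant, effluent makes up 5 percent of the flow at the intake, no in-stream attenuation occurs (the worst case above), and removal in the drinking water plant is expressed as a log10 reduction value (LRV). The example density and LRV are placeholders, not inputs from Appendix A.

```python
def de_facto_tap_concentration(effluent_conc: float,
                               wastewater_fraction: float = 0.05,
                               in_stream_lrv: float = 0.0,
                               plant_lrv: float = 0.0) -> float:
    """Contaminant concentration at the tap for a de facto reuse supply.

    effluent_conc: concentration (or organism density) in the discharged effluent.
    wastewater_fraction: share of river flow at the intake that is effluent.
    in_stream_lrv: log10 attenuation between outfall and intake (0 = worst case).
    plant_lrv: log10 removal credited to the drinking water treatment plant.
    The upstream water is assumed to carry none of the contaminant.
    """
    if not 0.0 < wastewater_fraction <= 1.0:
        raise ValueError("wastewater_fraction must be in (0, 1]")
    if in_stream_lrv < 0 or plant_lrv < 0:
        raise ValueError("log reductions cannot be negative")
    at_intake = wastewater_fraction * effluent_conc  # dilution only
    return at_intake * 10.0 ** (-(in_stream_lrv + plant_lrv))


# Placeholder inputs: 1,000 organisms/L in effluent, 3-log removal in the plant.
tap = de_facto_tap_concentration(1000.0, plant_lrv=3.0)
```

A chemical that the plant does not remove corresponds to `plant_lrv=0`, in which case the tap concentration is simply 5 percent of the effluent concentration.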

Scenario 2: Soil Aquifer Treatment

In Scenario 2, a potable aquifer is augmented with reclaimed water via groundwater recharge by surface spreading. Advanced wastewater treatment in this exemplar assumes secondary treatment, followed by nitrification, partial denitrification, and granular media filtration, but no disinfection. The reclaimed water is applied to surface spreading basins with subsequent SAT (see Chapter 4). It is assumed that the water remains in the subsurface for 6 months with no dilution from native groundwater. The assumption of no dilution is a worst case, more conservative than the hydrogeological characteristics of typical real-world subsurface systems would warrant. This condition was selected so that removal credits are assigned only to physicochemical and biological attenuation processes occurring during SAT. Subsequently, the water is abstracted and disinfected with chlorine at the wellhead prior to consumption, and no blending with other source waters is assumed to occur in the distribution system. This assumption describes a scenario where all the drinking water consumed originates from the reclaimed water source. It is conservative, given that most existing potable reuse projects blend their product water with other sources, providing additional dilution.

FIGURE 7-1 Summary of scenarios examined in the risk exemplar. (a) Scenario 1: a conventional water plant drawing from a source that is 5 percent treated wastewater in origin; (b) Scenario 2: a deep well in an aquifer fed by reclaimed water via a soil aquifer treatment system; and (c) Scenario 3: a deep well drawing from an aquifer fed by injection of reclaimed water from an advanced water treatment (AWT) plant.

Scenario 3: Microfiltration, Reverse Osmosis, Advanced Oxidation, Groundwater Recharge

In Scenario 3, a potable aquifer is augmented with advanced-treated wastewater via groundwater recharge by direct injection. The advanced treatment train includes secondary treatment with chloramination, followed by microfiltration, reverse osmosis, and advanced oxidation using UV irradiation in combination with hydrogen peroxide (UV/H2O2). As in Scenario 2, the water is assumed to remain in the subsurface for 6 months with no dilution from native groundwater, so any attenuation of pathogens and trace organic chemicals in the aquifer is achieved only by physicochemical and biological processes rather than by dilution. Subsequently, the groundwater is abstracted and disinfected at the wellhead with chlorine prior to consumption. This case therefore describes a scenario where 100 percent of the drinking water consumed originates from reclaimed water after advanced water treatment and direct injection into a potable aquifer. This assumption is also conservative, given that most existing potable reuse projects blend their product water with other sources, providing additional dilution.
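Scenario 3 relies on several treatment barriers in series. A common simplification, assumed here rather than taken from Appendix A, is that the log10 removals credited to independent barriers add. The sketch below applies that rule; the barrier names and credit values in the example are hypothetical placeholders, not the removal credits the committee used.

```python
from typing import Mapping


def total_log_removal(barrier_lrvs: Mapping[str, float]) -> float:
    """Sum log10 removal credits, assuming the barriers act independently."""
    for name, lrv in barrier_lrvs.items():
        if lrv < 0:
            raise ValueError(f"negative credit for barrier {name!r}")
    return sum(barrier_lrvs.values())


def product_density(source_density: float,
                    barrier_lrvs: Mapping[str, float]) -> float:
    """Organism density remaining after all barriers in series."""
    return source_density * 10.0 ** (-total_log_removal(barrier_lrvs))


# Hypothetical credits, for illustration only.
credits = {"barrier_a": 2.0, "barrier_b": 1.5, "barrier_c": 0.5}
remaining = product_density(1.0e4, credits)
```

Simple additivity ignores correlated failures, such as two barriers compromised by the same upset, which is one reason reliability and quality assurance across multiple barriers matter in practice.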

Other Scenarios Considered

The construction of an additional scenario, similar to Scenario 2 but with disinfection similar to the 450 mg-min/L requirement in California’s Title 22 regulations, was considered but not included because sufficient data could not be obtained to estimate the impact of disinfection on contaminant concentrations. From a qualitative perspective, this scenario would result in significantly lower levels of microbial exposure, particularly for Salmonella, but higher levels of DBPs would be present: trihalomethanes and haloacetic acids if free chlorine is used, or N-nitrosodimethylamine (NDMA) if combined chlorine is used. A review of the literature shows that these DBPs, particularly NDMA, are typically removed during SAT (Kaplan and Kaplan, 1985; Yang et al., 2005; LACSD, 2008; Zhou et al., 2009; Nalinakumari et al., 2010; Patterson et al., 2011), but vigilance is called for when disinfected effluent is used for SAT because, as shown in the following section, the margin of safety is smaller for DBPs than for most other trace organic chemicals.
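The Title 22 figure quoted above is a CT value: disinfectant residual concentration multiplied by contact time. A minimal check is sketched below. It treats CT as a single product of residual (mg/L) and time (minutes) and does not model how the regulation specifies that residual and contact time be measured; the function names and example values are hypothetical.

```python
TITLE_22_CT = 450.0  # mg-min/L, the requirement cited in the text


def ct_value(residual_mg_per_l: float, contact_time_min: float) -> float:
    """CT = disinfectant residual (mg/L) x contact time (min)."""
    if residual_mg_per_l < 0 or contact_time_min < 0:
        raise ValueError("residual and contact time must be non-negative")
    return residual_mg_per_l * contact_time_min


def meets_ct(residual_mg_per_l: float, contact_time_min: float,
             required: float = TITLE_22_CT) -> bool:
    """True if the simplified CT product reaches the required value."""
    return ct_value(residual_mg_per_l, contact_time_min) >= required


# 5 mg/L held for 90 minutes gives exactly 450 mg-min/L.
ok = meets_ct(5.0, 90.0)
```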

Further details for Scenarios 1 through 3 are provided in Appendix A with respect to water quality characteristics, attenuation, and generation of contaminants during various treatment steps.

Contaminants Considered in the Risk Exemplar

The committee considered a broad cross section of common pathogenic bacteria, viruses, and protozoa, as well as regulated and nonregulated trace organic chemicals that have been reported in reclaimed water or surface water receiving wastewater discharge, to determine the contaminants to be considered in the risk exemplar. The contaminants that were ultimately selected met the following criteria: (1) sufficient information was available on their occurrence, health effects, fate and transport, and behavior in treatment systems such that reasonable calculations could be made for each scenario; and (2) they are either recognized to be of concern based on possible health effects or they are of interest to the public.

Four pathogens were selected: adenovirus, norovirus, Salmonella, and Cryptosporidium. All of these organisms are transmitted by the fecal-oral route, and all play an important role in waterborne illness in the United States. All four pathogens have been studied in effluents, and dose-response data are available for each. Salmonella is a classic bacterial pathogen associated with both food- and waterborne disease, while the significance of the other three pathogens has only become clear in recent decades. Toxigenic Escherichia coli was originally considered as well, but sufficient dose-response data were not available.

Potential adverse health effects associated with trace organic chemicals in drinking water are an important concern among stakeholders and the public. As noted in Chapter 3, reclaimed water can contain chemicals originating from consumer products (e.g., personal care products, pharmaceuticals), human waste (e.g., natural hormones), and industrial and commercial discharges (e.g., solvents). The reclaimed water, itself, can also contain compounds that are generated during water treatment (e.g., DBPs). Collectively, the number of potential compounds present in reclaimed water is in the thousands. For the risk exemplar, 24 chemicals were selected (see Box 7-1) that represent different classes of these contaminants (i.e., DBPs, including nitrosamines; hormones; pharmaceuticals; antimicrobials; flame retardants; and perfluorochemicals).

The starting concentrations for the microbial and chemical contaminants were selected on the basis of a review of contaminant occurrence data in the scientific literature. More details are provided in Appendix A.

BOX 7-1

Chemicals Selected for Evaluation in the Risk Exemplar

| Disinfection Byproducts | Hormones and Pharmaceuticals | Others |
| --- | --- | --- |
| Bromate | 17β-Estradiol | Triclosan |
| Bromoform | Acetaminophen (paracetamol) | Tris(2-chloroethyl)phosphate (TCEP) |
| Chloroform | Ibuprofen | Perfluorooctanesulfonic acid (PFOS) |
| Dibromoacetic acid (DBCA) | Caffeine | Perfluorooctanoic acid (PFOA) |
| Dibromoacetonitrile (DBAN) | Carbamazepine | |
| Dibromochloromethane (DBCM) | Gemfibrozil | |
| Dichloroacetic acid (DCAA) | Sulfamethoxazole | |
| Dichloroacetonitrile (DCAN) | Meprobamate | |
| Haloacetic acid (HAA5) | Primidone | |
| Trihalomethanes (THMs) | | |
| N-Nitrosodimethylamine (NDMA) | | |

Assumptions Concerning Fate, Transport, Removal, and Estimates of Risk

The assumptions in the exemplar concerning the fate, transport, and removal of the pathogens and chemicals considered in each scenario are discussed in detail in Appendix A. Literature references are provided for the sources of the data that make up each scenario, including expected densities or concentrations following the various treatment steps (including both engineered treatment systems and engineered natural systems), to characterize the fate of contaminants from the initial water or wastewater source to the product water at the consumer’s tap. Appendix A also describes the quantitative microbial risk assessment methods used to estimate the resulting risk of disease and the chemical risk assessment techniques used to derive risk-based action levels for chemicals in reclaimed water.

Exemplar Results

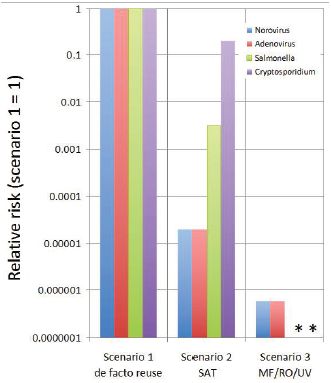

The goal of the exemplar exercise is to illustrate the relative risk among the scenarios. In Appendix A, the qualities of the surface and reclaimed water and the final water qualities are described for each case as well as the rest of the assumptions behind the exemplar. Scenario 1 represents a scenario to which the public is already commonly exposed in many locations throughout the United States and which is generally regarded as safe, whereas Scenarios 2 and 3 represent planned potable reuse projects. Because of the nature of the risk characterization tools employed, risks from pathogens are displayed in a different form than the risks from chemicals. The pathogen risks are calculated as an estimate of the risk of increased gastroenteric illness. These data also can be usefully displayed as a relative risk—the risks of the potable reuse Scenarios 2 and 3 relative to the risks of Scenario 1—de facto reuse (Figure 7-2).
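The pathogen calculations rest on dose-response models (Haas et al., 1999). The sketch below uses two standard forms, the exponential model and the approximate beta-Poisson model, to turn a one-day dose into a probability of infection, then normalizes scenario risks to Scenario 1 in the manner of Figure 7-2. Which model and parameters the committee applied to each pathogen is documented in Appendix A and is not reproduced here; the 2 L/day intake, the illness-given-infection factor, and all parameter values below are illustrative assumptions.

```python
import math
from typing import Dict


def exponential_p_inf(dose: float, r: float) -> float:
    """Exponential model: 1 - exp(-r*dose), via expm1 for accuracy at tiny doses."""
    return -math.expm1(-r * dose)


def beta_poisson_p_inf(dose: float, alpha: float, beta: float) -> float:
    """Approximate beta-Poisson model: 1 - (1 + dose/beta)**(-alpha)."""
    return -math.expm1(-alpha * math.log1p(dose / beta))


def daily_illness_risk(density_per_l: float, r: float,
                       litres_per_day: float = 2.0,
                       p_ill_given_inf: float = 1.0) -> float:
    """One day's risk of illness using the exponential model (illustrative)."""
    dose = density_per_l * litres_per_day
    return exponential_p_inf(dose, r) * p_ill_given_inf


def relative_to_baseline(risks: Dict[str, float],
                         baseline: str = "Scenario 1") -> Dict[str, float]:
    """Normalize each scenario's risk to the de facto reuse baseline."""
    base = risks[baseline]
    if base <= 0:
        raise ValueError("baseline risk must be positive")
    return {name: risk / base for name, risk in risks.items()}


# Hypothetical densities (organisms/L) and a placeholder r parameter.
risks = {
    "Scenario 1": daily_illness_risk(1e-3, r=0.01),
    "Scenario 2": daily_illness_risk(1e-5, r=0.01),
}
rel = relative_to_baseline(risks)
```

At low doses both models are nearly linear in dose, so a 100-fold lower density yields a relative risk close to 0.01, which is the kind of comparison Figure 7-2 displays.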

Figure 7-2 presents a summary of the relative risk of illness from exposure to norovirus, adenovirus, Salmonella, and Cryptosporidium as a result of drinking water from each of the three scenarios. All of the risks have been normalized to Scenario 1, the de facto potable reuse example. As shown, both potable reuse scenarios have reduced risks: for the SAT supply, especially where viruses are concerned, and for the microfiltration/reverse osmosis/UV supply, for all four organisms. In the latter instance, the densities of Salmonella and Cryptosporidium are estimated to be reduced to such low levels that the model was unable to calculate a risk. On the basis of these calculations, the committee concludes that microbial risks from these potable reuse scenarios are much less than those from de facto reuse.

FIGURE 7-2 Relative risk of illness (gastroenteritis) to persons drinking water from each of the reuse scenarios relative to de facto reuse (Scenario 1). The smaller the number, the lower the relative risk of the reuse applications for each organism. For example, in Scenario 2, the risk of illness due to Salmonella is estimated to be less than 1/100th of the risk due to Salmonella in Scenario 1.

NOTES: *The risks for Salmonella and Cryptosporidium in Scenario 3 were below the limits that could be assessed by the model.

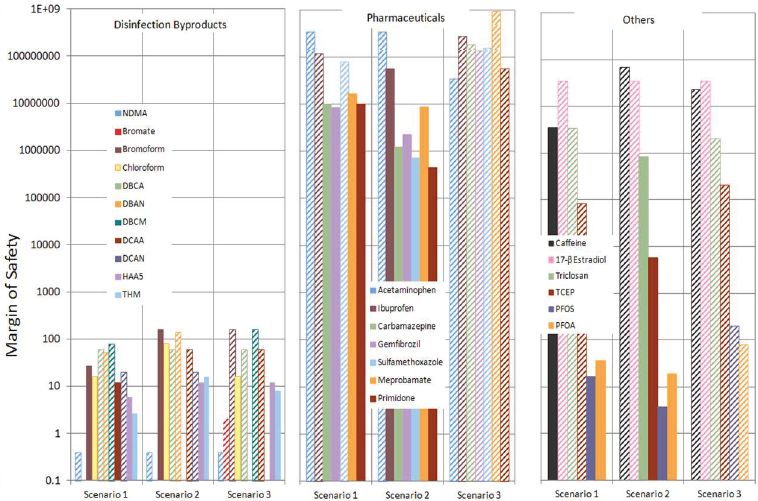

Table 7-1 summarizes the estimates of the margin of safety for each of the 24 organic compounds studied in the exemplar. These are also displayed graphically in Figure 7-3.

Findings of the Risk Exemplar

The results of the risk assessment (Table 7-1, Figures 7-2 and 7-3) can be used to ascertain whether the particular process trains produce water of an acceptable quality. Note that these assessments were based on an ingestion scenario. For other end uses, such as showering, some modification in the analyses would need to be made.

For the pharmaceuticals, triclosan, and TCEP, the margin of safety exceeds 1,000 in all three scenarios and in most cases exceeds 1,000,000 (a margin of safety lower than 1 indicates potential concern). The perfluorinated chemicals (PFOA and PFOS) have lower margins of safety, but these still exceed 1 in all three scenarios. With one exception, the DBPs are shown to have margins of safety above 1. For NDMA, the data for all three scenarios show that it was below the limit of detection, but the detection limit (2 ng/L) exceeds the 10⁻⁶ lifetime cancer risk level used as the risk-based action level for this compound in the exemplar (0.7 ng/L). As a result, the margin of safety for NDMA can only be established as greater than 0.35 for all three scenarios. These results have not identified any chemical that presents a health risk of concern in any of the scenarios studied, although further research is warranted to ensure confidence in these assessments (see Chapter 11). Despite uncertainties inherent in the analysis, these results demonstrate that, with proper diligence and appropriately designed treatment trains (see Chapter 5), potable reuse systems can provide protection from trace organic contaminants comparable to what the public experiences in many drinking water supplies today. As a general rule, DBPs and perfluorinated chemicals deserve continued scrutiny in all drinking water supplies.
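The NDMA result illustrates what happens when the detection limit is higher than the action level. Using the values stated above (action level 0.7 ng/L, detection limit 2 ng/L), the sketch below reproduces the lower bound of 0.35 and computes the detection limit that would be needed to demonstrate a margin of safety of at least 1. The function names are hypothetical.

```python
def mos_lower_bound(action_level: float, detection_limit: float) -> float:
    """For a non-detect, the true concentration is below the detection limit,
    so the true MOS is above action_level / detection_limit."""
    return action_level / detection_limit


def detection_limit_needed(action_level: float, target_mos: float = 1.0) -> float:
    """Largest detection limit that would still demonstrate MOS >= target_mos."""
    return action_level / target_mos


NDMA_ACTION_LEVEL = 0.7     # ng/L, 10^-6 lifetime cancer risk level in the exemplar
NDMA_DETECTION_LIMIT = 2.0  # ng/L, as stated in the text

bound = mos_lower_bound(NDMA_ACTION_LEVEL, NDMA_DETECTION_LIMIT)  # about 0.35
needed = detection_limit_needed(NDMA_ACTION_LEVEL)                # 0.7 ng/L
```

Because the bound is below 1, a non-detect at 2 ng/L can neither confirm nor rule out concern; only a method with a detection limit at or below 0.7 ng/L could show a margin of safety of at least 1.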

TABLE 7-1 Summary of Margin of Safety (MOS) Estimates for the Three Scenarios Analyzed by the Committee

| Chemical | Risk-Based Action Level (a) | MOS, Scenario 1 (de facto reuse) | MOS, Scenario 2 (SAT, no disinfection) | MOS, Scenario 3 (MF/RO/UV) |
| --- | --- | --- | --- | --- |
| Nitrosamines | | | | |
| NDMA | 0.7 ng/L | >0.4 | >0.4 | >0.4 |
| Disinfection byproducts | | | | |
| Bromate | 10 μg/L | N/A | N/A | >2 |
| Bromoform | 80 μg/L | 27 | 160 | >160 |
| Chloroform | 80 μg/L | 16 | 80 | 16 |
| DBCA | 60 μg/L | >60 | >60 | >60 |
| DBAN | 70 μg/L | >54 | >140 | N/A |
| DBCM | 80 μg/L | >80 | N/A | >160 |
| DCAA | 60 μg/L | 12 | >60 | >60 |
| DCAN | 20 μg/L | >20 | >20 | N/A |
| HAA5 | 60 μg/L | 6 | 12 | 12 |
| THM | 80 μg/L | 2.7 | 16 | 8 |
| Pharmaceuticals | | | | |
| Acetaminophen | 350,000,000 ng/L | >350,000,000 | >350,000,000 | >35,000,000 |
| Ibuprofen | 120,000,000 ng/L | >120,000,000 | 56,000,000 | >280,000,000 |
| Carbamazepine | 186,900,000 ng/L | 10,000,000 | 1,200,000 | >190,000,000 |
| Gemfibrozil | 140,000,000 ng/L | 8,600,000 | 2,300,000 | >140,000,000 |
| Sulfamethoxazole | 160,000,000 ng/L | >80,000,000 | 720,000 | >160,000,000 |
| Meprobamate | 280,000,000 ng/L | 17,000,000 | 8,800,000 | >930,000,000 |
| Primidone | 58,100,000 ng/L | 10,000,000 | 450,000 | >58,000,000 |
| Others | | | | |
| Caffeine | 70,000,000 ng/L | 3,500,000 | >70,000,000 | >23,000,000 |
| 17β-Estradiol | 3,500,000 ng/L | >35,000,000 | >35,000,000 | >35,000,000 |
| Triclosan | 2,100,000 ng/L | >3,500,000 | 840,000 | >2,100,000 |
| TCEP | 2,100,000 ng/L | >84,000 | 5,800 | >210,000 |
| PFOS | 200 ng/L | 17 | 4 | >200 |
| PFOA | 400 ng/L | 36 | 19 | >80 |

NOTES: > indicates that the assumed concentration was below detection, and only an upper limit on the risk calculation was determined (i.e., the value shown is a lower limit on the MOS). See Appendix A for further detail. (a) Sources of the risk-based action levels are provided in Table A-11 of Appendix A.

For microbial agents, if one illness or infection per 10,000 persons per year is used as a benchmark, it is apparent that the risks from bacterial and protozoan exposure are below this benchmark for all the scenarios, with the exception of Scenario 1, the de facto reuse example (see Appendix A, Table A-6). In this particular instance, it is likely that the risks for the viruses are overestimated, perhaps as a result of the conversion of the genome copy density to the density of infectious units (IU) and/or because predation and die-off in the stream were neglected. In any case, the consistent use of conservative assumptions throughout all three scenarios ensures that the assessment of the relative risk of one scenario over another is robust. The relative analysis makes it clear that the potable reuse scenarios examined here represent a reduction in microbial risk when compared with the de facto scenario that has become a common occurrence throughout the country.
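The benchmark above is expressed per year, whereas the pathogen risks were computed for a single day of exposure. One common convention in quantitative microbial risk assessment, assumed here and not necessarily the procedure used in Appendix A, treats each day as an independent exposure with the same daily risk, so that P_annual = 1 - (1 - P_daily)^365. The sketch below applies that conversion and the 1/10,000 benchmark; the function names are hypothetical.

```python
import math

ANNUAL_BENCHMARK = 1e-4  # one illness or infection per 10,000 persons per year


def annual_risk(daily_risk: float, days: int = 365) -> float:
    """1 - (1 - p)**days, computed with log1p/expm1 to stay accurate for tiny p."""
    if not 0.0 <= daily_risk < 1.0:
        raise ValueError("daily_risk must be in [0, 1)")
    return -math.expm1(days * math.log1p(-daily_risk))


def max_daily_risk(annual_target: float = ANNUAL_BENCHMARK, days: int = 365) -> float:
    """Largest constant daily risk whose annualized value meets the target."""
    return -math.expm1(math.log1p(-annual_target) / days)


def exceeds_benchmark(daily_risk: float) -> bool:
    """True if the annualized risk is above the 1/10,000 benchmark."""
    return annual_risk(daily_risk) > ANNUAL_BENCHMARK
```

Under this convention, a constant daily risk of roughly 2.7 × 10⁻⁷ corresponds to the annual benchmark.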

It should be emphasized that the committee presents these calculations as an exemplar. This should not be used to endorse certain treatment schemes or determine the risk at any particular site without site-specific analyses. For example, the presence of a chemical manufacturing facility in the service area of a wastewater utility being used for potable reuse would dictate scrutiny of chemicals that might be discharged to the sanitary sewer. In addition, the various inputs and assumptions of this risk assessment contain sources of variability and uncertainty. Good practice in risk assessment would require full consideration of these factors, such as by a Monte Carlo analysis (Burmaster and Anderson, 1994).
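As one illustration of the Monte Carlo approach mentioned above, the sketch below propagates variability in a chemical's concentration into a distribution of margins of safety instead of a single point value. The lognormal concentration distribution, its median and geometric standard deviation, and the action level are hypothetical inputs, and the function names are not from the report.

```python
import math
import random
import statistics
from typing import Dict, List


def simulate_mos(action_level: float, median_conc: float, gsd: float,
                 n: int = 10_000, seed: int = 1) -> List[float]:
    """Draw MOS samples assuming a lognormal concentration distribution.

    median_conc and gsd (geometric standard deviation, > 1) describe the
    hypothetical concentration distribution; action_level is held fixed.
    """
    if median_conc <= 0 or gsd <= 1.0:
        raise ValueError("need median_conc > 0 and gsd > 1")
    rng = random.Random(seed)  # seeded for reproducibility
    mu, sigma = math.log(median_conc), math.log(gsd)
    return [action_level / rng.lognormvariate(mu, sigma) for _ in range(n)]


def summarize(samples: List[float]) -> Dict[str, float]:
    """Percentiles of the MOS distribution and the share of draws below 1."""
    cuts = statistics.quantiles(samples, n=20)  # 5th, 10th, ..., 95th percentiles
    return {
        "p05": cuts[0],
        "median": statistics.median(samples),
        "p95": cuts[-1],
        "fraction_below_1": sum(s < 1.0 for s in samples) / len(samples),
    }


# Hypothetical inputs: action level 80 ug/L, median 20 ug/L, GSD 2.
summary = summarize(simulate_mos(80.0, 20.0, 2.0))
```

Only concentration variability is propagated here; a fuller analysis would also represent uncertainty in the action levels and, for pathogens, in dose-response parameters and treatment performance.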

It is appropriate to compare the risk from water produced by potable reuse projects with the risk associated with the water supplies that are presently in use. The committee conducted an original comparative analysis of potential health risks of potable reuse in the context of the risks of a conventional drinking water supply derived from a surface water that receives a small percentage of treated wastewater. By means of this analysis, termed a risk exemplar, the committee compared the estimated risks of a common drinking water source generally perceived as safe (i.e., de facto potable reuse) against the estimated risks of two other potable reuse scenarios.

The results of the committee’s exemplar risk assessments suggest that the risk from 24 selected chemical contaminants in the two potable reuse scenarios does not exceed the risk in common existing water supplies. The results are helpful in providing perspective on the relative importance of different groups of chemicals in drinking water. For example, DBPs, in particular NDMA, and perfluorinated chemicals deserve special attention in water reuse projects because they represent a more serious human health risk than do pharmaceuticals and personal care products, given their lower margins of safety. Despite uncertainties inherent in the analysis, these results demonstrate that, with proper diligence and tailored advanced treatment trains and/or engineered natural treatment, potable reuse systems can provide protection from trace organic contaminants comparable to what the public experiences in many drinking water supplies today.

FIGURE 7-3 Display of the margin of safety (MOS) calculations for the 24 chemicals analyzed for each of the three scenarios. An MOS <1 is considered a potential concern for human health.

NOTE: Bars with diagonal stripes indicate MOS values that represent a lower limit of the actual value, because the concentration of the contaminant was below the detection limit.

With respect to pathogens, although there is a great degree of uncertainty, the committee’s analysis suggests that the risk from potable reuse does not appear to be any higher than, and may be orders of magnitude lower than, that experienced in at least some current (and approved) drinking water treatment systems (i.e., de facto reuse). State-of-the-art water treatment trains for potable reuse should be adequate to address the concerns of microbial contamination if finished water is protected from recontamination during storage and transport and if multiple barriers and quality assurance strategies are in place to ensure reliability of the treatment processes (see Chapter 5). The committee’s analysis is presented as an exemplar (see Appendix A for details and assumptions made) and should not be used to endorse certain treatment schemes or determine the risk at any particular site without site-specific analyses.