Addressing Issues of Regulatory Oversight

KEY SPEAKER POINTS

- A new paradigm for regulatory oversight assumes, according to Nancy Kass, that it is desirable to increase the quality, value, fairness, and efficiency of health care, health care systems, and institutions and that any learning that does take place must proceed in an ethical way.

- Quality-improvement studies need to take advantage of better experimental designs, Susan Huang said, and they need to be done in multiple settings. Quality-improvement researchers, she added, need to “stop being afraid of randomization,” a fear rooted in the belief that a randomized trial design inevitably leads to a protracted institutional review board approval process.

- Well-designed studies can be turned into rigorous academic publications quickly, said Rainu Kaushal, and researchers can translate their findings into actionable information for policy makers and health care stakeholders without jeopardizing publications.

This session took on the challenges and opportunities related to the legal and ethical oversight of integrating care and research efforts. Nancy Kass, the Phoebe R. Berman Professor of Bioethics and Public Health at the Johns Hopkins Bloomberg School of Public Health and Berman Institute of Bioethics, opened the session with a discussion of an ethical framework for learning health systems. Susan Huang of the University of California, Irvine; James Weinstein, CEO and president of the Dartmouth–Hitchcock Health System; and Peter Margolis, a professor of pediatrics and the director of research at the James M. Anderson Center for Health System Excellence at Cincinnati Children’s Hospital Medical Center, then provided some examples of approaches to dealing with oversight challenges. An open discussion, moderated by Barbara Bierer, the senior vice president for research at Brigham and Women’s Hospital and a professor of medicine at Harvard Medical School, followed the presentations.

Before the first presentation, Bierer commented that as the discussion moves along the continuum of research to practice or practice to research, it is important to remember that the regulatory guidelines of the Office for Human Research Protections (OHRP) define research as a systematic investigation, including research development, testing, and evaluation, designed to develop or contribute to generalizable knowledge. This definition, she said, means that quality improvement projects conducted internally still fall under the provisions of the Common Rule if the intention is to publish the results and make them generalizable.

AN ETHICAL FRAMEWORK FOR LEARNING HEALTH SYSTEMS

Nancy Kass proposed a new way of thinking about ethics and human research, one that moves away from the current “distinctions paradigm,” which is based on the premise that ethics and oversight should be based on whether an activity meets the regulatory definition of research. Instead, Kass proposed a “learning health care system paradigm” in which research and care are integrated and for which ethics and oversight decisions are based on whether there are moral concerns that would be deduced through a variety of considerations.

The ethics and oversight of research came to the public’s attention in the 1960s and 1970s as a result of research ethics problems that gained prominent attention in the media, most notably the Tuskegee Syphilis Study. Given the context out of which they emerged, the regulations that were developed strongly emphasize protections. For example, IRB review and informed consent are now required for any kind of human research that meets the criteria that Bierer mentioned in her introductory remarks. These regulations rely on being able to define and identify the activities to which those regulations apply, Kass said. “Anything that met the regulatory definition of research needed oversight,” she explained. “Anything that didn’t, such as clinical care, did not.”

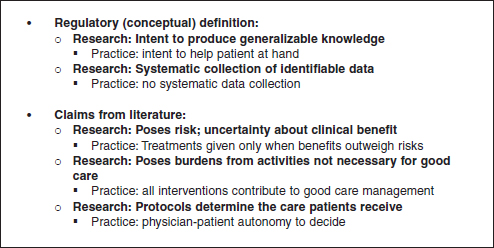

Kass then listed five criteria that have been used for distinguishing re-

search from clinical care (see Figure 6-1). Two of these criteria are defined by regulations, and the other three reflect common themes found in the scientific literature concerning morally relevant criteria that distinguished research from clinical care. Kass said that the three claims from the literature all make sense logically, but in practice those distinctions turn out to not be true in many cases. For example, one claim in the literature is that research has more risks and uncertainties than clinical care, but in fact, Kass said, “when you start to look at the data, there is a remarkable amount of uncertainty and risk in clinical delivery as well.”

Based on the work that Kass and her colleagues have done, she believes that the distinction paradigm does not work. “We challenge the view that using distinction for policy about what needs ethical oversight should be sustained,” Kass said, “There are practical, conceptual, and moral problems in relying on distinction.” From a practical perspective, too often IRBs look at regulatory definitions, but still do not know how to handle specific research proposals, and there can be disagreements between an IRB and OHRP that delay important research. Conceptually, the distinction is problematic because the definitions do not consistently put the same types of activities into the same categories, and from a moral perspective, the distinction paradigm leads to overprotection of low-risk research and underprotection from unsafe or unproven care.

To argue for a new paradigm, Kass described her team making two assumptions. The first is that integrating learning into health care is an ethi-

FIGURE 6-1 “Distinction paradigm” approach to distinguish research from clinical care.

SOURCE: Reprinted with permission from Nancy Kass.

cal good. “It is a good thing to increase the quality, value, fairness, and efficiency of health care, health care systems, and institutions,” she said. The second assumption is that any learning that does take place must proceed in an ethical way. “We must always be thoughtful about what kinds of activities are compromising patients’ rights and interests and what kinds aren’t and what kinds of safeguards we can build in, in addition to informed consent, that help make an impact on patients’ rights and interests,” Kass said.

From these assumptions, Kass and her colleagues developed an ethical framework for the learning health care system that has seven obligations:

- Respect the rights and dignity of patients/families.

- Respect the judgment of clinicians.

- Provide each patient optimal clinical care.

- Avoid imposing nonclinical risks and burdens.

- Address unjust health inequalities.

- Clinicians and health care institutions should conduct continuous learning activities.

- Patients and families should contribute to the common purpose of improving the quality and value of clinical care.

The first obligation is obvious, Kass said, and it is a central consideration for all IRB deliberations and for clinical care. She emphasized, however, that not every decision is of equal moral relevance to patients and that the duties of respect go well beyond autonomous decision making by patients. For example, it is of great moral importance for a patient to be involved in a decision about whether to have back surgery or physical therapy to alleviate back pain, but it is of little importance as to what kind of hand sanitizer the hospital uses. Kass added that one issue concerning autonomous decision making is that all considerations here get lumped into informed consent. “I don’t mean to minimize the importance of informed consent,” she said, “but there are so many additional ways in which we can demonstrate respect to patients.”

The second obligation—to respect clinical judgment—gets to the point that the goal of quality improvement research should not be to take all decision making out of the hands of physicians. This obligation requires asking whether an activity affects a clinician’s ability to use his or her own judgment when it advances the medical and autonomy interests of the patient. There is, however, a tension that exists between honoring this obligation and taking into account the evidence that clinicians’ judgments can be biased, conflicted, or less than fully informed, Kass said.

The obligation to provide patients with optimal clinical care, Kass said, raises the question of how a learning activity will affect the net clinical benefit to patients when compared to the benefit of the “ordinary care” that they would receive in the absence of learning. Similarly, the obligation to avoid imposing nonclinical risks and burdens on patients is judged by examining the kinds of burdens that patients are being asked to undergo in a learning activity or research project versus the burdens that patients undergo when receiving “ordinary care.”

Kass said that the obligation to address unjust inequities might be “a little bit more out there,” but she noted that as an organization identifies an agenda that it wants to take on, it needs to be mindful of a duty to think about the injustices that happen in health care. The questions to ask, she explained, are whether a learning activity will exacerbate or reduce unjust inequalities and if an activity can be structured to advance the goal of reducing unjust inequalities in health care.

The sixth obligation says that health care professionals, health care institutions, and payers all have an ethical responsibility to conduct and contribute to learning activities that advance the quality, fairness, and economic viability of the health care system. Health care professionals, health care institutions, and payers are uniquely situated to contribute such data, and they have the expertise to make use of such data. Kass said that this obligation is relevant to their responsibilities to provide high-quality care.

The most controversial of the obligations, based on feedback she and her colleagues have received since publishing this framework (Kass et al., 2013), and the one that is most frequently misunderstood holds that patients have an obligation to participate in the enterprise of learning. “I do think there are certain kinds of activities where patients ought not be given a choice,” Kass said. “That doesn’t mean they ought not to be informed, but there are certain activities that in no way change the risk, the care, or the burden for patients where we could learn things that could make a difference in improving care.” Akin to much current, ongoing quality improvement, what needs to happen, she said, is that there needs to be a better way of explaining that this type of research is part of a cycle that helps provide patients with high-quality care. This obligation, she explained, derives from the moral norm of common purpose—that all patients have a common interest in having a high-quality, just, and economically viable health care system. She stressed that this obligation does not mean that patients must participate in all learning activities, but when a learning activity has little or no impact on the first four obligations, patients should have an obligation to participate.

In her final comments, Kass turned to the subject of implementation and discussed two steps that need to be taken to put this framework to use in the real world. The first step is to put in place ethics-relevant policies, which are transparent to patients, that deal with ongoing learning activities, that engage patients to help decide which studies need consent and further protection, and that provide accountability. The second step is to evaluate the types of learning activities to determine what needs review and consent, and Kass listed three categories of learning activities that can help guide this triage step. Category 1 research creates no additional risk or burden and would require no consent or prospective oversight, though there would be random audits to ensure that these criteria are being met. Examples of this type of research would be chart reviews, some systems-level interventions, and prospective observational studies that do not change care.

Category 2 research presents a low level of risk or burden and includes those studies for which there would be no reason to think that patients would object or prefer one approach over another. An example would be a study comparing the efficacy of two very similar blood pressure medications. There would be prospective oversight of Category 2 research, and consent would be streamlined. Category 3 research, which includes traditional intervention research, carries more risk and/or burden, and the different approaches being studied present meaningful differences to patients. Category 3 research would require prospective oversight and prospective patient consent.
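The triage step Kass described can be read as a simple decision rule. The Python sketch below is only an illustration: the field names, the `triage` function, and the reduction of each category's criteria to yes/no flags are assumptions made here, not part of the published framework, and an actual review would rest on judgment rather than on three booleans.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    ONE = 1    # no additional risk or burden
    TWO = 2    # low risk or burden, no expected patient preference
    THREE = 3  # more risk/burden, or meaningful differences to patients


@dataclass(frozen=True)
class LearningActivity:
    # Hypothetical fields; the framework does not define a data model.
    name: str
    adds_risk_or_burden: bool      # anything beyond ordinary care
    risk_is_low: bool              # only consulted when risk or burden is added
    patients_likely_to_prefer_one_approach: bool


def triage(activity: LearningActivity) -> Category:
    """Assign an activity to one of the three categories described above."""
    if not activity.adds_risk_or_burden:
        return Category.ONE
    if activity.risk_is_low and not activity.patients_likely_to_prefer_one_approach:
        return Category.TWO
    return Category.THREE


# Oversight and consent as stated for each category in the text.
REQUIREMENTS = {
    Category.ONE: {"prospective_oversight": False, "consent": "none"},
    Category.TWO: {"prospective_oversight": True, "consent": "streamlined"},
    Category.THREE: {"prospective_oversight": True, "consent": "prospective"},
}

# Only Category 1 is described as subject to random audits.
RANDOMLY_AUDITED = {Category.ONE}

chart_review = LearningActivity("chart review", False, False, False)
print(triage(chart_review), REQUIREMENTS[triage(chart_review)])
```

Under these assumptions a chart review lands in Category 1, a comparison of two very similar blood pressure medications in Category 2, and a traditional intervention trial in Category 3.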

APPROACHES TO DEALING WITH OVERSIGHT CHALLENGES

Susan Huang, University of California, Irvine

Susan Huang discussed three clinical trials that she said illustrate some of the issues that she and her colleagues have been struggling to solve. The first trial was the REDUCE MRSA trial that she discussed in the first workshop’s opening session. This trial benefited greatly, Huang said, from the fact that the chair of the Harvard Pilgrim Healthcare IRB, which served as the lead IRB for all sites enrolled in the trial, had years of experience in health care quality improvement. The IRB chair knew that in the context of this trial, the protocols met the national criteria for minimal risk and a waiver of informed consent set by OHRP, she explained. Given that the three strategies were already adopted by hospitals as quality improvement strategies and none was known to be more effective than the others, the IRB also ruled that randomizing by hospital did not increase the risk to the patients regardless of which of the three protocols they would receive. This enabled Huang and her colleagues to do a head-to-head comparison of these quality improvement programs. “If we had to obtain individualized consent,” she said, “we would not have been able to understand the effect of these quality improvement strategies in the way they are usually implemented by hospitals.” She noted that this trial was unusual in that it was studying interventions to prevent contagious pathogens for which the individual risk of infection is affected by the infection status of other patients in the same ICU. “Applying a unit-wide intervention to reduce bacteria has indirect benefits that could be equal to or greater than the intervention a patient directly receives,” she said.

The second trial Huang described involved seven academic medical centers, each of which was testing the efficacy of antiseptic bathing in adult ICUs in a study that was similar to the REDUCE MRSA trial except that the patients were randomized according to when they received the intervention, not whether they received it. Six of the academic medical center IRBs waived consent, but the seventh required individual consent from its ICU patients. The seventh medical center had such poor uptake of the intervention that its ICUs dropped from the study, which in turn meant that the prospective data critical to the study could not be collected, and the data could not be analyzed as a randomized clinical trial. Thus, a variation in IRB rulings regarding patient consent within a randomized clinical trial can have a significant bearing on the success and standardization of a trial, including cluster randomized trials where the intent is to apply the intervention throughout the cluster in a uniform and representative way.

The third example was of a cluster randomized trial of the same antiseptic soap used in the REDUCE MRSA trial in 10 pediatric ICUs. The IRBs at the five academic medical centers involved decided that individual consent was required despite the intent for this intervention to be a unit-based intervention. Although children do represent a vulnerable population, Huang noted, the types of quality-improvement strategies being studied in these trials are being employed by children’s hospitals in the United States as part of routine care. Although the investigators “did an absolutely heroic job of getting consent,” Huang said, they were still unable, for various reasons, to get consent for 35 percent of ICU patients who had been assigned to the intervention. As a result, the trial did not achieve the power that the investigators needed in order to analyze the trial as planned, so the as-randomized analysis failed to find a significant difference, although the as-treated analysis for the 65 percent who participated did find a substantial effect size and a significant difference. The research was published in The Lancet (Milstone et al., 2013).

Huang said she picked these three examples as a means of pointing out gaps in the way that IRBs make their decisions. She noted that NIH has examined some of these ethical dilemmas and that the NIH Collaboratory has tried to move some of these issues to the forefront by engaging OHRP, FDA, and PCORI. One gap is the lack of understanding about why there is such variation in the way IRBs rule on studies. “We cannot be dependent on whether or not we were lucky enough to have someone who was familiar with health systems, who understood quality improvement processes, and who knew that there were hospitals doing this all the time,” Huang said. In particular, it is imperative to address the idea of consent for quality-improvement studies with minimal risk. “How do we get more uniformity in approaching things that are minimal risk so that we have more answers for the things that we are already doing?” Huang asked.

There are also gaps in the way quality-improvement studies are designed and conducted. Huang said that statisticians are rarely, if ever, involved in the design of a quality-improvement study. She said that quality-improvement studies need to take advantage of better experimental designs and they need to be done in multiple settings for generalizability and also because target outcomes are often infrequent. Furthermore, she said, quality-improvement researchers need to “stop being afraid of randomization,” a fear rooted in the belief that a randomized trial design inevitably leads to a protracted IRB approval process. Improving this process and increasing the standardization of rulings across IRB committees, especially in the case of minimal risk studies, will be necessary to take advantage of one of the greatest strengths in study design. One place to start would be to better understand if and when randomization actually increases risk. In addition, from the patient’s point of view, there is still work to be done in explaining concepts such as randomization and keeping the randomization process transparent.

IRBs also need more consistency when dealing with vulnerable populations such as prisoners and children, particularly for low-risk quality improvement studies, Huang said. “We need to be able to study the things that drive quality improvement that we do every day and be able to reasonably test them in important populations such as children,” she said. “Otherwise, we may never get the right answer for what you want to know.”

The field must also figure out a way of working with industry on quality-improvement trials, Huang said, given that these pragmatic trials may often request contributed products and that pharmaceutical companies may have an interest in using the mounting data from well-conducted quality-improvement studies that support product use. This area is not well developed, particularly the use of cluster randomized trials for FDA indications.

James Weinstein, Dartmouth–Hitchcock Health System

In his comments, James Weinstein first described an 11-state trial that he ran, the Spine Outcomes Research Trial, that involved enrolling patients in a randomized or observational cohort to determine if the less expensive observational trial could replace RCTs for quality-improvement studies. This trial ran into all of the IRB issues that had been noted by previous speakers, he said, but, nonetheless, he and his colleagues were able to run the trial and publish the results in the New England Journal of Medicine (Weinstein et al., 2007) and the Journal of the American Medical Association (Weinstein et al., 2006a,b). What Weinstein and his colleagues found was that the RCT was not much better than the observational trial. “There were some differences,” he said, “but the differences were not significant in many of the diagnostic groups.”

At the end of the day, Weinstein said, patients have a great deal of decisional regret if they were not involved in the decision-making process. He noted that laws have been changed in California and Washington State to mandate that clinicians and patients together make shared decisions. He said that rather than have the process be one of informed consent, it should be one of informed choice, with the patient actively involved in the decision-making process.

In his final remarks, Weinstein discussed the High Value Healthcare Collaborative, previously discussed in Chapter 4, which he started with colleagues at the Mayo Clinic and Intermountain Healthcare and which has grown to include safety net systems such as Denver Health and Sinai Health in Chicago. These safety net hospitals do not pay to belong to the collaborative, whereas the other members pay about $200,000 per year to participate. The collaborative developed a master collaborative agreement that allows for the collection of data from EHRs and claims data from all payers and all patients and for that data to be shared among all the members. Weinstein noted that many of the nation’s 5,000 hospitals are struggling to survive, and he said he hopes that the nation creates more collaboratives to help share the burden of working to build a better health care system that reaches everyone equitably across the nation.

Peter Margolis, Cincinnati Children’s Hospital

Peter Margolis addressed the challenges of using data collected in EHRs from 66 institutions and transferred into a registry that is used to support clinical functionality as part of the Improve Care Now network. The registry is updated daily from data provided in real time. The registry includes personal health information that is held separately from the data. Margolis explained that when a patient’s data are pushed back out to care centers, the personal identifiers are reattached. The registry also uses a consent management tool that identifies which patients have consented for research and which have not.
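The arrangement Margolis described, with identifiers held apart from clinical data, reattached for care, and filtered by consent for research, can be sketched in a few lines of Python. This is a hypothetical illustration, not the network's actual software: the `Registry` class, its method names, the random pseudonym keys, and the sample records are all assumptions introduced here.

```python
import secrets
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Registry:
    # Three separate stores, all keyed by a random pseudonym (an assumption).
    clinical: Dict[str, dict] = field(default_factory=dict)
    identifiers: Dict[str, dict] = field(default_factory=dict)
    research_consent: Dict[str, bool] = field(default_factory=dict)

    def add_patient(self, identity: dict, data: dict, consented: bool) -> str:
        """Store identifiers and clinical data separately under one pseudonym."""
        key = secrets.token_hex(8)
        self.identifiers[key] = dict(identity)
        self.clinical[key] = dict(data)
        self.research_consent[key] = consented
        return key

    def for_care_center(self, key: str) -> dict:
        """Reattach personal identifiers when data go back out for clinical care."""
        return {**self.identifiers[key], **self.clinical[key]}

    def for_research(self) -> List[dict]:
        """Return de-identified records only for patients who consented to research."""
        return [dict(self.clinical[k])
                for k, ok in self.research_consent.items() if ok]


# Made-up records for illustration only.
registry = Registry()
a = registry.add_patient({"name": "Patient A"}, {"in_remission": True}, consented=True)
b = registry.add_patient({"name": "Patient B"}, {"in_remission": False}, consented=False)

print(registry.for_care_center(b))  # identifiers reattached for care
print(registry.for_research())      # only consented, de-identified records
```

Keeping consent status as its own lookup means the same registry can serve clinical care and quality improvement for every patient while releasing data for research only where consent exists.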

Five years ago, he said, nine centers using a single protocol started the network. As the network grew, Margolis and his colleague decided it was important to put in place a more standardized approach for getting IRB approval, and they did so using a federated model in which the centers could choose to rely on Cincinnati Children’s as a central IRB with a single consent form. This consent form informs patients that their data are being used for clinical care and quality improvement, and it asks them to consent to having their data used for research purposes. “We have quite a sense of urgency about making this process run smoothly,” Margolis said, “because we know that patients who participate in the system do better.” Of the 66 centers, 43 percent have chosen to rely on Cincinnati Children’s IRB.

What he and his colleagues have noticed is that there is significant variation among the IRBs regarding how they deal with the complexities of this type of data sharing, where some data is used for clinical care and some for research. “There are also differences of opinion about the kinds of risks that are involved for patients,” Margolis said. Furthermore, there is a great deal of confusion among physicians and care teams about Health Insurance Portability and Accountability Act (HIPAA) regulations and IRB oversight. For example, he said, physicians and care teams have little appreciation for how much data sharing takes place under HIPAA authorization. He noted that the IRB process is time consuming, taking an average of 22 hours and 82 e-mail transmissions per care center to work through the IRB and legal approval process. “Meanwhile, the patients are not exposed to the benefits of the system,” Margolis said.

Two of the 66 care centers have now decided that they are not willing to share personal health information outside of their institutions. Those two centers created a separate encryption program to identify and re-identify patients, a process that has taken too long to accomplish, Margolis said. One of these two centers is not seeing the kinds of improvements in outcomes that the rest of the network sites are realizing.

Margolis ended his remarks by noting that there are huge opportunities for improvement using this type of data collection system but that health care systems first have to think about how to do more to inform patients and make them aware of just how a network such as the one he discussed can be a benefit to them. Most patients, he said, are used to interacting with their own clinicians and do not think about being part of a network. Health care systems also need to understand how to do this type of information collating and dissemination without overburdening physicians. Finally, he commented that it is important to educate clinicians, IRBs, and health systems that there are different ways of managing IRB approval and patient consent.

Session moderator Bierer started the discussion by asking Kass to explain how she thinks about informational risk, privacy, and the continuum of risk that she outlined. Kass replied that information risk is important and that it is something that is getting attention. “What I think is critical,” she said, “is to not separate our thinking in terms of what kinds of protections we want for research from the kinds of protections we want for clinical care and, particularly, for electronic records.” Regardless of which realm is being considered, protecting patient privacy is critical, and it will be achieved through a combination of technology, rules, and firewalls.

After commending Kass for her work reconceptualizing the notion of risk with regard to the need for IRB approval, Harold Luft of the Palo Alto Medical Foundation said he was surprised by Brent James’s comment that only a small percentage of findings are eventually published. From his perspective as an economist, Luft wondered if there might be a way of incentivizing organizations that do quality improvement work to move their work into the public domain, perhaps by making a small percentage of Medicare and Medicaid dollars available for that purpose. Kass said that that was a great idea and that it should be extended to cover not only publication but implementation as well. “This is not to suggest that every project when it’s done is ready for widespread implementation,” Kass said, “but there is some number that are ready.”

Weinstein said that part of belonging to the High Value Healthcare Collaborative is a requirement to publish. He said that the Collaborative will soon have publications out that identify institutions and provide cost variations, outcomes, and other measures. He said that he hopes these publications begin the process of uncovering some of the variables that affect care. The collaborative will also be publishing case study reports of the type that are informative but do not get the attention of full papers in major journals. Rainu Kaushal, from Weill Cornell Medical College, said that well-designed studies can be turned into rigorous academic publications quickly and researchers can translate their findings into actionable information for policy makers and health care stakeholders without jeopardizing publications.

Commenting on the trouble of getting studies other than RCTs published in major journals, Richard Brilli from Nationwide Children’s Hospital said that journal editors need to be educated about newer statistical methods. “The traditional research community needs to be less afraid of statistical process control and interrupted time series with upper and lower control limits,” he said. Brilli added that statistical process control is just as valid a way to show improvement over time as are the more traditional randomization methodologies. Huang agreed that this can be a valid approach and said she believes it is a failure of our educational system that the randomized clinical trial, even when poorly conducted, has become the be-all and end-all for research. However, she added that too many quality-improvement studies use other approaches that are not statistically well designed and are not long enough to show meaningful improvements. Brilli replied that the Standards for Quality Improvement Reporting Excellence, or SQUIRE, guidelines do provide advice on how to publish quality-improvement work using statistical process control.
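For readers unfamiliar with the upper and lower control limits Brilli mentioned, the Python sketch below computes them for an individuals (XmR) chart, one common form of statistical process control. It is a minimal illustration under stated assumptions: the function names and the monthly figures are made up here, and the choice of an individuals chart with three-sigma limits estimated from the average moving range is one conventional option, not a method attributed to any speaker.

```python
from statistics import mean

# Standard bias-correction constant for moving ranges of two observations.
D2_FOR_N2 = 1.128


def control_limits(baseline):
    """Return (lower, center, upper) three-sigma limits for an individuals chart."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline points")
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    sigma = mean(moving_ranges) / D2_FOR_N2
    return center - 3 * sigma, center, center + 3 * sigma


def out_of_control(values, lower, upper):
    """Indices of points falling outside the control limits."""
    return [i for i, v in enumerate(values) if v < lower or v > upper]


# Made-up monthly counts, for illustration only.
baseline = [10, 12, 10, 12, 10, 12]
lower, center, upper = control_limits(baseline)
after_change = [11, 4, 17, 12]
print(round(lower, 2), center, round(upper, 2))
print(out_of_control(after_change, lower, upper))
```

Points outside the limits signal variation beyond what the baseline process would be expected to produce, which is the sense in which such charts are used to show improvement over time. Practical use usually adds further run rules and a longer baseline than this toy series.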

Steve Fihn from the VA also commended Kass’s work and said that the VA has now adopted many of the principles that she and her colleague developed and, furthermore, that it has published them in a handbook that governs operational evaluations by the VA’s leadership. He added, though, that the VA’s ethicists commented that many of the activities that Kass was talking about are indistinguishable from some of the activities that administrators are already doing. “It begs the question as to whether those things ought to be under some sort of evaluation and created pushback,” Fihn said. In reply, Kass recounted an anecdote about one system that, in order to stay out of trouble with the system’s IRB, created a “quality office” distinct from a research office and made sure to use words such as “survey” and “project” instead of “questionnaire” and “study” because “questionnaire” and “study” sound too much like research.

Lucila Ohno-Machado from University of California, San Diego, said she liked the notion of no additional risk and highlighted the challenge of quantifying risk at baseline in order to know whether there is additional risk. Margolis replied that there is no good vocabulary for describing how much risk exists concerning the loss of privacy but that making physicians and leaders more aware of the actual quantitative risk could be helpful.

John Steiner of Kaiser Permanente Colorado asked the panelists to comment on the role of empirically measuring items such as time to IRB completion in order to further the process of reform. As an example, he said that Kaiser has seven research departments and seven IRBs and that looking at the natural history of the studies that pass through each of the IRBs provides information on pain points and barriers and helps identify potential solutions to improve the process. Kass said that there have been some studies of variation in IRB approval that have been published but that there is room for many more studies of this type. “Metrics can be helpful for quality improvement, and IRBs are no exception,” Kass said, adding that the IRBs at Johns Hopkins have in fact used metrics to implement a few changes that made a big difference in time to IRB approval.

Bierer remarked that the Harvard-affiliated institutions have all signed on to one master agreement that allows any institution to rely on any other IRB for any clinical research. In the 7 years since the agreement was developed, she said, more than 1,000 protocols have been approved, and the culture of the entire Harvard system has changed to be more collaborative and trusting. Steiner added that one aspect of sustainability is for networks to be able to demonstrate to those who fund quality improvement studies that they can get IRB reviews done rapidly and efficiently.

In response to a question from Kenneth Mandl of Boston Children’s Hospital about how to get the public more engaged in quality-improvement research, Kass said that her sense is that the public has no idea how much is left to be learned in clinical medicine. “People know there isn’t a cure for cancer, but for everyday medical care, I think people are shocked,” she said. She and her colleagues have a PCORI pilot grant to conduct engagement sessions with patients, educate them about the learning health care system, and find out what kinds of protections they would like to have in place. She also said people who have health problems are eager, in general, to have their data used. It is healthy people who seem to be more concerned about privacy.
