3

Evolving Data Needs

The rapidly changing characteristics of the science and engineering workforce result in a concomitant change in data needs. The National Center for Science and Engineering Statistics (NCSES) has always been focused on understanding these needs and keeping its surveys current. The recently launched Early Career Doctorates Survey (ECDS) is an example of an initiative that responds both to emerging issues and to recommendations made by previous panels of the National Academies of Sciences, Engineering, and Medicine.

Part of NCSES’s motivation for commissioning the present study was to ensure that the agency is continuing to meet the policy and research needs of stakeholders, including Congress, federal agencies, the Office of Management and Budget, the National Science Board, researchers in academia and other sectors, providers of the data, the media, and the public. The challenges posed by the competing needs of stakeholders for surveys of this type are substantial, primarily because of the need to limit respondent burden. In addition, the need to keep pace with new developments in the realm of the science and engineering workforce has to be balanced with the need to maintain stability in the estimation of key trends.

Potential increases in respondent burden resulting from efforts to meet stakeholders’ data needs are a major concern because they could have an adverse impact on response rates, and therefore on data quality. Ideally, if new questions were added, other questions that had become obsolete or were not widely used would be dropped, and other questions might be asked of more targeted segments of the sample or in response to specific information gathered from survey or monitoring data. However, determining what questions to drop or ask in more targeted or selective ways can be a difficult task for any government survey with a wide range of stakeholders who depend on the data, and who may or may not be active in making their voices heard. Identifying questions that could potentially be dropped is beyond the scope of this study. However, information about what data and publications are downloaded, as well as input gathered through discussions with data users at various venues, can provide insight into whether some items are no longer used. At the same time, these methods need to be used with caution to ensure that they do not result in misleading conclusions. For example, users of the data tables may be very different from users who typically download the microdata.

WORKSHOP ON DATA NEEDS

To better understand the data needs of users of science and engineering workforce data, including topics that are increasing in importance as a result of changes in society and the workforce, the panel held a workshop on October 20, 2016 (the workshop agenda, including the list of speakers, appears in Appendix C). The workshop involved a diverse group of participants from a variety of backgrounds and disciplines. Participants emphasized that NCSES enjoys international recognition for its work in the area of science and engineering statistics, and that the data it produces are of very high quality and meet important policy and research needs. The congressionally mandated Science and Engineering Indicators (SEI) and Women, Minorities, and Persons with Disabilities in Science and Engineering reports produced by NCSES were among the data products highlighted as most widely used. The new international component of the Survey of Doctorate Recipients (SDR)—the International Survey of Doctorate Recipients from U.S. institutions who migrate abroad—was mentioned as a recent addition that addresses an increasing need for data on such individuals.

Workshop participants shared their views on existing and emerging data needs, and several of the key areas discussed are reviewed in this chapter. Many of these needs go beyond NCSES’s primary mandate and should not be viewed as shortcomings of the existing surveys, but as areas in which there is potential for enhancing the usefulness of the data. Furthermore, the input received from stakeholders indicates that the content of the surveys matches many data needs reasonably well, yet the data are not always available in ways that would maximize analytic capabilities, particularly longitudinal analysis, and other emerging areas of interest are just beginning to be represented in the surveys. The nature of the data needs identified at the workshop suggests several possible mechanisms that could enable NCSES to accommodate new analyses or additional detailed questions about some topics or produce new estimates linked to the surveys in other ways, thereby responding to changes in education and the workforce, including demographic characteristics and highly flexible, nonlinear education and career paths.

This chapter reviews the key data needs going forward based on input received at the workshop and from other sources, including the panel members’ own expertise and deliberations, as well as the broader literature on the science and engineering workforce. Some of the discussion in this chapter refers to NCSES’s science and engineering workforce surveys in general, while some is specific to one survey. It is also important to acknowledge that “scientists and engineers” is a broad concept, and there are many differences among individual fields. Some of the comments made by data users apply to some fields but not others. It is beyond the scope of this report to address these differences, and indeed the nature of the surveys is such that it is not possible to collect detailed data specific to particular subfields.

It is important to note as well that some aspects of data users’ needs are discussed in other chapters. For example, as a result of rapid changes in science and engineering education and workforce needs, a theme that emerged from the workshop is the desire for more current estimates across several topics. This issue is discussed in further detail in Chapter 6. Limitations related to data access, such as the level of detail that can be released, also are discussed in Chapter 6.

The remainder of this chapter examines possible strategies for addressing the identified data needs in ways that are likely to bring the most benefit with minimal disruption to trend data and the least increase in respondent burden. Expanding the data available through NCSES will undoubtedly necessitate reliance on creative data collection methods, such as administering topic modules, or asking some questions at longer intervals (depending on the goals for the question) or only if triggered by some event, such as a job change, as well as the increased use of nonsurvey and nontraditional sources of such data.

CAREER PATHWAYS

From both scientific and policy perspectives, it is increasingly imperative to understand the career pathways of scientists and engineers. NCSES recognizes, as stressed by workshop participants as well, that the education and career movements of scientists and engineers no longer follow a typical linear pipeline, but now are characterized by complex flows and pathways (Freeman and Goroff, 2009; Martin and Pearson, 2005; Morrison et al., 2011; Stephan, 2009, 2011). Movement increasingly occurs between public- and private-sector jobs and between academic and nonacademic jobs (Musselin, 2010). Some students take longer to graduate because they work part time or take time off for nonacademic training, such as internships. In some

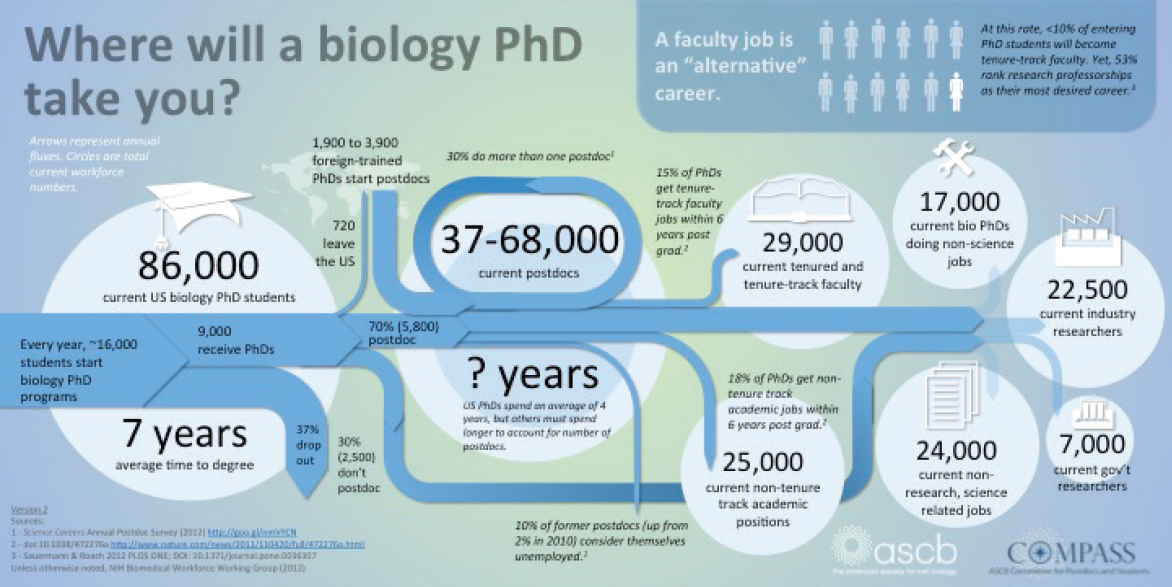

engineering and computer science fields, there is a perception that entering the workforce after obtaining a bachelor’s degree pays off more than pursuing a doctorate (Ehrenberg and Kuh, 2009). Some complete a doctorate but work in a field other than that of their degree (Aanerud et al., 2007; Nerad, 2009) or shift fields as a result of interdisciplinary work. In some disciplines, several postdoctoral positions are not unusual, while in others such positions in general are rare (Nerad and Cerny, 2002). Some individuals end up in science and engineering careers by following nontraditional pathways, and the likelihood of this occurring varies by such characteristics as race and gender. Likewise, retirement may be followed by a second or third career. Figure 3-1 illustrates these pathways in the case of doctorate recipients in the biomedical sciences.

While the NCSES surveys capture at each point in time the different types of jobs held by individuals educated or employed in a science and engineering field, the trajectories involved are not well understood. In addition to understanding these pathways, policy makers and researchers would like to know more about what factors influence people’s options and preferences. Some of these options and preferences evolve during academic training (Gibbs et al., 2014), and graduate deans are interested in the types of factors that influence people’s initial career decisions, particularly decisions to seek employment in nonacademic sectors (Goulden et al., 2009). Other factors come into play at later points in the life course and career (see the sections on work environments, work–life balance, and discrimination and harassment below).

There is substantial interest as well in better understanding the role of postdoctoral positions in a career. It is unclear to what extent pursuing such a position and the type of position pursued (for example, academic or nonacademic) reflect career preferences, a lack of other options, a lack of clarity as to opportunities, or family-related decisions (Nerad and Cerny, 1999; Rudd et al., 2010). A question is whether a postdoctoral position should be considered primarily training, and ultimately what the impact of such positions is on subsequent careers. The recently launched ECDS is a welcomed attempt on the part of NCSES to address some of the key questions related to this population, but the sampling frame for that survey has more severe limitations relative to the other NCSES science and engineering workforce surveys (see Chapter 4). As discussed below, the panel encourages NCSES to consider merging the content and samples of the ECDS with the ongoing SDR.

The rapid changes occurring in the science and engineering workforce, particularly the evolving skills required, mean that in some fields there is a need for periodic retooling, which may result in a return to an educational setting after several years in the workforce or a change in careers. The turnover associated with this is not well captured by the surveys. Also needed

SOURCE: Used with permission from Polka (2014) and ASCB, the American Society for Cell Biology.

is a better understanding of underemployment, the discouraged worker category, and re-entry in the context of science and engineering fields.

RECOMMENDATION 3-1: The National Center for Science and Engineering Statistics should place greater emphasis on following sample members longitudinally and obtaining survey data on the nature, determinants, and consequences of significant transitions in science and engineering career pathways.

INTERNATIONAL MOBILITY AND COMPARISONS

The increase in international mobility greatly complicates the career trajectories of many scientists and engineers. From a research and policy perspective, it is important to understand the mobility of scientists and engineers who are foreign-born (whether they are educated in the United States or not), as well as those who are born in the United States but are educated abroad, work abroad, or both (Lin et al., 2009). Of particular interest are the stay rates for foreign students and their characteristics in terms of field of degree and type of job (Gupta et al., 2003).

As discussed in Chapter 2, the SDR recently expanded its coverage to include individuals who have earned a doctorate from an institution in the United States even if they moved abroad after graduating. While collecting data from individuals who live abroad represents a challenge from a survey operations perspective, and in particular in terms of response rates, it is now possible to conduct analyses of this population based on whether they were U.S. citizens or temporary visa holders at the time they graduated, as well as on whether their place of residence was in the United States or abroad when the survey was conducted. Researchers at Oak Ridge Associated Universities have been producing regular estimates of the stay rates of foreign recipients of U.S. doctorates since 2000 (Finn, 2010, 2012, 2014). Over the years they have relied on data from the Social Security Administration (SSA) to produce these estimates, but because of difficulties associated with obtaining the data from SSA, their most recent report relies more heavily on the SDR (Finn, 2014).

The National Survey of College Graduates (NSCG) collects data on the college-educated population residing in the United States at the time of the survey, including individuals who received their degrees from abroad. However, the data available on the NSCG sample are more limited than those collected through the Survey of Earned Doctorates (SED) and SDR, and respondents are not reinterviewed if they move abroad (see Chapter 4).

Increasingly, members of the U.S. science and engineering workforce are people who have migrated from other countries to the United States after or in the midst of their education. NCSES has been working to identify such individuals and follow them as it does scientists and engineers who are educated and/or begin their careers in the United States. NCSES needs to maintain and enhance its efforts in these directions.

In addition to more comprehensive coverage of scientists and engineers who are mobile across countries and the ability to examine some of the same questions about career pathways discussed above, policy makers and researchers would like to better understand trends that are unique to the internationally mobile population. People who immigrate or emigrate within multinational organizations are of particular interest. One of the main questions is related to the factors that influence a person’s decision about whether to remain in the United States or move abroad. This question is of interest not only nationally but also often from a local perspective. State and local governments frequently want to know what proportion of foreign students are remaining in the area where they graduate and why, especially in light of incentives by some countries (for example, China and India) to attract back doctorate recipients who studied abroad.

RECOMMENDATION 3-2: The National Center for Science and Engineering Statistics should work toward survey design and questionnaire content that capture both the immigration of individuals from abroad into the U.S. science and engineering workforce and the emigration of individuals from the U.S. science and engineering workforce to other countries, including the nature, determinants, and employment outcomes of such movements. Specific questionnaire modules targeted to these groups could explore such issues.

WORK ACTIVITIES, TRAINING, AND SKILLS

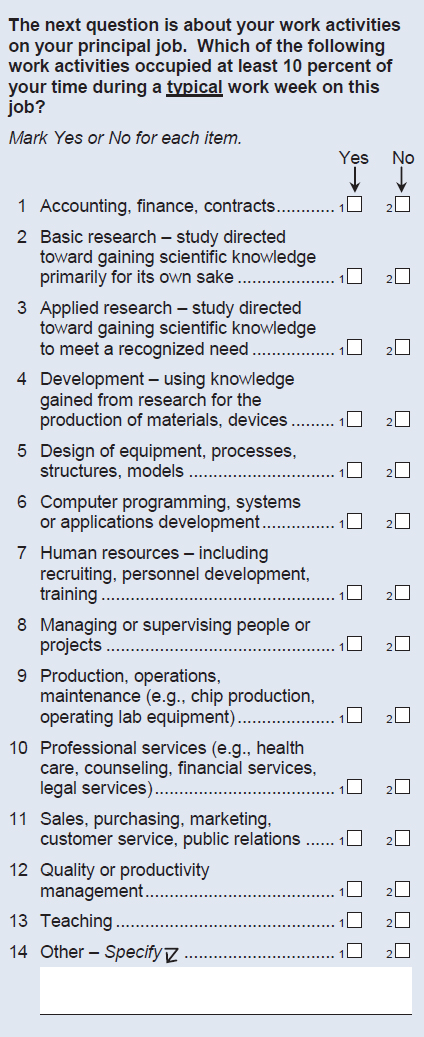

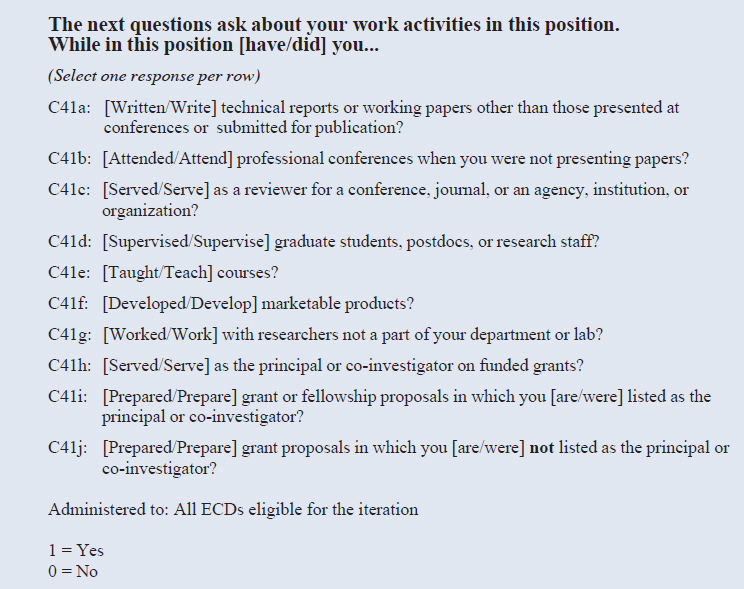

The NCSES surveys use a yes/no format to ask about the work activities in which respondents are engaged at least 10 percent of the time during a typical work week, and these questions provide a very basic understanding of the type of work people do. Figure 3-2 shows the NSCG and SDR list of work activities, which includes 13 items, such as basic research, applied research, and development. The question on work activities in the ECDS pilot survey, shown in Figure 3-3, includes a list of 10 items customized for early career doctorate recipients working in the types of settings covered by the sampling frame (academic institutions, federally funded research and development centers [FFRDCs], and the National Institutes of Health’s Intramural Research Program). Because of the ECDS’s more narrowly defined target population, its items are better able to reflect the work activities that are characteristic of the population of interest.

The NSCG and SDR also ask about work-related training, certification, and licenses, but less is known about the skills that respondents would

SOURCE: National Survey of College Graduates Questionnaire. Available: https://www.nsf.gov/statistics/srvygrads/surveys/srvygrads-newrespond2015.pdf [December 6, 2017].

SOURCE: Early Career Doctorates Survey Questionnaire. Available: https://www.nsf.gov/statistics/srvyecd/surveys/srvyecd_2015.pdf [December 6, 2017].

consider useful to have learned. The skills that are valued in the workforce are changing more rapidly than in the past, and there is a need to track these skills continuously (Golde and Dore, 2001; Rudd et al., 2008). Some of the skills needed in the science and engineering workforce (for example, management skills, managing a diverse workforce with more underrepresented groups, managing intellectual property rights) are not traditionally viewed as science and engineering skills. The need for this information is especially critical in the case of nonacademic careers, particularly in industry.

Workshop participants indicated that traditional academic institutions want to learn more about skills needed as input to their program planning (Aanerud et al., 2006; see also Chesley, 2015). Many are experimenting with integrating such approaches as targeted workshops, internships, training periods abroad, and collaborative projects, but often must speculate about what may be useful. Interdisciplinary programs also are on the rise, and there is increasing interest in understanding how these programs affect career trajectories (Martinez et al., 2015).

The Council of Graduate Schools is in the process of developing a survey of alumni to understand how they are using their skills in their careers and to provide feedback to graduate schools. The survey is designed to complement data collected by the SDR and SED, as well as data compiled by the Association of American Universities Data Exchange and individual institutions.

An overall question is whether the degree itself was useful and whether, looking back, the person would make the same decision to pursue a degree in the same field and at the same level (Aanerud et al., 2006).

In addition to interdisciplinary programs and degrees at traditional institutions, several government programs have been launched to further interdisciplinary training and research and facilitate collaboration across disciplines and internationally. These include the National Science Foundation’s (NSF) Research Traineeship Program, the Partnerships for International Research and Education (formerly the Integrated Graduate Research Traineeship), and the National Institutes of Health’s interdisciplinary programs. If the data from the SED and SDR could be linked to administrative records from these programs, this could greatly expand understanding of the impact of these programs (see below for detailed discussion of data linkages).

Community colleges and apprenticeships have received some attention among the less conventional sources of training. Many university extensions also are offering certificate training to help workers maintain or upgrade their skills or transition to a skill that is in high demand. In addition, major universities are increasingly making more courses, and in some cases master’s degrees in science, technology, engineering, and mathematics (STEM) fields, available on the Internet.

Also seen over the past few years has been a rapid expansion in more informal sources of training. These include free online courses, such as those offered by Coursera, Udacity, and EdX; YouTube tutorials; Webinars offered by the journals Science and Nature; Websites that facilitate offline meetings, such as MeetUp.com; and even online communities, such as Stack Overflow and Stack Exchange. These types of resources can address the need to continuously update skills that characterizes some science and engineering fields, such as computer science, and also help expand participants’ personal networks.

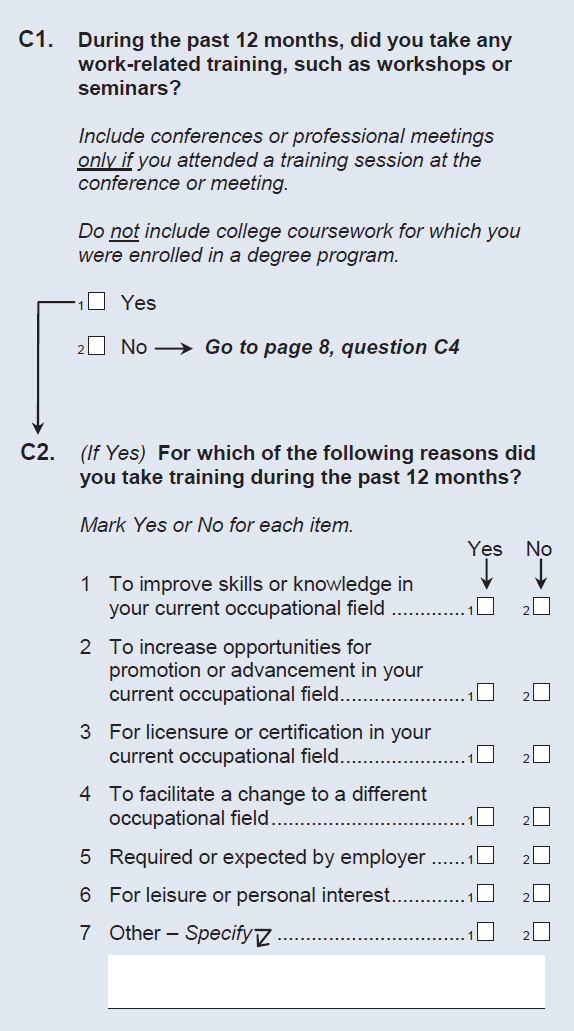

While the NCSES surveys ask about job-related training, the questions are focused on formal training (see Figure 3-4). Some forms of training, such as nondegree courses and especially informal training, including online tutorials and communities, may be missed. The surveys also ask about respondents’ reasons for pursuing the training, and the items used

SOURCE: National Survey of College Graduates Questionnaire. Available: https://www.nsf.gov/statistics/srvygrads/surveys/srvygrads-newrespond2015.pdf [December 6, 2017].

for this purpose capture the reasons for formal training reasonably well (refer to Figure 3-4, question C2). Although researchers who study this topic would also be interested in additional details about the training, such as frequency, quantity, quality, satisfaction, and funding sources (e.g., paid for by the employer or employee), most of these details may be beyond what the NCSES surveys could accommodate.

RECOMMENDATION 3-3: To address the desire of researchers and policy makers for in-depth information on such topics as training and skills obtained and needed, the National Center for Science and Engineering Statistics should explore and evaluate adding to its surveys topic modules that would vary from round to round or be asked only of subsets of the samples.

EMPLOYMENT OUTCOMES, RESEARCH PRODUCTIVITY, AND ENTREPRENEURSHIP

Workshop participants called attention to the keen interest in measuring outcomes associated with various programs targeted at science and engineering students and the workforce. As a result, many disparate efforts are focused along these lines (Leggon and Pearson, 2008). Some academic institutions are following up with participants from their own programs in an attempt to assess the programs’ impact, but these are small-scale efforts that could benefit from the availability of national data (Martinez et al., 2015). Also of great interest is being able to understand the outcomes associated with training, scholarship programs, fellowship programs, and internships among those who offer such programs, as well as in the federal government (e.g., outcomes associated with the Workforce Innovation and Opportunity Act).

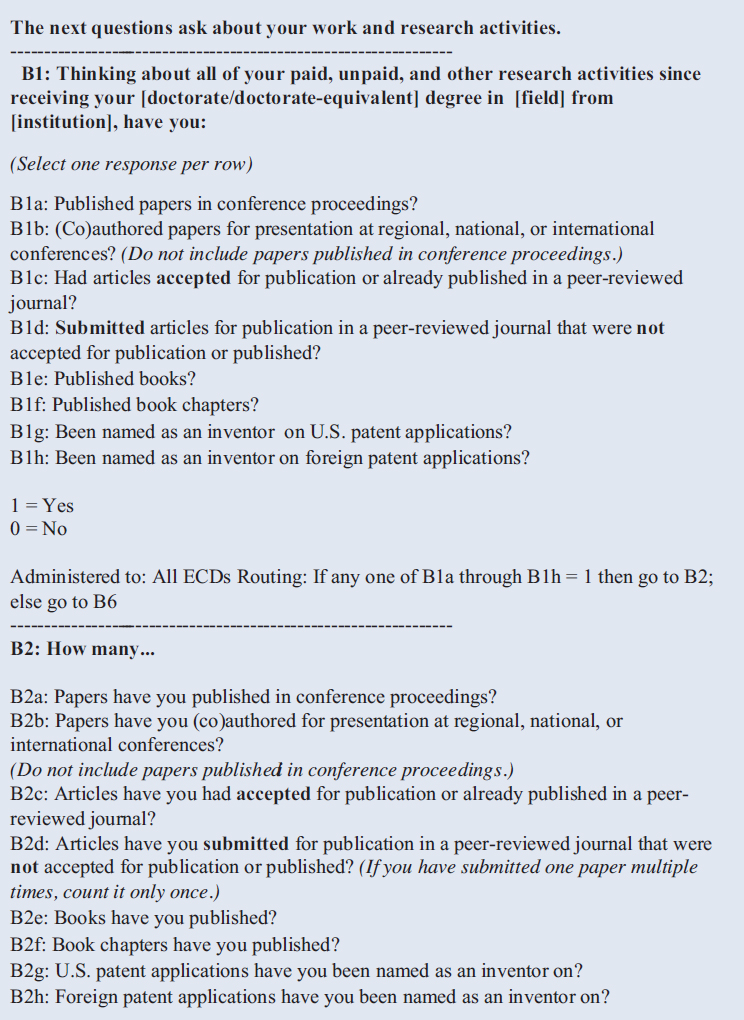

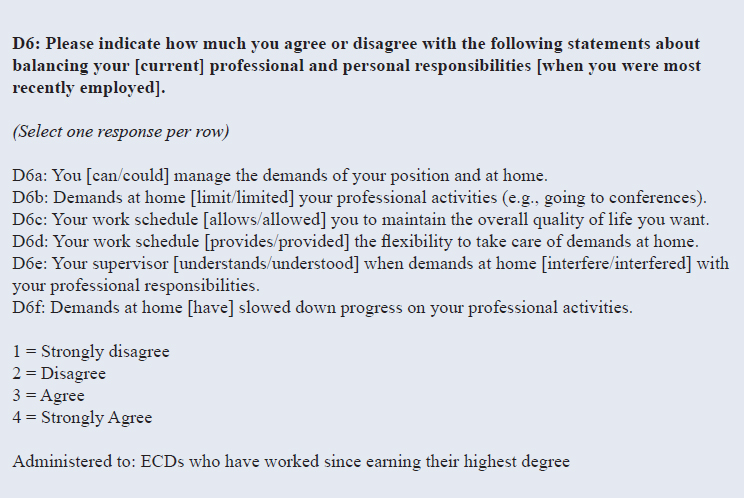

Measuring outcomes is among the key goals for the NCSES science and engineering surveys, but one of the challenges is that outcomes, particularly those related to employment and careers (compared with those related to education), can be difficult to operationalize and measure (Rubio et al., 2011). The NSCG and SDR include widely used questions on labor force status and the relationship between education and occupation, as well as some questions on job and employer characteristics. The SDR also includes questions on academic position, faculty rank, and tenure status. The ECDS pilot study asked several questions about professional activities aimed at capturing productivity, including several follow-up questions about patents. These questions are shown in Figure 3-5.

The input obtained from workshop participants highlighted the need for these types of data on publications and patents. NCSES is already conducting research (described below) on possibilities for linking survey data to information about publications and patents. Questions related to factors that influence publications and patenting, such as collaborations and networks, would be useful to further understanding of these issues. In turn, the impact of scientific productivity on the ability to obtain research funding, promotions, or salary increases also is a topic of interest, particularly because of perceived gender differences in these areas (Blumenfield and Nerad, 2012; Nerad and Blumenfield, 2012).

In addition, researchers, practitioners, and policy makers want to better understand entrepreneurship, including involvement in launching a startup company. The NCSES surveys ask about whether the respondent is self-employed, capturing certain types of small businesses and successful entrepreneurs but not necessarily the founders of high-growth, innovative companies. The founders of startups would typically be more likely to consider themselves employees than self-employed, and thus would show up in the data as employees of a for-profit company. If it were possible to capture the concept of a startup, this information also would be useful for understanding the extent to which recent graduates are likely to be working at these types of companies versus other for-profit companies. This information would be useful not only for recent doctorate recipients but also for those without a graduate degree, and possibly even those with less than a completed bachelor’s degree.

RECOMMENDATION 3-4: The National Center for Science and Engineering Statistics should continue to enhance its measurement of employment outcomes, research productivity, and entrepreneurship/innovation. Greater use of targeted survey modules and/or administrative and other nonsurvey data would enhance the feasibility and effectiveness of such measures.

WORK ENVIRONMENTS AND WORK–LIFE BALANCE

Motivation to choose a specific career or job and the decision whether to stay or leave are complex, and are heavily influenced by earnings opportunities, the nature of the work, and prospects for growth and advancement (Nerad, 2009). Such factors as illness, disability, military or other government service, and a voluntary move to part-time work can lead to changes in career trajectories as well. Also playing a role is the broader climate of the work environment, including the presence or absence of welcoming, talented, and supportive peers, supervisors, and mentors, as well as experiences of discrimination, harassment, or a hostile work environment, especially among members of historically underrepresented groups such as women, racial/ethnic minorities, and those with nontraditional gender identities.

SOURCE: Early Career Doctorates Survey Questionnaire. Available: https://www.nsf.gov/statistics/srvyecd/surveys/srvyecd_2015.pdf [December 6, 2017].

Considerations related to work–life balance greatly influence career choices and pathways as well. These considerations are sometimes related to childcare or eldercare responsibilities. In some cases, the decision to relocate is the result of a partner or spouse changing jobs (Goulden et al., 2009). These issues often affect women more than men, although there also are differences by race, in part because the sex ratio among those with advanced degrees varies by race (Malcom and Malcom, 2011; Ong et al., 2011; Ceci and Williams, 2010). These types of moves may decrease in the future as a result of the rise of telework options. In addition to information about the reasons for job changes, data on the educational background and occupation of spouses or partners would be useful to shed further light on these trends.

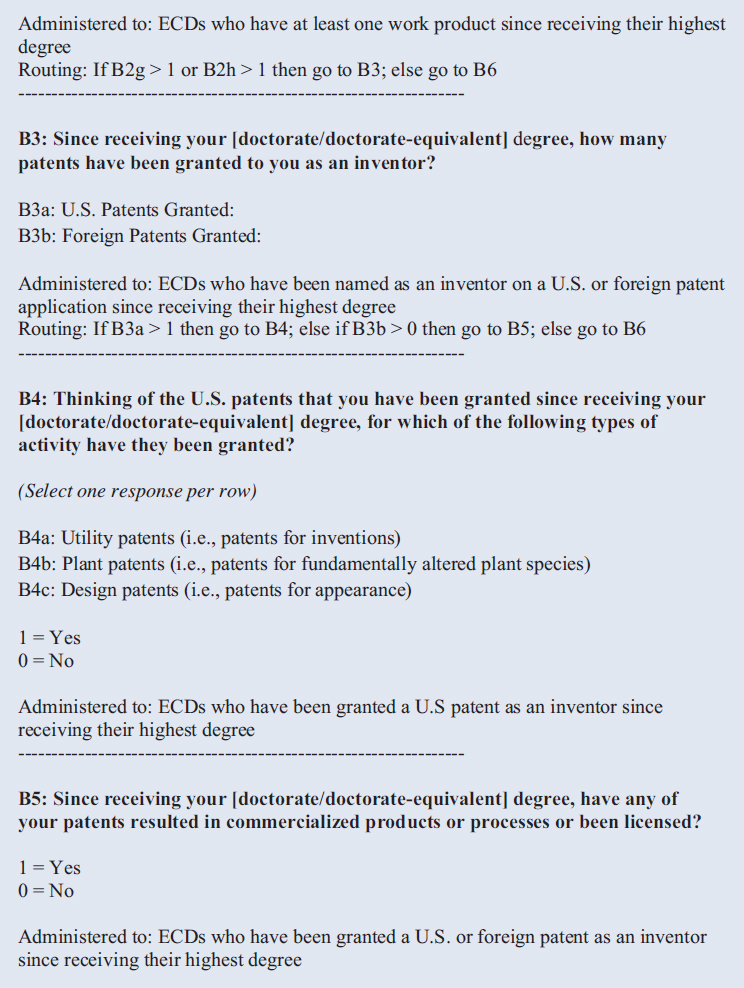

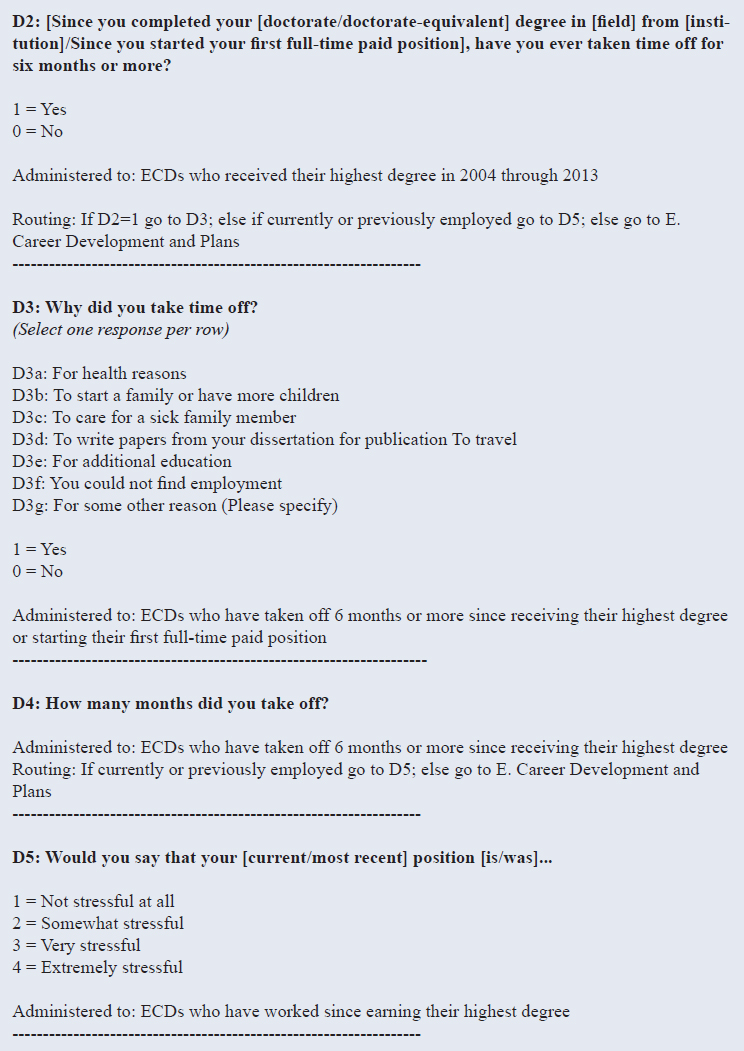

Researchers would like to know more about the characteristics of the workplace and work–life balance factors that can impact career paths. These include earnings, availability of mentoring, choice of projects, collegiality, collaborations, and telework options. A better understanding of the role of these considerations in career trajectories could advance research in this area. The new NCSES workforce survey, the ECDS, demonstrates responsiveness to emerging data needs in this area as well. The pilot study included a question about being away from work for 6 months or more, followed by a question about the reasons. The survey also asked about work stress level and about the ability to balance professional and personal responsibilities (see Figure 3-6).

Discrimination and harassment have long been recognized as barriers to full participation and performance to full capacity in science and engineering fields, not only for women but also for minority groups in general, yet no high-quality national data are available that could advance understanding of the extent, nature, and implications of these phenomena. This is an area NCSES has not yet explored, and although these topics are sensitive and difficult to measure, doing so is important to guide employer decisions and inform policy in this area.

RECOMMENDATION 3-5: The National Center for Science and Engineering Statistics should assess the performance of the new questions on working conditions and work–life balance in the Early Career Doctorates Survey and should consider adding these questions to the National Survey of College Graduates and the Survey of Doctorate Recipients as well.

RECOMMENDATION 3-6: The National Center for Science and Engineering Statistics should develop for the surveys core questions and a more in-depth module on harassment and discrimination.

SOURCE: Early Career Doctorates Survey Questionnaire. Available: https://www.nsf.gov/statistics/srvyecd/surveys/srvyecd_2015.pdf [December 6, 2017].

POSSIBLE STRATEGIES FOR EXPANDING DATA ON THE SCIENCE AND ENGINEERING WORKFORCE

This section describes several possible strategies for expanding data on the science and engineering workforce and potentially addressing some of the key needs of data users. Because substantially increasing the length of the surveys would clearly not be possible without increasing respondent burden and jeopardizing response rates, several overall strategies could be considered that would likely have benefits in one or more of the areas discussed above.

Longitudinal Analysis

The designs of the NCSES workforce surveys include several rounds of follow-up with sample members. The NSCG currently interviews people for four cycles, and the SDR does so biennially until respondents reach the age of 76. However, data users generally cannot take advantage of the longitudinal aspects of the surveys because the data products are structured based on the model of a series of repeated cross-sectional surveys and do not include weights and documentation that would facilitate longitudinal analyses. This limitation has frustrated data users for many years. In addition, the recent SDR redesign led to the loss of about two-thirds of the existing panel cases in that survey (for a more detailed discussion, see Chapter 4).

As discussed earlier in this chapter and reflected in Recommendation 3-1, some analytic goals that NCSES considers priorities can be achieved with cross-sectional data, but changes in the science and engineering workforce are increasing the need to understand career pathways that are not as predictable and linear as they were in the past, as well as the impact of various factors, such as training and work environments, on career outcomes and job choices. There is also an increased need to better understand the inflows and outflows of scientists and engineers in the U.S. workforce, particularly international mobility.

Many of these emerging data needs could be addressed by taking better advantage of the longitudinal nature of the surveys—in other words, by treating the surveys as true panel designs that follow some of the sample members over the years. An increased emphasis on panel design also could enable a redesign of the questionnaires to focus on what has changed since the last contact with the sample member. This redesign could potentially free up space for a more nuanced understanding of the reasons for the changes, a gap in the current data.

Beyond integrating data from the different waves within each of the surveys, integration that enables longitudinal analysis across surveys would facilitate additional research. Measures related to interdisciplinary education that are included in the surveys are limited (field of degree and second field of degree in the NSCG, and field of interdisciplinary dissertation in the SED). The ability to conduct analyses on a dataset that longitudinally integrated responses from the SED and the different waves of the SDR would help in understanding how multidisciplinary education shapes future career pathways.

NCSES recently launched several activities that support longitudinal analyses. These activities include commissioning analytic work to construct properly weighted longitudinal data files for the past few rounds of the SDR and NSCG, and consulting with survey design experts and data users on design options for building up a substantial longitudinal component in the SDR going forward (see Chapter 4). Enabling longitudinal analyses would be the most far-reaching enhancement for expanding the usefulness of the NCSES workforce surveys, as reflected in Recommendation 3-1 above.

Survey Core Questions and Modules

One way to balance the need for fine-grained analysis of the core questions of the NCSES surveys with the need for occasional detail on some issues would be to redesign the surveys to accommodate topic modules. As discussed further in Chapter 5, this redesign would involve identifying a short list of core questionnaire items that would be administered to all sample members during every round of the survey, and grouping other questions into topical modules that would vary from round to round or be asked every round, but only of a subset of respondents. NCSES could develop survey modules that would serve as in-depth follow-ups to specific changes and perhaps be triggered by specific survey responses or information about a change from other sources of data, such as administrative records or other forms of nonsurvey data (discussed later in this chapter).
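The core-plus-modules structure described above can be illustrated with a minimal sketch. It is not drawn from any NCSES instrument: the module names, the rounds, the subsample share, and the `changed_employer` field are all hypothetical, and the hash-based subsample stands in for whatever sampling procedure a survey designer would actually choose. The sketch shows the three routing rules mentioned in the text: modules that rotate by round, modules asked of only a subset of respondents, and modules triggered by a specific response.

```python
import hashlib

# Hypothetical topical modules (names, rounds, and shares are illustrative only).
# "rounds": survey rounds in which the module is fielded (None = every round).
# "share": fraction of the sample that receives the module.
# "trigger": optional condition on the respondent's answers or linked data.
MODULES = {
    "job_change_followup": {
        "rounds": None,
        "share": 1.0,
        "trigger": lambda r: r.get("changed_employer", False),
    },
    "training_and_skills": {"rounds": {2019, 2023}, "share": 1.0, "trigger": None},
    "working_conditions": {"rounds": None, "share": 0.25, "trigger": None},
}


def in_subsample(respondent_id, module, share):
    """Deterministically place a respondent in a module's subsample.

    Hashing the module name with the respondent ID gives a stable value
    in [0, 1), so the same respondent gets the same assignment each time.
    """
    digest = hashlib.sha256(f"{module}:{respondent_id}".encode()).hexdigest()
    return int(digest[:8], 16) / 0x100000000 < share


def build_questionnaire(respondent, survey_round):
    """Return the list of question blocks for one respondent in one round."""
    blocks = ["core"]  # core items go to every sample member in every round
    for name, spec in MODULES.items():
        if spec["rounds"] is not None and survey_round not in spec["rounds"]:
            continue  # module is not fielded this round
        if spec["trigger"] is not None and not spec["trigger"](respondent):
            continue  # triggered module, but the condition is not met
        if not in_subsample(respondent["id"], name, spec["share"]):
            continue  # respondent is outside this module's subsample
        blocks.append(name)
    return blocks
```

In this sketch, a respondent who reports an employer change always receives the follow-up module, while the working-conditions module reaches roughly a quarter of the sample in every round. Because assignment is deterministic, the same respondent would stay in the same subsample across rounds, which may or may not be desirable depending on the design goals.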

To some extent, the NSCG and SDR already rely on questionnaire structures that are based on core topics and topic modules administered periodically and focused on specific policy issues or on the gathering of in-depth information on a subpopulation. Over the years, both the NSCG and SDR have included modules on job satisfaction and attributes, federal support of work, immigration information, organization of work, international collaboration, and productivity measures (publications, patenting). In addition, the NSCG has included modules on professional certifications, community college enrollment, education debt (amount borrowed/owed), and academic positions. The SDR has included a module on postdoctoral experiences (see Chapter 2).

Recommendations 3-2 through 3-6 above identify topic areas in which there is a clear need for additional in-depth data that could potentially be addressed through topic modules: (1) factors that lead to career or job changes, including immigration to or emigration from the United States; (2) training and skills (including skill gaps); (3) employment outcomes and research productivity; (4) entrepreneurship and innovation; and (5) working conditions, including work–life balance and discrimination and harassment.

It is important to acknowledge that the desire of researchers and other data users for detailed information in many areas surpasses NCSES’s ability to address these needs, even with greater reliance on topic modules. As discussed earlier, modules often increase respondent burden, particularly if many of the existing questions would be considered core questions that needed to be asked of everyone. To address this issue, it might be possible to experiment with administering a series of modules over a short period of time (some of which could consist of core questions and other questions that applied only to respondents with certain characteristics), and then evaluating how the response distributions and respondent perceived burden compared with the results from a longer version of the survey administered all at once (West et al., 2015). The use of modules for subsets of the sample also can greatly increase the complexity of a survey. Nonetheless, greater reliance on modules would be worth exploring, looking ahead to a system of longitudinal data that might integrate data across the surveys and from other sources. Increasing reliance on electronic modes of data collection, particularly Web administration, and a move away from paper-and-pencil questionnaires (see Chapter 5) also will likely make the use of customized modules more feasible.

NCSES could explore collaborations with other government agencies to support in-depth modules on topics of mutual interest and to identify efficient ways of collecting data on these topics. Agencies that might be particularly interested in detailed data on selected topics of mutual concern include the Bureau of Labor Statistics and the Census Bureau.

Other Questionnaire Modifications

In some areas, minor modifications to a questionnaire may be necessary or desirable to capture new characteristics and trends in the workforce. One question that warrants particular attention is the employer question on the NSCG and SDR, which asks for the employer’s location (refer to Figure 3-1). Although the questionnaires collect address information for the respondent’s residence separately, the physical location of the employer is important to understanding mobility, and the growing complexity of work arrangements may be making this question increasingly challenging to answer.

The NSCG and SDR also ask respondents whether they were working for the same employer and in the same job during the reference week as during the reference week of the previous data collection. If the data were linked longitudinally, this information would be known, along with information about the location of the previous employer. The increased flexibility afforded by electronic data collection modes means there are fewer justifications for one-size-fits-all questionnaires, and in the future, information about a job change could drive subsequent questions, potentially reducing respondent burden and enabling NCSES to ask more detailed follow-up questions about the reason for the change. Currently, respondents who indicate that they changed either their employer or their type of job are asked to provide the reason. One of the answer options is “job location,” but this response by itself does not enable an in-depth understanding of the mobility-related reasons. Reviewing the verbatim answers recorded under “some other reason” with the goal of identifying emerging trends could shed some light on whether this question could be revised or expanded.

Another question that could benefit from updating is that on training (refer to Figure 3-4). This question could be revised to ensure that it captures a broader range of training, particularly newer forms of informal training.

Integration of Data from Multiple Nonsurvey Data Sources

The NCSES surveys are essential for producing nationally representative data on key topics, many of which can be assessed meaningfully only through self-report because of their subjective or idiosyncratic nature. However, alternative data sources that capture information of interest about scientists and engineers are becoming increasingly available. As discussed earlier, some of these nonsurvey data sources are promising mechanisms for supplementing, or possibly even replacing, survey questions to reduce respondent burden.

NCSES already has some experience with the use of administrative data to enhance survey data collected by the SED. For the SED, institutional coordinators are asked to assist in obtaining from the institution’s administrative records such missing critical items as year of birth, sex, race/ethnicity, citizenship, country of citizenship, bachelor’s degree year and institution, and postdoctoral location. SED survey data also have been linked to administrative data from SSA to derive short- and long-term stay rates.

In addition, NCSES is exploring the use of alternative data sources to enhance information on such topics as employment outcomes, research productivity, and entrepreneurship. For the ECDS, NCSES is considering the use of data extracted from respondent-provided resumés and information from ORCID, a nonprofit organization that links education and research data for those who register. NCSES also is investigating the linkage of STAR METRICS, UMETRICS, and ProQuest data to SED respondent data. Publication data from Web of Science and patent data from the U.S. Patent and Trademark Office are being linked to SDR respondent data as part of a feasibility study to evaluate data quality. NCSES is also experimenting with Web scraping to collect degree and work histories for SDR respondents. SciENcv could be another potential source of data to evaluate.

Some of the research on alternative data sources is being conducted in collaboration with the Census Bureau’s Center for Administrative Records Research and Applications (CARRA). CARRA’s research is focused primarily on data sources that could enhance the sampling frame for the NSCG, the survey for which the Census Bureau serves as NCSES’s data collection contractor, but the research is likely to have implications for the other surveys as well. (Research on the use of alternative data sources to enhance the NSCG sampling frame is discussed in detail in Chapter 4.) The data sources being evaluated by CARRA include the Census Bureau’s own Master Address File and Longitudinal Employer-Household Dynamics (LEHD) program; several other government agencies, such as the Internal Revenue Service (IRS), the Department of Education, and the Department of Homeland Security; career network Websites (such as LinkedIn, Monster.com, and Chroniclevitae.com); online resumés; and the National Student Clearinghouse.

The potential use of data from the LEHD program, and unemployment insurance earnings data in general, is particularly promising because these data could serve multiple purposes. The Census Bureau’s LEHD program combines state data with administrative data from federal agencies and from censuses and surveys to create a longitudinal database of linked employer–household data. Linking this information to IRS data, such as Business Register, Longitudinal Business Database, and Form W-2 data, could supplement the NSCG data with additional detail about sample members’ employers and potentially provide a source of updates on employment that would reduce the need for frequent survey followups. Unemployment insurance earnings data also could be linked to data from the Quarterly Census of Employment and Wages, which can identify new firms. Individuals taking new jobs at new firms could then be identified and receive targeted questions, for example, on entrepreneurship. One challenge associated with IRS data is that employer location and industry data are at the firm rather than the establishment level, which limits the ability to determine workplace location and type of work. Appendix D examines the possible use of unemployment insurance earnings data in further detail.
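The idea of identifying individuals who take new jobs at new firms, so that they could receive targeted questions, can be sketched as follows. This is only an illustration under strong simplifying assumptions: the record layouts, the integer quarter index, the one-employer-per-quarter simplification, and the four-quarter definition of a "new" firm are all hypothetical and do not reflect the actual structure of unemployment insurance earnings or Quarterly Census of Employment and Wages files.

```python
def flag_new_firm_hires(earnings, firm_first_quarter, max_firm_age=4):
    """Return {person_id: employer_id} for recent moves to newly formed firms.

    earnings: iterable of (person_id, quarter_index, employer_id) tuples,
        where quarter_index is an integer that increases by 1 each quarter
        (hypothetical layout, one employer per person per quarter).
    firm_first_quarter: dict mapping employer_id to the quarter_index in
        which the firm first appears (hypothetical stand-in for firm-birth
        information).
    max_firm_age: a firm counts as "new" if it first appeared at most this
        many quarters before the person's latest earnings record.
    """
    # Group each person's earnings records into a history.
    history = {}
    for person_id, quarter, employer_id in earnings:
        history.setdefault(person_id, []).append((quarter, employer_id))

    flagged = {}
    for person_id, records in history.items():
        records.sort()  # chronological order by quarter_index
        if len(records) < 2:
            continue  # no earlier record to compare against
        (_, prev_employer), (quarter, employer) = records[-2], records[-1]
        if employer == prev_employer:
            continue  # no employer change between the last two records
        first_seen = firm_first_quarter.get(employer)
        if first_seen is not None and quarter - first_seen <= max_firm_age:
            flagged[person_id] = employer
    return flagged
```

Sample members flagged in this way could then be routed to a targeted module, for example on entrepreneurship, rather than asking those questions of the full sample.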

In terms of data available from other government agencies in general, one of the main challenges is related to developing procedures for and negotiating the terms of data sharing. Different surveys use different language to describe to participants how the data will be used and to obtain informed consent, which often limits how the data can be shared. Changes to the language must be carefully considered because of the possible impact on response rates. Another difficulty hindering collaboration between government agencies to advance work in this area is resource limitations, especially the fluctuation in resources available for this type of work within agencies. In the case of data from state agencies, the need to negotiate a data sharing agreement and adjust processes for each individual agency greatly increases the challenges. Nonetheless, some of the most valuable data for potential enhancement of the science and engineering surveys originate from other government agencies, and research on issues related to the use of nonsurvey data lends itself well to a variety of productive collaborations, such as the work being conducted with CARRA. These collaborations could be expanded to encompass methodological research (beyond the work conducted to evaluate data sources), building on the extensive methodological research experience of both the Census Bureau and NCSES. A variety of initiatives now under way government-wide could facilitate this type of collaboration going forward (see National Academies of Sciences, Engineering, and Medicine, 2017).

One limitation that needs to be addressed in assessing the potential use of these types of data is the lack of representativeness (in other words, data are likely to be available for some sample members and not others). In fact, researchers sometimes use NCSES survey data to benchmark data from other sources and to better understand the latter data’s strengths and weaknesses. However, datasets do exist in which representation is very high for some types of scientist or engineer subgroups. On some topics, such as the types of projects on which a person is working, small-scale studies could be useful, focusing, for example, on the development of a richer dossier with substantial detail on the subset of sample members for which data are available. In some cases, data from external sources also could provide important information for research on topics related to the science and engineering workforce even if data from those sources could not be linked to the survey data.

Recent research in the area of record linkages has focused on ways of obtaining consent for linking survey data to data from other sources, as well as on the implications of consent bias, which is another source of concern in terms of the representativeness of the data from nonsurvey sources (Sakshaug et al., 2015, 2017). Privacy and confidentiality issues need to be considered and researched for all data from nonsurvey sources, including online databases and the Internet.

While due caution is necessary regarding the use of nonsurvey data, and despite the implementation challenges, options available for expanding the NCSES survey data with data from alternative sources are growing rapidly. Some of the identified gaps in the survey data are of the type that could be addressed through supplementation with data from other sources, and use of these types of data also could reduce respondent burden and attrition. The populations of interest for the NCSES science and engineering workforce surveys are particularly well suited to the use of online sources for supplemental data because more information about these sample members than about the general population is likely to be available online.

NCSES could be at the forefront of research on the use of the new data sources and assessment of their quality. Possible mechanisms for jumpstarting innovative research on combining data from multiple sources include establishing a research center of excellence; collaborating with academic centers; and using fellowships, dissertation awards, or grants to support research conducted by others.

The panel’s recommendations below are consistent with the direction endorsed by recent reports of the National Academies of Sciences, Engineering, and Medicine (2017) and the Commission on Evidence-Based Policy Making (2017).

RECOMMENDATION 3-7: The National Center for Science and Engineering Statistics should explore mechanisms for accelerating research on the use of alternative data sources to expand and supplement the information obtained from the science and engineering workforce surveys.

RECOMMENDATION 3-8: The National Center for Science and Engineering Statistics (NCSES) should continue its collaboration with the Census Bureau toward expanding the use of external data sources to supplement its science and engineering surveys. A particular focus should be the use of unemployment insurance earnings data to supplement the survey data with additional, more complete, or more frequent data points regarding career trajectories. NCSES should also pursue collaborations with other government agencies to explore options for combining data from different sources.

INTERNATIONAL EFFORTS AND COLLABORATIONS TO EXPAND SCIENCE AND ENGINEERING WORKFORCE DATA

With an increased interest in international comparisons of science and engineering workforce data on particular topics such as international mobility, it is important for NCSES to continue its collaborations with international partners to coordinate data collection strategies and definitions. OECD, in collaboration with the United Nations Educational, Scientific and Cultural Organization Institute for Statistics and Eurostat, recently launched the Careers of Doctorate Holders project, which aims to develop internationally comparable indicators on the careers and mobility of doctorate recipients. The project was developed with support from the European Union and NSF. These types of collaborations are important because international comparability greatly enhances the usefulness of the data.

Interest in alternative data sources is increasing in other countries as well. For example, OECD is exploring the use of databases of inventors and authors of scientific publications. One project has examined the international mobility of scientists based on a mobility measure derived from data on affiliations obtained from a global index of scholarly publications (Appelt et al., 2015). Another OECD initiative, the International Survey of Scientific Authors, follows up on a stratified random sample of corresponding authors from the same publications database (Boselli and Galindo-Rueda, 2016; see also European Science Foundation, 2013, 2015).

Likewise, Statistics Canada has been experimenting with the use of administrative data from two government sources: the Postsecondary Student Information System (PSIS) and the Registered Apprenticeship Information System (RAIS). PSIS is a registry of enrollments in postsecondary institutions containing several variables, including field of study, while RAIS contains similar information for registered apprentices. Over the past few years, PSIS and RAIS have become the backbone of an integrated system called the Education Longitudinal Linkage Platform (ELLP). The ELLP complements the PSIS and RAIS data with data from other sources, in particular, income tax data. More important, this linkage is maintained longitudinally, enabling researchers to track the paths of individuals through the education system and then the workforce. There are plans to add other data sources to the ELLP, such as student loan files and survey data.

A recently published pilot study using the ELLP for graduates from universities in Canada’s Maritime provinces compares the economic outcomes of graduates who earned their degree between 2006 and 2008 with those of later cohorts (Galarneau et al., 2017). This project provides a good example of the type of longitudinal analysis that can be conducted by combining data from purely administrative sources.

RECOMMENDATION 3-9: The National Center for Science and Engineering Statistics should continue to consult and collaborate with international partners on ways to improve and enhance data on the science and engineering workforce.

Although the availability of data sources varies by country, particularly in the case of administrative data, international collaboration and consultation can generate valuable ideas with potential to be adapted for implementation in the United States. Collaboration with the goal of researching the use of alternative data sources that may be internationally applicable, such as data extracted from online databases, could be especially useful.

MONITORING OF FUTURE DATA NEEDS

NCSES’s science and engineering workforce surveys have remained current over the decades because of the agency’s due diligence in keeping up to date on emerging workforce issues. However, the pace of change is increasing, and the emerging issues are increasingly complex and substantially more difficult to measure. To meet this challenge, NCSES needs to incorporate into its planning efforts mechanisms that will allow it to remain well informed about stakeholders’ data needs and emerging topics of importance.

One option would be to involve an advisory board focused on providing guidance on the prioritizing of topic modules. The NSF Directorate for Social, Behavioral, and Economic Sciences has an advisory committee, but it does not always include members with expertise specific to NCSES’s mission. Another option would be to issue calls for proposals for new topics, as done for the General Social Survey. This mechanism also could facilitate broader use of the surveys in general because more researchers would become familiar with the core questions included in the surveys on a regular basis.

Another option would be to communicate more extensively with user groups. NCSES recently issued a task order to enhance outreach efforts for its Human Resources Statistics (HRS) Program by strengthening relationships with current customers and developing the groundwork for interaction with potential customers. The goals are to

- identify and document the current and potential HRS customers;

- improve HRS’s understanding of the needs of its customers and how they interact with the HRS data products and other data sources;

- gather feedback on how the HRS survey content aligns with customers’ data needs;

- strengthen the NCSES brand by increasing awareness of the HRS data and data products; and

- develop a framework for continued interactions with the HRS customer base.

The task order represents a promising effort to broaden understanding of stakeholder needs, and the focus on developing a framework for continued interactions with stakeholders is particularly important. A possible avenue for obtaining regular input would be to form a data user group that could serve as a sounding board on issues related to survey content. Periodic data user conferences or workshops would also be invaluable. Collaborations with academic centers, fellowships, dissertation awards, and grants would be other good means of keeping current on emerging topics of interest concerning the science and engineering workforce (for additional discussion, see Chapter 6).

RECOMMENDATION 3-10: The National Center for Science and Engineering Statistics should implement mechanisms for continuously monitoring input on emerging data needs from stakeholders in the academic, research, policy-making, and practitioner communities.
