As discussed in Chapter 1, one of the core characteristics of real-world evidence (RWE) is that it is “fit for purpose.” That is, evidence should be capable of answering a research question, even if it was originally generated for a purpose other than research. Several workshop participants said that regardless of the source of the data or the research method employed, the most critical element of using RWE is making sure that the evidence is fit for purpose. Andrew Bindman, professor of medicine, epidemiology, and biostatistics at the University of California, San Francisco, said there are many ways to arrive at scientifically valid evidence, so the means by which evidence is generated should be adaptable to the needs of the end user. Robert Califf, vice chancellor of health data science at Duke University and scientific advisor at Verily Life Sciences, agreed, and said that different end users not only have different evidence needs, but they also need different degrees of certainty in the data. These end users could be payers, providers, patients, or others; each has his or her own unique perspective and needs for evidence. The workshop participants heard from four speakers representing three different groups: payers, delivery systems, and patients. The speakers discussed how they use evidence to make decisions, and were asked to identify how RWE does or could inform their decision-making process.

PAYER PERSPECTIVE

The main challenge for payers, said Michael Sherman, senior vice president and chief medical officer at Harvard Pilgrim Health Care, is balancing access and affordability while at the same time driving innovation. Sherman said one out of every four dollars that Harvard Pilgrim spent in 2016 was on drugs, and that Harvard Pilgrim members spent more than 20 percent of their out-of-pocket health care dollars on drugs. Sherman stressed that for drugs that keep people out of the hospital, extend life, and improve chronic disease, “cost should not be a barrier,” but that drugs with less certain or less profound impacts may not have the same value.

With this tension between access and affordability in mind, Sherman moved on to the topic of generating evidence for medical interventions. Clinical trials, said Sherman, are not always relevant to the real world of medical practice. In clinical trials, he emphasized, the investigators are experts, the patients are carefully selected, there is full compliance with all protocols, and patients are closely monitored to address concerns and ensure adherence. In the real world, by contrast, providers are not necessarily experts in every field, work with large patient loads, and may have their own biases and ways of practicing, Sherman said. In particular, new diagnostics offer challenges; while a test may give information to physicians and patients about what course of action to take, there are many other considerations that inform treatment decisions, so the value of the diagnostic is unclear. Sherman said that even for commonly accepted treatments, evidence-based medicine is not always followed. For treatments or diagnostics that are newer, more complicated, and more expensive, it is not always clear if the investment is worthwhile because decisions made by the physician and patient may not be fully aligned with the results of these diagnostics, he said.

Financial pressures on payers are increasing, said Sherman, particularly as innovation is resulting in new drugs and therapies such as gene therapy. Although these innovations are very exciting, he said, evidence must show they are worth the price. Unfortunately, data are limited for some of these new innovations, and some recent U.S. Food and Drug Administration (FDA) approvals have been seen as “overly broad” and based on limited evidence, said Sherman. When data are limited, pricing is uncertain, and clinical variability is unknown, payers are “understandably a little concerned” about paying for these new innovations. Sherman noted, however, that FDA has a difficult job, and that patients and families are reasonable to expect access to drugs that can give hope, even when evidence is limited. For some rare conditions, there will never be the possibility of a high-quality, randomized clinical trial of sufficient size; for other conditions, the time that it would take to generate proper data is significant, and during that time, patients are suffering. This is an ethical “tightrope” that patients, providers, regulators, and payers all must walk, said Sherman.

Sherman offered suggestions that could help balance concerns about access and affordability for approvals based on limited evidence, while also encouraging innovation in research and development. As a caveat, Sherman noted that FDA is limited in what it can require of manufacturers. That said, he suggested that in cases where an approval may be based on limited evidence, FDA could consider

- Requiring manufacturers to enter into value-based agreements that tie reimbursement to success of the drug (tied to outcome measures used to gain approval);

- Requiring manufacturers to submit data to an objective third party (e.g., Institute for Clinical and Economic Review) and agree to pricing that aligns with findings; and/or

- Encouraging postmarketing payer–pharmaceutical company collaboration to use data generated by these value-based agreements.

Sherman said this proposal could have several benefits. First, it could create a structure for payers in which they could offer a drug, but would only be required to pay for it if it was shown to actually add value. Second, it could provide transparency and certainty to the pricing of drugs, and the value of the drug would be reflected in the price. Third, it could create RWE in the normal course of practice—as drugs are made available to patients, data would be collected and analyzed to study the patient outcomes and value of the drug. Finally, this process could reduce uncertainty for companies that are submitting drugs for approval. Currently, a drug may be approved, but there is still uncertainty about whether insurers will pay for it, which creates frustration for the companies as well as patients and providers. This type of proposal, said Sherman, could increase transparency and certainty, help to grow the evidence base for new innovations, and improve access to life-saving drugs for patients.

DELIVERY SYSTEMS PERSPECTIVE

Concurring with many of Sherman’s remarks was Michael Horberg, executive director of research, community benefit, and Medicaid strategy and the Mid-Atlantic Permanente Research Institute at the Mid-Atlantic Permanente Medical Group, Kaiser Permanente (KP). In particular, he agreed that a “clinical trial is often an idealized version of what we would all hope the care would be.” In the real world, there are issues with patient adherence and care delivery that can impact the effects of a medical intervention. Because KP both delivers care and pays for the care, said Horberg, medical practices must be backed up with quality, relevant evidence that shows a benefit for patients. When assessing the evidence base for a new intervention, KP looks at a variety of considerations, including

- Who conducted the studies (e.g., KP, industry, or government funded)?

- Who is the population at risk? Do the data reflect this population, or can data be generated to reflect this population?

- What is the current medical practice in this area? Is the new intervention an improvement?

- What will the new intervention cost?

- How will implementation of the new intervention be operationalized in clinical care?

In assessing the data, said Horberg, KP heavily relies on its own data collection and analysis. KP is “swimming in data,” but the challenge lies in curating the quality of the data and using appropriate statistical methodologies. KP also convenes internal guideline panels and performs systematic reviews, both of which use data from inside and outside the system. In addition to the evidence assessment, KP also performs a financial analysis of how the system would integrate the new costs of care if an intervention were to be adopted.

During the assessment process, many points of tension must be balanced. First, KP is driven by its mission to “do the right thing the first time.” However, this desire to provide members with the best possible care is tempered by the fact that the system must remain financially viable to provide care. Second, KP believes in “prevention first . . . if possible.” While providing drugs to treat disease is important and necessary, it is better to prevent the disease in the first place, both for the patients’ quality of life and for the cost savings to the health system. Third, KP considers whether the new intervention is actually better than the current treatment, and how much of an improvement it represents. Fourth, KP looks not just at the availability of evidence, but also at the credibility and relevance of the evidence. For example, KP has two very different populations of members with HIV. On the East Coast, KP members with HIV are more often female, heterosexual, and African American, whereas on the West Coast, members with HIV are mostly white men who have sex with men. For an HIV intervention to be adopted, the evidence would need to be relevant to both of these groups. KP also looks for gaps in the data, said Horberg, particularly gaps concerning certain vulnerable populations.

The process for deciding which interventions to adopt, said Horberg, is both bottom-up and top-down. The impetus for assessing an intervention may come from new information in the literature, a provider request to review the literature, patient demand, or new regulatory or statutory requirements. A variety of interregional groups are convened to make decisions about adoption, including formulary committees, new technology committees, guideline committees, specialty groups (e.g., gastroenterology chiefs), and special ad hoc groups. However, Horberg noted that the groups may reach different decisions that are not always in alignment. For example, the guideline committee may recommend a new drug as a good treatment, and the formulary committee may decide to add it to the formulary, but the benefits committee may not approve it for payment. In addition, despite the emphasis on evidence-based medicine, individual care decisions are often based on discussions and experiences of providers and patients. Overall, KP aims for “collaboration” within the organization, with the goal of “getting what’s best for the patient,” concluded Horberg.

Daniel Ford, director of the Institute for Clinical and Translational Research at the Johns Hopkins University School of Medicine, described the Johns Hopkins Health System (JHHS) as both a generator of evidence and a consumer of evidence. Although one might assume that this dual role would lead to systematic synergies, in reality, there are sometimes tensions and inconsistencies between the two parts of the system. JHHS still relies heavily on traditional clinical research, said Ford, but recently has branched out into conducting clinical research at community hospitals. In the system’s three community hospitals, there are about 450 patients in a clinical trial at any one time, with 23 research coordinators supporting them. This project marks a transition to collecting evidence outside of the traditional academic health center, said Ford. In addition to the community hospital research, JHHS also conducts clinical research with its patients; about 10 percent of JHHS patients (300,000 out of 2.5 million) have been involved in a clinical trial over the past 8 years, said Ford.

Ford said the JHHS process for making coverage decisions is similar to KP’s. JHHS uses internal or external data summaries, consults experts about their views of the available data, and looks at the patients who have received the drug. Ford said that while JHHS has a fair amount of internal data, it would be a “stretch” to rely solely on these data to judge the clinical effectiveness of a drug. He noted that because physicians use the electronic health record (EHR) daily, and know its drawbacks and limitations, they may be less persuaded by a conclusion based on EHR data versus data from a regulated clinical trial. However, he noted that the EHR data are consistently improving, and that there are new ways to integrate other data sources, such as data from other hospitals or death records. One database, called the Chesapeake Regional Information System for our Patients (CRISP), contains data from nearly all hospitals in Maryland, and hospitals from Delaware; Washington, DC; and West Virginia are joining as well. This integrated database allows researchers to track all hospitalizations, emergency room visits, and deaths, and serves as an important tool for both research and clinical practice.

Ford discussed the roles and expectations of patients and providers in the generation of RWE. Patients often desire information about their treatment plans in order to inform their personal decision making, but this information is not always accessible in the current environment. Academic researchers, meanwhile, frequently express interest in researching drug effects on off-label indications and applying their findings to usage recommendations, but providers still rely on evidence from traditional randomized controlled trials (RCTs) rather than other potential sources of evidence. Ultimately, Ford said, altering the evidence generation system will require changes on the part of multiple stakeholders. The funding for RCTs remains steady, providers use data from RCTs, and patients understand the RCT design. Integrating EHR data into research will require a shift in perspective and an effort to ensure that EHR data are as valid as data from traditional RCTs.

PATIENT PERSPECTIVE

Sharon Terry, president and chief executive officer at Genetic Alliance, started by questioning the term “patient” itself. To Terry, the word “patient” conjures up an image of a person sitting quietly in a gown on an exam table, with a “tremendous information asymmetry and power asymmetry.” Terry told workshop participants that they are all patients first and professionals second, and that “we make very different decisions [as patients] than we do when we sit here primarily as professionals.”

Terry briefly told the story of how she transitioned from a mother of two with a background in religious studies to a researcher who is involved in clinical trials with four different therapies. Terry’s children were born with a rare genetic condition called pseudoxanthoma elasticum (PXE). Upon their diagnosis, Terry and her husband founded PXE International, started a patient registry, discovered the gene responsible for PXE, patented it, and developed a diagnostic test. Terry now serves as the chief executive officer of Genetic Alliance, which is a network of more than 1,000 organizations and patient advocacy groups, representing millions of people with genetic diseases. Terry stressed that people like her—patients, families, and communities—have gathered data for years. Abundant data have been gathered through disease advocacy organizations, community-based participatory research, and activist and citizen science contributions, she said. However, these communities are fighting an “uphill battle” to collect data, and the data are often not used or integrated with data from other sources to impact the medical system. Terry questioned, “When are we going to start to pay attention to that information, and not keep comparing it to other sources of information? We need it all.” In other industries, data from consumers are highly valued, such as reviews and ratings on Angie’s List. In health care, these data may be more difficult to collect and manage, but they are equally—if not more—useful.

One example of community-led evidence generation is patient registries. These registries, which are often created and managed by community and advocacy groups, capture information about the lived experience of patients. The validity and accuracy of the data collected by these registries have long been questioned, even though, said Terry, there are also issues with validity and accuracy when data are collected in a clinical trial or in the course of clinical practice (e.g., EHRs). There are now registries for a wide variety of communities, from individuals working in homeless communities to people using a specific medical device to parents of autistic children. Technological advances have enabled better communication among these communities, and have facilitated the collection of real-world data (RWD) such as data collected on smartphones and other devices.

The difference between a community-led registry and an industry-led registry, said Terry, is that the community-led registry is focused on the priorities and the lived experiences of the community, rather than on the financial bottom line. Focusing on community priorities enables the registries to answer the questions most relevant to the community, and to give a realistic view of the opportunities and risks of taking a certain path (e.g., using a certain therapeutic), said Terry. Another benefit of community registries is the opportunity for education. Unlike a clinical or trial setting, in which a patient comes in for brief visits, community registries often have opportunities for daily interactions (e.g., through Facebook or chats), and patients can educate and communicate with each other. However, despite the benefits of community-led registries, there is a need for “rigorous and accessible methods for validation” of the data. Terry noted that working toward validation should be a communal effort, and that the methods used to validate should be made accessible to all.

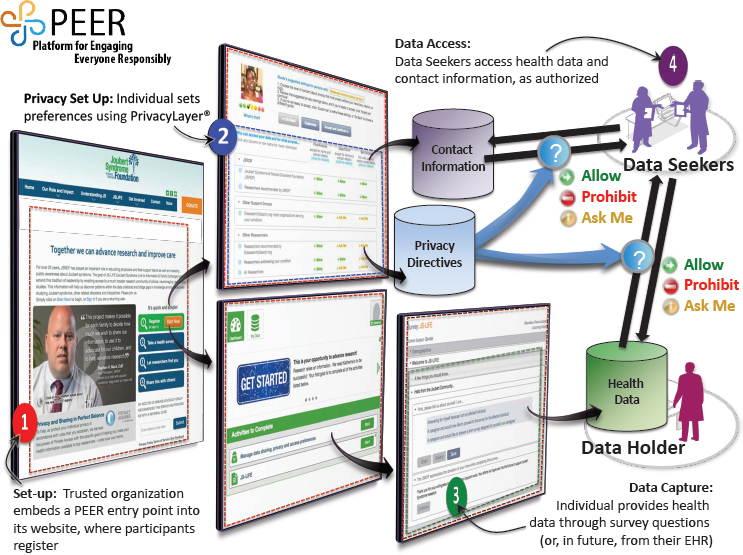

Terry introduced workshop participants to a platform that Genetic Alliance developed called Platform for Engaging Everyone Responsibly (PEER) (see Figure 2-1). This platform allows organizations and communities to create a custom registry, and to offer individuals control over the data they share. Terry explained the process. First, an organization creates a registry and puts a link on its website for patients to register. Individuals who register can choose their own personal privacy settings and the purposes for which their data may be used. The health data, the contact information, and the privacy preferences are held in three separate databases, and the registrant has control over who may access the data. The process of choosing privacy settings is guided by people from the same community as the registrant—for example, the same socioeconomic status, the same race, and/or the same experience with the disease, said Terry. She noted that of the tens of thousands of people who have input their data into the system, about 95 percent say, “Share my data with everybody.” This customizable platform that can be embedded into the communities’ existing website allows organizations to create registries that are responsive to the needs of the community and that “look like” the community. However, they all share the same underlying data structures and are therefore interoperable across diseases. There are currently 45 communities using PEER to build the database they need to get industry attention or to start clinical trials themselves, said Terry.

Terry closed with a quote about keeping the patient at the center of decisions about health care: “Nothing about us without us.” As stakeholders move forward with collecting and using RWE, she said they must keep in mind that patients’ lived experiences are a valuable source of information, and that patients are experts on themselves and their experiences. Of course, the data collected must be aggregated in a way that is rigorous and clear, said Terry, but there is an existing plethora of communi-

NOTE: EHR = electronic health record.

SOURCE: Terry presentation, September 19, 2017.

ties who are ready, willing, and able to generate evidence that can be used for decision making. Stakeholders could build on the successes of these communities, and help to facilitate the rigorous collection and analysis of patient-generated data.

DISCUSSION

Joanne Waldstreicher, chief medical officer at Johnson & Johnson, and Eleanor Perfetto offered some reflections on the presentations by Sherman, Horberg, Ford, and Terry, as well as their own perspectives on the issue of RWE generation. Their input, as well as discussion from the audience, has been divided by topic area.

Patient Perspective: “Will This Work for Me?”

To follow on Terry’s presentation about the patient perspective, Perfetto told participants about a National Health Council roundtable that was held to gather patient views of real-world evidence. One of the predominant findings, said Perfetto, was that patients do not particularly care about the source of evidence. Rather, Perfetto said that what patients most want to know is, “Will this work for me?” Whether the evidence is generated in the real world or in a clinical trial, patients want evidence that will help them make a good decision about their care. In addition to this finding, many patients at the roundtable exercise conveyed a belief that data should belong to patients, and patients should have an opportunity to understand how and by whom their information is being used and to actively opt in to the use of their information in research. Ross McKinney, chief scientific officer at the Association of American Medical Colleges, added that a regulatory and ethical framework to deal constructively with RWD is lacking. In a prospective study, researchers obtain active consent from patients, whereas in studies involving RWD, patient consent and privacy are much less central, he said.

Roundtable contributors also said that EHRs and claims are “not authentic sources of real patient data” because these sources do not include information about patient preferences or experiences. It was proposed, said Perfetto, that clinical data be integrated with the data that matter to patients, such as patient-reported outcomes. This integration would not only present a fuller picture, but would also help with appropriately interpreting the data that come from clinical sources. Patients also revealed frustration with the difficulty of assessing the quality of different types of studies and data, and proposed that patient advocacy groups have access to a scientific board or consultants.

Perfetto echoed Terry’s point that patient communities have long been a source of RWD, and have produced quality data that have made major contributions and led to changes in care. The challenge, said Perfetto, is to integrate all of the data, including community-generated evidence and other types of RWD. Only by looking at the weight of all of the evidence—regardless of where it comes from—can health professionals “help patients make the best decisions and the best choices.”

Data and Analysis Considerations

Califf noted that two different dimensions are involved in generating RWE: the source of the data and the method of analysis. These dimensions are often conflated and discussed as if RWE equates to observational studies. However, studies on RWD can include observation, a variety of randomization methods, and prospective or retrospective analyses, said Califf. Bindman added that while RCTs are often held up as the “gold standard,” using a method other than RCT does not mean that scientific principles are abandoned. Waldstreicher said that study designs are already changing, and that real-world trials that use randomization are becoming more popular. For example, randomized pragmatic studies have made a major difference in some areas of medicine, such as in the field of statins. Designs and tools such as platform trials and master protocols are increasingly important, said Waldstreicher, but it is critical to have regulatory buy-in for these types of trials. Before embarking on a trial, she emphasized that industry needs to know that the evidence generated will be acceptable from a regulatory perspective.

When observational study designs are used, the bar for rigor and quality should be as high as it is for clinical trials, said Waldstreicher: Clinical trials have a number of requirements that grant collective confidence in the data, and observational trials could have similar requirements. Observational trials should be reported with transparency about the sponsor of the trial, the study design, the methodology, the protocol, and the analysis plan, and observational trials should be registered just like clinical trials, said Waldstreicher. When an observational trial is based on analysis of one database, it is valuable to test whether the results can be replicated using a different database.

Rory Collins, head of the Nuffield Department of Population Health at the University of Oxford, offered a slightly different perspective on observational studies. Although observational studies on large databases are expedient and “seductive,” Collins worried that “we’re planning on a very large scale to repeat the errors of the past.” The drawbacks of randomized controlled trials (RCTs) have been discussed at length at this workshop and others, said Collins, but observational studies also have serious limitations. Perhaps the solution is not to do more observational studies, but instead to fix the issues with RCTs, he suggested. Waldstreicher clarified that while she believes there is a role for observational studies, the evidence from these studies should be looked at as part of the totality of the evidence, in combination with data from sources such as randomized pragmatic trials, safety data, and predictive modeling. “We should use all of the tools in our tool chest,” said Waldstreicher, and use knowledge from all sources in an iterative and synergetic fashion. To this end, stakeholders could work to break down compartmentalization, share data, and collaborate in order to build a learning health care system, she said.

Role of Health Systems

Califf addressed the three presenters whose organizations provide and pay for health care in one way or another: Sherman, Horberg, and Ford. He noted that these organizations are making decisions with imperfect evidence, but they are also in a position to improve the evidence base by collecting and sharing RWD from their patients. Califf asked, “What is your obligation to fix [the system]?” Sherman agreed that health systems are in a unique position to encourage behavior change: “Because we control the dollar and the policies, we are in a unique position to . . . encourage certain behaviors or certain types of activities.” Sherman said that Harvard Pilgrim is working closely with other stakeholders to generate better evidence, to collaborate, and to incorporate RWE into decision making. Horberg concurred that payers have a unique role to play; he said that KP feels “a strong sense of obligation to contribute to medical knowledge.” For Horberg, changing the system comes down to improving research practices. Research must be based on a sound scientific question that is the “right” question for the community and the patients, he said.