3

Maintaining Science Leadership

Advanced computing underpins virtually every discipline of science and engineering and is critical to the National Science Foundation’s (NSF’s) mission “to promote the progress of science; to advance the national health, prosperity, and welfare; to secure the national defense; and for other purposes.”1 The use of advanced computing enables discoveries in fundamental areas of physical sciences; provides new insights in biological sciences that have implications for national health; leads to improved engineering of devices; enables the development of new materials and systems with both commercial and defense implications; and aids in our understanding of the environment and society. Advanced computing has traditionally been used for modeling and simulation to interpret and project the implications of mathematical models of physical phenomena and, increasingly, to analyze the large and complex data sets from observations and experiments.

The impacts on science have been both broad and deep. Advanced computing supports the education and research of thousands of students and scientists across the country, and it has been essential to some of the most significant award-winning scientific discoveries. Advanced computing has enabled discoveries across many disciplines, from cosmology and astrophysics to biology and medicine. For example, advanced computing at NSF has been used to understand the formation of the first galaxies in the early universe, to analyze the impacts of cloud-aerosol-radiation interactions on regional climate change, and to understand the design and behavior of computing device technology as the end of Moore’s law scaling approaches. The use of advanced computing systems, including those designed for data-intensive workloads, has expanded beyond traditional domains to understanding social phenomena captured in real-time video streams, connection properties of social networks, and voter redistricting schemes. Other examples of science impacts can be found in Box 3.1.

___________________

1 National Science Foundation, “At a Glance,” http://www.nsf.gov/about/glance.jsp, accessed March 31, 2016.

Advanced computing has been a key to multiple Nobel Prizes (Box 3.2), including the 2013 Nobel Prize in chemistry awarded jointly to Martin Karplus, Michael Levitt, and Arieh Warshel for “the development of multiscale models for complex chemical systems.” The team used NSF TeraGrid resources for particle simulations to predict the structure of proteins and combine molecular dynamics with quantum mechanical calculations.

3.1 CRITICAL ROLE OF NSF

NSF plays a critical role in providing the advanced computational infrastructure, including advanced computing, necessary to keep the United States at the forefront of science and engineering. According to the Networking and Information Technology Research and Development (NITRD) reports2 on investments in high-end computing infrastructure and applications (HECIA), NSF ranked second, behind the Department of Energy (DOE), in 2015 investments in high-end computing facilities: DOE invested more than $350 million, while NSF invested just over $200 million. In the NITRD high-end computing research and development program area, NSF is currently very close to DOE: the Department of Defense (DOD)3 leads with more than $200 million, and NSF and DOE are each in the range of $125 million.4 However, NSF investment in HECIA has declined significantly, both as a percentage of its total investment in NITRD research and in total dollar amount (Figure 2.2), even as the gap between requested and available computing resources has grown (Figure 2.5).

___________________

2 See the fiscal year 2000 through fiscal year 2016 editions of Networking and Information Technology Research and Development National Coordination Office, The Networking and Information Technology Research and Development Program: Supplement to the President’s Budget.

3 DOD includes the Office of the Secretary of Defense, the National Security Agency, and the DOD Service research organizations.

4 Note that the interpretation of the NITRD budget numbers is difficult and not consistent across agencies, as has been observed in President’s Information Technology Advisory Committee reports (2010), and that DOE has been investing in extreme-scale research both through its application communities, with Scientific Discovery through Advanced Computing (SciDAC) and co-design projects, and through research evaluation prototypes, some of which may not have been included in the HECRD (High End Computing Research and Development) category. However, the point here is that the levels of investment are roughly comparable. DOE also supports industry research in processor and memory design, interconnects, and programming environments through the Research and Evaluation Prototypes program.

NSF has a critical role in advanced computing because of its mission to initiate and support “basic scientific research and research fundamental to the engineering process.”5 NSF’s Division of Advanced Cyberinfrastructure (ACI) and its predecessor organizations have supported computational research across NSF and provided services to a user base that spans work sponsored by all federal research agencies. While a large fraction of the leadership-class investments have been driven by the mission-critical requirements of DOE and DOD, NSF has played a pivotal role in advancing both the state of the art in high-performance computing (HPC) software and systems and the scale and scope of the impacts their use enables on key scientific challenges. This role complements those of mission-driven agencies such as DOE, the National Institutes of Health, DOD, and the Defense Advanced Research Projects Agency.

NSF’s current advanced computing investments span a diverse set of resources. NSF supports several large-scale hardware facilities, together with associated staff expertise (Blue Waters at the University of Illinois, Urbana-Champaign, Stampede at the University of Texas, Austin, and Yellowstone at the National Center for Atmospheric Research-Wyoming), long-tail and high-throughput resources (Comet at the University of California, San Diego), data-intensive resources (Wrangler at the University of Texas, Austin, and Bridges at the Pittsburgh Supercomputing Center), and cloud resources (Jetstream at Indiana University).

In February 2012, NSF published Cyberinfrastructure for 21st Century Science and Engineering: Advanced Computing Infrastructure Vision and Strategic Plan.6 The document broadly addressed the cyberinfrastructure that science, engineering, and education communities need to tackle complex problems. The Cyberinfrastructure Framework for 21st Century Science and Engineering (CIF21) strategic plan seeks to position and support the entire spectrum of NSF-funded communities at the cutting edge of advanced computing technologies, hardware, and software. The CIF21 vision is to position NSF to “be a leader in creating and deploying a comprehensive portfolio of advanced computing infrastructure, programs, and other resources to facilitate cutting-edge foundational research in computational and data-enabled science and engineering (CDS&E) and their application to all disciplines.”7 In addition, the vision calls for NSF to “build on its leadership role to promote human capital development and education in CDS&E to benefit all fields of science and engineering.”8 After the publication of the strategic plan, NSF formed a foundation-wide committee with representatives from all directorates to move NSF toward achieving the advanced computing infrastructure vision.

___________________

5 National Science Foundation Act of 1950, as amended, and related legislation, 42 U.S.C. 1861 et seq.

6 National Science Foundation (NSF), Cyberinfrastructure for 21st Century Science and Engineering: Advanced Computing Infrastructure Vision and Strategic Plan, NSF 12-051, http://www.nsf.gov/pubs/2012/nsf12051/nsf12051.pdf, February 2012.

7 Ibid., p. 4.

8 Ibid., p. 4.

3.2 GLOBAL ISSUES

Advanced computing is arguably the most critical ingredient of international science leadership, affecting leadership in other disciplines, providing an essential and often unique role in international experiments, and literally tying international collaborations together through networking, data, and computing infrastructure. Nearly every developed country has some type of national program for computing because of its importance to economic growth, science, defense, and society.

Leadership is difficult to quantify with a single benchmark or by analyzing a single system. The TOP500 list9 ranks computers globally by their performance on the High-Performance Linpack benchmark. The list is sometimes criticized for using this measure, which is much more compute-intensive than most modeling and simulation applications and does not reflect data-intensive workloads. Moreover, the list does not include all advanced computing systems, because some systems are business confidential or classified or, as in the case of Blue Waters, because their owners did not wish to take time away from normal operations to run the benchmark. Nevertheless, the list is an excellent source of historical data and, taken in the aggregate, gives insight into international investments in advanced computing. The United States continues to dominate the list, with 45 percent of the aggregate performance across all machines on the July 2015 list, but its share has dropped substantially from a peak of over 65 percent in 2008. NSF has had systems either high on the list (e.g., Kraken, Stampede) or comparable to the top systems (Blue Waters), reflecting the importance of computing at this level to NSF-supported science. Although other countries’ shares have fluctuated, the U.S. loss in performance share across this period is mostly explained by growth in Asia, with China’s share growing from 1 percent to nearly 14 percent today and Japan’s from 3 to 9 percent.

___________________

9 See the TOP500 website at http://top500.org, accessed January 27, 2016.
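To make the TOP500 discussion concrete, the following is a minimal Python sketch of two quantities it relies on: the rate reported by the High-Performance Linpack (HPL) benchmark and a country’s share of aggregate list performance. It assumes HPL’s nominal operation count of (2/3)n^3 + (3/2)n^2 for a dense n-by-n solve, and it treats “aggregate performance” as the sum of each system’s measured Rmax. The function names, the (country, rmax) input format, and the toy values are hypothetical illustrations, not TOP500 data or tooling.

```python
from collections import defaultdict


def hpl_flop_count(n):
    """Nominal operation count HPL credits for solving a dense n-by-n system.

    HPL reports performance using 2/3*n^3 + 3/2*n^2; the n^3 term comes
    from the LU factorization and dominates for large n.
    """
    return (2.0 / 3.0) * n**3 + 1.5 * n**2


def hpl_rate(n, seconds):
    """Sustained floating-point operations per second for one HPL run."""
    return hpl_flop_count(n) / seconds


def flops_per_byte(n):
    """Operations per byte of matrix storage, assuming 8-byte doubles.

    Work grows as n^3 but storage only as n^2, so this ratio rises roughly
    linearly with n: larger problems do more arithmetic per byte of data.
    """
    return hpl_flop_count(n) / (8.0 * n * n)


def performance_share(systems):
    """Each country's fraction of the list's aggregate Rmax.

    `systems` is a hypothetical iterable of (country, rmax) pairs, all in
    the same units; a real TOP500 export would need to be parsed first.
    """
    totals = defaultdict(float)
    for country, rmax in systems:
        totals[country] += rmax
    aggregate = sum(totals.values())
    return {country: total / aggregate for country, total in totals.items()}


if __name__ == "__main__":
    # Arithmetic intensity grows with problem size.
    for n in (10_000, 100_000):
        print(n, round(flops_per_byte(n), 1))

    # Toy list with made-up values, not real TOP500 data.
    toy_list = [("A", 30.0), ("B", 15.0), ("A", 15.0), ("C", 40.0)]
    shares = performance_share(toy_list)
    print({c: round(s, 2) for c, s in shares.items()})  # {'A': 0.45, 'B': 0.15, 'C': 0.4}
```

The growth of `flops_per_byte` with n is one way to see why HPL is more compute-intensive than most simulation and data-intensive workloads, which typically perform far fewer operations per byte moved.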

The Association for Computing Machinery’s Gordon Bell Prize may be a better metric of scientific talent and usable performance; it is awarded annually to teams that demonstrate the best performance on a real application. Of the 26 awards to date, 20 went to U.S. teams using U.S. systems, some involving participants from other countries. The other 6 went to Japanese teams using the Earth Simulator in the early 2000s and the K computer in 2013, both custom-designed systems. Although NSF systems and staff were involved in some awards, DOE laboratory staff and systems have largely dominated the U.S. awards, and the most recent award went to a commercial entity using a custom system (D.E. Shaw Research’s Anton 2).

China’s Tianhe-2 supercomputer stands at the top of the TOP500 list. Barely visible in high-performance computing 15 years ago,10 China has steadily increased its presence on the list. At the time this report was being prepared, however, China had not announced its new 5-year plan for high-performance computing, so its future plans are difficult to assess precisely.

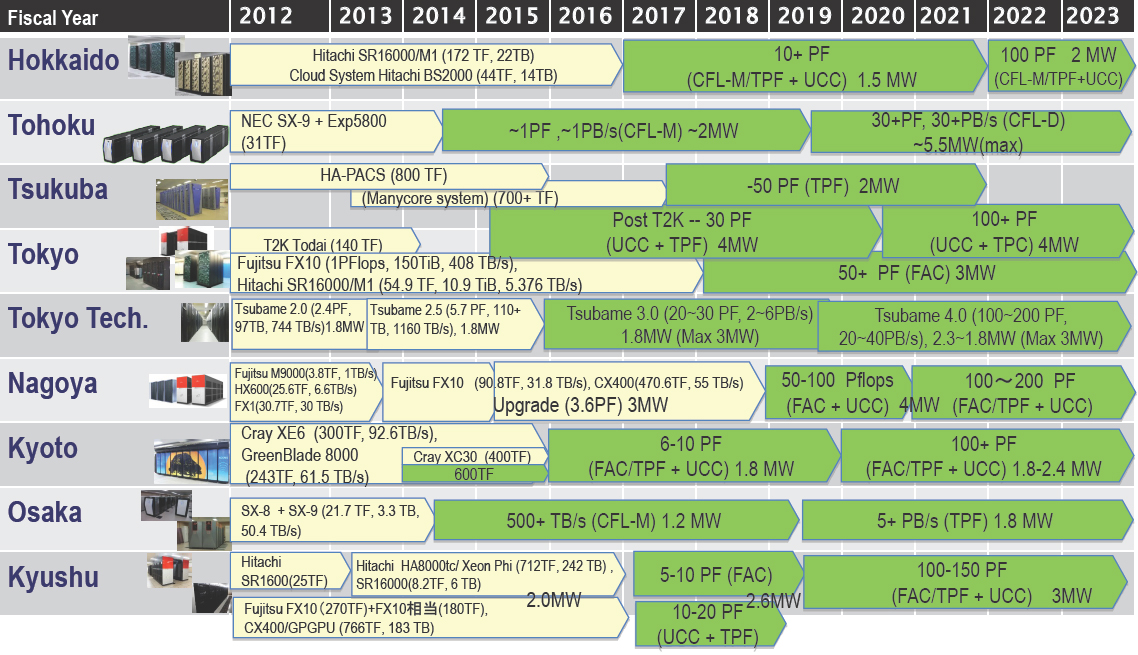

Japan has a long history of strong support for advanced computing for both science and industrial competitiveness. By several measures, Japan has often deployed and operated the world’s fastest machine for science, most recently with the Earth Simulator (#1 on the TOP500 list from 2002 to 2004) and the K computer (#1 on the TOP500 list in 2011). Japan has plans for both a powerful, leadership-class system, called the Flagship 2020 project, and a roadmap for nine powerful systems at university centers. The Flagship 2020 project is expected to provide roughly 1 ExaFLOP/s (10^18 floating-point operations per second), although the focus is on a leadership-class system for science rather than on a particular peak performance target. This system is likely to be one of the most powerful in the world when it becomes operational and a powerful advantage for Japanese scientists. Perhaps more importantly, Japan has a roadmap for what might be considered its second tier: by 2020, it plans to deploy nine highly capable systems at its university HPC centers, eight of them with performance exceeding 10 PetaFLOP/s (Figure 3.1). Although what Japan actually acquires is of course subject to change, the roadmap illustrates the depth of the Japanese government’s commitment to advanced computing.

___________________

10 J. Alspector, A. Brenner, R.F. Leheny, and J.N. Richmann, “China—A New Power in Supercomputing Hardware,” Institute for Defense Analyses, March 27, 2013, https://www.ida.org/~/media/Corporate/Files/Publications/IDA_Documents/ITSD/ida-document-ns-d-4857.ashx.

The situation in Europe is complex. Although there is a cross-European Union (EU) consortium, the Partnership for Advanced Computing in Europe (PRACE), most investment decisions are made by individual countries. Germany, for example, has made significant investments to support both basic research and industrial competitiveness. The Juqueen system at the Juelich Research Center, an IBM Blue Gene/Q system, is one of the most powerful systems in the world; it is over half the size of the Mira system at Argonne National Laboratory in Illinois, one of the two leadership-class systems operated by DOE’s Office of Science. Germany has three other systems ranked in the top 25. Outside the EU, other European countries have their own powerful systems. For example, the Swiss National Supercomputing Center operates a system ranked #6 on the June 2015 TOP500 list.

In terms of data-intensive computing, the United Kingdom’s eScience program identified the emergence of data-intensive computing, as a complement to traditional simulation and modeling research activities, as long ago as the early 2000s. The program would later prove influential in launching NSF’s cyberinfrastructure program and serve as an inspiration for other national programs, such as the Australian eResearch and the Dutch eScience programs.11

Another country that has made significant recent investments is Saudi Arabia. Its Shaheen system, an IBM Blue Gene, and its more recent Shaheen II, a Cray XC40, both installed at the King Abdullah University of Science and Technology, have been among the world’s fastest systems for scientific research. The Indian government has approved a 7-year supercomputing program worth $730 million (Rs. 4,500 crore), intended to revitalize its program and raise the nation’s status as a world-class computing power.

Leadership means drawing the best talent nationally and internationally and supporting the training of the next generation of scientists in computer science, data sciences, and scientific computing. This next generation includes the designers of future computer architectures, systems software, algorithms, and computational tools, as well as the developers of applications. These future impacts are difficult to quantify, except by pointing to the historical benefits that the United States has seen from computing and to the continual demand by industry, government, and academia for experts in the design and use of advanced computing, networking, and data systems.

___________________

11 International Panel for the 2009 RCUK Review of the e-Science Programme, Review of e-Science 2009: Building a UK Foundation for the Transformative Enhancement of Research and Innovation, Research Councils UK and the Royal Society, 2009, https://www.epsrc.ac.uk/newsevents/pubs/rcuk-review-of-e-science-2009-building-a-uk-foundation-for-the-transformative-enhancement-of-research-and-innovation/.