2

Pollutant Monitoring Requirements and Benchmark Thresholds

This committee was charged with recommending potential improvements to the current Multi-Sector General Permit (MSGP) monitoring requirements, including monitoring by additional sectors not currently subject to benchmark monitoring, monitoring for additional pollutants related to industrial activity, and adjusting benchmark threshold levels. As discussed in Chapter 1, the Environmental Protection Agency (EPA) is currently developing Additional Implementation Measure (AIM) requirements, in response to a legal settlement, that would provide actionable consequences for large or repeated benchmark exceedances. These changes place greater emphasis on ensuring that the MSGP uses appropriate benchmarks. In this chapter, the committee provides a broad assessment of the current MSGP benchmark monitoring process and summarizes the most recent MSGP monitoring data. The chapter then describes ways to improve pollutant monitoring requirements, addressing industrial activities not currently covered by the MSGP, industry-wide benchmarks, and sector-specific monitoring requirements. The committee also discusses the adequacy of current benchmark threshold levels in light of recent information on toxicity and treatment attainability.

ASSESSMENT OF CURRENT MSGP BENCHMARK MONITORING

The original 1995 MSGP monitoring scheme was based on program elements embedded in sound administrative, scientific, and public policy principles (EPA, 1995, p. 50804). Many of the program elements, however, have been hampered by shortfalls in generating, considering, and acting on new information. This has resulted in missed opportunities for refining the MSGP monitoring requirements in support of improved stormwater management. Some of these key program elements are summarized in Table 2-1.

The pollutant monitoring requirements of the MSGP are particularly dated and have not been substantially updated over time. Many industrial sectors have never collected and reported data for any of the conventional and nonconventional pollutants, toxic pollutants, and hazardous substances listed in Appendix B. With the group application process, industrial sectors were directed to sample for specific pollutants based on their own determination of whether they had knowledge or reason to believe a pollutant may be present in their stormwater discharges.

Consequently, some industrial groups submitted more information than others, causing monitoring data submittal discrepancies among some sectors. The result is a disparity in the relative monitoring burden across sectors. This disparity is shown in Table 2-2 for five example sectors. Sectors M and N1 have benchmark monitoring requirements and Sectors I, P, and R have no benchmark monitoring requirements. Sectors I and R self-determined through the group application process that no sector-specific pollutants needed to be tested in their discharges. In contrast, Sectors M, N1, and P determined that pollutant testing was, in fact, necessary, with Sector N1 making that determination for the highest number of pollutants.

TABLE 2-1

Evolution of Key MSGP Program Elements Affecting Monitoring Requirements

| Factors Affecting Monitoring Requirements | 1995 MSGP Approach | Current Assessment |
|---|---|---|
| Pollutant Characterization | | |
| Gross characterization of basic chemical parameters in stormwater discharges | Baseline sampling was required, and results were statistically characterized | Has not been repeated since 1992 |
| Characterization of specific pollutants in discharges by sector/subsector | Industries self-determined which pollutants (beyond the baseline sampling pollutants) needed to be analyzed; industry data, where provided, were statistically characterized | Gaps in industry sampling have not been addressed |
| Evaluation of pollution potential by sector/subsector based on activities, sources, and pollutants | Activities indicated in permit applications were summarized along with likely sources of stormwater contamination and pollutants that may be present | Industry fact sheets have not been updated since 2006. Fact sheets and other sector-specific information have not been used to update MSGP monitoring requirements |
| Sampling and Analysis | | |
| Availability of sampling methods and protocols | Sampling methods were limited to grab sampling | Sampling methods have not been updated to include more broadly available and representative methods |
| Availability of sufficiently sensitive analytical test methods | The sensitivity of analytical methods informed the choice of benchmarks and monitoring requirements | Improvements in analytical capabilities were incorporated into the 2008 MSGP for some metals, but it remains unclear whether or how updated analytical methods are considered for other benchmark requirements |
| Pollutant Reductions | | |
| Capacity of stormwater control measures (SCMs) to reduce pollutant loads | Options for controlling pollutants and the capacities of SCMs were evaluated when setting benchmarks | The 1995 evaluation was limited and has not been comprehensively updated |

Literature reviews generating new information on pollutants associated with industrial activity and their presence in the environment (EPA, 1995, 2006a; O’Donnell, 2005) have not been systematically or comprehensively used to update the MSGP. These are missed opportunities to characterize, and likely reduce, pollution in industrial stormwater discharges.

CONTEXT OF RECENT MSGP DATA

A review of recent MSGP monitoring results helps evaluate the current state of MSGP benchmark monitoring compliance and provides important context for the committee’s findings. More than 17,000 reported results were evaluated from MSGP-permitted facilities in the four states where EPA is the permitting authority (Idaho, Massachusetts, New Hampshire, and New Mexico), the District of Columbia, U.S. territories, Indian country, and some federal facilities. The data were submitted electronically by permittees under the 2015 MSGP through February 13, 2018. Tables 2-3 and 2-4 summarize the discharge monitoring results. For each pollutant-sector combination, the graphical results are color coded based on the percentage of individual reported results with concentrations above the benchmark (Table 2-3) or above eight times the benchmark (Table 2-4). Tables 2-3 and 2-4 do not indicate MSGP benchmark exceedances, which are determined from the average of four quarterly samples and which trigger review of the stormwater pollution prevention plan and 1 year of additional monitoring. However, they provide insight into the sectors and pollutants with frequently elevated discharge concentrations. Eight times the benchmark was selected as indicative of a major elevated discharge concentration, as suggested for the AIM process (see Box 1-3). To be included in Tables 2-3 and 2-4, each pollutant had to have at least eight reported results for the subsector. The committee recognizes that more storm event results (18 to 24) are preferable for stormwater data analysis, given the inherent variability of precipitation events. However, because available data on industrial sites are limited, a lower threshold of eight reported results was used. Additional pollutant-specific tables and graphs, a description of the data set, details of the committee’s analysis, and known limitations of the data set are provided in Appendix D.

TABLE 2-2

Benchmark Pollutant Evaluation for Five Sample Sectors

| | Oil and Gas Extraction and Refining (Sector I) | Automobile Salvage Yards (Sector M) | Scrap and Waste Recycling Facilities (Sector N1) | Motor Freight, Rail, Passenger, and U.S. Postal Service Transportation Facilities (Sector P) | Ship and Boat Building or Repair Yards (Sector R) |
|---|---|---|---|---|---|
| Monitoring Data Supplied in 1992 Industry Group Application (beyond baseline parameters) | None | Aluminum, iron, lead | Lead, zinc, cadmium, copper, chromium, iron, nickel, arsenic, aluminum, polychlorinated biphenyls (PCBs) | Lead, zinc | None |
| 1995 MSGP Common Pollutants Listed | Total suspended solids (TSS), total dissolved solids, oil and grease, chemical oxygen demand (COD), chlorides, barium, naphthalene, phenanthrene, benzene, lead, arsenic, fluoride, pH, acetone, toluene, ethanol, xylenes, antimony | TSS, oil and grease, ethylene glycol, heavy metals, sulfuric acid, petroleum hydrocarbons, chlorinated solvents, acid/alkaline wastes, arsenic, organics, detergents, phosphorus, salts, bacteria, biochemical oxygen demand (BOD) | Hydraulic fluids, oils, fuels, grease and other lubricants, accumulated particulate matter, chemical additives, PCBs, antifreeze, acid, mercury, lead, heavy metals | Fuel, oil, heavy metals, chlorinated solvents, acid/alkaline wastes, ethylene glycol, arsenic, organics, paint, dust, sediment, detergents, salts, phosphorus, sodium chloride, BOD | Paint solids, heavy metals, suspended solids, spent abrasives, solvents, dust, oil, ethylene glycol, acid/alkaline wastes, detergents, fuel, BOD, bacteria |
| 2015 MSGP Benchmark Monitoring Requirements | None | TSS, aluminum, iron, lead | TSS, COD, aluminum, copper, iron, lead, zinc | None | None |

When the results are evaluated by sector, several sectors emerge with a large percentage of samples above the benchmark for more than one pollutant, and some even have a large percentage of samples above eight times the benchmark. For example, in Sector H (coal mines and coal-mining-related facilities), more than half of the samples exceeded eight times the benchmark for total suspended solids (TSS), and 95 percent exceeded eight times the benchmarks for aluminum and iron. In Sector A2 (wood preserving), more than half of the samples exceeded the benchmarks for chemical oxygen demand (COD), copper, and TSS, and 81 percent exceeded eight times the benchmark for copper. Sector F4 (nonferrous foundries) reported frequent high levels of zinc and copper, with 30 and 50 percent of the samples, respectively, above eight times the benchmark. In Sector N1 (scrap recycling), more than 50 percent of the samples were above the benchmarks for copper, iron, and zinc, and more than 10 percent exceeded eight times the benchmarks for aluminum, copper, iron, and zinc.

Meeting benchmarks also proved more difficult for some pollutants than for others. No sector met the magnesium benchmark in more than 50 percent of its samples. Copper, zinc, and iron also had large percentages of samples above the benchmarks in most sectors.
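The binning behind Tables 2-3 and 2-4 can be expressed as a small function. The following is a minimal Python sketch, not the committee's analysis code. It assumes each pollutant-subsector combination is a list of individual results in the same units as a single upper-limit benchmark (so it does not cover the pH range), counts a result as "above" only when it is strictly greater than the threshold, and places a percentage that falls between the printed bin edges (for example, 25.5 percent) in the next bin up. The function names and label strings are hypothetical.

```python
from typing import Sequence

MIN_RESULTS = 8     # minimum reported results per pollutant-subsector combination
EIGHT_TIMES = 8.0   # multiple of the benchmark used for Table 2-4


def percent_above(results: Sequence[float], threshold: float) -> float:
    """Percentage of individual results strictly greater than the threshold."""
    return 100.0 * sum(1 for r in results if r > threshold) / len(results)


def table_2_3_bin(results: Sequence[float], benchmark: float) -> str:
    """Bin by the share of individual results above the benchmark."""
    if not results:
        return "No data"
    if len(results) < MIN_RESULTS:
        return "Insufficient data (<8 results)"
    pct = percent_above(results, benchmark)
    if pct < 10:
        return "<10% above BM"
    if pct <= 25:
        return "10-25% above BM"
    if pct <= 50:
        return "26-50% above BM"
    return ">50% above BM"


def table_2_4_bin(results: Sequence[float], benchmark: float) -> str:
    """Bin by the share of individual results above eight times the benchmark."""
    if not results:
        return "No data"
    if len(results) < MIN_RESULTS:
        return "Insufficient data (<8 results)"
    pct = percent_above(results, EIGHT_TIMES * benchmark)
    if pct == 0:
        return "0% above 8x BM"
    if pct < 10:
        return "1-9% above 8x BM"
    if pct <= 25:
        return "10-25% above 8x BM"
    return ">25% above 8x BM"


if __name__ == "__main__":
    # Hypothetical results expressed relative to a benchmark of 1.0; not MSGP data.
    demo = [2.0] * 9 + [0.5] * 2
    print(table_2_3_bin(demo, benchmark=1.0))  # >50% above BM
    print(table_2_4_bin(demo, benchmark=1.0))  # 0% above 8x BM
```

Applying the same minimum-count check before either binning step mirrors the rule that combinations with fewer than eight results are shown as insufficient rather than binned.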

TABLE 2-3

NetDMR 2015 MSGP Data According to the Percentage of Results Above Benchmarks

| Sector | Al | NH3 | As | BOD5 | Cd | COD | Cu | CN | Fe | Pb | Mg | Hg | NO2+NO3 | pH | TP | Se | Ag | TSS | Turb | Zn |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| A1: Sawmills | ||||||||||||||||||||
| A2: Wood | ||||||||||||||||||||
| A3: Log storage | ||||||||||||||||||||
| A4: Hardwoods | ||||||||||||||||||||
| B1: Paperboard | ||||||||||||||||||||
| B2: Pulp mills | ||||||||||||||||||||
| C1: Agricultural | ||||||||||||||||||||
| C2: Industrial inorganics | ||||||||||||||||||||
| C3: Cleaning, cosmetics | ||||||||||||||||||||
| C4: Plastics | ||||||||||||||||||||
| C5: Medicinals | ||||||||||||||||||||
| D1: Asphalt | ||||||||||||||||||||
| E2: Concrete | ||||||||||||||||||||
| E3: Glass | ||||||||||||||||||||
| F1: Steel works | ||||||||||||||||||||
| F2: Iron/steel foundries | ||||||||||||||||||||
| F3: Nonferrous metals | ||||||||||||||||||||
| F4: Nonferrous foundries | ||||||||||||||||||||
| G1: Copper ore | ||||||||||||||||||||
| G2: Other ores | ||||||||||||||||||||
| H: Coal mines | ||||||||||||||||||||
| J1: Construction sand | ||||||||||||||||||||
| J2: Stone | ||||||||||||||||||||
| J3: Clay mineral mining | ||||||||||||||||||||
| K: Hazardous waste | ||||||||||||||||||||
| L1: Landfills | ||||||||||||||||||||
| L2: Landfills, not MSW | ||||||||||||||||||||
| M: Automobile salvage | ||||||||||||||||||||
| N1: Scrap recycling | ||||||||||||||||||||
| O1: Steam electric | ||||||||||||||||||||
| P: Transportation, postal | ||||||||||||||||||||
| Q: Water transportation | ||||||||||||||||||||
| R: Ship and boat building | ||||||||||||||||||||
| S: Air transportation | ||||||||||||||||||||
| T: Sewage treatment | ||||||||||||||||||||
| U1: Grain mill products | ||||||||||||||||||||
| U3: Meat, dairy, tobacco | ||||||||||||||||||||
| Y1: Rubber | ||||||||||||||||||||
| Y2: Misc. plastics | ||||||||||||||||||||
| AA1: Fabricated metals | ||||||||||||||||||||
| AA2: Fabr. metal coating | ||||||||||||||||||||
| AB: Machinery | ||||||||||||||||||||
| AC: Electronics |

| No data | Insufficient data (<8 results) | <10% above benchmark (BM) | 10–25% above BM | 26–50% above BM | >50% above BM |
|---|---|---|---|---|---|

NOTE: MSW = municipal solid waste.

TABLE 2-4

Categorization of NetDMR Data Based on the Percentage of Results Above Eight Times the Benchmark

| Sector | Al | NH3 | As | BOD5 | Cd | COD | Cu | CN | Fe | Pb | Mg | Hg | NO2+NO3 | TP | Se | Ag | TSS | Turb | Zn |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| A1: Sawmills | |||||||||||||||||||
| A2: Wood | 81% | 13% | |||||||||||||||||
| A3: Log storage | |||||||||||||||||||
| A4: Hardwoods | |||||||||||||||||||
| B1: Paperboard | |||||||||||||||||||
| B2: Pulp mills | |||||||||||||||||||
| C1: Agricultural | 13% | 25% | |||||||||||||||||
| C2: Industrial inorganics | |||||||||||||||||||
| C3: Cleaning, cosmetics | |||||||||||||||||||
| C4: Plastics | 16% | ||||||||||||||||||
| C5: Medicinals | 50% | ||||||||||||||||||
| D1: Asphalt | |||||||||||||||||||
| E2: Concrete | 17% | ||||||||||||||||||
| E3: Glass | |||||||||||||||||||
| F1: Steel works | |||||||||||||||||||
| F2: Iron/steel foundries | |||||||||||||||||||
| F3: Nonferrous metals | 14% | 12% | |||||||||||||||||
| F4: Nonferrous foundries | 50% | 30% | |||||||||||||||||
| G1: Copper ore | |||||||||||||||||||
| G2: Other ores | |||||||||||||||||||
| H: Coal mines | 95% | 95% | 55% | ||||||||||||||||
| J1: Construction sand | |||||||||||||||||||
| J2: Stone | 11% | ||||||||||||||||||
| J3: Clay mineral mining | |||||||||||||||||||
| K: Hazardous waste | 83% | ||||||||||||||||||
| L1: Landfills | |||||||||||||||||||
| L2: Landfills, not MSW | 17% | ||||||||||||||||||
| M: Automobile salvage | |||||||||||||||||||
| N1: Scrap recycling | 13% | 26% | 18% | 13% | |||||||||||||||
| O1: Steam electric | |||||||||||||||||||
| P: Transportation, postal | |||||||||||||||||||
| Q: Water transportation | 12% | 61% | 12% | ||||||||||||||||
| R: Ship and boat building | 81% | ||||||||||||||||||
| S: Air transportation | 16% | ||||||||||||||||||
| T: Sewage treatment | 10% | ||||||||||||||||||
| U1: Grain mill products | |||||||||||||||||||
| U3: Meat, dairy, tobacco | 13% | ||||||||||||||||||
| Y1: Rubber | 23% | ||||||||||||||||||
| Y2: Misc. plastics | |||||||||||||||||||
| AA1: Fabricated metals | 46% | ||||||||||||||||||
| AA2: Fabr. metal coating | |||||||||||||||||||
| AB: Machinery | |||||||||||||||||||
| AC: Electronics |

| No data | Insufficient data (<8 results) | 0% above 8× BM | 1–9% above 8× BM | 10–25% above 8× BM | >25% above 8× BM |
|---|---|---|---|---|---|

NOTE: MSW = municipal solid waste.

TABLE 2-5

Percent Benchmark Exceedances in 2008 MSGP NetDMR Data Based on Annual Averages as Reported in EPA (2012)

| Sector | Al | NH3 | As | BOD5 | Cd | COD | Cu | CN | Fe | Pb | Mg | Hg | NO2+NO3 | TP | Se | Ag | TSS | Zn |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| A | 33 | 100 | 16 | 17 | ||||||||||||||
| B | 0 | |||||||||||||||||
| C | 19 | 48 | 0 | 17 | 0 | 100 | ||||||||||||
| D | 14 | |||||||||||||||||
| E | 71 | 19 | ||||||||||||||||
| F | 71 | 50 | 0 | 72 | ||||||||||||||
| J | 25 | 8 | ||||||||||||||||
| K | 0 | 0 | 0 | 0 | 0 | 0 | 100 | 0 | 0 | 0 | ||||||||
| L | 91 | 38 | ||||||||||||||||
| M | 25 | 52 | 22 | 9 | ||||||||||||||
| N | 53 | 43 | 75 | 88 | 50 | 37 | 67 | |||||||||||
| O | 67 | |||||||||||||||||
| Q | 40 | 33 | 0 | 100 | ||||||||||||||
| U | 0 | 0 | 20 | 20 | ||||||||||||||
| Y | 0 | |||||||||||||||||
| AA | 36 | 65 | 26 | 74 |

| No data reported | <10% above BM as annual average | 10–25% above BM as annual average | 26–50% above BM as annual average | >50% above BM as annual average |
|---|---|---|---|---|

Table 2-5 provides a graphical representation of the results of an analysis of electronically submitted benchmark monitoring data from the 2008 MSGP (EPA, 2012). Table 2-5 is formatted in parallel with Table 2-3, with two main differences. First, Table 2-5 reflects the percentages of annual averages (based on four quarterly results) that exceeded the benchmark, rather than percentages of individual results; the color coding by percentage exceedance is therefore more stringent than that in Table 2-3. Second, the monitoring data were not separated into subsectors. The EPA (2012) analysis was based on far fewer data than the analyses above, because electronic submission of MSGP data was not mandated until 2016. Several parameters have data from only one permittee and, in some cases, from only one outfall, so the data are too limited to assess any trend between 2008 and 2015. Nonetheless, several of the issues identified in EPA (2012) are also apparent in the recent data in Table 2-3. Again, pollutants frequently above the benchmark include magnesium, copper, iron, and zinc. Without a subsector breakdown, however, comparisons among sectors are more problematic.
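The difference between counting individual results (Tables 2-3 and 2-4) and averaging four quarterly results (Table 2-5 and the MSGP exceedance determination) can be illustrated with hypothetical numbers. This minimal Python sketch assumes four quantified quarterly results and a single upper-limit benchmark; it ignores other permit details, such as the handling of non-detects, and its function names are illustrative only.

```python
from statistics import mean
from typing import Sequence


def share_above(quarterly: Sequence[float], benchmark: float) -> float:
    """Fraction of individual quarterly results above the benchmark (Table 2-3 style)."""
    return sum(1 for q in quarterly if q > benchmark) / len(quarterly)


def annual_average_exceeds(quarterly: Sequence[float], benchmark: float) -> bool:
    """True if the average of four quarterly results is above the benchmark (Table 2-5 style)."""
    if len(quarterly) != 4:
        raise ValueError("expected exactly four quarterly results")
    return mean(quarterly) > benchmark


if __name__ == "__main__":
    benchmark = 1.0  # hypothetical benchmark, arbitrary units
    one_spike = [0.5, 0.6, 0.7, 3.0]     # one high result pulls the average above the benchmark
    two_moderate = [1.1, 1.2, 0.2, 0.3]  # half the results are above, but the average is not
    for quarters in (one_spike, two_moderate):
        print(share_above(quarters, benchmark), annual_average_exceeds(quarters, benchmark))
    # prints "0.25 True", then "0.5 False"
```

A site can therefore have frequent individual results above the benchmark without an annual-average exceedance, and the reverse, which is one reason the percentages in Tables 2-3 and 2-5 are not directly comparable.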

Tables 2-3 through 2-5 highlight the ongoing challenges faced by several industrial sectors in which a large portion of monitoring results were above the benchmarks under both the 2008 and 2015 MSGPs. The remainder of this chapter discusses ways MSGP pollutant monitoring requirements could be improved to enhance industrial stormwater management within the program.

IMPROVING POLLUTANT MONITORING REQUIREMENTS

In this section, the committee reviews the benchmark monitoring requirements within the MSGP. The committee identifies industrial activities not currently covered by the MSGP, discusses the value of industry-wide benchmark monitoring, and analyzes sector-specific pollutant monitoring requirements.

Industrial Activities Not Covered by the MSGP

Industrial facilities in the MSGP are classified within sectors based on the products they generate, using the legacy standard industrial classification (SIC) code. The MSGP was intended to cover discharges associated with industrial activity, not just discharges from facilities whose main purpose has been defined as industrial (EPA, 1995b). SIC codes do not adequately characterize the on-site activities that pose a risk of stormwater pollution. Some facilities, such as gas stations and school bus transportation facilities, are not included in the MSGP because they are not considered industrial facilities, even though the environmental risks associated with their outdoor activities may be similar to or greater than those at facilities the MSGP covers.

The MSGP should extend coverage to facilities, including commercial ones, that are not explicitly defined as “industrial” under the SIC structure of the National Pollutant Discharge Elimination System stormwater regulations if they conduct on-site activities equivalent to the industrial activities covered under the MSGP. These facilities should be subject to the same monitoring requirements as industries with similar on-site activities. Relevant site types and activities include

- Timber lots,

- Fuel storage and on-site fueling,

- Vehicle maintenance (e.g., school bus transportation facilities),

- Facilities with numerous parked diesel vehicles,

- Outdoor materials storage that poses stormwater contamination threats (e.g., liquid tanks with operational valves or in poor condition, solids such as salt or wood chips that are exposed to stormwater), and

- Outdoor handling of materials (e.g., filling liquid tank trucks, conveyors handling solids in particulate form).

Some states have already done this. Maryland, for example, designates Department of Public Works highway maintenance facilities and school bus facilities for coverage under Sector AD of its general permit (MDEP, 2014). Connecticut’s general permit adds several activities to the definition of “stormwater associated with industrial activity” (CT DEEP, 2018), including small-scale composting facilities; road salt and deicing material storage facilities; wood processing facilities not otherwise covered, including mulching, chipping, and mulch coloring facilities; and vehicle service and storage facilities (including public works garages) operated by federal, state, or municipal governments.

EPA should identify the industrial, commercial, and retail activities currently excluded by the MSGP’s SIC-based approach that have stormwater pollution potential comparable to that of industrial facilities currently regulated under the MSGP. In its upcoming revisions to the MSGP, EPA should consider ways to include these facilities, with monitoring requirements equivalent to those of similar facilities. This would improve stormwater management and the characterization of discharges at these facilities.
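To contrast an activity-based trigger with the SIC-based approach, the following hypothetical Python sketch flags a site for coverage if its SIC code is already covered or if any of its on-site activities matches the kinds of activities listed earlier in this section. The activity labels and function names are invented for illustration and do not correspond to any EPA or state classification scheme.

```python
from typing import Iterable, Set

# Hypothetical labels paraphrasing the activities listed in the text.
TRIGGER_ACTIVITIES: Set[str] = {
    "timber_lot",
    "fuel_storage_or_fueling",
    "vehicle_maintenance",
    "parked_diesel_vehicles",
    "exposed_outdoor_storage",
    "outdoor_material_handling",
}


def triggering_activities(site_activities: Iterable[str]) -> Set[str]:
    """Return the on-site activities that match the trigger list."""
    return TRIGGER_ACTIVITIES & set(site_activities)


def needs_coverage(covered_by_sic: bool, site_activities: Iterable[str]) -> bool:
    """Covered if the SIC code already applies or any trigger activity occurs on site."""
    return covered_by_sic or bool(triggering_activities(site_activities))


if __name__ == "__main__":
    # A school bus depot: not covered by SIC code, but it maintains and fuels vehicles.
    depot = ["vehicle_maintenance", "fuel_storage_or_fueling", "office"]
    print(needs_coverage(False, depot))  # True
```

Under such a scheme, the matched activities, rather than the SIC code, would determine which monitoring requirements apply.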

Industry-wide Benchmark Measurements for All Sectors

A primary goal of the MSGP benchmark monitoring requirements is to indicate the performance of structural and nonstructural SCMs in ensuring the quality of stormwater leaving industrial sites. The committee recommends a suite of water quality parameters for benchmark monitoring by all industrial sites that must conduct stormwater sampling, including those that currently conduct only visual monitoring. Such industry-wide monitoring would provide indicators of problems at a wide range of sites and a baseline understanding of industrial stormwater risk for all sectors. It would also provide stormwater quality information that could be compared across industries regardless of sector, and it would help address some of the monitoring disparities that resulted from the group application process. Such monitoring has been recommended by other reviews of the MSGP (O’Donnell, 2005), and several states currently use some degree of industry-wide monitoring (see Appendix A). The committee recommends three industry-wide parameters:

- pH detects excess acidic or alkaline substances in the water, and pH excursions indicate corrosive (acidic or basic) and/or toxic concerns. Heavily polluted stormwater discharges do not necessarily exhibit pH problems. However, highly acidic or highly alkaline values outside the benchmark range (6.0–9.0) can indicate a major polluting event or process failure and can harm receiving waters. Unexpected pH values can also indicate that a stormwater treatment system is not operating properly. For example, a limestone-based treatment system typically raises pH, so a low effluent pH may indicate system failure. pH is simple and inexpensive to measure and is currently required as an industry-wide benchmark by California, Connecticut, and Washington (see Appendix A).

- Total Suspended Solids (TSS) is a measure of suspended particulate matter in a water sample. Particulate matter can result from erosion of industrial soils, particulate matter deposited on the drainage area, erosion or corrosion of materials present on the site, and the general cleanliness of the site. TSS also provides information about possible high concentrations of numerous other pollutants that partition onto particulate matter, including phosphorus, many heavy metals, and many hydrophobic organic chemicals. Stormwater TSS concentrations in receiving waters are highly correlated with concentrations of metals, such as copper, lead, and zinc, that are known to cause freshwater and marine toxicity (Schiff and Tiefenthaler, 2011). Several treatment and nonstructural SCMs are available to control TSS (Clark and Pitt, 2012), and TSS can provide information about their performance or the need for additional SCMs at a site (Avila et al., 2008). TSS is a standardized, well-established test. Suspended sediment concentration (SSC; an approved method under 40 CFR § 136.3 for filterable residue) was considered as an alternative to TSS. SSC is generally judged to be a more accurate measure of particulate matter in stormwater because it captures all sediment, not just the suspended fraction. However, SSC complicates the monitoring process because it requires a separate sample for this parameter alone. Turbidity has also been suggested as an indicator of suspended solids. However, TSS provides a better basis for comparison with historical data, which are more commonly reported as TSS. TSS is currently required as an industry-wide benchmark by California, Connecticut, and Minnesota (see Appendix A).

- Chemical Oxygen Demand (COD) is a surrogate measure of organic pollutants in water (through measurement of oxygen demand). It is a conventional water quality parameter with established industrial stormwater benchmarks. Beyond oxygen demand, high COD can also indicate oil and hydrocarbon pollution (Han et al., 2006a) and, as with TSS, can be an indicator of overall site cleanliness. Increases in COD could also indicate problems with the effectiveness of treatment SCMs, including the need for maintenance. The committee recognized that total organic carbon (TOC), which generally provides the same information of interest as COD, would be a better measure of organic pollution in water for several reasons. TOC analysis is simple, standardized, and easier to automate than COD analysis. It uses fewer toxic chemicals and can produce results that are much more sensitive, precise, and accurate than those for COD. However, TOC does not have an EPA-established benchmark or a history of data as COD does, and TOC may be less effective at measuring colloidal or particulate organic matter. Once EPA develops a benchmark for TOC, it should weigh the overall advantages and disadvantages of converting to TOC monitoring. Although both COD and TOC are gross measures of organic pollution, neither is specific or sensitive enough to detect possible excursions of toxic pollutants (e.g., polycyclic aromatic hydrocarbons [PAHs]) at moderate or low concentrations. COD is currently required as an industry-wide benchmark by Connecticut (see Appendix A).
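A per-sample check against the three industry-wide parameters could look like the minimal Python sketch below. Only the pH range (6.0 to 9.0) comes from the text; the TSS and COD benchmark values are passed in as arguments because they depend on the applicable permit, and the numbers used in the usage example are placeholders. The `StormSample` record and the `flag_sample` function are hypothetical.

```python
from dataclasses import dataclass
from typing import List

PH_RANGE = (6.0, 9.0)  # benchmark range stated in the text (standard units)


@dataclass
class StormSample:
    ph: float
    tss_mg_l: float
    cod_mg_l: float


def flag_sample(s: StormSample, tss_benchmark: float, cod_benchmark: float) -> List[str]:
    """Return the industry-wide parameters that fall outside their benchmarks."""
    flags: List[str] = []
    low, high = PH_RANGE
    if not (low <= s.ph <= high):
        flags.append("pH")  # too acidic or too alkaline
    if s.tss_mg_l > tss_benchmark:
        flags.append("TSS")
    if s.cod_mg_l > cod_benchmark:
        flags.append("COD")
    return flags


if __name__ == "__main__":
    # Placeholder benchmarks for illustration; substitute the applicable permit values.
    tss_bm, cod_bm = 100.0, 120.0
    # Low pH downstream of a limestone-based system might point to treatment failure.
    print(flag_sample(StormSample(ph=5.2, tss_mg_l=40.0, cod_mg_l=30.0), tss_bm, cod_bm))  # ['pH']
```

Returning the list of flagged parameters, rather than a single pass/fail value, keeps the output useful as a diagnostic: a TSS flag alone points toward particulate sources and sediment controls, whereas a pH flag may point toward a process or treatment failure.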

All three parameters are direct measures of water quality and are appropriate choices for industry-wide sampling because all three can be indicators of broader water quality problems and the presence of other pollutants. In addition, these industry-wide water quality parameters can provide indications of SCM absence, neglect, or failure, which can lead to high concentrations of potential pollutants. There are well-established standardized analytical procedures for all three recommended industry-wide parameters, and analytical determinations are expected to be relatively inexpensive (less than $100 for all three). Considering that all permittees must collect quarterly storm event samples for visual monitoring, the additional cost burden of these analyses is expected to be small.
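As a rough upper bound, and assuming (the text does not state the unit) that the under-$100 figure covers all three analyses for one storm event sample and that one such sample is analyzed each quarter at a given sampling point, the added annual analytical cost per sampling point is:

```latex
C_{\text{annual}} = n_{\text{quarters}} \times c_{\text{sample}} < 4 \times \$100 = \$400
```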

Review of Pollutant Monitoring Requirements by Sector

For the most part, the monitoring requirements in the MSGP were based on the best information available when they were derived. However, information gained since the MSGP was developed merits changes for a number of sectors. Some sectors are not required to conduct benchmark monitoring at all. Other sectors are required to monitor only a very limited number of pollutants (see Table 1-1), and some sectors are not required to monitor substances that may be important pollutants in stormwater discharged from their sites. This section reviews the monitoring requirements of the MSGP and identifies areas that the committee recommends updating based on the current understanding of risk and pollutant occurrence.

Inconsistent Monitoring Requirements for Similar Sectors with Similar Industrial Activities

Analysis of the sector-specific benchmark monitoring requirements shows inconsistencies across sectors with comparable industrial activities, highlighting shortfalls in the current MSGP. For example, Sectors M (automobile salvage yards) and N1 (scrap recycling and waste recycling facilities) have similar on-site activities but different monitoring requirements (see Table 2-2). Both sectors include material handling and storage; material processing and dismantling, including of ferrous and nonferrous metals; equipment maintenance and cleaning; reclaiming and recycling of liquid wastes such as used oils and antifreeze; and other operations at industrial facilities that are often exposed to stormwater. Sector M is required to monitor TSS, total aluminum, total iron, and total lead, whereas Sector N1 must monitor these parameters plus total copper, total zinc, and COD. As discussed earlier in this chapter, Sector M lacks benchmark monitoring requirements for total copper and total zinc at least in part because the sector did not determine, through the 1992 group application process, that monitoring for these two pollutants was necessary. As a result, EPA did not have data to evaluate pollution potential when developing the 1995 MSGP and has not, to date, required Sector M to sample for those parameters. Among the limited monitoring data reported for Sector M under the 2015 MSGP (11 samples), the copper benchmark was exceeded 82 percent of the time, compared with 63 percent for Sector N1 (see Appendix D).
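The mismatch between Sectors M and N1 can be made explicit by treating each sector's 2015 MSGP benchmark list from Table 2-2 as a set. The Python sketch below is illustrative, and the helper name is hypothetical. It also checks that the reported 82 percent for 11 Sector M copper results corresponds to 9 results above the benchmark; that count is inferred from the percentage rather than stated in the text.

```python
# 2015 MSGP benchmark monitoring requirements, transcribed from Table 2-2.
SECTOR_M = frozenset({"TSS", "aluminum", "iron", "lead"})
SECTOR_N1 = frozenset({"TSS", "COD", "aluminum", "copper", "iron", "lead", "zinc"})


def missing_parameters(sector: frozenset, comparable: frozenset) -> list:
    """Parameters monitored by a comparable sector but not by this sector."""
    return sorted(comparable - sector)


if __name__ == "__main__":
    print(missing_parameters(SECTOR_M, SECTOR_N1))  # ['COD', 'copper', 'zinc']
    # Sector M copper: 9 of 11 is the only count that rounds to 82 percent.
    print(round(100 * 9 / 11))  # 82
```

Because every Sector M parameter is also monitored by Sector N1, the set difference lists exactly the gaps a like-activity comparison would flag.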

Sectors Not Subject to Benchmark Monitoring

Of the industrial sectors listed in Table 1-2, 10 sectors (including all their subsectors) have no benchmark monitoring requirements in the MSGP. Other sectors have at least some subsectors required to conduct benchmark monitoring, representing varying proportions of each sector’s facilities. According to EPA, 45 percent of all facilities permitted under the MSGP are not required to conduct benchmark monitoring (R. Urban, EPA, personal communication, 2018). In this section, the committee examines in more detail three of the sectors for which no benchmark monitoring is currently required (see also Table 2-2). These analyses highlight the need for updated evaluations of pollution potential and the opportunities for pollutant reduction through implementation of additional SCMs.

Oil and Gas. Sector I includes oil and gas exploration, production, processing or treatment operations, and transmission facilities. A number of chemicals used at these operations could contribute to stormwater pollution, including diesel fuel, oil, solvents, drilling fluid, acids, and chemical additives (EPA, 2006c). Ammonia, lead, nickel, nitrate, and zinc have been detected in stormwater at these sites in more than 10 percent of the reported data (O’Donnell, 2005). No monitoring data for Sector I have been submitted under the 2015 MSGP (see Appendix D). Spills and leaks can also introduce petroleum hydrocarbon contaminants into stormwater, including PAHs, which have been shown to be highly toxic to aquatic life (Incardona et al., 2011; Abdel-Shafy and Mansour, 2016; McIntyre et al., 2016). Chemical-specific monitoring is therefore appropriate for this sector to ensure that stormwater is properly managed.

Motor Freight and Transportation Facilities. Sector P includes motor freight and passenger transportation facilities, petroleum bulk oil stations and terminals, rail transportation facilities, and post office facilities.

Activities on these sites include vehicle and equipment fluid changes, mechanical repairs, parts cleaning, fueling, and vehicle storage. Chemicals used on site include solvents, diesel fuel and gasoline, hydraulic fluids, antifreeze, and transmission fluids (EPA, 2006b; see Table 2-2). O’Donnell (2005) recommended benchmark monitoring for lead and mercury, in addition to pH, TSS, and COD, because of their frequency of occurrence in Toxic Release Inventory stormwater data, but the 2015 MSGP does not include any benchmark monitoring requirements for this sector. Although benchmark monitoring is not required nationally, some Sector P monitoring data have been reported in EPA’s Network Discharge Monitoring Report (NetDMR). More than 25 percent of these results had concentrations above the benchmarks for aluminum, copper, and iron. As with Sector I, petroleum hydrocarbon leaks and spills could lead to harmful stormwater discharges of PAHs. The activities in Sector P and the associated risk of stormwater pollution suggest that chemical-specific monitoring within the MSGP would be appropriate.

Ship and Boat Building. Sector R covers ship and boat building or repair yards, which include activities such as fluid changes, mechanical repairs, parts cleaning, refinishing, paint removal, painting, and fueling. Chemicals used on site include solvents, oil, fuel, antifreeze, and acid and alkaline wastes. As discussed earlier in this chapter, Sector R self-determined through the 1992 group application process that no sector-specific pollutants needed to be tested in its discharges, which was a significant reason for the lack of benchmark monitoring in the 1995 MSGP. This determination has carried over into the 2015 MSGP. O’Donnell (2005) suggested that chromium, copper, lead, nickel, and zinc be considered for future monitoring for Sector R and noted that Toxic Release Inventory stormwater data were limited. More than 300 Sector R monitoring data points have been submitted to NetDMR under the 2015 MSGP, and more than 25 percent of the reported results were above the benchmarks for aluminum, copper, and iron (see Appendix D).

Rhode Island recently added benchmark monitoring for aluminum, iron, lead, and zinc for Sector R in its 2013 MSGP (RI DEM, 2013). The Rhode Island Department of Environmental Management determined that Sector R has the potential to generate the same pollutants as water transportation Sector Q because the two sectors share common industrial activities. In the 1992 group application process, Sector Q self-determined that aluminum, iron, lead, and zinc needed to be tested in its discharges, and EPA applied benchmark monitoring for those four pollutants to Sector Q in the MSGP. The MSGP monitoring requirements for Sector R are likely insufficient because of shortfalls in the original 1992 group application process.

Need for Periodic Monitoring Reviews

These examples show that monitoring requirements within the MSGP are not consistently applied. Additionally, updates to the benchmark monitoring requirements have not been made over time in spite of data and several analyses showing that specific contaminants are commonly detected or likely to occur in stormwater at these facilities (Harcum et al., 2005; O’Donnell, 2005; EPA, 2012; see also Appendix D). Sector-specific monitoring requirements for all sectors should be rigorously reviewed to assess whether the monitoring requirements are appropriate to ensure control of stormwater pollution and determine whether benchmark monitoring requirements should be adjusted.

The committee recommends the following specific steps be taken to periodically review the MSGP monitoring requirements and update them as appropriate based on new information:

- Prior to each permit renewal, EPA should conduct a literature review and update its industry fact sheets, which describe potential pollutants from common industry activities, pollutant sources, and practices that could reduce pollutant discharge on site.1 Changes in industry practice over time may introduce new contaminants and render other contaminant monitoring of limited value.

- EPA should continue the process conducted by Tetra Tech in advance of the 2008 MSGP (O’Donnell, 2005) where sector-specific data from the previous MSGP as well as Toxic Release Inventory and Toxic Substances Control Act data are assessed to determine whether the chemical monitoring requirements are adequate to detect stormwater management concerns.

- State industrial stormwater permits should be reviewed for advancements in sector-specific monitoring that would be appropriate for the national permit (e.g., Bulkley et al., 2009).

- New understanding of pollutant effects in the environment and advances in monitoring technology should be evaluated. For example, PAHs were not previously monitored as part of the MSGP process, but aquatic impacts of PAHs are now better understood and analytical technologies have advanced significantly since the 1992 group application. Scientific advances that identify cost-effective monitoring surrogates should also be considered.

___________________

1 See https://www.epa.gov/npdes/industrial-stormwater-factsheet-series.

This level of analysis should be adequate to substantiate the addition of benchmark monitoring requirements for specific sectors. However, where EPA finds that a sector review is not robust enough to withstand the scrutiny that accompanies adding benchmark monitoring requirements, as was the case after EPA proposed to add benchmark monitoring for several sectors in the 2006 draft MSGP, the committee suggests an alternative process. Where data are lacking to inform the analysis, additional sector-specific monitoring data should be collected to quantify whether stormwater pollutants are present at levels of concern, using a process similar to that used in 1992 for the group applications. The committee recommends that this additional monitoring be performed over a 1-year period, at the same outfalls used for industry-wide monitoring, but without applying benchmark thresholds. These data would then inform future revisions of the MSGP monitoring requirements.

ADJUSTING BENCHMARK THRESHOLD LEVELS

Benchmark threshold levels were established during the early iterations of the MSGP based on several different criteria and several simplifying assumptions. In this section, the committee reviews the latest information on toxicity and technical achievability relative to the current benchmark levels and discusses the implications for those levels.

Updated Toxicity Information

Advancements in the understanding of aquatic toxicology suggest that some benchmark threshold levels may require adjustment to reflect the latest scientific information in meeting the MSGP’s intended water quality protection goals. This section reviews the application of water quality criteria toward the benchmark and the need to update benchmarks to reflect the latest aquatic life criteria. Additionally, the committee discusses new benchmarks to better characterize stormwater risks, unnecessary benchmarks, and benchmark units used in documentation and communication.

Application of Water Quality Criteria

Many of the current benchmark thresholds were derived from aquatic life criteria (see Table 1-3). EPA recommends that aquatic life criteria be derived for protection against toxicity from both acute (short-term) and chronic (longer-term) exposure, when possible (EPA, 1985). Given the intermittent nature of stormwater exposures and the likelihood of dilution and attenuation within watersheds, organisms are likely to be exposed to chemicals from stormwater discharges only over short time frames. For stormwater benchmarks based on aquatic life criteria, the committee recommends the use of criteria designed to protect against short-term or intermittent exposures when they exist, which, to date, have generally been acute criteria.

Most benchmarks in the 2015 MSGP are set according to acute criteria (see Table 1-3); however, chronic criteria are used in three cases—selenium, arsenic, and iron—each for different reasons. Chronic criteria are established to protect aquatic life against mortality and impacts to growth and reproduction after longer-term exposure.

Selenium. EPA originally considered setting the selenium benchmark equal to the acute freshwater criterion (20 µg/L; EPA, 1987), but sufficiently sensitive test methods were lacking at that time. Thus, EPA originally set the MSGP selenium benchmark at 238.5 µg/L, based on the value that could be accurately and precisely quantified (EPA, 1995, p. 50825). In developing the 2008 MSGP, EPA updated the benchmark thresholds for which more sensitive analytical methods had become available. For selenium, EPA stated in 2008 that it based the benchmark threshold on the chronic criterion (5 µg/L) because no acute criterion was in effect when the 2008 MSGP was developed (EPA, 2008a).

The selenium benchmark based on the chronic aquatic life criterion is now outdated. In 2016, EPA released updated ambient aquatic life criteria for selenium, lowering the chronic freshwater water-column criteria to 1.5 µg/L for still (lentic) waters and 3.1 µg/L for flowing (lotic) waters (EPA, 2016b). However, no concentration-based acute criterion was derived. The updated selenium criteria are unique in that they were derived specifically to account for the bioaccumulative properties of selenium and its reproductive effects on fish, and they include a translation of the chronic criteria for short-term or intermittent exposure in lieu of an acute criterion. This translation must be calculated from the background base-flow concentration of selenium in the receiving water and the length of exposure, which complicates selenium monitoring because of the additional data required. Although such intensive data collection would not be desirable for most permittees, enhanced sampling and analysis for facilities with repeated benchmark exceedances would allow EPA to determine whether their discharges cause adverse effects under site-specific conditions (see also Enhanced Monitoring, discussed in Chapter 3).
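To show why the translation needs extra site data, the sketch below evaluates the intermittent-exposure form of the 2016 selenium criterion as we understand it from EPA (2016b): WQC_int = (WQC_30-day − C_bkgrnd × (1 − f_int)) / f_int, where C_bkgrnd is the average background selenium concentration and f_int is the fraction of a 30-day period with elevated concentrations, assumed here to be no less than one day (1/30). The equation form, the one-day floor, and the function name `intermittent_selenium_criterion` should be treated as assumptions to verify against the criterion document, not as EPA's implementation.

```python
"""Sketch: translate the 30-day selenium water-column criterion into an
intermittent-exposure value. Equation form and 1-day floor are assumptions
to be checked against EPA (2016b); names here are hypothetical."""

# 30-day average chronic water-column criteria, µg/L (EPA, 2016b).
CHRONIC_30_DAY_UG_L = {"lentic": 1.5, "lotic": 3.1}

# Assumed minimum exposure fraction: one day out of a 30-day period.
MIN_F_INT = 1.0 / 30.0


def intermittent_selenium_criterion(water_body: str,
                                    background_ug_l: float,
                                    exposure_days: float) -> float:
    """Return an intermittent-exposure selenium value in µg/L.

    water_body      -- "lentic" (still) or "lotic" (flowing) water
    background_ug_l -- average background (base-flow) selenium, µg/L
    exposure_days   -- days of elevated selenium within a 30-day period
    """
    wqc_30 = CHRONIC_30_DAY_UG_L[water_body]
    if not 0 < exposure_days <= 30:
        raise ValueError("exposure_days must be in (0, 30]")
    if background_ug_l > wqc_30:
        # Background alone already exceeds the 30-day average criterion.
        raise ValueError("background exceeds the 30-day criterion")
    # Fraction of the 30-day window with elevated concentrations.
    f_int = max(exposure_days / 30.0, MIN_F_INT)
    # The 30-day average is split between background days and elevated
    # days; solve for the concentration allowed on the elevated days.
    return (wqc_30 - background_ug_l * (1.0 - f_int)) / f_int


if __name__ == "__main__":
    # Hypothetical lotic site with 0.5 µg/L background selenium.
    for days in (1, 3, 10):
        value = intermittent_selenium_criterion("lotic", 0.5, days)
        print(f"{days} d of elevated selenium: {value:.1f} µg/L")
```

With that hypothetical 0.5 µg/L background, one day of elevated concentrations allows about 78.5 µg/L, whereas ten days allow about 8.3 µg/L. The result therefore hinges on both the base-flow background and the exposure duration, which a single benchmark sample does not capture.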

Arsenic. Even though an acute criterion of 360 µg/L arsenic had been developed (EPA, 1986), the MSGP benchmark was originally set at 164.8 µg/L based on the analytical detection limit (EPA, 1995, p. 50825). When the benchmarks were reviewed for the 2008 MSGP in light of more sensitive detection methods, the updated acute criterion (340 µg/L) was more than twice the previous benchmark. EPA decided not to substantially weaken the benchmark because of concerns about near-coastal freshwater discharges flowing quickly into sensitive saline waters, for which the acute aquatic life criterion is 69 µg/L (EPA, 2008b). Therefore, the benchmark was adjusted to the chronic criterion of 150 µg/L. Unless EPA can justify concerns about near-coastal freshwater discharges that are unique to arsenic, rather than shared by every other pollutant whose saltwater criterion is lower than its benchmark, or until it develops a criterion based on intermittent exposure, EPA should adopt the acute aquatic life criterion (340 µg/L) for the arsenic benchmark.

Iron. EPA based the iron benchmark threshold on the chronic criterion (1,000 µg/L) given the lack of an acute criterion, and that decision has remained over the iterations of the MSGP. No acute aquatic life criterion for iron has been developed since the MSGP was originally established.

The committee found very few studies on the acute effects of iron on aquatic organisms, and these studies suggest that lethal effects occur well above the current benchmark, even over multiday exposures. For example, an iron concentration of 6,700 µg/L over 48 hours caused acute immobilization in 50 percent of the population (EC50) in Daphnia magna (Okamoto et al., 2014). An iron concentration of 2,000 µg/L in humus-free water was lethal to 23 percent of one-summer-old grayling fish after 72 hours of exposure (Vuorinen et al., 1998). Zahedi et al. (2014) determined that 122,000 µg/L iron is lethal to 50 percent of a population (LC50) of kutum fish over 96 hours. The science on which the criterion is based is dated and limited (EPA, 1976). The committee suggests that EPA reevaluate the aquatic toxicology literature for acute toxicity studies of iron and develop a benchmark for iron based on acute toxicity. Because iron has relatively low toxicity and bioaccumulation of iron does not pose a substantial hazard to higher trophic levels (Cadmus et al., 2018), a criterion based on intermittent exposure is unlikely to be necessary. Given the basis of the iron criterion and the difficulty many facilities have in meeting the benchmark (see Tables 2-3 through 2-5 and Appendix D), EPA should suspend the benchmark for iron until an acute criterion is developed, unless EPA can articulate a specific rationale for protecting against chronic effects of iron from intermittent events.

Updating Benchmarks to Match Aquatic Life Criteria

Other aquatic life criteria are currently under revision or have recently been revised. For example, revised acute aquatic life criteria for cadmium have been developed (EPA, 2016a) and will need to be incorporated into the next MSGP revisions (see Table 2-6).

TABLE 2-6

Outdated Benchmarks or Inconsistencies with Aquatic Life Criteria

| Parameter | 2015 MSGP Benchmark | Source | Current Aquatic Life Criteria | Source |
|---|---|---|---|---|
| Cadmium | 2.1 µg/L | EPA, 2001 | 1.8 µg/L | Acute; EPA, 2016a |
| Copper | 14 µg/L | EPA, 1980a | Biotic ligand model (BLM) | EPA, 2007 |
| Selenium | 5 µg/L | EPA, 1987 | 1.5 µg/L (lentic), 3.1 µg/L (lotic); intermittent-exposure equation | Chronic; EPA, 2016b |

NOTE: See https://www.epa.gov/wqc/national-recommended-water-quality-criteria-aquatic-life-criteria-table.

EPA has adopted or is considering more complex approaches to defining aquatic life criteria for some constituents, which could have implications for the MSGP benchmark monitoring requirements. For copper, the most recent aquatic life criteria (EPA, 2007) do not provide a single-concentration acute criterion, but instead provide an equation or model that is used to calculate acute criteria with additional site-specific data. The biotic ligand model for copper, which takes into account the fact that the bioavailability and hence toxicity of certain metals is affected by water chemistry, uses 10 input parameters for toxicity determination.2 Given the extra sampling burden, the 2015 MSGP did not recommend using the biotic ligand model for copper benchmark monitoring, which is reasonable for a national permit. Nevertheless, in Chapter 3, the committee discusses giving permittees the option to monitor for additional components and to use the biotic ligand model and updated acute criteria if they routinely exceed the benchmark.

Draft 2017 aquatic life criteria for aluminum similarly require measuring multiple parameters to determine the acute criterion based on bioavailability. The new approach to determining aluminum toxicity uses a multiple linear regression method that considers total hardness, pH, and dissolved organic carbon (DOC) (DeForest et al., 2018). The 2015 MSGP freshwater aluminum benchmark is set at 750 µg/L (EPA, 1988), but the 2017 draft update recommends raising the acute criterion to 1,400 µg/L (based on pH = 7, hardness = 100 mg/L, and DOC = 1 mg/L; EPA, 2017). Considering the minimal additional analysis required, the next version of the MSGP should reflect this change if the new aluminum criteria are finalized.

Developing New Benchmarks to Better Characterize Stormwater Risks

PAHs have been shown to be extremely toxic to fish and aquatic invertebrates and are known to bioaccumulate (Incardona et al., 2011; McIntyre et al., 2016). PAHs are expected at industrial sites with petroleum hydrocarbon exposure. However, no benchmark has been set for PAHs for any of the industrial sectors. Analytical methods for determination of PAHs are standardized and readily available (EPA, 2015b). It may appear that COD can be used as a surrogate for PAHs, but PAHs can be toxic at concentrations orders of magnitude lower than the COD benchmark (120 mg/L). Canadian water quality guideline values for PAHs for the protection of aquatic life range from 0.012 µg/L (anthracene) to 5.8 µg/L (acenaphthene) (Canadian CME, 1999). Currently, EPA has no recommended aquatic life criteria for individual or total PAHs. EPA evaluated the need for ambient water quality criteria for PAHs in 1980 and noted at the time that the data regarding aquatic life toxicity were extremely limited (EPA, 1980b). Information gathering and/or preliminary monitoring of PAHs from some sectors would be valuable; such data could be correlated with COD concentrations to help EPA determine if COD is an adequate surrogate for PAH concentrations and impacts or if additional PAH monitoring is needed for sectors that have the potential to release PAHs.
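A minimal sketch of how paired preliminary data could be screened for the COD–PAH relationship, assuming each COD result has a total-PAH result from the same sample. We use a Spearman rank correlation here; that choice is ours, not the committee's, and rests on its dependence only on ordering, which tolerates the skewed distributions typical of stormwater data. The function names are hypothetical.

```python
"""Sketch: Spearman rank correlation between paired COD and total-PAH
results (hypothetical helpers; the choice of statistic is an assumption)."""
from statistics import mean


def average_ranks(values):
    """Rank values 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1  # mean of 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def spearman_rho(cod, pah):
    """Pearson correlation of the rank-transformed paired series."""
    if len(cod) != len(pah) or len(cod) < 3:
        raise ValueError("need at least three paired samples")
    rx, ry = average_ranks(cod), average_ranks(pah)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("a constant series has no rank correlation")
    return cov / (sx * sy)
```

Even a strong rank correlation would only show that COD and PAHs rise and fall together. It would not show that the 120 mg/L COD benchmark is exceeded whenever PAHs reach toxic levels, so the paired data would also need to be examined against PAH effect concentrations.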

___________________

2 pH, dissolved organic carbon, calcium, magnesium, sodium, sulfate, potassium, chloride, alkalinity, and temperature.

Unnecessary Benchmark: Magnesium

Magnesium is a natural component of surface water and groundwater and does not appear to be toxic to a majority of aquatic organisms at concentrations likely to be encountered in most waters, with reported LC50 values ranging from 780 to more than 20,000 mg/L (van Dam et al., 2010). EPA provides no aquatic life criterion for magnesium. Nevertheless, total magnesium is listed in the MSGP as a benchmark monitoring requirement for Sector K (hazardous waste treatment, storage, or disposal facilities), with a benchmark threshold of 0.064 mg/L. Data submitted under the 2015 MSGP show that every sample reported for Sector K exceeded the benchmark, and 83 percent of the samples exceeded eight times the benchmark. It is unclear why magnesium is a required benchmark for this sector, given its lack of toxicity at concentrations likely to be observed in industrial stormwater discharges. Therefore, the committee recommends that magnesium be removed as a benchmark monitoring requirement.
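The exceedance statistics above (share of samples above the benchmark, and above eight times the benchmark) reduce to a simple count. A minimal sketch, with a hypothetical helper name, is shown below; it treats "exceed" as strictly greater than the threshold, which is an assumption.

```python
"""Sketch: share of results exceeding a benchmark or a multiple of it,
as in the Sector K magnesium summary (hypothetical helper)."""

MAGNESIUM_BENCHMARK_MG_L = 0.064  # Sector K benchmark cited in the text


def exceedance_fraction(results_mg_l, benchmark_mg_l, multiple=1.0):
    """Fraction of results strictly greater than multiple x benchmark."""
    if not results_mg_l:
        raise ValueError("no results supplied")
    threshold = multiple * benchmark_mg_l
    exceed = sum(1 for r in results_mg_l if r > threshold)
    return exceed / len(results_mg_l)

# For scale: the low end of the reported LC50 range (780 mg/L) divided by
# the 0.064 mg/L benchmark is about 12,000.
```

Applied to a sector's results with `multiple=1` and `multiple=8`, this yields the two percentages reported above; the scale comment shows why exceedances of this benchmark say little about toxicity.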

Units

Benchmark threshold levels should be expressed in the same units as the values from which they are derived. For example, benchmark thresholds for parameters such as TSS, total nitrogen, and total phosphorus should be expressed in milligrams per liter, and benchmark thresholds for metals should be expressed in micrograms per liter. Units of measurement are a foundation of science used to communicate the magnitude of a quantity. Adopting common units allows scientists to communicate findings consistently, in context, and in a manner that eases comparative analysis. In the case of the MSGP, expressing benchmark thresholds for metals in micrograms per liter would promote understanding of the potential consequence of an exceedance relative to the scientific basis from which the benchmark was derived, for example, acute toxicity to aquatic life. This change would also eliminate the need to report sampling results with leading zeros, which can create confusion and opportunities for reporting error. In the committee’s analysis of the 2015 MSGP monitoring data (see Appendix D), numerous reported values appeared to be reporting errors due to incorrect units. Consistent reporting expectations would also reduce errors in the data set.
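As a sketch of the consistency argument, the helper below converts each reported metal result to micrograms per liter before comparing it with a benchmark stated in those units, and rejects unrecognized unit labels instead of guessing. The function names and the accepted unit strings are hypothetical, not part of any EPA reporting system.

```python
"""Sketch: normalize reported concentrations to µg/L before comparing them
with a µg/L benchmark (hypothetical helpers; unit labels are assumptions)."""

# Multiplicative factors that convert a reported unit into µg/L.
TO_UG_PER_L = {"ug/L": 1.0, "µg/L": 1.0, "mg/L": 1000.0, "g/L": 1_000_000.0}


def to_ug_per_l(value, unit):
    """Convert a concentration to µg/L, rejecting unrecognized units."""
    try:
        return value * TO_UG_PER_L[unit]
    except KeyError:
        raise ValueError(f"unrecognized unit: {unit!r}") from None


def exceeds_benchmark(value, unit, benchmark_ug_l):
    """Compare a reported metal result with a benchmark stated in µg/L."""
    return to_ug_per_l(value, unit) > benchmark_ug_l
```

A copper result reported as 0.02 mg/L and one reported as 20 µg/L then compare identically against a 14 µg/L benchmark, and a mislabeled unit fails loudly rather than entering the data set silently off by a factor of 1,000.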

EPA should also explain the uncertainty and rounding inherent in the criteria from which benchmark values are derived and provide guidance on the level of precision expected in reported results. Specifically, EPA should describe how many significant figures should be reported and when sample results should be rounded. Doing so would ensure that the corrective actions triggered by a benchmark exceedance have a threshold of significance and a clear relationship to the scientific basis of the value.
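To illustrate why a stated rounding rule matters, the sketch below rounds a reported result to a given number of significant figures (round half up) before comparing it with a benchmark. Two significant figures and round-half-up are assumptions chosen for the example, not EPA guidance; decimal strings are used so that binary floating point does not shift borderline values.

```python
"""Sketch: round a result to N significant figures (round half up) before
comparing it with a benchmark. The convention itself is an assumption."""
from decimal import Decimal, ROUND_HALF_UP


def round_sig(value: str, sig_figs: int) -> Decimal:
    """Round a decimal string to sig_figs significant figures, half up."""
    d = Decimal(value)
    if d == 0:
        return d
    # adjusted() is the exponent of the leading digit, e.g. 0.0236 -> -2.
    quantum = Decimal(1).scaleb(d.adjusted() - sig_figs + 1)
    return d.quantize(quantum, rounding=ROUND_HALF_UP)


def exceeds(value: str, benchmark: str, sig_figs: int = 2) -> bool:
    """True if the rounded result is strictly above the benchmark."""
    return round_sig(value, sig_figs) > Decimal(benchmark)
```

Under this convention, a copper result of 14.4 µg/L rounds to 14 and does not exceed a 14 µg/L benchmark, whereas 14.5 µg/L rounds to 15 and does. A different rule (truncation, round half to even, or three significant figures) can flip such borderline cases, which is why the convention needs to be stated.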

Assessing Technical Achievability

Pollution prevention and nonstructural SCMs are generally the preferred methods for addressing industrial stormwater discharges. Using nonhazardous materials, maintaining general site cleanliness, and creating no-exposure scenarios can greatly reduce pollutant discharge concentrations and masses. However, for some sites, structural SCMs, including treatment systems, will be necessary to meet MSGP benchmark concentrations. For technology-based benchmarks, an important consideration is whether the benchmarks continue to represent the capability of technologies (when combined with appropriate pollution prevention and nonstructural SCMs) for pollution control. For water quality-based benchmarks, the feasibility of achieving these thresholds with current technology and appropriate site management must be understood. This section examines the capacity of treatment SCMs to meet industrial stormwater benchmarks (see Table 1-2) using the available, albeit limited, data. For this analysis, the committee examined stormwater treatment data from two sources:

- A study of treatment SCM performance at several industrial sites in the United States by Clark and Pitt (in press) and

- The International Stormwater Best Management Practices (BMP) Database, which includes mostly nonindustrial sites.3

All the data reported represent influent and effluent concentrations at various treatment SCMs, and the results are organized by pollutant. In general, it is expected that pollutant behavior in a treatment device is independent of the industrial sector and, instead, is a function of influent concentration and other chemical characteristics (e.g., association with solids, complexation with inorganic and organic ligands, pH).

The Clark and Pitt (in press) data were collected at industrial sites, including Sectors M, N, R, S, and AB (see details in Appendix E), although separation by industry types is not analyzed here. The data from each site are reported separately, labeled by the type of treatment SCM. The study included three broad categories of treatment SCMs: (1) sedimentation systems (hydrodynamic separator systems, ponds, and wetlands); (2) filtration/adsorption systems; and (3) treatment trains that included two or more serial SCMs.

The International Stormwater BMP Database includes data on SCM treatment performance from a wide range of studies that meet specific quality control criteria. Data were analyzed for pollutants with MSGP benchmarks and focused on five treatment SCMs considered relevant to industrial stormwater: dry detention ponds, wet retention ponds, wetlands, media filters, and bioretention. The BMP Database contains many more sites than the Clark and Pitt (in press) study, and data for each SCM selected likely represent multiple sites. Additionally, the BMP Database includes multiple land uses, including primarily municipal sites. Because of this, the stormwater concentrations tend to be lower than the Clark and Pitt (in press) industrial data. Detailed design, sizing, and operational/maintenance information was not available for any of these sites, so it cannot be assumed that they are or are not appropriately designed, sized, or maintained.

The Clark and Pitt (in press) and International Stormwater BMP Database data were analyzed in the same manner. Data analysis was performed to answer the following question: For treatment systems that demonstrated statistically significant removal of a pollutant, were the treatment systems able to reduce influent concentrations that exceeded the MSGP benchmarks (see Table 1-2) to effluent concentrations that met the benchmark? Therefore, the analysis only included influent/effluent data pairs where the influent exceeded the benchmark threshold. As with the 2015 MSGP data analysis, for a data set to be included, each site considered had to have a minimum of eight storm events.
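The inclusion screen just described can be sketched as follows. Only the influent filter and the eight-event minimum come from the text; the statistical test used to establish significant removal is not specified here, so the sketch takes its outcome as a flag. Function names are hypothetical, and "meets" is taken to mean at or below the benchmark.

```python
"""Sketch of the influent/effluent inclusion screen (hypothetical helpers).
The significance test for removal is not implemented; pass its outcome."""
from typing import List, Optional, Tuple

Pair = Tuple[float, float]  # (influent, effluent) event concentrations

MIN_EVENTS = 8  # minimum qualifying storm events, per the text


def qualifying_pairs(pairs: List[Pair], benchmark: float,
                     significant_removal: bool) -> Optional[List[Pair]]:
    """Keep pairs whose influent exceeds the benchmark; None if excluded."""
    if not significant_removal:
        return None
    kept = [(inf, eff) for inf, eff in pairs if inf > benchmark]
    return kept if len(kept) >= MIN_EVENTS else None


def fraction_meeting(pairs: List[Pair], benchmark: float) -> float:
    """Share of qualifying events whose effluent is at or below the benchmark."""
    return sum(1 for _, eff in pairs if eff <= benchmark) / len(pairs)
```

Filtering on influent first matters. Events whose influent already met the benchmark would inflate the apparent success rate without saying anything about treatment.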

Given the limitations of the data sets, the committee used a simple comparison of the data presented in box plots to assess the capability of the treatment systems to meet the benchmarks. The boxes of the box plots highlight the 25th, 50th, and 75th percentiles of the pollutant concentrations, while the whiskers represent the 10th and 90th percentiles. The committee then examined the percentage of the effluent samples that met the benchmark, by treatment types, categorizing the performance by the percent of the effluent concentrations that met the relevant benchmark according to the components of the box-and-whisker plot (<10, 10–25, 25–50, 50–75, 75–90, and >90 percent). Under the MSGP, the results of four quarterly samples are averaged for evaluation against the benchmark threshold; thus, meeting the benchmark in at least 50 percent of events provides a reasonable likelihood of the average also meeting the benchmark. However, the occurrence of even one very high concentration can lead to an average above the benchmark. The data are plotted on a linear scale (in some cases with split axis) because of the need to clearly visualize performance at concentrations near the benchmark level(s).
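The sketch below bins a treatment's percent of effluent results meeting the benchmark into the categories listed above, and applies a four-quarter average check. Exactly how values on the bin edges (e.g., exactly 25 percent) were assigned is not stated, so the edge handling here is an assumption, as are the helper names.

```python
"""Sketch: performance bins from the text plus a four-quarter average
check (hypothetical helpers; bin-edge handling is an assumption)."""
from statistics import mean


def performance_bin(percent_meeting: float) -> str:
    """Map percent of effluent results meeting the benchmark to a bin."""
    if not 0 <= percent_meeting <= 100:
        raise ValueError("percent must be between 0 and 100")
    if percent_meeting < 10:
        return "<10"
    for upper, label in ((25, "10-25"), (50, "25-50"),
                         (75, "50-75"), (90, "75-90")):
        if percent_meeting <= upper:
            return label
    return ">90"


def annual_average_meets(quarterly, benchmark):
    """Average of four quarterly results compared with the benchmark."""
    if len(quarterly) != 4:
        raise ValueError("expected four quarterly results")
    return mean(quarterly) <= benchmark
```

As a hypothetical illustration against the 100 mg/L TSS benchmark, quarterly results of 40, 50, 60, and 400 mg/L meet the benchmark three times out of four, yet they average 137.5 mg/L. This is the single-high-value effect the paragraph describes.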

The analysis considers seven common industrial stormwater pollutants for which adequate data are available: TSS, total aluminum, total copper, total iron, total lead, total zinc, and COD. For copper, lead, and zinc, the benchmark depends on receiving-water hardness; therefore, two benchmarks were used in the analysis, one for softer water (60 mg/L hardness) and one for harder water (200 mg/L hardness). All data reported are from composite samples, typically flow weighted. Compared with the early-storm grab sample used for benchmark monitoring, a flow-weighted composite would generally be equal to or lower in concentration.
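For readers checking the soft- and hard-water values in Table 2-7, the sketch below evaluates EPA's earlier hardness-based acute equations, CMC = exp(m × ln(hardness) + b), for total recoverable metal (no dissolved-metal conversion factor). The coefficients are taken from EPA's earlier national recommendations and should be verified against the current criteria table; copper's hardness equation has since been superseded by the biotic ligand model. Evaluating at 62.5 and 212.5 mg/L, the midpoints of 25 mg/L hardness ranges that contain 60 and 200 mg/L, is our assumption about how the tabulated benchmarks were derived, not a documented MSGP procedure.

```python
"""Sketch: hardness-dependent acute criteria for total recoverable Cu, Pb,
and Zn. Coefficients and midpoint hardness values are assumptions."""
import math

# CMC = exp(m * ln(hardness) + b); hardness in mg/L as CaCO3, result in µg/L.
ACUTE_COEFFICIENTS = {
    "copper": (0.9422, -1.700),
    "lead": (1.273, -1.460),
    "zinc": (0.8473, 0.884),
}


def acute_criterion_ug_l(metal: str, hardness_mg_l: float) -> float:
    """Acute criterion for total recoverable metal, no conversion factor."""
    if hardness_mg_l <= 0:
        raise ValueError("hardness must be positive")
    m, b = ACUTE_COEFFICIENTS[metal]
    return math.exp(m * math.log(hardness_mg_l) + b)


if __name__ == "__main__":
    # Assumed midpoints of the hardness ranges containing 60 and 200 mg/L.
    for hardness in (62.5, 212.5):
        row = {metal: round(acute_criterion_ug_l(metal, hardness), 1)
               for metal in ACUTE_COEFFICIENTS}
        print(hardness, row)
```

Under these assumptions, the results are close to the Table 2-7 values: about 9.0 and 28.5 µg/L for copper, 45 and 213 µg/L for lead, and 80 µg/L for zinc in soft water. The hard-water zinc value comes out near 227 µg/L against the tabulated 230 µg/L, so the exact tabulation method should be confirmed before relying on this reconstruction.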

In this analysis, the data are treated as independent events, an assumption underlying a box-plot analysis. Temporal trends were not analyzed and no conclusions can be drawn regarding rolling averages meeting the benchmark.

Results

To illustrate the results of this analysis, this section discusses SCM treatment performance for two pollutants, TSS and total iron, along with the overall findings of the analysis of all pollutants. The remaining pollutant-specific data plots are presented in Appendix E.

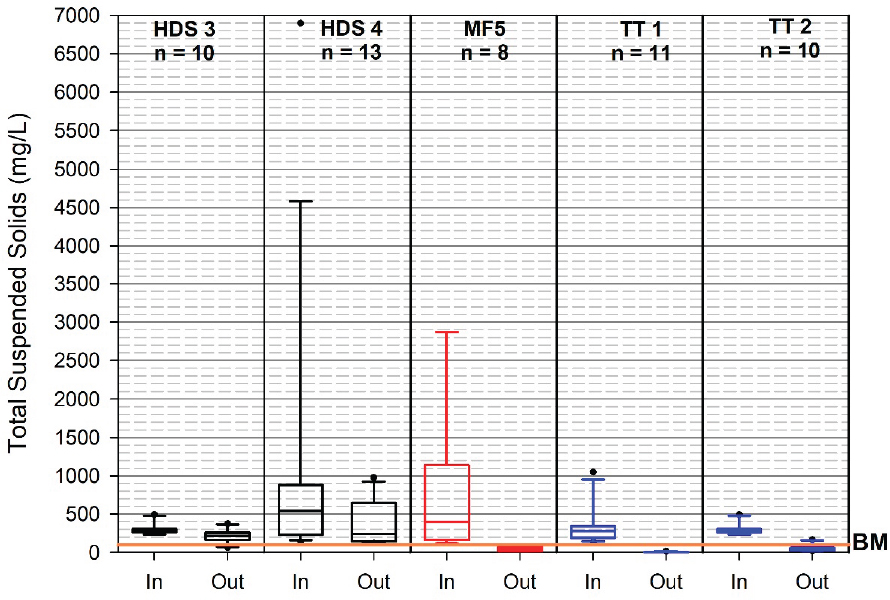

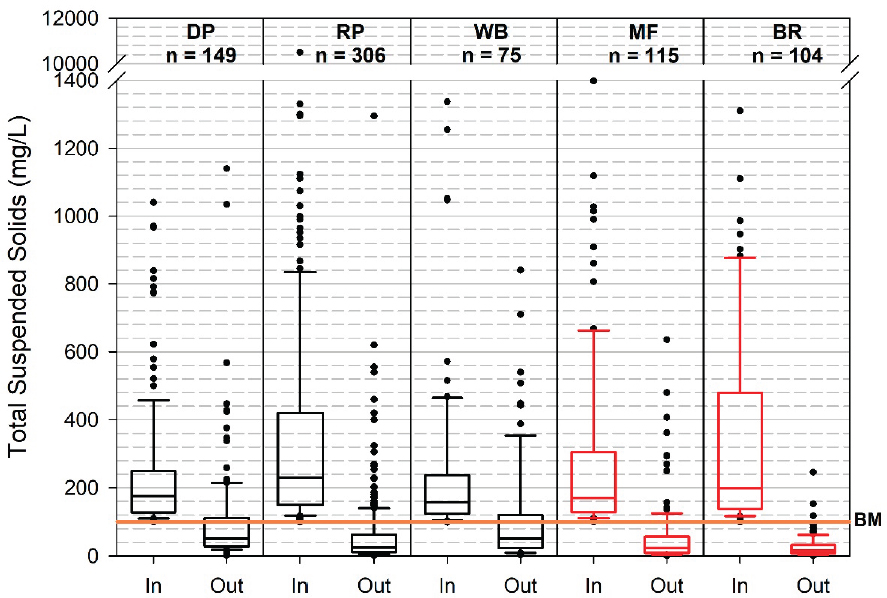

Total Suspended Solids (TSS). For the treatment SCMs at industrial sites, neither of the two sedimentation systems met the benchmark for at least 50 percent of the monitored events, whereas the media filter and both treatment train systems met the benchmark for at least 75 percent of the storm events (see Figure 2-1). Data from the International Stormwater BMP Database, which represent slightly lower concentrations, showed that all systems met the benchmark for at least 50 percent of the monitored storm events, and dry ponds, media filters, and bioretention systems met it for at least 75 percent of the monitored events (see Figure 2-2). These data suggest that several treatment SCMs are available and can be operated to provide sufficient treatment to meet the TSS benchmark for at least 50 percent of the monitored events.

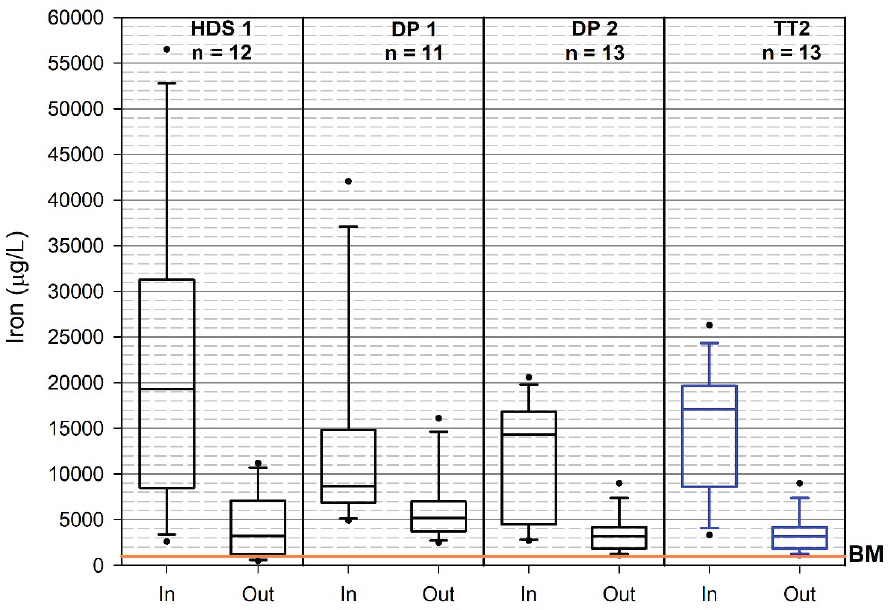

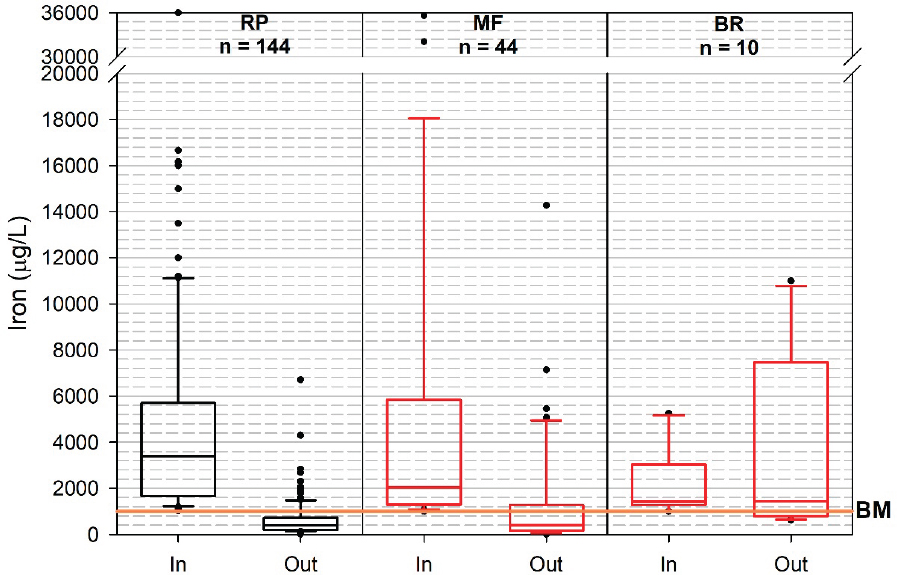

Total Iron. The available data for total iron tell a different story. At the Clark and Pitt (in press) industrial stormwater monitoring sites, none of the four systems met the benchmark concentration for 50 percent, or even 25 percent, of the monitored storm events (see Figure 2-3). Two treatment systems from the International Stormwater BMP Database (retention ponds and media filters) met the total iron benchmark for at least 50 percent of the monitored storm events, but the average influent concentrations were substantially lower in this data set (see Figure 2-4). Although the number of industrial sites, treatment types, and storm events was quite limited in the Clark and Pitt (in press) study, the data suggest that industrial sites with high influent concentrations may have difficulty attaining the total iron benchmark. More data, with more information about the nature of the SCMs, would be needed to reach this conclusion definitively.

Summary of Treatment Systems. Table 2-7 synthesizes the treatment performance results from Figures 2-1 to 2-4 and E-1 to E-13 (see Appendix E) for all of the treatments and pollutants analyzed. The performance is color coded according to the percentage of storm events in which the flow-weighted effluent concentration for that treatment met the benchmark. Yellow, red, and magenta shades indicate treatments for which 25 to 50 percent, 50 to 90 percent, and more than 90 percent, respectively, of the effluent event mean concentrations were above the benchmark. Green shades represent treatments for which at least 75 percent of the effluent event mean concentrations met the benchmark (darker shades reflect better performance). Gray shading represents treatments for which sufficient data pairs (a minimum of eight storm events in which the influent exceeded the benchmark) were not available for that pollutant or for which removals were not statistically significant; these were the committee’s criteria for inclusion. Table 2-7 shows that, for industrial sites, fewer than a third of the treatment and pollutant combinations met the inclusion criteria, limiting the data available for drawing conclusions.
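Because Table 2-7 is framed in terms of event mean concentrations, a minimal sketch of a flow-weighted EMC may help. It assumes concentration and flow readings taken at equal time steps, so each sample is weighted by its flow (with unequal intervals, each weight would be flow times interval length). The function name is hypothetical.

```python
"""Sketch: flow-weighted event mean concentration (EMC), assuming paired
concentration and flow readings at equal time steps."""


def event_mean_concentration(concs, flows):
    """EMC = sum(C_i * Q_i) / sum(Q_i) for equally spaced samples."""
    if len(concs) != len(flows) or not concs:
        raise ValueError("need equal-length, non-empty series")
    total_flow = sum(flows)
    if total_flow <= 0:
        raise ValueError("total flow must be positive")
    return sum(c * q for c, q in zip(concs, flows)) / total_flow
```

When the highest concentrations arrive early, at low flow, the EMC falls well below the first reading. That is why composite-based results can look better than the early-storm grab samples used for benchmark monitoring.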

There are several important limitations and caveats to these summaries. Overall, the industrial site-level data are limited to a relatively small number of storm events, and the analysis used a low threshold for inclusion of only eight events. Additionally, both data sets lack sufficient site-level information to make definitive assessments of the capacity of any of these treatment types to meet the benchmarks in other locations. In many cases, specific design information about the systems is not known. For the Clark and Pitt (in press) individual site evaluation, many site owners noted that their filter media were proprietary mixes developed by a vendor and optimized for their site pollutants. In the International Stormwater BMP Database, all media filters are placed into a single category, even though the performance of filtration media is known to vary with the composition of the media (Clark, 2000; Johnson et al., 2003). Although some of the sedimentation device sizes could be determined, the size of the drainage area could not, so the appropriateness of device sizing is unknown. Whether specific features were incorporated, such as energy dissipaters that would prevent scour of captured sediment, is also not known. Finally, as noted previously, the data reflect composite samples (typically flow-weighted composites), which are often lower in concentration than the first-flush grab samples required for benchmark sampling. Thus, treatment technologies and sites shown to meet the benchmark with composite samples may not necessarily meet it consistently with grab samples.

NOTE: BM = benchmark; HDS = hydrodynamic separator; MF = media filter; n = number of storm events sampled; TT = treatment train.

SOURCE: Clark and Pitt, in press.

NOTE: BM = benchmark; BR = bioretention; DP = dry detention ponds; MF = media filters; n = number of storm events sampled; RP = wet retention ponds; WB = wetlands.

NOTE: BM = benchmark; DP = dry detention pond; HDS = hydrodynamic separator; n= number of storm events sampled; TT = treatment train.

SOURCE: Clark and Pitt, in press.

NOTE: BM = benchmark; BR = bioretention; DP = dry detention ponds; MF = media filters; n = number of storm events sampled; RP = wet retention ponds; WB = wetlands.

TABLE 2-7

Comparison of Treatment Performance, Shown as Percentage of Storm Events with Event Mean Concentrations Above the Benchmark, with Sample Size Noted

| System | TSS (100 mg/L) | Total Al (750 µg/L) | Total Copper | Total Iron (1,000 µg/L) | Total Lead | Total Zinc | COD (120 mg/L) | |||

|---|---|---|---|---|---|---|---|---|---|---|

| Soft (9 µg/L) | Hard (28.5 µg/L) | Soft (45 µg/L) | Hard (213 µg/L) | Soft (80 µg/L) | Hard (230 µg/L) | |||||

| INDUSTRIAL SITE-SPECIFIC EVALUATIONS | ||||||||||

| Hydrodyn. Separator 1 | 11 | 12 | 12 | 12 | 12 | 12 | ||||

| Hydrodyn. Separator 2 | ||||||||||

| Hydrodyn. Separator 3 | 10 | 8 | ||||||||

| Hydrodyn. Separator 4 | 13 | |||||||||

| Dry Detention Pond 1 | 10 | 10 | 11 | 10 | 10 | 10 | ||||

| Dry Detention Pond 2 | 14 | 16 | 16 | 13 | 15 | 9 | 14 | 11 | ||

| Wetlands | ||||||||||

| Media Filter 1 | ||||||||||

| Media Filter 2 | ||||||||||

| Media Filter 3 | 10 | 18 | ||||||||

| Media Filter 4 | 13 | 13 | ||||||||

| Media Filter 5 | 8 | 9 | 12 | 9 | 12 | |||||

| Media Filter 6 | 9 | 16 | ||||||||

| Treatment Train 1 | 11 | 15 | 14 | |||||||

| Treatment Train 2 | 10 | 14 | 13 | 16 | 9 | 16 | 16 | 9 | ||

| INTERNATIONAL STORMWATER BMP DATABASE EVALUATIONS (includes many sites) | ||||||||||

| Dry Detention Ponds | 149 | 146 | 65 | 51 | 114 | 53 | 14 | |||

| Wet Retention Ponds | 306 | 8 | 336 | 110 | 144 | 105 | 31 | 223 | 55 | 11 |

| Wetlands | 75 | 47 | 55 | 8 | ||||||

| Media Filters | 115 | 225 | 43 | 44 | 18 | 252 | 54 | |||

| Bioretention | 104 | 127 | 39 | 10 | 100 | 33 | 27 | |||

| <10% above BM | 10–25% above BM | 25–50% above BM | 50–90% above BM | >90% above BM |

|---|---|---|---|---|

NOTE: Numbers of influent/effluent sample pairs displayed in each cell. Gray cells indicate that the system was not included in the analysis because it did not meet the criteria for inclusion. The removal either was not statistically significant or the data set did not include at least eight storm events for that treatment/pollutant where the inflow exceeded the benchmark.

first-flush grab samples. Where composite samples consistently fail to meet the benchmark, the same would be expected when first-flush grab samples are used, although not necessarily from discharge of storage SCMs.

Despite the limitations of the data sets, some general findings emerge. In the site-level industrial evaluation (Clark and Pitt, in press), at least one treatment SCM was capable of meeting benchmarks for at least 50 percent of storm events for TSS, aluminum, copper, zinc, and COD. Multiple sites and treatment SCMs met the benchmarks for at least 50 percent of storm events for TSS, aluminum, copper (soft water), lead (hard water), and zinc (hard water). In contrast, none of the systems or sites analyzed met the benchmark for at least 25 percent of storm events for iron, or for at least 50 percent of events for lead (soft water).
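These findings rest on event mean concentrations (EMCs) from composite samples, typically flow-weighted, rather than the first-flush grab samples used for benchmark monitoring. A flow-weighted EMC divides the sum of each aliquot's concentration times its flow by the total flow, so a concentrated first flush collected at low flow contributes little to the result. The minimal Python sketch below assumes aliquots taken at equal time intervals and uses invented zinc concentrations and flows (not data from Clark and Pitt or the BMP Database); only the 230 µg/L hard-water zinc benchmark comes from Table 2-7.

```python
from typing import Sequence

ZN_HARD_BM = 230.0  # µg/L, hard-water total zinc benchmark from Table 2-7


def flow_weighted_emc(conc: Sequence[float], flow: Sequence[float]) -> float:
    """Flow-weighted event mean concentration: sum(C_i * Q_i) / sum(Q_i).

    Assumes aliquots are taken at equal time intervals, so each aliquot's
    volume is proportional to the flow rate when it was collected.
    """
    if len(conc) != len(flow) or not conc:
        raise ValueError("conc and flow must be non-empty and the same length")
    total_flow = sum(flow)
    if total_flow <= 0:
        raise ValueError("total flow must be positive")
    return sum(c * q for c, q in zip(conc, flow)) / total_flow


# Hypothetical zinc hydrograph: concentrated first flush at low flow (µg/L, L/s).
zinc = [400.0, 250.0, 120.0, 80.0, 60.0]
flow = [5.0, 20.0, 40.0, 25.0, 10.0]

emc = flow_weighted_emc(zinc, flow)
first_flush_grab = zinc[0]
print(f"flow-weighted EMC: {emc:.0f} µg/L, meets benchmark: {emc <= ZN_HARD_BM}")
print(f"first-flush grab:  {first_flush_grab:.0f} µg/L, "
      f"meets benchmark: {first_flush_grab <= ZN_HARD_BM}")
```

With these invented numbers, the same storm meets the benchmark as a composite (144 µg/L) but exceeds it as a first-flush grab (400 µg/L), which is the direction of bias described in the caveats above.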

The International Stormwater BMP Database provides a larger data set, but it includes many nonindustrial sites, and on average it has much lower pollutant influent concentrations than the site-level industrial data. Under conditions of lower influent concentrations that might be found more commonly in municipal settings, the International Stormwater BMP Database data suggest that treatment SCMs are available that are effective in reducing concentrations below freshwater benchmark threshold levels in at least 50 percent of the storm events where the influent concentration exceeds the benchmark for TSS, copper, iron, lead, zinc, and COD. Data for aluminum were extremely limited (only eight storms for one treatment type).
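The performance measure used here and in Table 2-7 can be written down explicitly: among storm events in which the influent EMC exceeds the benchmark, what percentage of effluent EMCs still exceed it? The Python sketch below applies the table note's eight-event minimum and then bins the result using the table's shading key. The note also requires statistically significant removal but does not name the test, so a one-sided sign test at α = 0.05 is used here as a stand-in assumption; the handling of exact bin boundaries and the paired TSS data are likewise invented for illustration.

```python
import math
from typing import List, Optional, Sequence, Tuple

MIN_EVENTS = 8  # Table 2-7 note: at least eight events with inflow above the benchmark
ALPHA = 0.05    # assumption: the significance level is not stated in the source


def sign_test_p(pairs: Sequence[Tuple[float, float]]) -> float:
    """One-sided sign test p-value for 'effluent lower than influent' (ties dropped)."""
    diffs = [inf - eff for inf, eff in pairs if inf != eff]
    n = len(diffs)
    if n == 0:
        return 1.0
    reductions = sum(1 for d in diffs if d > 0)
    return sum(math.comb(n, k) for k in range(reductions, n + 1)) / 2 ** n


def shading_bin(pct_above: float) -> str:
    """Map percent of events above the benchmark to the Table 2-7 shading key.

    Exact boundary values (10, 25, 50, 90) are assigned by assumption.
    """
    if pct_above < 10:
        return "<10%"
    if pct_above < 25:
        return "10-25%"
    if pct_above < 50:
        return "25-50%"
    if pct_above <= 90:
        return "50-90%"
    return ">90%"


def evaluate(pairs: Sequence[Tuple[float, float]],
             benchmark: float) -> Optional[Tuple[float, str]]:
    """Percent of qualifying events whose effluent exceeds the benchmark, plus its bin.

    Returns None (a "gray cell") when there are too few qualifying events or
    the reduction is not significant under the assumed sign test.
    """
    qualifying: List[Tuple[float, float]] = [(i, e) for i, e in pairs if i > benchmark]
    if len(qualifying) < MIN_EVENTS or sign_test_p(qualifying) >= ALPHA:
        return None
    above = sum(1 for _, e in qualifying if e > benchmark)
    pct = 100.0 * above / len(qualifying)
    return pct, shading_bin(pct)


# Hypothetical TSS influent/effluent EMC pairs (mg/L); the TSS benchmark is 100 mg/L.
tss_pairs = [(250, 60), (180, 90), (320, 140), (150, 70), (90, 40),
             (400, 110), (210, 80), (130, 50), (275, 95), (160, 65)]
print(evaluate(tss_pairs, benchmark=100.0))
```

For these invented pairs, 9 of 10 events qualify and 2 of the 9 effluent EMCs exceed 100 mg/L (about 22 percent, the 10–25% band), so the SCM would meet the benchmark in roughly 78 percent of qualifying events, above the 50 percent threshold used in the text.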

The committee cannot say definitively that lower influent concentrations led to more successful treatment performance at these particular sites because of the limited information on the design and operation of the SCMs. With median inflow iron concentrations ranging from 1,500 to 3,500 µg/L, two of the three treatment SCMs in the BMP Database met the iron benchmark for at least half of the storm events, while none of the four industrial sites/treatments (with median inflow concentrations of 8,500 to 19,000 µg/L) could meet the benchmark for at least 10 percent of events. Similarly, at the industrial sites, where median inflow zinc concentrations ranged from 500 to 900 µg/L, only one of the treatments met the lower soft-water benchmark. In contrast, in the BMP Database, where median inflow concentrations ranged from 100 to 400 µg/L, four of five treatments met the soft-water benchmark for at least half of the storms. Although SCM performance generally shows some dependence on influent concentration, treatment is not linear with influent concentration (Clark, 2000). Some SCMs discharge pollutant concentrations near a treatment value determined by their design characteristics, independent of influent concentrations, up to the design storm size (and most storm events do not approach the design storm size).
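Why design information matters here can be illustrated by contrasting two simplified views of SCM behavior: a fixed fractional removal, under which lower influent concentrations translate directly into lower effluent, and an effluent concentration that stays near a design-determined value regardless of influent. The Python sketch below evaluates both at the median iron inflows quoted in this paragraph against the 1,000 µg/L iron benchmark from Table 2-7. The 80 percent removal and the 600 µg/L design value are hypothetical parameters chosen only for illustration.

```python
IRON_BM = 1_000.0  # µg/L, total iron benchmark from Table 2-7


def effluent_fractional(c_in: float, removal: float) -> float:
    """Simplified view 1: a fixed fraction of the influent concentration is removed."""
    return c_in * (1.0 - removal)


def effluent_floor(c_in: float, c_design: float) -> float:
    """Simplified view 2: effluent sits near a design-determined concentration,
    independent of influent (but never higher than the influent itself)."""
    return min(c_in, c_design)


# Median inflow iron concentrations quoted in the text (µg/L).
influents = {"BMP DB, low": 1_500.0, "BMP DB, high": 3_500.0,
             "industrial, low": 8_500.0, "industrial, high": 19_000.0}

for label, c_in in influents.items():
    linear = effluent_fractional(c_in, removal=0.80)  # hypothetical 80% removal
    floor = effluent_floor(c_in, c_design=600.0)      # hypothetical 600 µg/L value
    print(f"{label:>16}: fractional {linear:6.0f} "
          f"({'meets' if linear <= IRON_BM else 'exceeds'}), "
          f"floor {floor:4.0f} ({'meets' if floor <= IRON_BM else 'exceeds'})")
```

With these invented parameters, both views meet the iron benchmark at the BMP Database medians, but only the fixed-value view meets it at the industrial medians; the available data sets lack the design and operating details needed to tell which behavior a given SCM follows.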

The analyses also indicate that not all SCMs provide equal performance. Dry detention ponds and hydrodynamic separators generally performed poorly compared to other treatment types, both in the industrial site evaluation and in the BMP Database. Much of this poor performance is likely attributable to scour of previously captured sediment (Avila and Pitt, 2009). Media filters, treatment trains, wet retention ponds, and bioretention were among the better-performing SCMs.

Three of the industrial sites met the benchmark for TSS for at least 50 percent of storm events but failed to meet the benchmarks as often for other parameters (e.g., iron, copper, zinc). Although some guidance documents (e.g., California Water Boards, 2018) state that TSS removal can be used as a predictor of particulate metals removal, these data suggest that attaining the TSS benchmark at industrial sites is not a sufficient surrogate for meeting the metals benchmarks.
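One direct way to test the surrogate claim on paired effluent data is to ask how often a storm event that meets the TSS benchmark still exceeds a metals benchmark. The Python sketch below computes that share using the TSS (100 mg/L) and soft-water copper (9 µg/L) benchmarks from Table 2-7; the effluent values are invented for illustration.

```python
from typing import Sequence, Tuple

TSS_BM = 100.0     # mg/L, TSS benchmark from Table 2-7
CU_SOFT_BM = 9.0   # µg/L, soft-water total copper benchmark from Table 2-7


def surrogate_failure_rate(events: Sequence[Tuple[float, float]],
                           tss_bm: float, metal_bm: float) -> float:
    """Share of events meeting the TSS benchmark that still exceed the metal benchmark."""
    tss_ok = [(tss, metal) for tss, metal in events if tss <= tss_bm]
    if not tss_ok:
        raise ValueError("no events meet the TSS benchmark")
    return sum(1 for _, metal in tss_ok if metal > metal_bm) / len(tss_ok)


# Hypothetical effluent EMCs per storm: (TSS in mg/L, total copper in µg/L).
effluent = [(45, 12.0), (60, 7.5), (30, 15.0), (85, 22.0),
            (120, 30.0), (55, 8.0), (40, 11.0), (150, 40.0)]

rate = surrogate_failure_rate(effluent, TSS_BM, CU_SOFT_BM)
print(f"{rate:.0%} of TSS-compliant events exceed the copper benchmark")
```

A surrogate that worked well would drive this share toward zero; in the invented data, four of the six TSS-compliant events still exceed the copper benchmark.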

Overall, the committee’s evaluation of technical achievability is hampered by the acute lack of SCM performance data for industrial stormwater. Table 2-4 highlights the paucity of industrial stormwater data available with which to evaluate the attainability of benchmarks. None of these data are sufficient to determine that certain benchmarks cannot be achieved with existing treatment technology combined with appropriate site management and pollution prevention strategies. It does appear, however, that some type of treatment train approach, where an initial SCM handles part of the pollutant load followed by a second “polishing” treatment, has the potential to meet many of the benchmarks for more than 50 percent of storm events. The initial treatment may be an SCM that specifically targets high-particulate-matter loads or some nonstructural SCM that can reduce input pollutant concentrations.
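The arithmetic behind a treatment train is multiplicative: if each stage removes a fixed fraction of what it receives, the combined removal is 1 − (1 − r₁)(1 − r₂), so stages of 60 and 70 percent together remove 88 percent. The Python sketch below applies that assumption to a hypothetical zinc influent of 600 µg/L (within the industrial median range cited above) and compares a polishing SCM alone with a particulate pretreatment stage followed by polishing, against the 80 µg/L soft-water zinc benchmark from Table 2-7. The removal fractions are invented for illustration.

```python
from typing import Sequence

ZN_SOFT_BM = 80.0  # µg/L, soft-water total zinc benchmark from Table 2-7


def train_effluent(c_in: float, removals: Sequence[float]) -> float:
    """Effluent concentration after SCMs in series.

    Each stage is assumed to remove a fixed fraction of the concentration it
    receives, so the combined removal is 1 - prod(1 - r_i).
    """
    c = c_in
    for r in removals:
        if not 0.0 <= r <= 1.0:
            raise ValueError("removal fractions must lie in [0, 1]")
        c *= 1.0 - r
    return c


c_in = 600.0  # hypothetical influent total zinc, µg/L
polish_only = train_effluent(c_in, [0.70])   # polishing SCM alone
train = train_effluent(c_in, [0.60, 0.70])   # particulate pretreatment + polishing
for name, c in [("polishing only", polish_only), ("treatment train", train)]:
    print(f"{name:>15}: {c:5.1f} µg/L, "
          f"{'meets' if c <= ZN_SOFT_BM else 'exceeds'} benchmark")
```

Fixed per-stage fractions are a simplification: as noted above, some SCMs discharge near a design-determined concentration, in which case a polishing stage fed with lower concentrations would remove a smaller fraction than this sketch assumes.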

Although this analysis focuses on treatment performance, whereas the 2015 MSGP monitoring data reflect benchmark monitoring discharge concentrations, some commonalities emerge: in both, copper, iron, and zinc are the pollutants whose benchmarks are the most difficult to meet.

This analysis clearly highlights the critical need for more data to assess the achievability of many benchmarks. More data would be particularly valuable on treatment performance for iron, but would also be useful for aluminum, copper, lead, and zinc, all of which commonly exceed the benchmarks across multiple sectors in the 2015 MSGP monitoring data (see Tables 2-3 and 2-4 and Appendix D).

Priorities for Additional Monitoring

The Statement of Task asked the committee to

Identify the highest priority industrial facilities/subsectors for consideration of additional discharge monitoring. By “highest priority” EPA means those facilities/subsectors for which the development of numeric effluent limitations or reasonably standardized stormwater control measures would be most scientifically defensible (based on sampling data quality, data gaps and the likelihood of filling them, and other data quantity/quality issues that may affect the calculation of numeric limitations).

As discussed in Chapter 1, national effluent limitation guidelines (ELGs) are used to set enforceable technology-based effluent limits. ELGs are developed based on the performance of specified technologies and can be numeric or narrative. In the absence of ELGs, technology-based effluent limits can also be set using best professional judgment regarding the technical capability of achieving the limits (EPA, 2010).

All technology-based numeric effluent limitations (NELs) that currently apply in the MSGP were developed through the ELG process in the 1970s and 1980s (see list in Appendix B). Additional NELs for industrial stormwater could be developed based on the performance of structural and nonstructural SCMs. Developing new NELs based on the capabilities of treatment technology and other on-site stormwater management practices would require significant amounts of rigorous monitoring data. For this reason, the ELG process has several important advantages over the MSGP process for developing NELs for industrial stormwater. First, although both the MSGP and ELG processes can consider publicly available data on the performance of treatment technology, the ELG process can also generate additional performance data through targeted sampling, questionnaires, and other means. Key aspects of monitoring SCM performance include study design, sample type and locations, data validation and reporting, and performance analysis (Geosyntec Consultants and Wright Water Engineers, Inc., 2009). These elements of performance monitoring go beyond what can be prescribed in a national general permit and usefully reported through discharge monitoring reports and annual reports. Second, the ELG process affords a more focused analysis of treatment technology performance, because it analyzes performance on a waste-stream-by-waste-stream basis for specific sectors and subsectors. In contrast, the objective of the MSGP is to update permit requirements for many sectors and subsectors at the same time. Finally, the ELG process includes comprehensive consideration of economic factors specifically related to treatment technology performance, as well as extensive opportunity for public input from planning through final promulgation.

Based on the paucity of industrial SCM performance data available at this time, no specific sectors are recommended for development of new numeric effluent limits based solely on existing data, data gaps, and the current likelihood of filling them; any new NEL would require extensive collection of new data. Rather, NELs are appropriate for sectors and pollutants that cannot be effectively controlled within the MSGP and the proposed AIM process (see Box 1-3), or for which benchmark attainability issues have been documented despite implementation of reasonable structural and nonstructural SCMs.

In the committee’s review of the 2015 MSGP monitoring data, a few sectors stand out as having a large percentage of samples with high discharge concentrations (eight times the benchmark levels), including Sectors H (coal mines and coal-mining-related facilities), A2 (wood preserving), F4 (nonferrous foundries), Q (water transportation), and R (ship and boat building or repairing yards) (see Table 2-4). A few of these sectors reflect only a small number of sites. The AIM process, which is under development, is intended to provide structured mechanisms to improve compliance under the MSGP; it is therefore premature to judge whether AIM will be effective in reducing these high stormwater pollutant discharges. Sectors that consistently fail to meet the benchmark under the most intense scrutiny within the AIM process may be appropriate candidates for the development of ELGs, although individual permits may be a more efficient pathway for sectors with relatively few facilities.
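The screening described in this paragraph, the share of each sector's samples that reach eight times the benchmark, is straightforward to reproduce from benchmark monitoring records. The Python sketch below reports that share together with the sample count, since a high percentage resting on few samples deserves the caution noted above. The text does not say whether "eight times" means at or above that level, so the sketch assumes at or above; sector labels and concentrations are placeholders, not MSGP results.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

HIGH_MULTIPLE = 8.0  # "high" = eight times the benchmark, per the text


def high_discharge_share(
    samples: Iterable[Tuple[str, float, float]],
) -> Dict[str, Tuple[float, int]]:
    """Per sector: (percent of samples at or above 8x benchmark, number of samples).

    Each sample is (sector, measured concentration, benchmark) in matching units.
    """
    totals: Dict[str, int] = defaultdict(int)
    highs: Dict[str, int] = defaultdict(int)
    for sector, conc, benchmark in samples:
        totals[sector] += 1
        if conc >= HIGH_MULTIPLE * benchmark:
            highs[sector] += 1
    return {s: (100.0 * highs[s] / totals[s], totals[s]) for s in totals}


# Hypothetical samples; sector names are placeholders, not MSGP sector codes.
samples = [
    ("sector_a", 9_500.0, 1_000.0), ("sector_a", 4_200.0, 1_000.0),
    ("sector_a", 12_000.0, 1_000.0), ("sector_a", 800.0, 1_000.0),
    ("sector_b", 150.0, 100.0), ("sector_b", 950.0, 100.0),
    ("sector_b", 60.0, 100.0), ("sector_b", 90.0, 100.0),
    ("sector_b", 300.0, 100.0),
]
for sector, (pct, n) in high_discharge_share(samples).items():
    print(f"{sector}: {pct:.0f}% of {n} samples at or above 8x benchmark")
```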

Where benchmark attainability is questionable, industries and industry groups should collect detailed performance data for common SCMs under typical stormwater conditions to expand the knowledge base and potentially identify future sectors and pollutants for which numeric effluent limits may be appropriate. Such data should be collected using appropriate quality assurance and quality control (QA/QC) practices for stormwater monitoring and should include information on SCM design, sizing, maintenance during monitoring, and on-site characteristics, such as watershed area, land cover, and anticipated pollutants. These monitoring data should be made available via a mechanism similar to (or directly employing) the International Stormwater BMP Database. The open nature of the BMP Database allows a wide range of study authors and reviewers to submit performance data with quality assurance reviews. Relatedly, in December 2017, EPA released its Industrial Wastewater Treatment Technology Database (IWTT) as a publicly accessible web application.4 EPA now conducts routine literature reviews to identify performance data that could be included in the IWTT, and it considers performance data in the IWTT in its ELG process (EPA, 2018). Similar EPA efforts for industrial stormwater would strengthen the value of the BMP Database.
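As a sketch of what a performance-data submission carrying this metadata might look like, the Python dataclasses below pair each storm event's influent and effluent EMCs with the site and design fields listed in this paragraph, and report which of those fields are still empty. All class and field names are hypothetical; they are not the International Stormwater BMP Database's actual schema or submission format.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical list of site/design fields a reviewer would need.
REQUIRED_METADATA = ("design_notes", "sizing_basis", "drainage_area_ha",
                     "land_cover", "anticipated_pollutants", "maintenance_log")


@dataclass
class StormEvent:
    date: str              # ISO date, e.g. "2019-04-12"
    pollutant: str
    influent: float        # event mean concentration entering the SCM
    effluent: float        # event mean concentration leaving the SCM
    units: str
    sample_type: str       # e.g. "flow-weighted composite" or "grab"
    qa_passed: bool        # outcome of the QA/QC review for this event


@dataclass
class ScmPerformanceRecord:
    scm_type: str                           # e.g. "media filter"
    design_notes: Optional[str] = None      # media mix, energy dissipaters, etc.
    sizing_basis: Optional[str] = None      # design storm or treatment volume
    drainage_area_ha: Optional[float] = None
    land_cover: Optional[str] = None
    anticipated_pollutants: List[str] = field(default_factory=list)
    maintenance_log: List[str] = field(default_factory=list)
    events: List[StormEvent] = field(default_factory=list)

    def missing_metadata(self) -> List[str]:
        """Names of required site/design fields that are still empty."""
        return [name for name in REQUIRED_METADATA
                if getattr(self, name) in (None, "", [])]

    def usable_events(self, pollutant: str) -> List[StormEvent]:
        """Events for one pollutant that passed QA/QC review."""
        return [e for e in self.events if e.pollutant == pollutant and e.qa_passed]


record = ScmPerformanceRecord(
    scm_type="media filter",
    drainage_area_ha=1.2,
    land_cover="paved storage yard",
    anticipated_pollutants=["zinc", "TSS"],
    events=[
        StormEvent("2019-04-12", "zinc", 520.0, 70.0, "µg/L",
                   "flow-weighted composite", True),
        StormEvent("2019-05-03", "zinc", 610.0, 95.0, "µg/L",
                   "flow-weighted composite", False),
    ],
)
print(record.missing_metadata())
print(len(record.usable_events("zinc")))
```

Flagging missing design and sizing fields at submission time addresses the gap noted earlier in this section, where drainage area and device features could not be determined for the evaluated systems.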

For benchmarks based on water quality criteria, rigorous treatment performance data are necessary to determine whether any benchmarks are unattainable with current technology and best practices for site management and pollution prevention. These data could provide scientific support for the development of new numeric effluent limits via the ELG process to reflect treatment attainability. For benchmarks based on treatment technology, such as TSS, the data could indicate whether current benchmarks represent appropriate performance targets or, in fact, should be lower, given improvements in the state of practice of structural and nonstructural SCMs.

CONCLUSIONS AND RECOMMENDATIONS