CHAPTER 7

RESEARCH AND DECISION-MAKING PROTOCOLS

The purpose of this chapter is to review concepts considered in the other chapters and highlight protocols for studying, researching, or otherwise understanding these concepts. For each topic, this chapter outlines the state of the art, potential pitfalls, and emerging issues and advances. The objective of this synopsis is to aid regulators, stakeholders, and practitioners by serving as a synthesis of protocols, with the other chapters providing more detailed and technical background.

OIL FATE AND TRANSPORT

Environmental Geochemistry Research Protocols

State of the Art and Potential Pitfalls

The response efforts associated with the Deepwater Horizon (DWH) oil spill included the use of numerous techniques in environmental geochemistry with potential to inform response options, including dispersant application. This section focuses on a selection of geochemical applications and methodologies that can inform response options with quantitative results. Key to the application of geochemical approaches is knowledge of the chemical composition of the spilled oil, which in the case of the DWH oil spill was not readily available. Thus, for major oil spill events in the future, spill response operations would benefit if all available information regarding the chemical composition of the spilled oil and applied dispersant were made publicly available, and if oil samples were also made available to the response and scientific communities (see Chapter 5).

Hydrocarbon and Dispersant Fractionation

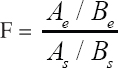

Based on the benchmark of a source oil composition, environmental geochemical studies inform both fate and transport of discharged petroleum fluids and applied dispersant. A key metric for understanding the partitioning of oil and dispersant is the fractional abundance of a given chemical species, typically calculated as a normalized ratio: the compound of interest in the environmental sample (Ae) is referenced to a benchmark compound in that same sample (Be), and that ratio is then divided by the ratio of the same two compounds in the source oil (As and Bs). The result is a fractional abundance relative to both the reference compound and the source oil.
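The double normalization described above can be written as F = (Ae/Be) / (As/Bs). The following is a minimal sketch of that calculation, not the procedure used in any of the cited studies; the function name and the example concentrations are hypothetical and serve only to illustrate the arithmetic.

```python
def fractional_abundance(a_env, b_env, a_src, b_src):
    """Return F = (Ae/Be) / (As/Bs).

    a_env, b_env: compound of interest and benchmark in the environmental sample.
    a_src, b_src: the same two compounds in the source oil.
    Each pair must share units so that the units cancel within its ratio.
    """
    if min(b_env, a_src, b_src) <= 0:
        raise ValueError("benchmark and source abundances must be positive")
    return (a_env / b_env) / (a_src / b_src)


# Hypothetical values, for illustration only (not measured data).
f = fractional_abundance(a_env=2.0, b_env=10.0, a_src=4.0, b_src=10.0)
fraction_lost = 1.0 - f  # share of the compound removed relative to the benchmark

# Diluting the whole sample tenfold changes Ae and Be equally, so F is unchanged.
f_diluted = fractional_abundance(a_env=0.2, b_env=1.0, a_src=4.0, b_src=10.0)
```

Because dilution scales the compound and the benchmark by the same factor, the ratio isolates processes that act on the compound of interest but not on the benchmark.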

By choosing a benchmark compound that is conserved relative to the process of interest, the resulting fractional abundance provides a robust tool to investigate the disposition of oil by effectively removing effects like dispersion, advection through open boundaries, etc. This double normalization approach, referred to here as geochemical referencing, was applied during DWH toward understanding the mass balance of oil, and it was key to understanding those processes that fractionated the discharge, including both physical partitioning and biodegradation. Seven applications of this approach during DWH are outlined here, each of which applies to a specific and limited time window.

- First, geochemical referencing was used to determine the fate of natural gas compounds—ethane and propane—relying on methane as a conservative benchmark (Valentine, 2010). This approach revealed that these gases were consumed in the deep intrusion layers and were the major contributor to deep-sea oxygen sags observed in theatre.

- Second, geochemical referencing enabled calculation of dissolution in the deep intrusion layers. This was accomplished notably for benzene, toluene, ethylbenzene, and xylene (BTEX) compounds (Camilli et al., 2010) by comparing aqueous solubility to fractional abundance for a range of compounds.

- Third, geochemical referencing was used to differentiate net aqueous dissolution of specific hydrocarbons relative to evaporation by reference to aqueous-insoluble compounds of comparable volatility (Ryerson et al., 2011). This was accomplished through atmospheric measurement.

- Fourth, geochemical referencing was used to identify the partitioning of the dispersant component, dioctyl sodium sulfosuccinate (DOSS) by reference to methane and to the environmental oxygen deficit (Kujawinski et al., 2011). These findings demonstrated that DOSS dissolved to the intrusion layers along with other soluble compounds.

- Fifth, geochemical referencing enabled a mass balance for DWH that included dissolution, evaporation, and subsurface trapping of oil droplets (Ryerson et al., 2012). This approach considered all available measurements as well as flow rate estimates but was enabled by geochemical referencing.

- Sixth, geochemical referencing was used to calculate the distribution of oil deposited to the seafloor (Valentine et al., 2014) as well as the rates, molecular patterns, and controls on biodegradation of oil deposited to the seafloor (Bagby et al., 2017).

- Seventh, geochemical referencing was used to estimate the trapping of liquid oil droplets in the deep intrusion layers (Gros et al., 2017) for a particular time period in June 2010. These results found that ~0.8% of the insoluble hydrocarbon fraction was trapped in the deep intrusion layers as microdroplets. Furthermore, by using geochemical referencing coupled with some reasonable assumptions about biodegradation, Gros et al. (2017) were able to estimate a mass balance through the overall water column that could be used to validate fate and transport models.

The methodologies needed for geochemical tracing of discharge as described here include a combination of traditional and evolving analytical tools, including Fourier transform ion cyclotron resonance mass spectrometry; liquid chromatography coupled to high-resolution mass spectrometry; comprehensive two-dimensional gas chromatography; compound specific isotope ratio mass spectrometry; and proton transfer reaction mass spectrometry. For a complex release scenario, the combined application of available methodologies warrants consideration by response personnel within the context of response operations, and expert scientific opinion can be essential in determining what value emerging technologies might provide. The timing of such measurements is also critical. Drawing from the DWH examples above, there is no single environmental sample that was analyzed comprehensively: for example, for the combination of surfactants; volatile organic compounds; BTEX; polycyclic aromatic hydrocarbons (PAHs); paraffins, isoparaffins, aromatics, naphthenes, and olefins; natural gases; biomarkers; and saturated alkanes. This is important because comprehensive analysis of samples would provide a comprehensive geochemical inventory that would robustly inform fate and transport processes.

Indirect Chemical Measurements

In addition to measurements of the spilled oil, including transformation rates and products, a number of indirect chemical measurements also proved useful during DWH. Specific examples include dissolved nitrogen species nitrate, nitrite, and ammonium (Chakraborty et al., 2012; Hazen et al., 2016; Lu et al., 2012); dissolved oxygen (Du and Kessler, 2012; Kessler et al., 2011a); dissolved phosphate (Hazen et al., 2016); and dissolved metals, notably iron (Shiller et al., 2017). Because each of these compounds is bioactive and follows predictable oceanic behavior, appropriate measurement schemes provided useful insight as to microbial growth and metabolism associated with hydrocarbons, including estimates for total hydrocarbon respiration in the deep intrusion layers (Kessler et al., 2011a).

Emerging Issues and Advances

Isotope Tracking

In addition to the quantification of compound concentrations described above, numerous isotopic methods proved useful in tracking the transport and fate of discharged materials during DWH; these and a number of emerging isotopic methods may prove useful in future spill scenarios. Isotopic tracing in the context of oil spills falls into three general categories. First is the measurement of isotopic abundance in specific discharged compounds for forensic identification and quantification of biodegradation. One specific application during DWH was to quantify the extent of biodegradation for methane, ethane, and propane (Valentine, 2010); numerous other isotopic systems—including sulfur, carbon, and hydrogen—have previously been applied to petroleum source identification (Peters et al., 2005). Various emerging isotopic systems also hold promise for petroleum spills, including compound specific sulfur (Amrani et al., 2009, 2012) and radiocarbon (Kessler et al., 2008a) quantification as well as clumped isotope analysis (Douglas et al., 2017; Stolper et al., 2015). Second is the use of isotopes as a tracer into other chemical forms. Specific applications during DWH included the tracking of stable carbon isotopes and radiocarbon abundance into biota (Chanton et al., 2012) and sediment (Chanton et al., 2014). Third is the addition of isotopes to environmental samples as a tracer to determine process rates or to identify the flow of carbon into the ecosystem. Specific application during DWH was to quantify oxidation rates of methane using tritium (Crespo-Medina et al., 2014; Valentine, 2010) and to identify microbes consuming select hydrocarbons with carbon-13 (Redmond and Valentine, 2012).

Intercalibration Experiments

An intercalibration experiment was performed to compare the measurement of oil hydrocarbons among 20 laboratories (Murray et al., 2016; Reddy et al., 2015). Results included measurements by gas chromatography, ultra high-resolution mass spectrometry, toxicity, shear velocity, and interfacial tension as well as the measurement of weathered oils using Fourier transform ion cyclotron resonance mass spectrometry by a subset of labs. The reports recommended the use of certified reference materials alongside sample analyses and encouraged detailed reporting of methods and the associated quality assurance/quality control. While an intercalibration experiment has been performed only on oil hydrocarbons, the approach could be broadly applied to the measurement of all oil, gas, and dispersant compounds of interest.

Adoption of Emerging Technologies

An important lesson to emerge from the DWH oil spill is that developing technologies can provide critical insight into a complex spill scenario. Select examples provided above include in situ mass spectrometry linked to autonomous vehicle technology (Camilli et al., 2010); in situ mass spectrometry linked to an aerial platform (Ryerson et al., 2011); and development of new laboratory procedures by liquid chromatography-mass spectrometry for quantification of surfactants at trace levels. The application of these approaches has provided critical insight as to transport, fate, and impacts of oil and dispersed oil from this spill; yet, after 9 years, few of these analytical approaches have been formally adopted by the response community.

Biodegradation Protocols

State of the Art and Potential Pitfalls

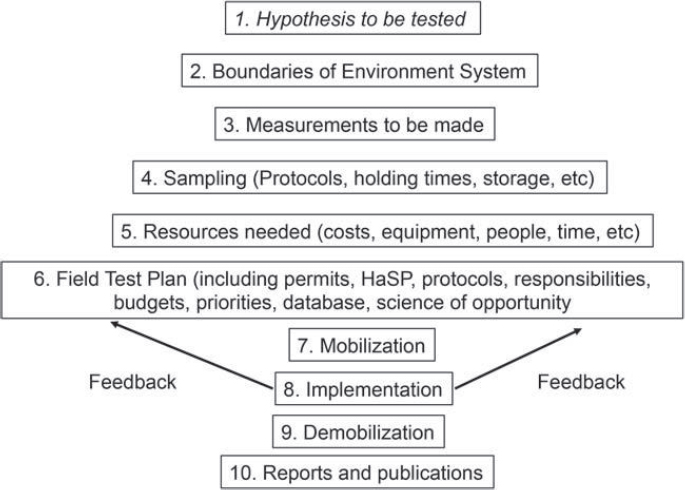

The past decade has seen an increase in the use of molecular tools as direct (culture-independent) techniques to determine microbial community structure, functional capabilities of the environment, stress responses, protein identity and abundance, and the relationship between specific organisms and substrate compounds. This has been largely due to the rapidly declining costs of these methods from many thousands of dollars to only a few dollars per sample. In addition, the time required to perform analyses has decreased from months to hours. Consequently, the number of known oil-degrading microbial phyla has increased dramatically (three phyla to seven phyla in all kingdoms) as our ability to detect them has increased. Currently, oil degraders are found in all three domains of life (Hazen et al., 2016). However, all these culture-independent techniques carry underlying assumptions and rely on analytical pipelines whose biases can propagate to the final conclusions, so they must be scrutinized carefully with respect to positive and negative controls, field trip blanks, and materials and methods (see Figure 7.1).

The DWH oil spill saw many new protocols used for the first time both in the field and in the lab. Many molecular techniques had not been tried extensively in the field, which raises concerns based on sample collection. Such concerns include:

- whether or not sample collection actually captured the desired subsurface feature, such as an intrusion layer;

- whether the samples were filtered at depth in situ or were collected in sampling bottles and brought to the surface for further processing (how were they handled, how long did it take to process them on deck, how were they stored);

- handling of the sampling bottle prior to processing can substantially change the microbial environment (e.g., temperature, pressure, and surface substrate of the bottle interior). For example, if samples were collected at 4°C and at high pressures, but were stored live on deck for more than just a few hours before being processed, the microbial community is likely to be different from that at the sampling site (Liu et al., 2017a); and

- collection of samples that could indirectly indicate biodegradation, such as sensitive dissolved oxygen measurements, direct cell counts, hydrocarbon fractionation (see the Hydrocarbon and Dispersant Fractionation section in this chapter), and isotope tracking.

Numerous studies published since DWH focused on oil biodegradation. Unfortunately, many studies were done with samples that had been stored for weeks to years after collection (Bælum et al., 2012; Crespo-Medina et al., 2015; Dubinsky et al., 2013; Hu et al., 2017; Liu et al., 2017a). In addition, “open water” environmental conditions are impossible to fully replicate in the lab, and many studies were not conducted using Macondo oil and/or Corexit® 9500. Few studies used live oil (Jaggi et al., 2017). Most instead used dead oil or even non-crude oil. While some of these studies may have sound conclusions, it is possible that fresh live oil and samples from the Gulf of Mexico would have given different results. Furthermore, as noted by Yergeau et al. (2015), the timing of studies during and after the spill is also an important consideration for evaluating the potential for long-term effects. The concentrations of oil and/or dispersant used in these studies differed from the actual concentrations encountered in the field. For example, a study published by Kleindienst et al. (2015b) suggested that “Chemical dispersants can suppress the activity of natural oil-degrading microorganisms.” Yet, a later publication (Techtmann et al., 2017) suggested that “Corexit® 9500 Enhances Oil Biodegradation and Changes Active Bacterial Community Structure of Oil-Enriched Microcosms.” Several factors differed between these studies, including the oil that was used, the microcosm setups, and the applied concentrations of oil and Corexit® 9500. Such divergent approaches create confusion when scientists, stakeholders, regulators, practitioners, and the public try to meaningfully interpret the results.

It is also unclear whether the biodegradation pathway sequence of oil is different in anaerobic seawater environments. Anaerobic biodegradation of hydrocarbons is a significant process that occurs in many environments (Gieg and Toth, 2018; Gründger et al., 2015; Laso-Pérez et al., 2016; Wawrik et al., 2012) and may be an important process in some deepwater communities of the ocean; however, anaerobic hydrocarbon degraders are primarily found in anoxic sediments and within ocean hydrocarbon seeps (Hazen et al., 2016; von Netzer et al., 2013). Anaerobic hydrocarbon degradation has been studied in fossil hydrocarbon reserves (e.g., tar sands; Berdugo-Clavijo and Gieg, 2014) and in thermophilic communities (e.g., around hydrothermal vents; Laso-Pérez et al., 2016), but less knowledge exists about this process among cold-adapted communities in deepwater environments. In deep coastal environments where the temperature is nearly always 4°C, psychrophilic and psychro-tolerant microbes can play a significant role in oil biodegradation, even degrading oil faster than microbes in the surface water, as was seen in DWH (Hazen et al., 2010; Valentine et al., 2012). Thus, maintaining a proper temperature for microcosms, and holding samples at that temperature during collection, transport, and storage, also becomes critical.

The potential for hydrostatic pressure to inhibit oil biodegradation is relevant to deepwater blowouts and to oils that sink to the deep-sea floor but has been largely overlooked. The Macondo well is located at a water depth of 1,500 m, which is shallower than the depth of 2,000 m beyond which pressure effects on biodegradation rates are expected (Hazen et al., 2016; Marietou et al., 2018). A recent study that considered oil biodegradation rates using samples collected during the response phase of DWH at pressures equal to 1,500 m found no effect, consistent with expectation; however, studies at higher pressure on the same samples did indicate that pressure might affect oil biodegradation rates and pathways (Marietou et al., 2018). In addition, many other factors may work synergistically to impact the biodegradation of oil (see Table 7.1).
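To relate the depths quoted above to the pressures an incubation would need to reproduce, depth can be converted to an approximate absolute pressure with the hydrostatic relation P = P_atm + ρgz. The sketch below assumes a constant seawater density of 1,025 kg/m³, a typical value that is not taken from the cited studies; the function name is hypothetical.

```python
ATM_PA = 101_325.0   # standard atmospheric pressure, Pa
G = 9.81             # gravitational acceleration, m/s^2


def hydrostatic_pressure_mpa(depth_m, seawater_density=1025.0):
    """Approximate absolute pressure (MPa) at a given depth.

    Assumes constant density (kg/m^3), so compressibility and
    temperature or salinity gradients are ignored.
    """
    if depth_m < 0:
        raise ValueError("depth must be non-negative")
    return (ATM_PA + seawater_density * G * depth_m) / 1e6


p_wellhead = hydrostatic_pressure_mpa(1500.0)    # depth of the Macondo well
p_threshold = hydrostatic_pressure_mpa(2000.0)   # depth beyond which effects are expected
```

Under these assumptions the two depths correspond to roughly 15 MPa and 20 MPa, respectively.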

The publicity of DWH led to inevitable comparisons to the Exxon Valdez oil spill. However, these spills were completely different in terms of oil type, the environment, and the use of dispersants. Other than the fact that both environments contained oil degraders, the two spills were not comparable in terms of biodegradation rates of oil (Atlas and Hazen, 2011).

In spill responses, scientists have observed the microbial biomass in the water column slowly decline long after the oil has been depleted. In DWH, many thought that this was due to the oil degraders surviving for an extended period of time (Dubinsky et al., 2013). However, detailed studies during and after the DWH spill demonstrated that the microbial community structure changed from oil degraders once the oil was no longer present to microbes that could use dead bacteria as a food source (Dubinsky et al., 2013).

Whole oil has an apparent half-life; half-life does not imply first order kinetics. Classification of different compounds in the oil and how they are degraded has been reported; some degrade very slowly, resulting in heavy oil (Aeppli et al., 2014; Head et al., 2014). The pathway for oil biodegradation was shown not to be altered by Corexit® 9500 both in the water column (Prince and Butler, 2014) and in sediment cores from DWH (radio-labeled constituents from Corexit® 9500 and whole oil, 5°C) (Mason et al., 2014). Nutrients and trace metal concentrations can regulate rates and pathways of oil biodegradation, especially in low nutrient environments like the oceans (Bælum et al., 2012; Hazen et al., 2016; Pepper et al., 2015). The microbial community composition will dictate which oil biodegradation pathways are used, how fast the oil is degraded, which compounds in the oil are degraded, and what oil daughter products might result, which could affect the bioavailability and toxicity of hydrocarbon compounds.

TABLE 7.1 Synergistic Effects That Impact the Biodegradation of Oil

| Factors Working Synergistically | Impact on Biodegradation |
|---|---|
| Chemical dispersants + mineral fines | Individually each will promote dispersion of the oil. Combined, the formation of daughter products and transfer of oil from the surface into the water column is enhanced. |
| Autoinoculation + “memory response” of hydrocarbon degraders | Introduction of hydrocarbons to previously exposed water parcels leads to an increase in microbial abundance and accelerated hydrocarbon biodegradation. |
| Oil droplet size + dispersion + biodegradation rates + dissolution | Enhances biodegradation, dissolution and dispersion rates of oil hydrocarbons. |
| Cometabolic biodegradation + dispersion + secondary electron donors | Enhances biodegradation, dissolution and dispersion rates of oil hydrocarbons even when the oil itself cannot be a suitable electron donor. |
| Biosurfactants from multiple microorganisms | Enhances bioavailability of poorly soluble compounds. |

SOURCE: Hazen et al., 2016.

Emerging Issues and Advances

The emerging realization that oil biodegradation is a function of the environmental system as a whole represents a departure from compartmentalized research that provides an inadequate picture of oil biodegradation during a spill. Thus, an ecosystem services approach is required. The DWH oil spill response included one of the most rigorous oil spill sampling efforts ever undertaken, but those efforts initially failed to coordinate collection of all the components. The response also lacked a plan vetted by systems experts that would allow immediate deployment, including resources (money, equipment, and people) on standby for a large-scale spill response. These scenarios require that government agencies, such as the National Oceanic and Atmospheric Administration (NOAA), the U.S. Coast Guard (USCG), the U.S. Environmental Protection Agency (EPA), and applicable state agencies, work together to develop a plan and designate contingencies for resources that could be used in that plan. The government oil spill response documents have been reviewed, and exercises have been carried out to familiarize personnel with them. The governing documents for oil spill response include:

- National Response Framework¹

- National Oil and Hazardous Substances Contingency Plan²

- Title 40 CFR 300.115—Regional Response Teams

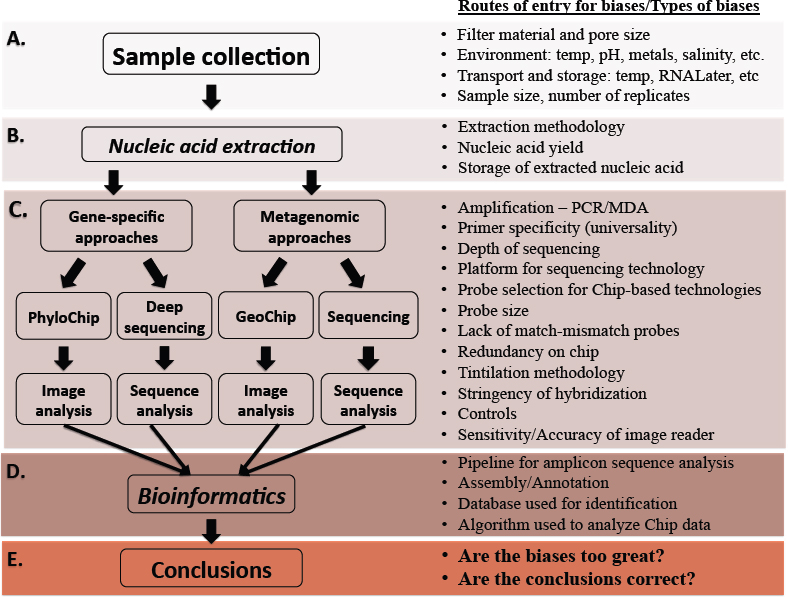

None of these includes a complete systems approach; for instance, oil biodegradation should be part of any such field sampling plan that would also provide for follow-on laboratory studies that will be critical for the analysis and understanding of the fate and effects of the spilled oil. Figure 7.2 provides an overview of key aspects of that plan in a stepwise fashion to ensure the best possible outcome, recognizing that priorities will have to be set. The plan would be a dynamic living document with regular feedback from both the field and the laboratory.

___________________

1 See https://www.fema.gov/media-library/assets/documents/117791.

2 See https://www.epa.gov/emergency-response/national-oil-and-hazardous-substances-pollution-contingency-plan-ncpoverview.

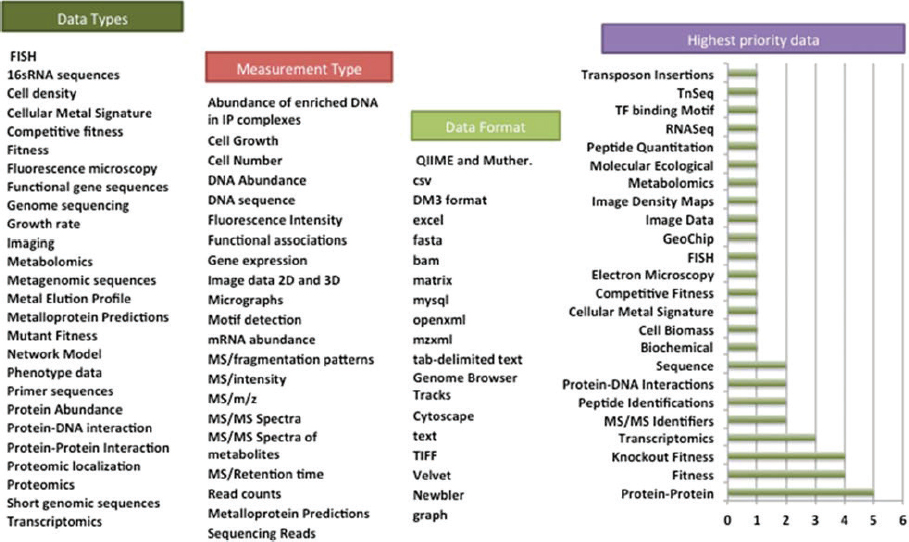

As part of this Field Test Plan, development of a Data Management Plan could assure that all the data collected are in a format and location where they can be stored and used. The Gulf of Mexico Research Initiative (GoMRI) has developed the Gulf of Mexico Research Initiative Information & Data Cooperative (GRIIDC)³ to capture all the data funded by GoMRI related to the DWH spill. The cooperative might be a useful starting point for this type of database. NOAA also has a database for spill-related data called Data Integration Visualization Exploration and Reporting (DIVER).⁴ The U.S. Department of Energy also has been working on a database for all its funded projects for more than a decade and has made it public (KBase: The U.S. Department of Energy Systems Biology Knowledgebase).⁵ KBase is “a collaborative, open environment for systems biology of plants, microbes and their communities,” which encourages investigations and findings from all environments. Integration across platforms is clearly something that should be recommended across government agencies, especially for GRIIDC, DIVER, and KBase. Figure 7.3 provides an example of culture-independent (not isolated and cultured) data types, measurements, formats, and priorities for environmental systems biology.

Improved understanding of natural biodegradation rates of oil and the effectiveness of chemical dispersants in the Arctic marine environment will be important as maritime transportation and oil exploration expand in the region (NRC, 2014).

A number of microcosm studies have recently been focused on quantifying changes in microbial structure and function and potential oil biodegradation rates in seawater and ice core samples recovered from across the Canadian Arctic (Garneau et al., 2016; Yergeau et al., 2017). In terms of dispersants, McFarlin et al. (2014, 2018) have chemically quantified biodegradation and abiotic losses of Alaska North Slope crude oil and Corexit® 9500 in Arctic seawater samples and identified the microorganisms potentially involved in biodegradation based on shifts in bacterial community structure and abundance of biodegradation genes.

___________________

3 See https://data.gulfresearchinitiative.org.

4 See https://www.diver.orr.noaa.gov.

5 See http://kbase.us.

A recent study supported by NOAA and EPA on State of the Science of Dispersants and Dispersed Oil in U.S. Arctic Waters (CRRC, 2017)—with a large group of experts and public review—concluded that many of the uncertainties about biodegradation of oil can be attributed to reliance on laboratory studies that may not accurately reflect environmental conditions. The influence of major environmental parameters on these processes, including low temperature, nutrient concentrations, sea ice, sunlight regime, suspended sediment plumes, and phytoplankton blooms that characterize the Arctic, merits future investigation (Vergeynst et al., 2018a,b).

Modeling Biodegradation

State of the Art and Pitfalls

The modeling of the biodegradation of oil droplets in the water column builds on modeling work on the biodegradation of dissolved hydrocarbons in aquifers and liquid oil in sediments. In general, the rate of biodegradation of dissolved hydrocarbon components depends on their concentration in water, whereas the biodegradation of non-aqueous-phase liquid (NAPL) is assumed to depend on NAPL-water surface area and the physical properties of oil, namely, viscosity. There are numerous papers on the biodegradation of NAPL within sediments, and these laid out the foundations for the biodegradation of NAPL suspended in water.

NAPL Oil in Sediments

Models for NAPL oil in sediments include the model developed by Nicol et al. (1994) and the first-order models of oil biodegradation presented by Venosa et al. (1996, 2010). Geng et al. (2014, 2015) presented a model for hydrocarbon biodegradation that accounted for the effect of nutrient concentration on the biodegradation rate using a Monod-type formulation. Various parameters were estimated, and they were found to be consistent with those obtained from wastewater treatment fields and dissolved hydrocarbons.

NAPL Oil in the Water Column

Venosa and Holder (2007) reported biodegradation studies of Alaska North Slope oil in laboratory flasks. They fitted first-order models to the oil mass in the flasks. Their results showed that chemically dispersed oil biodegraded faster initially, but the final extent of biodegradation was the same as that of physically dispersed oil. Yassine et al. (2013) modeled the biodegradation of dispersed oil using Monod kinetics and a quasi-steady-state approximation for the dissolution of low solubility hydrocarbons in the water column, where the oil dissolves before being biodegraded. Campo et al. (2013) conducted laboratory biodegradation experiments using south Louisiana crude and two microbial communities from the Gulf of Mexico, one obtained from the surface (meso) and one from near the Macondo well (cryo). They found that chemically dispersed oil biodegraded faster, and that the meso experiments resulted in faster biodegradation overall. The cryo experiments exhibited a lag ranging from 2 days to 28 days, and alkanes larger than n-C14 persisted in them while the aromatics of similar sizes were biodegraded. Campo et al. (2013) attributed the recalcitrance of the alkanes to the formation of crystalline structures of these alkanes. They fitted first-order models without lags to the biodegradation rates. The experiments were conducted at atmospheric pressure, which might not represent the optimal conditions for the cryo cultures. They also used extremely high concentrations of nitrate (2.8 g/L KNO3) and phosphate (0.55 g/L NaP3O10), which were much higher than the concentrations at depth in the Gulf of Mexico (Hazen et al., 2010). Brakstad et al. (2015) conducted laboratory biodegradation experiments on two droplet sizes, 10 µm and 30 µm. They observed that the smaller droplets biodegraded faster. They also observed a lag that was generally less than 8 days, using coastal Norwegian seawater. Wang et al. (2016b) conducted a similar study, using GOMEX oil, but found situations where the 30 µm droplets biodegraded faster than the 10 µm droplets did. They also found a lag of up to 10 days, but importantly, the samples were pretreated with oil for several weeks prior to experimentation, calling into question the relevance of the reported lag time. Using local Norwegian fjord seawater and local oil in the presence of methane, ethane, and propane, Brakstad et al. (2017) found that methane oxidation was faster than propane oxidation, which is the opposite of what was found with DWH, where propane jump-started the biodegradation process of the Macondo oil (Valentine et al., 2010). This suggests that biodegradation of oil and gas could be inherently different in varying environments.
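Several of the studies above fit first-order models, and several report a lag before degradation begins. The sketch below shows the general form of a first-order decay with an optional lag, together with the half-life that follows from the rate constant. It is an illustration only: the function names and the rate constant are hypothetical, and none of the cited studies is claimed to have used this exact formulation.

```python
import math


def first_order_remaining(m0, k, t, lag=0.0):
    """Oil mass remaining at time t under first-order decay.

    m0:  initial mass (any unit)
    k:   first-order rate constant (1/day)
    t:   elapsed time (days)
    lag: period (days) before degradation starts; 0 means no lag
    """
    if k <= 0:
        raise ValueError("rate constant must be positive")
    if t <= lag:
        return m0
    return m0 * math.exp(-k * (t - lag))


def half_life(k):
    """Half-life (days) of a first-order process with rate constant k."""
    return math.log(2.0) / k


# Hypothetical rate constant, for illustration only.
k = 0.1
t_half = half_life(k)
no_lag = first_order_remaining(100.0, k, t_half)
with_lag = first_order_remaining(100.0, k, t_half, lag=8.0)
```

Fitting a model without a lag to data that contain one lowers the apparent rate constant, which is one reason the lag times reported above matter when rates are compared across studies.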

Emerging Issues and Advances

The models used in the studies discussed in the previous paragraph did not account directly for the fact that biodegradation of dispersed oil occurs at the oil-water interface and thus increases with the oil-water surface area (Atlas and Hazen, 2011). In NOAA’s Automated Data Inquiry for Oil Spills model, the oil is assumed to consist of various pseudo-components (C4-C12 alkanes, 2-3 ring aromatics, and others), and the biodegradation rate is assumed to depend on the surface area of the oil droplet. The size of the oil droplet is allowed to be reduced following biodegradation (Viveros et al., 2015). In a simulation developed by Vilcáez et al. (2013), the authors assumed oil droplets were completely covered by microorganisms. The results indicated faster biodegradation of small oil droplets due to their larger surface area per unit mass. Because the model assumes complete microbial coverage, the oil droplets biodegraded faster than the dissolved oil components, but no evidence was provided to support the assumption of total microbial coverage.
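The dependence on droplet size follows from geometry: for a spherical droplet of diameter d and density ρ, the surface area per unit mass is 6/(ρd). The sketch below applies this to the 10 µm and 30 µm droplet sizes mentioned earlier. The oil density of 850 kg/m³ is an assumed, illustrative value, and the function name is hypothetical.

```python
def surface_area_per_mass(diameter_m, density_kg_m3):
    """Surface area per unit mass (m^2/kg) of a spherical droplet.

    Area = pi * d^2 and volume = pi * d^3 / 6, so area / mass = 6 / (rho * d).
    """
    if diameter_m <= 0 or density_kg_m3 <= 0:
        raise ValueError("diameter and density must be positive")
    return 6.0 / (density_kg_m3 * diameter_m)


OIL_DENSITY = 850.0  # kg/m^3, assumed for illustration only

small = surface_area_per_mass(10e-6, OIL_DENSITY)   # 10 micrometre droplet
large = surface_area_per_mass(30e-6, OIL_DENSITY)   # 30 micrometre droplet
ratio = small / large
```

The ratio does not depend on the assumed density: the same mass of oil dispersed as 10 µm droplets exposes three times the interfacial area of 30 µm droplets.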

The impact of nutrients on oil biodegradation has been noted since the 1970s, and more recent studies found that concentrations exceeding a few mg-N/L of water are needed for maximal biodegradation rates (Boufadel et al., 1999). More detailed studies have elucidated that nutrient concentration was a limiting factor for the DWH oil biodegradation in surface waters (Atlas and Hazen, 2011; Bælum et al., 2012; Chakraborty et al., 2012; Dubinsky et al., 2013; Edwards et al., 2011; Hazen et al., 2010, 2016; Kimes et al., 2013, 2014; King et al., 2015a; Lu et al., 2012). The impact of nutrients has been modeled using a Monod-type expression (Geng et al., 2014) for oil within sediments, and the model could be easily translated to dispersed oil. However, there are no calibrated models of dispersed oil biodegradation that incorporate the impact of nutrients and account for what has been observed. At low nutrient concentration, the rate of biodegradation of oil is proportional to the nutrient concentration, and at high nutrient concentration, the rate reaches its maximum value and thus becomes independent of the actual value of the nutrient concentration.
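The two limiting behaviors described above are what a Monod-type expression produces: rate = rate_max × N / (K + N), where N is the nutrient concentration and K is the half-saturation constant. The sketch below is a generic illustration of that expression, not the calibrated model of Geng et al. (2014); the parameter values are hypothetical.

```python
def monod_rate(nutrient, rate_max, half_saturation):
    """Monod-type rate: rate_max * N / (K + N).

    nutrient:        nutrient concentration N (e.g., mg-N/L)
    rate_max:        maximum rate, reached when N >> K
    half_saturation: concentration K at which the rate is half of rate_max
    """
    if nutrient < 0 or half_saturation <= 0:
        raise ValueError("nutrient must be >= 0 and half_saturation > 0")
    return rate_max * nutrient / (half_saturation + nutrient)


# Hypothetical parameters, for illustration only.
RATE_MAX = 1.0
K = 0.5

low = monod_rate(0.001, RATE_MAX, K)     # N << K: close to RATE_MAX * N / K
half = monod_rate(K, RATE_MAX, K)        # N == K: exactly half of RATE_MAX
high = monod_rate(500.0, RATE_MAX, K)    # N >> K: close to RATE_MAX
```

When N is much smaller than K the expression reduces to a rate proportional to N, and when N is much larger than K it approaches rate_max regardless of the exact nutrient level.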

Meso-Scale Test Facilities for Dispersant Studies

State of the Art and Potential Pitfalls

There are four major types of meso-scale facilities developed for the study of oil fate and behavior in the presence and absence of dispersants: (1) tower tanks for studying plume and oil droplet behavior from subsurface oil releases as well as oil component partitioning and gas droplet behavior; (2) flume tanks for studying weathering of oil and dispersed oil under various environmental conditions (e.g., currents, wind, temperature, and ice presence); (3) wave tanks with the capacity to provide controlled energy dissipation rates for oil droplet formation, oil fate, and transport studies; and (4) high-pressure test chambers to study the above variables under different pressure and temperature conditions.

These test systems do not fully replicate “open water conditions.” Despite their relative size, enclosed systems such as these have a number of potential limitations, such as higher levels of oil droplet coalescence; the loss of oil from the water column due to changes in hydrodynamics; and biological responses related to containment and “wall effects” that preclude the determination of an accurate mass balance for the oil used in experiments. Furthermore, in terms of oil biodegradation studies, test facility systems using artificial and recycled seawater do not have the normal microbiome of the ocean environments being simulated.

Tower Tanks

Data on oil droplet size and plume behavior for the development and validation of models have been collected from experimental studies using tower tanks. Since the DeepSpill field experiment in 2000 (Johansen et al., 2003), studies at the SINTEF Tower Basin (6 m high × 3 m wide, 40 m3 seawater) in Norway and the CEDRE Experimental Column (5 m high × 1 m wide, 4.5 m3 seawater) in France have been largely responsible for data used in models to advance our knowledge on deepwater releases of oil. These test systems include an injection system that can control the release rates of oil and gas as well as instruments to monitor oil droplet and gas behavior (Brandvik et al., 2013, 2014b, 2017b, 2018, 2019a,b; LeFloch et al., 2013). Water samples are recovered at various depths for the analysis of total hydrocarbons, dispersant concentrations, content of oil-in-water (droplets and dissolved components), and interfacial tension analysis.

Scaling of oil droplet size data remains a challenge for the potential use of tower tanks due to limitations in the volume of oil that can be released and the diameter of the nozzle in the injection systems. Natural water column density gradients are not accounted for in these existing laboratory systems.

Flume Tanks

Flume tanks comprise a looped system in which water is continuously circulated and waves are generated to replicate field conditions. Over the last two decades, following the construction of the SINTEF flume tank facility in Norway (elliptical system, 9 m circumference, 0.5 m wide, 0.4 m depth, 4 m long major axis, containing 1.75 m3 of seawater), there has been a continuous development of flume tanks for oil weathering and dispersant effectiveness studies. Constructed to simulate environmental conditions, the SINTEF flume tank incorporated a wave generator, submerged pumps, and fans to control water flow and wind effects as well as UV lamps for solar irradiance to enable photooxidation studies (Castro et al., 2016; Fiocco and Lewis, 1999; Hokstad et al., 1998; NRC, 2005).

Subsequently the “Polludrome” flume system was developed by CEDRE with a significantly larger canal (L = 12 m, W = 0.6 m, H = 1.4 m) with a total volume of 10.5 m3. Expanding on the capability of the SINTEF flume tank, this system was connected to a large storage tank to enable the pumping of water into and out of the flume to simulate tides. It also had a long straight section that extended beyond the elliptical flume, in line with the wave generator, in which a shoreline could be constructed (Guyomarch et al., 1999; NRC, 2005). More recently, the system incorporated a solar radiation simulator capable of simulating the global range of solar exposure conditions and a laser particle size analyzer (Malvern Mastersizer 2000) for the collection of data on oil droplet size distributions (DSDs) (Guyomarch et al., 2012; NRC, 2005). Based on the success of the flume tanks at SINTEF and CEDRE, similar test systems have been constructed in Canada (i.e., SL Ross Environmental Research Limited, Ottawa, Canada), China, and the United States (pending). This network of test systems will be intercalibrated to enhance the intercomparison of results.

In 2018, Environment and Climate Change Canada completed the construction of the Canadian Environmental Oil Spill Simulator, a meso-scale testbed for spills of oil and other hazardous products in fresh and marine waters and in temperate and Arctic conditions. Containing 7.5 m3 of water, at a depth of 0.9 m, in a channel 0.6 m wide, this system is based on the existing flume tanks located at CEDRE and SINTEF. Advances include automated control systems to support long-term studies for weeks to months, with full control of all conditions (e.g., waves, currents, temperature, salinity, solar irradiance, wind, rain, stratification, formation of surface ice, etc.).

Wave Tanks

The Ohmsett wave tank operated by the U.S. Department of the Interior’s Bureau of Safety and Environmental Enforcement (BSEE) is the largest outdoor saltwater wave/tow tank facility in North America (see Figures 7.4 and 7.5). Recent work funded by BSEE has been aimed at characterizing the wave tank hydrodynamics (Boufadel et al., 2017) and chemistry (Boufadel et al., 2017). The effectiveness of various dispersants on surface oil has also been explored, including in recent work by Steffek et al. (2016).

Following the DWH spill, dispersant studies of subsurface releases of oil at Ohmsett have been performed in the presence of both oil and gas at dispersant-to-oil ratios ranging from 1:20 to 1:200 (Panetta et al., 2012, 2013). Biodegradation studies of oil and chemically dispersed oil are not conducted at the Ohmsett facility due to the need to chlorinate its water to control fouling by microorganisms.

The Ohmsett facility offers a versatile and flexible basin with its large horizontal dimensions (200 m × 20 m) but limited water depth (2.4 m). Attempts to overcome this limitation have been undertaken by Brandvik et al. (2017d) and Zhao et al. (2016d) by issuing the jet horizontally or by towing the jet horizontally to simulate a current. This raises the issue of fractionation, which deserves further consideration.

As a joint effort funded by Fisheries and Oceans Canada and EPA, a wave tank facility was constructed at the Bedford Institute of Oceanography (Nova Scotia) in 2005 for the study of dispersants under controlled environmental conditions, including wave energy dissipation rates corresponding to conditions encountered at sea. The tank is also equipped with a series of manifolds to generate uniform water currents up to 0.5 cm/s along the direction of wave propagation to incorporate the effect of dilution and flushing on oil and applied chemicals that would occur in the natural environment (King et al., 2015c; Li et al., 2008b, 2009b). In addition, a suspended sediment load can be created in the tank to study oil and particle interactions (O’Laughlin et al., 2017a,b). Proximity to a ready supply of natural seawater and to freshwater supply lines enables the simulation of coastal brackish water conditions within the wave tank facility. The facility operates 9 months of the year, and experiments have been conducted in a range of water temperatures that are representative of seasonal variations in subarctic regions (~4°C to 20°C).

For studies on the fate and transport of subsurface discharges of oil, as well as surface spills in environments such as rivers where currents are stronger, a second tank of equal dimensions was recently built with a high flow pumping system capable of generating current velocities up to 5 cm/s and a pressurized oil injection system that can discharge heated oil at various flow rates through a nozzle in the bottom of the tank (Conmy et al., 2016, 2017; Li et al., 2016).

High Pressure Test Chambers

The influence of increasing water depth and hydrostatic pressure on droplet formation and dispersant effectiveness has been an intriguing question. The issue has been partially addressed by the use of a 5.6 m high, 2.3 m diameter hyperbaric chamber facility containing 24.4 m3 of simulated salt water, located at the Southwest Research Institute in San Antonio, Texas (Brandvik et al., 2019b). Rated to a maximum pressure of 4,000 psi (275 bar), this system can be cooled down to 4°C, is fitted with instrumentation for the characterization of oil droplets, and has a state-of-the-art delivery system for “live oil” injection. Analysis of oil droplet size from comparable experiments (nozzle, oil type, flow rates, injection techniques, and dispersant product) at ambient pressure (5 m depth) and high-pressure conditions (1,750 m depth/172 bars; such as are encountered by deepwater platforms in the Gulf of Mexico) showed no significant difference in droplet sizes as a function of water depth (Brandvik et al., 2019b).

A number of key experiments with oil and dispersants under DWH-simulated ambient conditions have also been conducted at the high-pressure facility at the Hamburg University of Technology (TUHH) in Germany. The nucleus of TUHH’s High-Pressure Test Center is a 99 L stainless steel autoclave (see Figure 7.6), which provides the experiment space for specialized test modules. This pressure vessel has several hydraulic, electric, and mechanical interfaces that allow the manipulation of the experiments as well as the injection of fluids into the vessel. The pressure generation (maximum pressure of 55 MPa) is carried out by a pneumatic amplifier that compresses tap water and routes it into the main pressure vessel (Seeman et al., 2014). The experimental module for the investigation of DSDs (“Jet Module”) consists of an acrylic cylinder (190 mm inner diameter, 600 mm height) filled with artificial seawater that can be placed within the high-pressure steel autoclave. The autoclave is then filled and pressurized with tap water. Pressure equalization occurs through a flexible membrane connected to the acrylic cylinder, thereby creating isobaric conditions between test volume (within the acrylic cylinder) and autoclave. Oil or gas is released into the test volume via a 1.5 mm nozzle from a pressurized reservoir positioned outside the autoclave (Malone et al., 2018; Seeman et al., 2014). The Jet Module is equipped with several temperature and pressure sensors to monitor conditions both within the seawater and in the oil reservoir. Oil and gas flow is monitored by a Coriolis mass flow meter. Data are recorded at a sampling rate of 300 Hz; sampling rates up to 24 kHz are possible (Malone et al., 2018). Endoscopic cameras measure DSDs and behavior. An important advance with this facility is the ability to test “live” (e.g., methane-saturated) oil and to simulate realistic effects of pressure drops as occur during actual deepwater blowouts. Gas-saturated oil droplets fracture into many smaller droplets when such pressure drops are simulated, consistent with observations of a similar magnitude pressure drop at DWH (Malone et al., 2018).

The accuracy of modeling tools for the depiction of deep-sea oil spills and the prediction of the ensuing three-dimensional distribution of the hydrocarbons in the ocean relies on precise input parameters. One of the most important influencing quantities to be determined is the rise velocity of the fluid particles originating from the blowout. To meet this challenge, a high-pressure countercurrent flow cell has been designed, constructed, and commissioned at TUHH in collaboration with the company Eurotechnica GmbH, Bargteheide, Germany, to conduct experimental investigations of bubble and droplet rise behavior under simulated deep-sea conditions using substance mixtures of interest. Varying combinations of pressure (up to 15 MPa) and temperature (4°C to 35°C) have been investigated for different systems (i.e., pure and methane-saturated crude oil in artificial seawater) (Malone et al., 2018; Pesch et al., 2017).

Field Studies

State of the Art and Pitfalls

As noted throughout this report, it is challenging to simulate many of the important complexities of a real spill in the laboratory or in a mathematical model. For instance, hydrodynamic processes measured in the lab must be scaled to the field, and this requires various assumptions. As another example, it is extremely challenging to conduct lab studies of oil effects on large animals or those living at high pressure.

Given the limitations of laboratory and model studies, numerous attempts have been made to take measurements during actual spills (what we will refer to as “Spills of Opportunity,” or SOOs), and there have been several dedicated field studies, such as DeepSpill (Johansen et al., 2003) and Tropical Oil Pollution Investigations in Coastal Systems (Renegar et al., 2017b). While field studies capture more of the real-world complexity than do lab or model studies, they have their own set of challenges, which include:

- Legal and Regulatory. A dedicated oil spill field study must receive authorization to purposely release oil into the environment, and this is often a daunting undertaking. In the case of a SOO, scientific activities are also inherently limited by safety concerns, by the requirement that experiments not interfere with the response efforts, and by concern about liability for the relative portion of natural resource damages.

- Logistical Constraints. Conducting an experiment in the open ocean presents many logistical challenges, especially in the case of a SOO where science will always have lower priority than safety and response activities. Access to the casualty site is a frequent constraint (e.g., in a blowout, droplet size should be measured near the wellhead), but the response operators are reluctant to allow such access for fear that it will interfere with the well control operations.

- Uncontrolled Complexity. In the lab, a scientist can reasonably hope to control or monitor all the important variables that could affect the experiment’s outcome. That is not the case in a field experiment and even less so in a SOO. In the latter case, a scientist may not even have baseline data, and by the time scientists are on scene, it may be too late to collect relevant information.

- Size. Even a modest-sized spill can cover huge volumes of ocean with open boundaries often dominated by complex currents, winds, etc. Monitoring all the potentially important variables with sufficient temporal and spatial resolution is costly at best, and frequently impracticable. For instance, doing a simple mass balance of oil in the water column during a blowout is a significant logistical challenge even in a modestly sized dedicated field experiment.

- Cost. The cost of a field study in the open ocean is high. This can limit the duration of the scientific program, the spatial and temporal resolution, and the ability to systematically study the sensitivity of the dependent variables of interest to changes in independent variables. For a SOO, replicated scientific sensitivity studies are unlikely, though sometimes conditions do fortuitously provide for them.

In the case of a SOO, another challenge is to initiate the monitoring in a timely manner. It will usually take days or weeks to get the necessary scientific equipment in place to get meaningful measurements of an unanticipated spill. Key information can be lost during this preparation period. Of course, the time delay can be substantially decreased by pre-positioning monitoring equipment such as outlined by Aurand et al. (2001, 2004), but to the committee’s knowledge, no one has ever executed such a program on a large scale. A major reason for this is that substantial spills are fairly rare occurrences and few funders or investigators are able to maintain standby capacity for long periods of inactivity.

DWH is the most recent example of a SOO in which many scientific observations were taken. However, the limitations of this dataset are numerous and well documented in the preceding chapters of this report. Although the DWH dataset does shed considerable light on many important science questions, it has left a long list of key unanswered questions. This outcome illustrates the difficulty of overcoming many of the limitations outlined above.

Emerging Issues and Advances

Despite all the challenges of conducting a dedicated field experiment or monitoring a SOO, there are numerous reasons why these efforts are worthy of consideration. Some examples identified in this report are:

- Validation of integrated models. As noted in Chapter 5, integrated oil spill models are widely used to calculate the fate of oil and more recently to calculate the effects on biota. The integrated models are composed of sub-models, many of which have been validated to some degree, but the models as a whole remain poorly validated because of a paucity of high-quality, unambiguous datasets from actual spills. This is especially true of the newest generation of integrated models which incorporate effects.

- Validation of droplet size models. As discussed in Chapters 2 and 6, oil droplet size is possibly the single most important factor affecting the fate of oil in a blowout. Much work has been done in improving droplet size models, but the question of how well these models scale up to the field remains largely unanswered. Field-scale measurements are probably impossible to make in the laboratory; yet, with recent advances in key instrumentation (e.g., Davis et al., 2017), such measurements are now feasible in the field.6

- Health impacts on response workers. One cannot purposefully expose humans to most of the substances a response worker encounters during an actual spill. Thus, a SOO is a unique chance to take measurements of real-world concentrations of oil spill pollutants and their effects on workers. As pointed out in Chapter 4, many questions remain concerning the concentration and effects of various pollutants on workers.

- Validation of response decision-making tools. A number of semiquantitative approaches (e.g., Consensus Ecological Risk Assessment, Spill Impact Mitigation Assessment, etc.) have been developed to assist responders in considering trade-off decisions when faced with choices of response options, especially considering the use of surface- or subsea dispersant injection (SSDI)-based dispersant applications. Field-scale experiments can assist in understanding whether such apparent trade-offs are true or false dichotomies by observing system behavior under differing response approaches.

- Systems approach to determine hidden effects. Because an ecosystem is greater than the sum of its parts, simplified lab studies can miss hidden synergies and complexities that occur in the real world. Modern advances in sensors and molecular techniques now make it much more probable that a careful field study can uncover these complexities.

___________________

6 It should be noted that there are many other questions concerning droplet size that can probably be answered more economically in a large-scale lab facility. These questions concern the dependence of droplet size on blowout preventer pressure gradients, degassing of live oil, churn flow, and tip streaming. Indeed, these phenomena could be very difficult to study in the field because of logistical and cost constraints. Hence the call for the development of a large-scale lab facility in the recommendations of Chapter 2.

Validation of Droplet Models

State of the Art

Since the DWH spill, a great deal of work has been done on developing models of oil droplet sizes emanating from a deepwater blowout. Chapter 2 shows that for DWH-like scales the predictions of the three models examined differ by up to a factor of four.

Emerging Issues and Advances

All the existing droplet models reviewed in Chapter 2 use one or more tunable coefficients that must be determined by comparing the model results to observations and backing out the tunable coefficients using some kind of error minimization. This is commonly called the calibration step. Sound statistical practices then dictate that the next step should be to use these coefficients and compare the model against a totally new set of observations, preferably covering a different range of diameters, flow rates, etc., than the observations used to calibrate the coefficients. This is commonly referred to as the “validation” step, and it can be used to estimate the confidence limits on the model droplet size estimates. A close review of the papers describing the models shows that they have often stopped at the calibration step and have generally based their calibration on a small subset of available observations. In Chapter 2, the committee provides a recommendation that existing and future models be more thoroughly calibrated and validated, using the wealth of experimental observations now available, and that validation should be continued as new observations become available.
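The calibration and validation steps described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any of the models reviewed in Chapter 2: it uses a hypothetical one-coefficient power law in the Weber number with a fixed exponent, synthetic observations with made-up noise, and a least-squares fit in log space. All names and numerical values are placeholders.

```python
import math
import random

EXPONENT = -0.6  # assumed fixed Weber-number exponent (illustrative only)


def model(weber: float, coeff: float) -> float:
    """Hypothetical droplet model: relative droplet size = coeff * We**EXPONENT."""
    return coeff * weber ** EXPONENT


def calibrate(observations):
    """Calibration step: least-squares estimate of the tunable coefficient in log space.

    observations is a list of (Weber number, relative droplet size) pairs.
    """
    logs = [math.log(size) - EXPONENT * math.log(we) for we, size in observations]
    return math.exp(sum(logs) / len(logs))


def validation_factor_error(observations, coeff: float) -> float:
    """Validation step: root-mean-square log error, expressed as a multiplicative factor."""
    squared = [(math.log(size) - math.log(model(we, coeff))) ** 2
               for we, size in observations]
    return math.exp(math.sqrt(sum(squared) / len(squared)))


def synthetic(rng, we_low, we_high, count, true_coeff=15.0, noise=0.1):
    """Generate synthetic observations (placeholder data, not measurements)."""
    data = []
    for _ in range(count):
        we = math.exp(rng.uniform(math.log(we_low), math.log(we_high)))
        data.append((we, model(we, true_coeff) * math.exp(rng.gauss(0.0, noise))))
    return data


rng = random.Random(0)
calibration_set = synthetic(rng, 1e2, 1e4, 20)  # used only to fit the coefficient
validation_set = synthetic(rng, 1e4, 1e6, 20)   # independent set, different range

coeff = calibrate(calibration_set)
factor = validation_factor_error(validation_set, coeff)
print(f"calibrated coefficient: {coeff:.2f}")
print(f"validation error factor: {factor:.3f}")
```

The key design point is that the validation set is never used in the fit and covers a different range of conditions, so the resulting error factor gives a rough indication of how far the calibrated model can be trusted when extrapolated.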

Another issue affecting droplet model accuracy is the lack of droplet observations during realistic blowout conditions, especially with SSDI activated. Without such observations it is hard to validate a droplet model and, more importantly, to establish the accuracy of the various models at full field scale. As noted in a Chapter 2 recommendation, there is a need to do further large-scale droplet measurements.

ENVIRONMENTAL AND AQUATIC TOXICITY

Toxicity Testing

State of the Art and Potential Pitfalls

It is important to note that a more extensive discussion of toxicity testing protocols can also be found in Chapter 3. The primary difficulty with the current state of toxicity testing is the improper use of test designs that are appropriate for a single compound for which the dissolved concentration can be separated. Oil is a partially miscible mixture of many components of widely varying solubility and toxicity. The dissolved components need to be measured and aggregated into a proper dose metric.

Toxic units (as discussed in Chapter 3) have been demonstrated to properly weight each component and are a proper dose metric. However, the commonly used arithmetic sum of either total petroleum hydrocarbons (TPHs) or the sum restricted to only the polycyclic aromatic hydrocarbon (PAH) concentrations (Total PAH, or TPAH) is not a proper weighting because (1) TPAH ignores all the other hydrocarbons that are contributing to toxicity, and (2) TPHs and TPAH ignore the orders-of-magnitude differences in the toxicity of the individual components because they weight them equally (Equations 2-4 in Chapter 3). Therefore, neither TPH nor TPAH, even if based on dissolved concentrations, is a proper dose metric.
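The difference between an equally weighted sum and a toxic unit sum can be shown with a small example. This sketch assumes the standard definition of a toxic unit (dissolved concentration divided by an effect concentration such as an LC50, summed over components); it does not reproduce the Chapter 3 equations, and the component names, concentrations, and effect concentrations are hypothetical.

```python
def toxic_units(dissolved_ug_per_l: dict, effect_conc_ug_per_l: dict) -> float:
    """Sum of (dissolved concentration / effect concentration) over all components."""
    return sum(conc / effect_conc_ug_per_l[name]
               for name, conc in dissolved_ug_per_l.items())


# Hypothetical effect concentrations (ug/L): component_b is 100 times more toxic.
effect_conc = {"component_a": 1000.0, "component_b": 10.0}

# Two hypothetical samples with the same arithmetic sum (100 ug/L).
sample_1 = {"component_a": 90.0, "component_b": 10.0}
sample_2 = {"component_a": 10.0, "component_b": 90.0}

for label, sample in (("sample 1", sample_1), ("sample 2", sample_2)):
    total = sum(sample.values())
    tu = toxic_units(sample, effect_conc)
    print(f"{label}: arithmetic sum = {total:.0f} ug/L, toxic units = {tu:.2f}")
```

Both samples have the same arithmetic sum, yet their toxic unit totals differ by nearly an order of magnitude, which is the weighting problem that the equally weighted TPH and TPAH sums cannot capture.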

The usual water-accommodated fraction (WAF) preparation methods leave a residual of undissolved microdroplets as well as the dissolved concentrations. These microdroplets greatly complicate the analysis of the toxicity test results for two key reasons. First, measuring the concentration in the WAF includes both dissolved and microdroplet components, which substantially overestimates the dissolved concentration of the less soluble components. Second, when the WAF is diluted, it is assumed that concentrations decrease in proportion to the dilution. While this is true for the total (dissolved + microdroplet) concentration, it is not true for the dissolved fractions, because concentrations are elevated by the dissolution of the components in the microdroplets. The elevation can exceed orders of magnitude in concentration (see Chapter 3, Figure 3.14a). Because this effect depends on the concentration of microdroplets in the WAF, it covaries with other test variables (e.g., presence versus absence of a dispersant). If the microdroplet effect is not properly quantified, the results of the toxicity test cannot be unambiguously assigned to the effect being investigated (e.g., whether the dispersant increases toxicity).
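The dilution effect can be illustrated with a simple equilibrium partitioning sketch. The assumptions here are ours and are deliberately simplified: a single component at equilibrium between water and microdroplets, a constant oil-water partition coefficient, and a droplet volume fraction that scales directly with dilution. The function names and all numerical values are hypothetical.

```python
def dissolved_concentration(total_ug_per_l: float,
                            partition_coeff: float,
                            droplet_volume_fraction: float) -> float:
    """Dissolved concentration from total = dissolved * (1 + K * phi).

    K is an assumed oil-water partition coefficient and phi is the volume
    of microdroplet oil per volume of water (both illustrative).
    """
    return total_ug_per_l / (1.0 + partition_coeff * droplet_volume_fraction)


def elevation_factor(dilution: float,
                     partition_coeff: float,
                     stock_droplet_fraction: float) -> float:
    """Ratio of the actual dissolved concentration to the value expected
    from simple proportional dilution of the stock dissolved concentration."""
    stock = dissolved_concentration(1.0, partition_coeff, stock_droplet_fraction)
    diluted = dissolved_concentration(1.0 / dilution, partition_coeff,
                                      stock_droplet_fraction / dilution)
    return diluted / (stock / dilution)


K = 1.0e6        # hypothetical partition coefficient for a sparingly soluble component
PHI_0 = 1.0e-5   # hypothetical microdroplet volume fraction in the stock WAF

for dilution in (1, 10, 100):
    print(f"dilution {dilution:>3}x: dissolved concentration is "
          f"{elevation_factor(dilution, K, PHI_0):.1f} times the proportional estimate")
```

Under these assumptions the total concentration falls in proportion to the dilution, but the dissolved concentration of a sparingly soluble component falls much more slowly because the microdroplets release it into the water as the mixture is diluted.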

Protocols for the Preparation of WAFs

Prior to the DWH spill, the Chemical Response to Oil Spills: Ecological Effects Research Forum (CROSERF) protocols (Singer et al., 2001) had been in place to describe the best practices for the preparation of WAFs and chemically enhanced WAFs (CEWAFs) for use in toxicity testing. As described by Singer et al. (2001), “test media must be reproducible over time and between laboratories with standardized analytical methods to characterize the oil and quantify its components.” When mixing the dilution water and oil, the duration and energy must be sufficient to ensure equilibration of the dissolved mixture constituents in the water. The intensity of the mixing energy also influences the composition of the WAF; therefore, WAF/CEWAF preparation protocols need to generate solutions that are reproducible, comparable to earlier data, and sufficiently relevant to field conditions to be usable in risk assessments and other oil spill decision-making tasks.

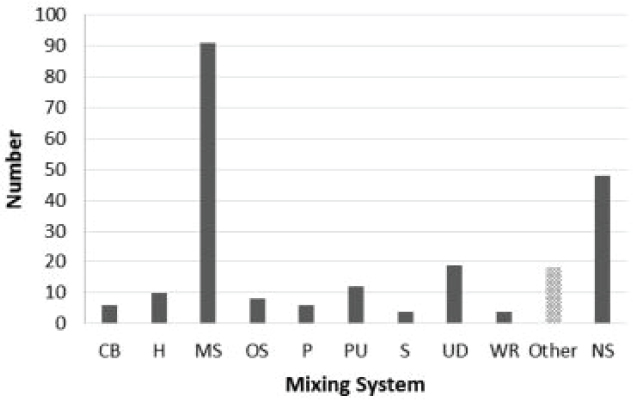

Since 2010, a variety of new methods were developed that resulted in a myriad of different media preparation protocols. Adams et al. (2017) reviewed the oil mixing system (the equipment and/or method used to prepare test solutions) in 144 published toxicity tests and found a total of 226 mixing methods to generate solutions. The most common mixing instrument or method was the magnetic stirrer, and CROSERF was the most common method stated. However, a variety of other mixing methods, including commercial blending, hand mixing, orbital shaking, propeller mixing, pump mixing, sonication, upwelling dilution, and water recirculation, were also identified, and there were studies where the mixing method was not specified. These are depicted in Figure 7.7.

In addition to the type of mixing methods that have been employed in recent years, there have been numerous modifications to the CROSERF method to create new types of WAFs. In a 2013 paper (Incardona et al., 2013), the authors state that the high-energy WAF (HEWAF) standardized protocol was intended to produce WAFs that more closely emulate the dispersion of oil droplets under high pressure (i.e., the DWH spill). Studies conducted in 2017 to further examine HEWAF were done by making serial dilutions from a stock solution; however, only the stock solution was analyzed via fluorescence in order to reduce analytical testing (Forth et al., 2017a).

Another research team suggested that the HEWAF method required further standardization (Sandoval et al., 2017). This study examined three key factors: the presence or absence of oil microdroplets; dispersion stability over the time interval used in toxicity testing; and the chemical composition (PAHs) in relation to potential environmental relevance. This team determined that WAFs and CEWAFs prepared by the CROSERF method had greater stability and were more representative of both dissolved components (WAFs) and oil-water mixtures containing microdroplets (CEWAFs).

Yet a third team (Stubblefield and De Jourdan, 2017) characterized test solutions of even more types of WAFs, including low-energy, no-energy, medium-energy, and intermediate-energy WAFs in addition to HEWAFs. Given the large differences in composition among the various WAFs, the authors concluded that it is imperative to accurately characterize and quantify exposures both in the laboratory and in the field to assess potential environmental impacts. The authors also concluded that adequate chemical analysis is required to generate data for empirically based toxicity models (e.g., the target lipid model and PETROTOX; see Chapter 3 for a detailed discussion).

Emerging Issues and Advances

A large quantity of experimental toxicity data is available that could be analyzed to continue to investigate the question of whether exposure media containing chemically dispersed oil are more toxic than media containing physically dispersed oil. Most of these data are from variable dilution preparation methods. The analysis would need to include a quantitative estimate of the microdroplet concentration at each dilution, estimates of the dissolved concentrations, and the use of toxic units as the dose metric. Methods are available to do this analysis. Chapter 3 provides a more detailed discussion of the problems that can occur when using different media preparation methods, and the reader is encouraged to review that information.

One promising new protocol for oil dosing, known as passive dosing, could potentially eliminate the confusion caused by use of varying WAF methods (see Chapter 3 for details). The added advantage of this approach is that it minimizes the interference introduced in toxicity data by oil microdroplets, thus generating exposures based on truly dissolved components that partition through permeable membranes. These soluble fractions are known to be the primary drivers of hydrocarbon toxicity.

HUMAN HEALTH

Consideration of Human Health in Oil Spills

State of the Art

Integration of human and ecological health continues to be a major topic of interest among scientists and policy makers, including efforts by the National Research Council particularly aimed at coastal areas (IOM, 2014; NRC, 2013) and by federal agencies (Sandifer et al., 2015). These reviews focus primarily on understanding the role of human activities in degrading coastal and oceanic ecosystems as well as the value of a healthy ocean and coast in supporting human health and well-being.

Oil spills and the response to oil spills present a slightly different challenge, but they nevertheless require a similar systems approach that integrates across multiple disciplines.

Existing Net Environmental Benefit Analysis tools like the Spill Impact Mitigation Assessment or the Comparative Risk Assessment (see Chapter 5) do not consistently include human health. Some have argued that it is unnecessary because human health considerations override all others throughout an oil spill response. There is extensive literature about the challenges of integrating human health considerations in standard Environmental Impact Statements (EISs). These include debates about whether human health is best incorporated within an EIS or should be separate in the form of a Health Impact Analysis or similar instrument. Deciding how best to incorporate health into the systems approach, which is the basis for effective decision making in response to oil spills, has begun and merits further consideration.

Potential Pitfalls and Methodological Challenges

Study of the potential impact of environmental factors on human health presents a number of methodological challenges (see Chapter 4) that are at least partially distinguishable from studies of ecosystem effects. These include:

- an inability to perform a fully controlled epidemiology study for environmental risks;

- implications of health effects in individual humans;

- implications of the wide variety in human vulnerability; and

- ethical issues particularly germane to human studies.

Epidemiology Studies for Environmental Risks

The gold standard for epidemiological methodology, the double-blind randomized controlled trial, is not possible for usual environmental epidemiology. A randomized controlled trial of a potential therapeutic agent for a specific disease usually consists of randomly assigning half of a group of volunteers with the disease to the agent and the other half to a placebo. The participants are not aware of which group they are in, nor is the medical team. Endpoints indicative of therapeutic efficacy and toxicity are then compared between the two groups. This controlled experimental design is not possible for environmental epidemiology, for which most studies take advantage of uncontrolled differences in exposures. Whether the difference is geographical or temporal, numerous potential confounding factors limit interpretation of any observed association between cause and effect. Accordingly, the acceptability of a cause and effect relationship is often determined by having multiple studies that replicate the original finding, preferably performed by different investigators using different methodology in different populations with different sources of exposure to the agent of concern. Determining the potential human health impact of dispersant use during an oil spill, which is not predictable as to place and time, is very challenging. Also crucial to acceptability of an observed association is the biological plausibility of the association, for which toxicological studies in laboratory animals or in vitro are central. The criteria involved in considering causality are usually attributed to Bradford Hill (Hill, 1965; Schünemann et al., 2011).

Implications of Health Effects in Individual Humans

Consideration of trade-offs is central to response decisions (see Chapter 5). In general, the health of individual humans carries greater weight than does the health of individual members of an ecosystem, although not necessarily greater weight than the health of an entire ecosystem, which arguably itself has benefits to human well-being. The oil spill response process clearly guides the Response Coordinator to avoid obvious risk of adverse human health consequences, but the trade-off is less clear when it is between ecosystem effects and a lower level of human health risk to workers and to community members, particularly among those who suffer from preexisting conditions that increase their vulnerability.

Issues related to the wide variability in human vulnerability also include the difficulty in extrapolation of epidemiological and toxicological findings to those at highest risk. Vulnerable populations of particular societal concern include pregnant women and young children. Workers who enlist in the response effort may have preexisting health conditions that are not adequately determined prior to joining this workforce. While toxicological studies are valuable in comparing the relative toxicity among dispersants, extrapolating from animal or in vitro studies to humans, and particularly to vulnerable populations, presents challenges.

Ethical Issues Germane to Human Studies

Before beginning a study of workers and community members potentially affected by an oil spill, approval is required from an Institutional Review Board (IRB), which has the goal of protecting the welfare and privacy of all human subjects. Furthermore, the study must comply with the Health Insurance Portability and Accountability Act privacy rules. IRB approvals require significant planning. For an oil spill this is complicated by the fact that often the most effective approach requires collaboration among multiple academic and governmental institutions, each one having its own IRB that must approve the protocol in advance. After the difficulties encountered for the DWH studies, the National Institute of Environmental Health Sciences (NIEHS) and the Centers for Disease Control and Prevention have worked on developing off-the-shelf documents that can speedily be adapted for the next oil spill.7

___________________

7 See https://disasterinfo.nlm.nih.gov/content/files/RAPIDD%20Protocol_v8.0_2015-07-16_508_CLEAN.pdf.

Emerging Issues and Challenges to Exposure Assessments for Dispersants

Delays due to the need for IRB and other clearances specific to human studies are among the challenges to assessing human exposure resulting from an oil spill as compared to studies of nonhuman biota. Biological markers of exposure that can be detected in human blood or urine can be particularly useful in determining the extent of exposure to crude oil components, including some for PAHs that have been recently developed in NIEHS-supported studies (Huang et al., 2014) and an analytical method for DOSS that conceivably could be useful in evaluation of human-derived biospecimens (El Said et al., 2010; Flurer et al., 2010). As a rule, however, biomarkers relevant to oil spill response persist in the human body for only short time periods. Accordingly, a significant delay in initiating studies, as happened following the DWH spill, precludes dependence on biomarkers.
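The effect of a delayed study start on biomarker usefulness can be illustrated with a minimal sketch. It assumes simple first-order elimination, which is a common simplification and not something this chapter specifies. The function names, the half-life, and the detection limit are hypothetical and chosen only for illustration.

```python
import math


def fraction_remaining(days_elapsed: float, half_life_days: float) -> float:
    """Fraction of a biomarker remaining after first-order elimination.

    Assumes a single elimination half-life (a simplification).
    """
    if half_life_days <= 0:
        raise ValueError("half_life_days must be positive")
    return 0.5 ** (days_elapsed / half_life_days)


def days_until_undetectable(initial_level: float,
                            detection_limit: float,
                            half_life_days: float) -> float:
    """Days until the level decays to the analytical detection limit."""
    if half_life_days <= 0 or detection_limit <= 0:
        raise ValueError("half_life_days and detection_limit must be positive")
    if initial_level <= detection_limit:
        return 0.0
    return half_life_days * math.log2(initial_level / detection_limit)


if __name__ == "__main__":
    # Hypothetical values: a 1-day half-life and a study that starts 30 days late.
    print(fraction_remaining(days_elapsed=30, half_life_days=1.0))
    # Hypothetical initial level 8 times the detection limit.
    print(days_until_undetectable(8.0, 1.0, half_life_days=1.0))
```

Under these assumed values the biomarker falls to the detection limit within a few days, so sampling that begins weeks later would find nothing to measure.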

Delays in starting the studies also complicate the ability of workers to clearly remember whether they were exposed to dispersants when filling out a questionnaire at a later date. The accuracy of questionnaire responses is also complicated by a number of other factors. These include the fact that the response to potential exposure to dispersants does not substantially differ from that to crude oil and its derivatives, being dependent on similar good industrial hygiene practices. This means that in the relatively hurried nature of any spill response, keeping track of whether dispersant was potentially present may not be sufficiently important to inform the worker.

Questionnaire studies are also notoriously susceptible to what is known as recall or response bias. In situations of uncertainty, people are more likely to respond positively to questions about potential exposure, particularly when the issue, such as dispersant use, has been publicized as one of concern. It is unclear whether the possibility of future litigation leading to funding for those exposed may contribute to recall bias in a situation such as the DWH spill response.
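A small worked example can show how recall bias distorts an association. The sketch below assumes that workers who report symptoms over-report exposure more often than workers who do not, which is one common form of differential recall. All counts and reporting rates are hypothetical, and the function names are introduced here for illustration only.

```python
def odds_ratio(exposed_cases: float, unexposed_cases: float,
               exposed_controls: float, unexposed_controls: float) -> float:
    """Odds ratio from a 2x2 table of exposure by outcome."""
    return (exposed_cases * unexposed_controls) / (unexposed_cases * exposed_controls)


def reported_exposed(truly_exposed: float, truly_unexposed: float,
                     sensitivity: float, false_positive_rate: float) -> float:
    """Expected number of people who report exposure on a questionnaire."""
    return truly_exposed * sensitivity + truly_unexposed * false_positive_rate


if __name__ == "__main__":
    # Hypothetical groups of 100 people each, with identical true exposure,
    # so the true odds ratio is 1 (no association).
    true_exposed, true_unexposed = 30, 70
    print(odds_ratio(true_exposed, true_unexposed, true_exposed, true_unexposed))

    # Assumption: 20% of unexposed symptomatic workers wrongly recall exposure,
    # while asymptomatic workers report accurately.
    cases_yes = reported_exposed(true_exposed, true_unexposed, 1.0, 0.2)
    controls_yes = reported_exposed(true_exposed, true_unexposed, 1.0, 0.0)
    observed = odds_ratio(cases_yes, 100 - cases_yes, controls_yes, 100 - controls_yes)
    print(round(observed, 2))  # greater than 1 despite no true association
```

With these assumed rates, differential recall alone produces an apparent association where none exists.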

Development of badges or other monitors of dispersant exposure may be helpful for future exposure studies of workers or of community members.

Indirect Implications of Dispersant Use on Worker Health and Safety and on Community Health and Resilience: Temporal Factors

A major but indirect health impact of an oil spill is on the psychosocial health and resilience of communities suffering from concerns about their health; about the shorter-term economic, cultural, and environmental impacts; and about the possible long-term implications of the existence of an offshore oil industry. It is a reasonable assumption that the duration of both the oil spill and the resultant response activity is directly related to the extent of adverse psychosocial effects on individuals and communities. If, in fact, dispersant use speeds up the recovery process, presumably it would mitigate longer-term psychosocial impacts and improve community confidence in their longer-term prospects. Similarly, the risk of worker injury and illness presumably is related to the duration of response activities.

Implication of Dispersant Use on the Toxicity of Crude Oil Components: Benzene and PAHs

The carcinogenic components of crude oil are benzene and PAHs. Benzene is relatively volatile such that exposure of workers and, less likely, of community members would occur via inhalation. PAHs generally remain in the water. As PAHs are of concern because of their uptake into seafood eaten by humans, closures of fisheries, with attendant psychosocial, economic, and cultural impacts, are particularly problematic. Dispersants change the distribution of crude oil components, enhancing the dissolution of both PAHs and benzene. Greater dissolution of benzene in the water column could reduce the health risk to responders exposed to the volatile oil components in the air. Conversely, increased PAHs in the water column could raise the level of PAHs in seafood species, thereby affecting fishery closures and seafood consumption. As an additional complication, dissolution may differ depending on the avenue of delivery of the dispersant (e.g., subsea versus surface) or other local factors. While there is some inferential evidence that benzene is more likely to remain in water rather than be volatilized with subsea dispersant application, the committee was unable to find equivalent evidence of a change in PAH levels in seafood as a result of dispersant use.

TOOLS FOR OIL SPILL RESPONSE DECISION MAKING

Risk Assessment Tools

State of the Art

The Distinction Between Operational Versus Environmental Monitoring

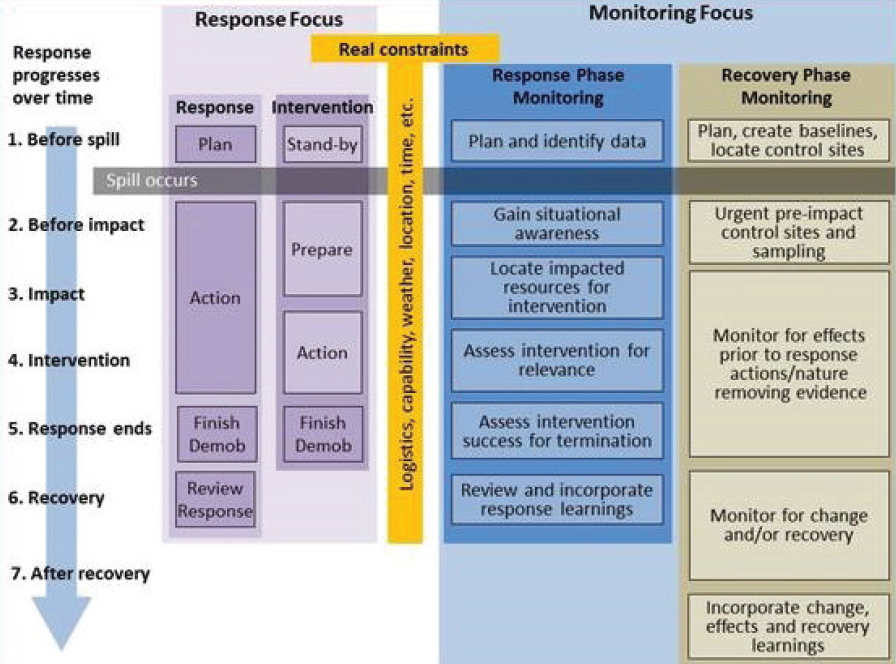

Monitoring during an oil spill response is typically divided into two different categories:

- Operational Monitoring (or Type I monitoring), which collects near real-time data that are directly relevant to ongoing response operations or are needed to evaluate ongoing response strategies; and

- Environmental Monitoring (or Type II monitoring), which may include short- and long-term damage assessments, surveying recovery, and other purely scientific studies during and after an oil spill.

The Australian Maritime Safety Authority (AMSA) Spill Monitoring Handbook (AMSA, 2003) provided a comprehensive overview on all aspects of spill monitoring—both operational and environmental. AMSA released an updated Oil Spill Monitoring Handbook in 2016 (AMSA, 2016; see Figure 7.8). In 2013, both the API (2013) and the National Response Team (NRT, 2013) released guidelines focused on dispersant and dispersed oil monitoring. The API plan was specific to SSDI, while the NRT plan addressed both surface and SSDI.

In 2014, a multi-organization team in the United Kingdom released a guide for monitoring subsea oil releases and dispersant releases in UK waters (Law et al., 2014), and a review of new and emerging monitoring technologies suggests that future operational monitoring may be greatly assisted by use of unmanned, remotely operated, and autonomous surveillance equipment.

The underlying theme in these spill monitoring documents is that a good operational monitoring plan should incorporate the elements shown in Figure 7.8.

Shipboard Dispersant Operational Monitoring Protocols

During the DWH oil spill, a sizable number of assets (ships, equipment, and personnel) were deployed for operational monitoring, environmental effects monitoring, and damage assessment monitoring. While many of the latter studies (effects and damage assessment) were initiated later in the response (weeks or months after the spill response had started), operational monitoring was initiated within days of spill onset. Operational monitoring is intended to directly inform operational decision making during the response. A dispersant operational monitoring plan has several key elements. The plan should be:

- rapidly deployable;

- flexible and allowing for “phased deployment” based on needs and operational timelines;

- scientifically based;

- robust, using existing, proven technologies; and

- clear as to “action thresholds” for continued dispersant response operations.

Potential Pitfalls

In 2013, two sets of guidelines were published for dispersant operational monitoring. The API guidelines, Industry Recommended Subsea Dispersant Monitoring Plan (API, 2013), were specific to subsea dispersant injection. The NRT guidelines, Environmental Monitoring for Atypical Dispersant Operations, included guidance for both subsea dispersant injection and prolonged surface dispersant application (NRT, 2013). Both documents are intended to support development of operational, incident-specific monitoring plans, but they have often been confused with the USCG Special Monitoring of Applied Response Technologies protocols that are aimed at monitoring surface dispersant application at smaller, ephemeral oil spills. Having three different dispersant monitoring documents, authored by different organizations and with varying procedures for data collection and reporting, can be problematic.

While both the API plan and the NRT plan focus on operational monitoring, some data collected under the NRT plan could be made available for damage assessment purposes. Merging operational and NRDA data needs could create conflicts in priorities for sample collection, possibly causing a delay in the reporting of operational effectiveness back to the Unified Area Command if both types of data are being collected from a single research vessel.

Despite these challenges, both documents provide a flexible framework for incident-specific monitoring plan development and recommend the type of data that should be collected. However, these plans are not a replacement for detailed shipboard Field Test Plans, which should be developed prior to an operational monitoring research cruise. With limited time to deploy this type of field monitoring in the early hours of a spill response involving dispersants, it is essential that the operational monitoring protocols are standardized to ensure that the three main objectives of dispersant operational monitoring are achieved:

Objective 1: Confirmation of dispersant effectiveness under present spill conditions (for example, meteorological and physical oceanographic conditions, oil type, weathering state, particulate matter, and marine snow production).

Objective 2: Initial field screening characterization of the dispersed oil concentrations at depths within the water column. These data can be very useful in calculating toxic units and therefore characterizing toxicity (as explained in Chapter 3).

Objective 3: Detailed laboratory chemical characterization of relevant water samples, which allows decision makers to refine estimates of potential toxicity or other biological effects that may have occurred.
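As an illustration of how the concentration data from Objective 2 might feed a toxic unit calculation, the following minimal sketch uses the common additive form, in which each component's measured concentration is divided by its effect concentration and the quotients are summed. Chapter 3 remains the authority for the formulation used in this report. The function name, component names, and all numerical values below are hypothetical.

```python
def toxic_units(concentrations: dict[str, float],
                effect_concentrations: dict[str, float]) -> float:
    """Sum of concentration / effect concentration over all measured components.

    Both dictionaries must use the same units (for example, micrograms per liter).
    A total at or above 1 is conventionally read as reaching the effect level.
    """
    total = 0.0
    for component, concentration in concentrations.items():
        effect_level = effect_concentrations[component]
        if effect_level <= 0:
            raise ValueError(f"effect concentration for {component} must be positive")
        total += concentration / effect_level
    return total


if __name__ == "__main__":
    # Hypothetical field screening results and effect concentrations.
    measured = {"component_a": 1.0, "component_b": 2.0}
    effect = {"component_a": 2.0, "component_b": 4.0}
    print(toxic_units(measured, effect))  # 0.5 + 0.5 = 1.0
```

Because the quotients are additive, several components that are each below their own effect level can still sum to a total of concern.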

Proper field protocols are essential to ensure:

- safe deployment of the research team and equipment;

- proper use of field equipment;

- consistent field sample collection, preparation and analysis; and

- accurate, timely reporting across multiple research vessels back to the decision makers within the Unified Area Command.

Capturing these field protocols in a comprehensive shipboard research plan that is available for deployment prior to an incident occurring is essential. Important information for inclusion in the plan includes:

- minimum required training for all shipboard research personnel;

- shipboard cruise hazards identification;

- safety data sheets for all chemical components used on board;

- Occupational Safety and Health Administration monitoring requirements for inhalation of volatile organic compounds (e.g., real-time BTEX detection);

- required field collection permits;

- proper manufacturer instructions for operation of all scientific equipment;

- sample handling, labeling, and chain of custody procedures;

- weather contingencies; and

- prioritization of all sampling because there will be times when not all parameters can be sampled for various reasons. The team needs to know what samples and parameters to give the highest priority to in order to reach the overarching goals of the sampling mission.
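The sampling prioritization described in the last item could be recorded in a form that the shipboard team can apply mechanically when time on station is short. The sketch below is one possible way to do this, not a prescribed protocol. The class, the function, the task names, the priority ranks, and the time estimates are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SamplingTask:
    name: str
    priority: int    # 1 is the highest priority (hypothetical ranking)
    minutes: float   # hypothetical time needed on station


def select_tasks(tasks: list[SamplingTask], minutes_available: float) -> list[SamplingTask]:
    """Pick tasks in priority order, skipping any that no longer fit the time left."""
    selected = []
    remaining = minutes_available
    for task in sorted(tasks, key=lambda t: t.priority):
        if task.minutes <= remaining:
            selected.append(task)
            remaining -= task.minutes
    return selected


if __name__ == "__main__":
    plan = [
        SamplingTask("dispersant effectiveness check", priority=1, minutes=30),
        SamplingTask("water samples for laboratory chemistry", priority=2, minutes=45),
        SamplingTask("field screening of dispersed oil concentration", priority=3, minutes=20),
    ]
    for task in select_tasks(plan, minutes_available=60):
        print(task.name)
```

In this sketch a lower-priority task is still carried out if a higher-priority task is too long for the remaining time. A team could instead stop at the first task that does not fit, and that choice belongs in the written plan.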

Emerging Issues and Advances

Rapid Field Screening for Hydrocarbons