Time as a Primary System Metric

DAN C. KRUPKA

Time, the interval from the start of manufacturing activity to its completion, is the single most useful and powerful metric that any firm can employ to measure its manufacturing operation. This paper argues that time is a more useful and universal metric than cost and quality because it can be used to drive improvements in both.¹ If we were offered a second choice of metric, we would add the variance of that interval. The traditional view is that cost, quality, and time are the important elements for assessing manufacturing performance. Here it is argued that properly managing time will ensure that the other two metrics fall in line.

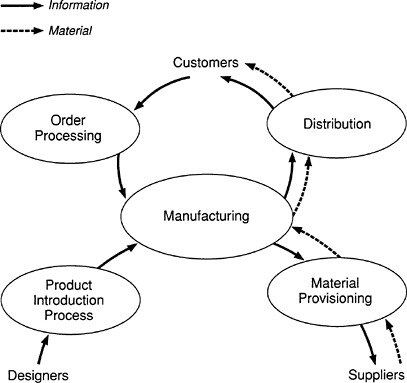

To begin, it is necessary to recognize that manufacturing operations —the activities that take place within the walls of a factory—can no longer be treated as the system to be optimized. Instead, in considering a manufacturer, we must think of several systems, of which manufacturing is but one (see Figure 1 ). Customers' orders for products are conveyed by an ordering system to the manufacturing system, whose output then flows through a distribution system to the customer. Rapid and flexible response requires that materials and parts flow quickly into manufacturing; that requires a

|

1 |

In making this suggestion, I am echoing George Stalk, Jr., and Thomas M. Hout who advance this argument in their book, Competing Against Time. Many of the ideas presented in their book become even more convincing when considered in light of the concepts discussed in the section on lessons from queuing models. |

FIGURE 1 Targets for interval reduction. Several systems are needed to define a manufacturer besides the activities that take place within the factory walls.

short and predictable interval for the material provisioning system. In addition to ensuring high performance for these systems, a successful firm must be capable of rapidly translating its designs into manufactured products. Hence, we need a well-crafted and rapid product introduction system.

All of these systems bear great similarity to one another. Each consists of a sequence of processes and prescriptions for defining how entities (materials, products, or information) are to flow from one process to another. Currently, manufacturing processes on the factory floor are better understood because they have a long history of analysis and design. As many are finding, however, the other systems considered here can be analyzed and improved by employing methods analogous to those applied on the factory floor.

To manage these systems as part of an integrated whole requires a metric that ties them together operationally. Time is that metric. In contrast, there is no common definition of quality throughout the system. On the factory floor, we speak of defect levels, yields, or rework rates. Although analogous terms may be applied to processes in the other systems, it is conceptually difficult to compare, for example, the severity of a defect in a customer's order with a defect on a silicon wafer. Not so with time.

What about cost? The difficulty with using cost to measure the performance of the system is that it is a lagging metric. Books are closed monthly, not daily, and the calculation of costs always requires arbitrary allocations of expenses. Costs, as traditionally calculated, are also volume dependent. Reducing time (and its variance, as will be discussed later) is always a benefit; cutting costs is not. Consider, for example, the consequences of reducing staffing in a pilot production facility and thereby unwittingly creating a bottleneck in the product introduction process. A significant delay here may result in dramatically reduced profits over the life of the product (Reinertsen, 1983). Thus, it is difficult to make meaningful comparisons between the capabilities of manufacturing systems using cost as a metric.

The foregoing arguments may sound academic. They are not. Competing against time is fast becoming today's business strategy. According to Stalk and Hout (1990), compressing time has been observed to lead to the following sequence of changes: productivity rises; prices can be increased as responsiveness to customer orders is improved; risks are lowered because reliance on volatile forecasts is reduced; and market share increases as a result of improved responsiveness. In light of these very practical arguments, the measurement of time throughout an enterprise is critically important.

TIME AS A DIAGNOSTIC TOOL AND A DRIVER OF QUALITY AND COST

Not only is time an excellent metric for the overall system (Manufacturing with a big M) and its subsystems (such as factory floor operations); it can be used to guide activities to improve performance. Moreover, reducing the interval, be it in product introduction or in manufacturing, will improve quality and cost. To use time as a diagnostic tool to improve a system, it is invariably profitable to begin by creating a diagram of the processes that make up that system. For manufacturing processes that have been designed by engineers, such diagrams are generally available. Such is not the case for product introduction or other nonmanufacturing systems. And yet these systems are often more complex than those encountered on the factory floor. Analysis of such diagrams often reveals the presence of steps that add no value or that consist of re-creating, at the risk of introducing errors, information created elsewhere. Eliminating these steps will shorten the system's interval, reduce costs, and often improve quality by reducing opportunities for the introduction of errors.

The diagram will also reveal opportunities for concurrent execution of activities. Introducing parallelism into a system that had consisted of serial process steps often carries benefits that extend well beyond the time saved.

An excellent example is product introduction as practiced by Japanese automobile manufacturers. Their cycles are considerably shorter than those of their U.S.-based and European competitors (Clark and Fujimoto, 1989a) because they have replaced a phased approach in which the activities of manufacturing engineers follow those of the product designers by an overlapping approach: Cross-functional teams are established early in the process, allowing preliminary information created by the designers to be assessed by manufacturing engineers. As a result, the overall product introduction interval is shortened, leading to lower development costs and a more manufacturable product possessing higher quality. A further payoff is that firms with the ability to introduce products rapidly can be much more responsive to market trends.
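The effect of overlapping can be made concrete with a small calculation. The following is a minimal sketch, not a description of any manufacturer's actual process: the step names and durations (in weeks) are hypothetical, and the overall interval is taken to be the longest chain of dependent steps.

```python
from functools import lru_cache


def process_interval(durations, predecessors):
    """Return the overall interval: the longest path through the step graph.

    durations:    {step: time needed for the step}
    predecessors: {step: steps that must finish before it can start}
    """

    @lru_cache(maxsize=None)
    def finish(step):
        # A step starts when its slowest predecessor has finished.
        start = max((finish(p) for p in predecessors.get(step, ())), default=0)
        return start + durations[step]

    return max(finish(step) for step in durations)


# Hypothetical durations in weeks (illustrative only, not from the source).
durations = {"preliminary design": 4, "detailed design": 6,
             "process engineering": 6, "pilot build": 4}

# Phased approach: every step waits for the one before it.
phased = {"detailed design": ["preliminary design"],
          "process engineering": ["detailed design"],
          "pilot build": ["process engineering"]}

# Overlapping approach: process engineering starts from preliminary information.
overlapping = {"detailed design": ["preliminary design"],
               "process engineering": ["preliminary design"],
               "pilot build": ["detailed design", "process engineering"]}

phased_weeks = process_interval(durations, phased)            # 20
overlapping_weeks = process_interval(durations, overlapping)  # 14
print(phased_weeks, overlapping_weeks)
```

Under these assumed numbers the same work is done in both cases; only the dependency structure changes, and the interval falls from 20 weeks to 14.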

LESSONS FROM SIMPLE QUEUEING MODELS

Despite the inability of many firms to create systems characterized by the virtual elimination of non-value-adding steps and by the introduction of much concurrency, the prescriptions outlined in the previous section are straightforward. Even if a firm had made all the suggested improvements, however, there would remain opportunities for reducing its interval. Insights from elementary queueing theory show how relentless concentration on the time metric leads to additional improvements in performance.

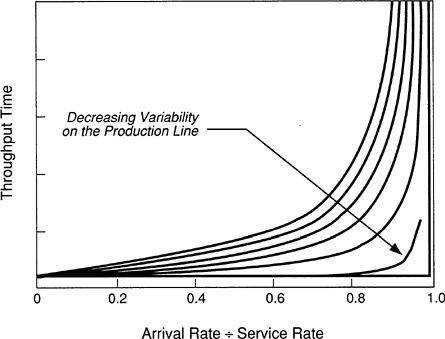

Figure 2 shows the average throughput time of a single-server queue (such as that associated with a single machine on the factory floor) as a function of the system's capacity, measured as the ratio of the arrival rate of entities to the rate at which the resource (or machine) is able to perform its function (Whitt, 1983). (Purists would prefer more careful definitions. My intent, however, is to sacrifice technical rigor for simplicity of exposition.) The important point is that, for a given capacity utilization, the throughput time depends on the degree of variability in the system. In fact, if all variability were removed, the throughput time would be equal to the time required to perform the designated task—the so-called service time—until the arrival rate exceeded the service rate. At that point, a queue would form and grow without limit.

It is important to note that the ordinate on Figure 2 is the average throughput time. The throughput time fluctuates and, as one would expect, the lower the variability in the system, the lower the variance of the throughput time. Consequently, to assess the performance of a system, we should measure not only its throughput time but also its variance. 2 How does the variability arise? We distinguish two classes of sources: those affecting

|

2 |

This point is also made by Hopp et al. (1990). Their paper suggests incorrectly (p. 80), however, that queue time can be directly addressed and is unaffected by setup time. |

FIGURE 2 General results from queueing theory. Here the average throughput time for a single server queue illustrates the effect of variability in the system.

the arrival rate and those affecting the service rate. On the factory floor the arrival rate at a given stage may fluctuate for any number of reasons—for example, arrivals from upstream stages that are not in phase, problems encountered at the preceding stage, equipment failure, and random batching. The service rate is affected by problems experienced with the equipment, variable setup times, lack of clear instructions, or lack of operator skills.

It is important to observe that at levels of capacity utilization that make economic sense, say in the neighborhood of 0.8, small decreases in the service rate (which effectively shift the system to a higher level of capacity utilization) lead to a large increase in throughput time. In addition, an increase in variability in either the arrival rate or the service rate leads to a large increase in throughput time.
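These sensitivities can be illustrated numerically. The sketch below uses Kingman's approximation for a single-server queue, which is an assumption made here for illustration and not necessarily the formula behind Figure 2. The parameter names `ca2` and `cs2` (squared coefficients of variation of interarrival and service times) and all rates are hypothetical.

```python
import math


def throughput_time(arrival_rate, service_rate, ca2=1.0, cs2=1.0):
    """Approximate mean throughput time (queue wait plus service).

    Kingman's approximation for a single-server queue:
        wait ~= rho / (1 - rho) * (ca2 + cs2) / 2 * service_time
    ca2 and cs2 are squared coefficients of variation of the interarrival
    and service times; both equal 0 when there is no variability.
    """
    rho = arrival_rate / service_rate  # capacity utilization
    if rho >= 1.0:
        return math.inf  # the queue grows without limit
    service_time = 1.0 / service_rate
    wait = rho / (1.0 - rho) * (ca2 + cs2) / 2.0 * service_time
    return service_time + wait


if __name__ == "__main__":
    # Utilization 0.8 with moderate variability (illustrative values).
    print(f"{throughput_time(0.8, 1.0):.1f}")            # 5.0
    # A 10 percent drop in service rate pushes utilization to about 0.89.
    print(f"{throughput_time(0.8, 0.9):.1f}")            # 10.0
    # Same utilization, no variability: only the service time remains.
    print(f"{throughput_time(0.8, 1.0, 0.0, 0.0):.1f}")  # 1.0
```

With these assumed values, a 10 percent loss of service rate doubles the throughput time, while removing all variability at the same utilization reduces it to the bare service time.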

Although the curves shown in Figure 2 are calculated for a single-server queue, analogous results are obtained for a network of queues, such as a manufacturing facility or the ordering process for a complex product. In general, therefore, the prescriptions for reducing throughput time (or manufacturing interval) are the same: reduce variability in the system and strive to increase the service rate.

How do these prescriptions translate into quality and cost improvements? The answers are straightforward, at least in principle. Reducing the interval by reducing variability requires that the sources of this variability be systematically eliminated. This requires reducing rework rates and machine downtimes, smoothing the flow in a manufacturing line by reducing batch sizes and by appropriate sequencing, devising approaches to minimizing shortages of materials, improving operator skills, ensuring that bills of material are accurate, or improving the accuracy of storeroom on-hand balances. All these, and many others, are activities that are addressed in a typical manufacturing environment and which are measured with a variety of metrics. By thinking of these activities as being aimed at reducing variability, we see how time and its variance, as high-level metrics, drive improvements in quality and cost.

The foregoing discussion glosses over an important concept—translating the high-level metric of time into the performance of a specific activity, be it on the shop floor or at a stage in the product introduction process. For example, management cannot demand that operators reduce the manufacturing interval on their line. High-level, time-based goals must be translated into the activities that will support those goals. And the responsibility for performing that translation lies with management.

Although it should be obvious from the foregoing discussion that reducing variability reduces costs, it is worth considering an example that dramatically illustrates the point. A manufacturing manager wished to confirm the need for an extremely expensive machine. The factory already possessed one such machine, which was fed by the outputs of two different lines, but its queue time had become unbearably long. Upon investigation, it was discovered that the material handler responsible for moving the product from the two feeder lines to the expensive machine wished to minimize the number of trips that he made. He preferred to transfer the product in large batches rather than moving it as soon as it arrived at the end of the line. Since the expensive machine was already highly utilized, the material handler's strategy had a devastating effect on the line's performance. By changing the material handler's schedule, the need to spend an additional $1 million was avoided.
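The mechanism in this example can be reproduced with a few lines of simulation. This is a deliberately simplified sketch: it assumes a single feeder stream rather than two, perfectly regular production, and hypothetical numbers (one unit per time period, a service time of 0.9 periods, batches of 10). None of these values come from the case described above.

```python
def mean_time_to_clear_machine(n_units, interarrival, service_time, batch_size):
    """Mean time from a unit leaving the feeder line to leaving the machine.

    Units come off the feeder line at regular intervals. The handler moves
    them to the machine only when a full batch has accumulated (the last,
    possibly partial, batch is moved when its final unit is ready).
    """
    machine_free_at = 0.0
    total = 0.0
    for i in range(n_units):
        ready = i * interarrival  # unit leaves the feeder line
        last_in_batch = min((i // batch_size + 1) * batch_size - 1, n_units - 1)
        delivered = last_in_batch * interarrival  # handler makes the trip
        start = max(delivered, machine_free_at)   # wait if machine is busy
        machine_free_at = start + service_time
        total += machine_free_at - ready
    return total / n_units


# Hypothetical line: machine utilization is 0.9 in both cases.
one_at_a_time = mean_time_to_clear_machine(100, 1.0, 0.9, batch_size=1)
in_batches = mean_time_to_clear_machine(100, 1.0, 0.9, batch_size=10)
print(f"{one_at_a_time:.2f} {in_batches:.2f}")  # 0.90 9.45
```

Under these assumptions the machine's utilization is identical in both runs, yet batching the transfers raises the average time per unit roughly tenfold. The machine has not become slower; the arrival pattern has become more variable.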

Earlier, with regard to the potentially unfortunate consequences of cost reduction in pilot operations, it was suggested that reducing time is always recommended. Such a prescription requires careful interpretation because mindless pursuit of shortened intervals by the addition of excess capacity could lead to higher costs. Until the importance of speed is more widely acknowledged, however, it is excessive zeal in cost-cutting and the neglect of the more fundamental parameter, time, that poses the greater danger to the majority of firms.

The discussion in this and the previous section demonstrates that time, as a primary metric, is the ultimate detector of inefficiencies in an overall system. It quickly uncovers flawed performance. For example, if the time that elapses between placement of a customer's order and receipt of payment is much longer than the manufacturing interval, the overall system is clearly inefficient. Even on the factory floor, high first-pass yields (a favorite measure of overall performance) may lull us into complacency. A far better measure is the manufacturing interval or the ratio of value-added process time to the manufacturing interval. The latter allows one to determine how close the process is to reaching the theoretical minimum time.
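The ratio mentioned above is simple to compute once both times are measured in the same units. The following minimal sketch uses a hypothetical function name and hypothetical figures (6 hours of processing within a 120-hour interval).

```python
def value_added_ratio(value_added_time, manufacturing_interval):
    """Fraction of the manufacturing interval spent adding value.

    A ratio of 1.0 means the process runs at its theoretical minimum time;
    values near 0 mean the product spends most of the interval waiting.
    """
    if manufacturing_interval <= 0:
        raise ValueError("manufacturing_interval must be positive")
    if not 0 <= value_added_time <= manufacturing_interval:
        raise ValueError("value_added_time must lie within the interval")
    return value_added_time / manufacturing_interval


# Hypothetical product: 6 hours of processing in a 120-hour interval.
ratio = value_added_ratio(6.0, 120.0)
print(f"{ratio:.0%}")  # 5%
```

In this assumed case the product is worked on for only 5 percent of the time it spends in the factory, a fact that a high first-pass yield would not reveal.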

APPLYING THE LESSONS LEARNED IN MANUFACTURING

In the opening section we observed that the nonmanufacturing systems such as product introduction bear an important similarity to the shop floor. Since more sophisticated approaches such as queueing analysis or simulation have been applied primarily in the manufacturing environment, are there less obvious concepts that can be ported from the factory floor? The answer, of course, is yes.

Perhaps the most important lesson is that these nonmanufacturing environments should be conceived, at least qualitatively, as systems through which entities should flow rapidly. One area in which firms often economize is pilot production. In light of the arguments presented here, that may be a false economy.

We may also draw a few additional lessons. Just as flow through a manufacturing line is facilitated by small lot sizes, so speed in product development can be achieved by aiming for small increments in product capability and introducing these often (Gomory and Schmitt, 1988). Whether or not they draw the analogy with lot sizing, Japanese manufacturers have skillfully applied this concept, particularly in consumer products. On the factory floor, performance is enhanced by organizing manufacturing into focused factories. In product development, the analogous response is the establishment of cross-functional teams. In an ideal line, product, once started, flows automatically and requires no scheduling. To achieve rapid product introduction, the design should move ahead once the specifications are frozen; there should be no reopening of specifications. Also, just as short manufacturing intervals are facilitated by total quality control, so rapid product introduction depends on the quality of underlying processes.

Finally, just as engineers are essential to just-in-time and total quality improvements on the shop floor, so are they essential to engineering the other systems. In fact, we are now seeing the birth of a new branch of engineering, an offshoot of industrial engineering, that we might call industrial operations engineering.